Zhenhuan Huang

Adaptive Transfer Learning on Graph Neural Networks

Jul 20, 2021

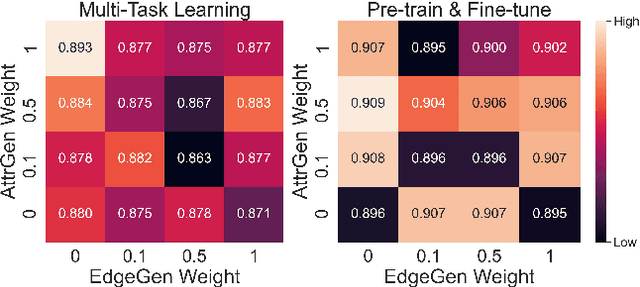

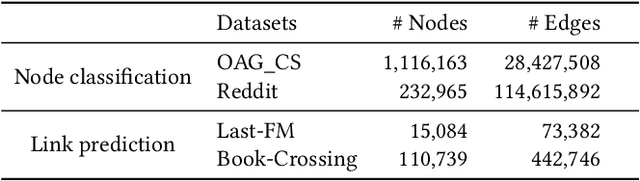

Abstract: Graph neural networks (GNNs) are widely used to learn powerful representations of graph-structured data. Recent work demonstrates that transferring knowledge from self-supervised tasks to downstream tasks can further improve graph representations. However, there is an inherent gap between self-supervised tasks and downstream tasks in terms of optimization objective and training data. Conventional pre-training methods may not be effective enough at knowledge transfer, since they make no adaptation for downstream tasks. To solve these problems, we propose a new transfer learning paradigm on GNNs that effectively leverages self-supervised tasks as auxiliary tasks to help the target task. Our methods adaptively select and combine different auxiliary tasks with the target task in the fine-tuning stage. We design an adaptive auxiliary loss weighting model that learns the weights of auxiliary tasks by quantifying the consistency between auxiliary tasks and the target task. In addition, we learn the weighting model through meta-learning. Our methods can be applied to various transfer learning approaches and perform well not only in multi-task learning but also in pre-training and fine-tuning. Comprehensive experiments on multiple downstream tasks demonstrate that the proposed methods can effectively combine auxiliary tasks with the target task and significantly improve performance compared to state-of-the-art methods.

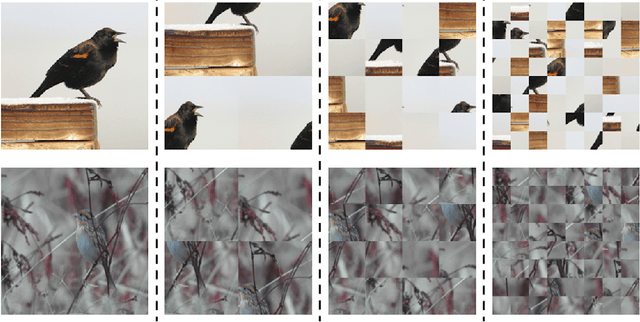

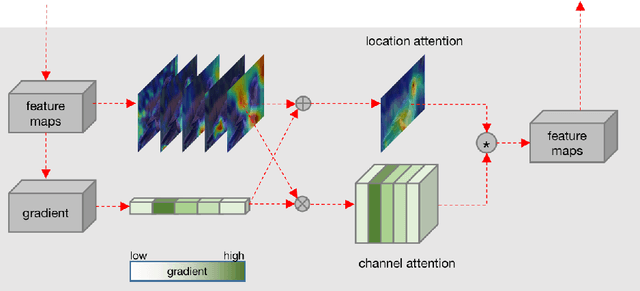

Interpretable Attention Guided Network for Fine-grained Visual Classification

Mar 09, 2021

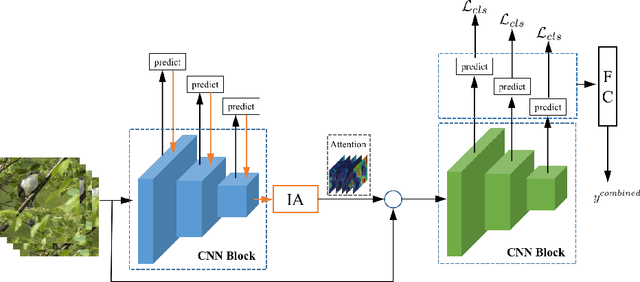

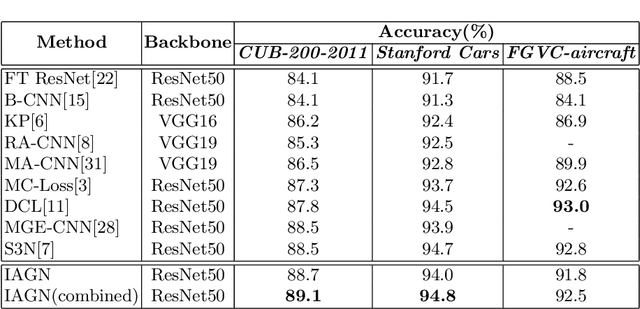

Abstract: Fine-grained visual classification (FGVC) is challenging but more critical than traditional classification tasks. It requires distinguishing different subcategories with inherently subtle intra-class object variations. Previous works focus on enhancing feature representation ability using multiple granularities and discriminative regions based on attention strategies or bounding boxes. However, these methods rely heavily on deep neural networks, which lack interpretability. We propose an Interpretable Attention Guided Network (IAGN) for fine-grained visual classification. The contributions of our method include: i) an attention guided framework that guides the network to extract discriminative regions in an interpretable way; ii) a progressive training mechanism that distills knowledge stage by stage to fuse features of various granularities; iii) the first interpretable FGVC method with competitive performance on several standard FGVC benchmark datasets.
