Zengyou He

Adversarial Fair Multi-View Clustering

Aug 06, 2025Abstract:Cluster analysis is a fundamental problem in data mining and machine learning. In recent years, multi-view clustering has attracted increasing attention due to its ability to integrate complementary information from multiple views. However, existing methods primarily focus on clustering performance, while fairness-a critical concern in human-centered applications-has been largely overlooked. Although recent studies have explored group fairness in multi-view clustering, most methods impose explicit regularization on cluster assignments, relying on the alignment between sensitive attributes and the underlying cluster structure. However, this assumption often fails in practice and can degrade clustering performance. In this paper, we propose an adversarial fair multi-view clustering (AFMVC) framework that integrates fairness learning into the representation learning process. Specifically, our method employs adversarial training to fundamentally remove sensitive attribute information from learned features, ensuring that the resulting cluster assignments are unaffected by it. Furthermore, we theoretically prove that aligning view-specific clustering assignments with a fairness-invariant consensus distribution via KL divergence preserves clustering consistency without significantly compromising fairness, thereby providing additional theoretical guarantees for our framework. Extensive experiments on data sets with fairness constraints demonstrate that AFMVC achieves superior fairness and competitive clustering performance compared to existing multi-view clustering and fairness-aware clustering methods.

Interpretable Clustering Ensemble

Jun 06, 2025Abstract:Clustering ensemble has emerged as an important research topic in the field of machine learning. Although numerous methods have been proposed to improve clustering quality, most existing approaches overlook the need for interpretability in high-stakes applications. In domains such as medical diagnosis and financial risk assessment, algorithms must not only be accurate but also interpretable to ensure transparent and trustworthy decision-making. Therefore, to fill the gap of lack of interpretable algorithms in the field of clustering ensemble, we propose the first interpretable clustering ensemble algorithm in the literature. By treating base partitions as categorical variables, our method constructs a decision tree in the original feature space and use the statistical association test to guide the tree building process. Experimental results demonstrate that our algorithm achieves comparable performance to state-of-the-art (SOTA) clustering ensemble methods while maintaining an additional feature of interpretability. To the best of our knowledge, this is the first interpretable algorithm specifically designed for clustering ensemble, offering a new perspective for future research in interpretable clustering.

Conjunction Subspaces Test for Conformal and Selective Classification

Oct 16, 2024

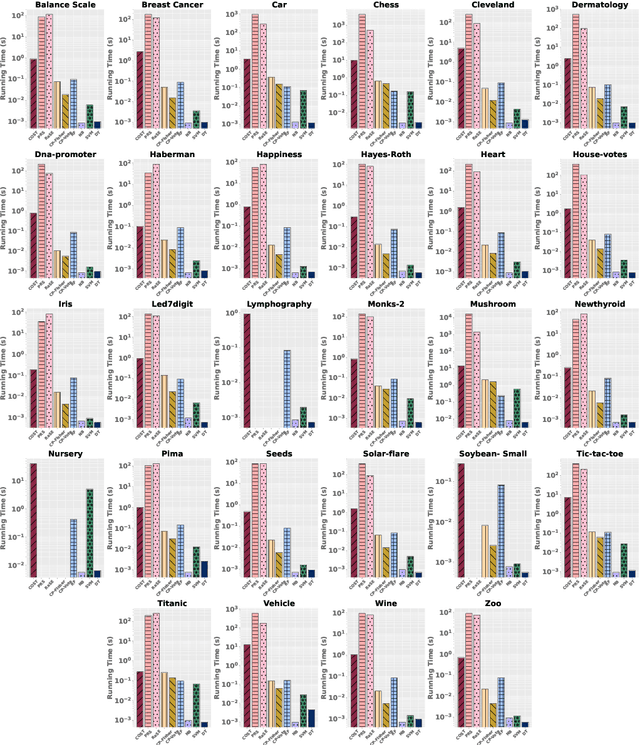

Abstract:In this paper, we present a new classifier, which integrates significance testing results over different random subspaces to yield consensus p-values for quantifying the uncertainty of classification decision. The null hypothesis is that the test sample has no association with the target class on a randomly chosen subspace, and hence the classification problem can be formulated as a problem of testing for the conjunction of hypotheses. The proposed classifier can be easily deployed for the purpose of conformal prediction and selective classification with reject and refine options by simply thresholding the consensus p-values. The theoretical analysis on the generalization error bound of the proposed classifier is provided and empirical studies on real data sets are conducted as well to demonstrate its effectiveness.

Interpretable Clustering: A Survey

Sep 01, 2024

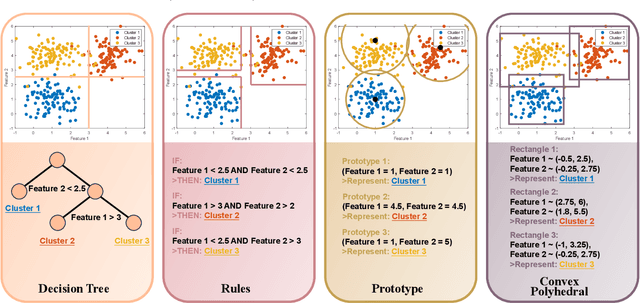

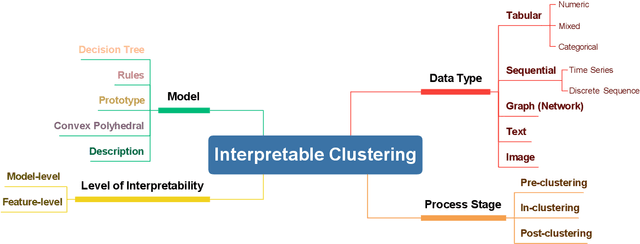

Abstract:In recent years, much of the research on clustering algorithms has primarily focused on enhancing their accuracy and efficiency, frequently at the expense of interpretability. However, as these methods are increasingly being applied in high-stakes domains such as healthcare, finance, and autonomous systems, the need for transparent and interpretable clustering outcomes has become a critical concern. This is not only necessary for gaining user trust but also for satisfying the growing ethical and regulatory demands in these fields. Ensuring that decisions derived from clustering algorithms can be clearly understood and justified is now a fundamental requirement. To address this need, this paper provides a comprehensive and structured review of the current state of explainable clustering algorithms, identifying key criteria to distinguish between various methods. These insights can effectively assist researchers in making informed decisions about the most suitable explainable clustering methods for specific application contexts, while also promoting the development and adoption of clustering algorithms that are both efficient and transparent.

Interpretable Multi-View Clustering

May 04, 2024Abstract:Multi-view clustering has become a significant area of research, with numerous methods proposed over the past decades to enhance clustering accuracy. However, in many real-world applications, it is crucial to demonstrate a clear decision-making process-specifically, explaining why samples are assigned to particular clusters. Consequently, there remains a notable gap in developing interpretable methods for clustering multi-view data. To fill this crucial gap, we make the first attempt towards this direction by introducing an interpretable multi-view clustering framework. Our method begins by extracting embedded features from each view and generates pseudo-labels to guide the initial construction of the decision tree. Subsequently, it iteratively optimizes the feature representation for each view along with refining the interpretable decision tree. Experimental results on real datasets demonstrate that our method not only provides a transparent clustering process for multi-view data but also delivers performance comparable to state-of-the-art multi-view clustering methods. To the best of our knowledge, this is the first effort to design an interpretable clustering framework specifically for multi-view data, opening a new avenue in this field.

Hamming Encoder: Mining Discriminative k-mers for Discrete Sequence Classification

Oct 20, 2023

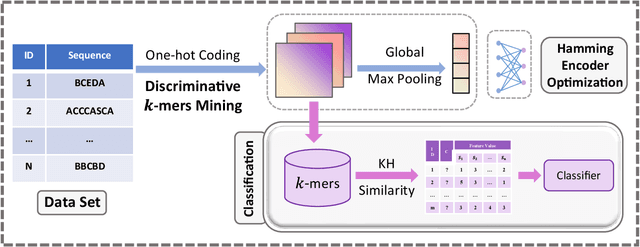

Abstract:Sequence classification has numerous applications in various fields. Despite extensive studies in the last decades, many challenges still exist, particularly in pattern-based methods. Existing pattern-based methods measure the discriminative power of each feature individually during the mining process, leading to the result of missing some combinations of features with discriminative power. Furthermore, it is difficult to ensure the overall discriminative performance after converting sequences into feature vectors. To address these challenges, we propose a novel approach called Hamming Encoder, which utilizes a binarized 1D-convolutional neural network (1DCNN) architecture to mine discriminative k-mer sets. In particular, we adopt a Hamming distance-based similarity measure to ensure consistency in the feature mining and classification procedure. Our method involves training an interpretable CNN encoder for sequential data and performing a gradient-based search for discriminative k-mer combinations. Experiments show that the Hamming Encoder method proposed in this paper outperforms existing state-of-the-art methods in terms of classification accuracy.

Interpretable Sequence Clustering

Sep 03, 2023

Abstract:Categorical sequence clustering plays a crucial role in various fields, but the lack of interpretability in cluster assignments poses significant challenges. Sequences inherently lack explicit features, and existing sequence clustering algorithms heavily rely on complex representations, making it difficult to explain their results. To address this issue, we propose a method called Interpretable Sequence Clustering Tree (ISCT), which combines sequential patterns with a concise and interpretable tree structure. ISCT leverages k-1 patterns to generate k leaf nodes, corresponding to k clusters, which provides an intuitive explanation on how each cluster is formed. More precisely, ISCT first projects sequences into random subspaces and then utilizes the k-means algorithm to obtain high-quality initial cluster assignments. Subsequently, it constructs a pattern-based decision tree using a boosting-based construction strategy in which sequences are re-projected and re-clustered at each node before mining the top-1 discriminative splitting pattern. Experimental results on 14 real-world data sets demonstrate that our proposed method provides an interpretable tree structure while delivering fast and accurate cluster assignments.

A testing-based approach to assess the clusterability of categorical data

Jul 14, 2023Abstract:The objective of clusterability evaluation is to check whether a clustering structure exists within the data set. As a crucial yet often-overlooked issue in cluster analysis, it is essential to conduct such a test before applying any clustering algorithm. If a data set is unclusterable, any subsequent clustering analysis would not yield valid results. Despite its importance, the majority of existing studies focus on numerical data, leaving the clusterability evaluation issue for categorical data as an open problem. Here we present TestCat, a testing-based approach to assess the clusterability of categorical data in terms of an analytical $p$-value. The key idea underlying TestCat is that clusterable categorical data possess many strongly correlated attribute pairs and hence the sum of chi-squared statistics of all attribute pairs is employed as the test statistic for $p$-value calculation. We apply our method to a set of benchmark categorical data sets, showing that TestCat outperforms those solutions based on existing clusterability evaluation methods for numeric data. To the best of our knowledge, our work provides the first way to effectively recognize the clusterability of categorical data in a statistically sound manner.

Personalized Interpretable Classification

Feb 06, 2023

Abstract:How to interpret a data mining model has received much attention recently, because people may distrust a black-box predictive model if they do not understand how the model works. Hence, it will be trustworthy if a model can provide transparent illustrations on how to make the decision. Although many rule-based interpretable classification algorithms have been proposed, all these existing solutions cannot directly construct an interpretable model to provide personalized prediction for each individual test sample. In this paper, we make a first step towards formally introducing personalized interpretable classification as a new data mining problem to the literature. In addition to the problem formulation on this new issue, we present a greedy algorithm called PIC (Personalized Interpretable Classifier) to identify a personalized rule for each individual test sample. To demonstrate the necessity, feasibility and advantages of such a personalized interpretable classification method, we conduct a series of empirical studies on real data sets. The experimental results show that: (1) The new problem formulation enables us to find interesting rules for test samples that may be missed by existing non-personalized classifiers. (2) Our algorithm can achieve the same-level predictive accuracy as those state-of-the-art (SOTA) interpretable classifiers. (3) On a real data set for predicting breast cancer metastasis, such a personalized interpretable classifier can outperform SOTA methods in terms of both accuracy and interpretability.

Significance-Based Categorical Data Clustering

Nov 08, 2022

Abstract:Although numerous algorithms have been proposed to solve the categorical data clustering problem, how to access the statistical significance of a set of categorical clusters remains unaddressed. To fulfill this void, we employ the likelihood ratio test to derive a test statistic that can serve as a significance-based objective function in categorical data clustering. Consequently, a new clustering algorithm is proposed in which the significance-based objective function is optimized via a Monte Carlo search procedure. As a by-product, we can further calculate an empirical $p$-value to assess the statistical significance of a set of clusters and develop an improved gap statistic for estimating the cluster number. Extensive experimental studies suggest that our method is able to achieve comparable performance to state-of-the-art categorical data clustering algorithms. Moreover, the effectiveness of such a significance-based formulation on statistical cluster validation and cluster number estimation is demonstrated through comprehensive empirical results.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge