Zefeng Zhang

S1-Bench: A Simple Benchmark for Evaluating System 1 Thinking Capability of Large Reasoning Models

Apr 14, 2025

Abstract:We introduce S1-Bench, a novel benchmark designed to evaluate Large Reasoning Models' (LRMs) performance on simple tasks that favor intuitive system 1 thinking rather than deliberative system 2 reasoning. While LRMs have achieved significant breakthroughs in complex reasoning tasks through explicit chains of thought, their reliance on deep analytical thinking may limit their system 1 thinking capabilities. Moreover, a lack of benchmark currently exists to evaluate LRMs' performance in tasks that require such capabilities. To fill this gap, S1-Bench presents a set of simple, diverse, and naturally clear questions across multiple domains and languages, specifically designed to assess LRMs' performance in such tasks. Our comprehensive evaluation of 22 LRMs reveals significant lower efficiency tendencies, with outputs averaging 15.5 times longer than those of traditional small LLMs. Additionally, LRMs often identify correct answers early but continue unnecessary deliberation, with some models even producing numerous errors. These findings highlight the rigid reasoning patterns of current LRMs and underscore the substantial development needed to achieve balanced dual-system thinking capabilities that can adapt appropriately to task complexity.

Revealing the Challenge of Detecting Character Knowledge Errors in LLM Role-Playing

Sep 18, 2024

Abstract:Large language model (LLM) role-playing has gained widespread attention, where the authentic character knowledge is crucial for constructing realistic LLM role-playing agents. However, existing works usually overlook the exploration of LLMs' ability to detect characters' known knowledge errors (KKE) and unknown knowledge errors (UKE) while playing roles, which would lead to low-quality automatic construction of character trainable corpus. In this paper, we propose a probing dataset to evaluate LLMs' ability to detect errors in KKE and UKE. The results indicate that even the latest LLMs struggle to effectively detect these two types of errors, especially when it comes to familiar knowledge. We experimented with various reasoning strategies and propose an agent-based reasoning method, Self-Recollection and Self-Doubt (S2RD), to further explore the potential for improving error detection capabilities. Experiments show that our method effectively improves the LLMs' ability to detect error character knowledge, but it remains an issue that requires ongoing attention.

Optimal Transport Guided Correlation Assignment for Multimodal Entity Linking

Jun 04, 2024

Abstract:Multimodal Entity Linking (MEL) aims to link ambiguous mentions in multimodal contexts to entities in a multimodal knowledge graph. A pivotal challenge is to fully leverage multi-element correlations between mentions and entities to bridge modality gap and enable fine-grained semantic matching. Existing methods attempt several local correlative mechanisms, relying heavily on the automatically learned attention weights, which may over-concentrate on partial correlations. To mitigate this issue, we formulate the correlation assignment problem as an optimal transport (OT) problem, and propose a novel MEL framework, namely OT-MEL, with OT-guided correlation assignment. Thereby, we exploit the correlation between multimodal features to enhance multimodal fusion, and the correlation between mentions and entities to enhance fine-grained matching. To accelerate model prediction, we further leverage knowledge distillation to transfer OT assignment knowledge to attention mechanism. Experimental results show that our model significantly outperforms previous state-of-the-art baselines and confirm the effectiveness of the OT-guided correlation assignment.

Neural Contextual Conversation Learning with Labeled Question-Answering Pairs

Jul 20, 2016

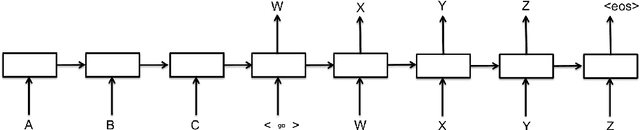

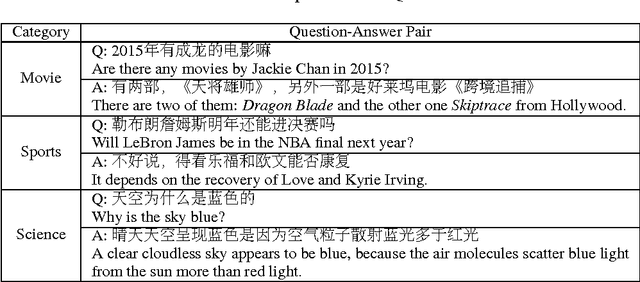

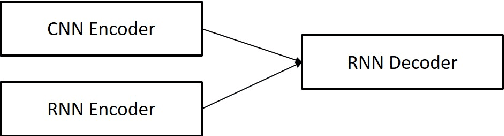

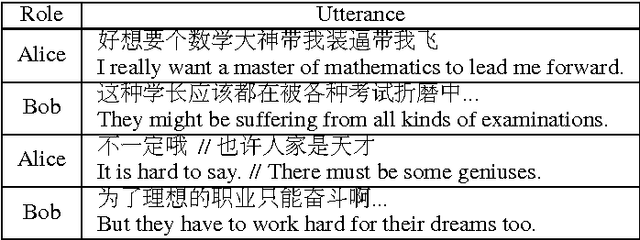

Abstract:Neural conversational models tend to produce generic or safe responses in different contexts, e.g., reply \textit{"Of course"} to narrative statements or \textit{"I don't know"} to questions. In this paper, we propose an end-to-end approach to avoid such problem in neural generative models. Additional memory mechanisms have been introduced to standard sequence-to-sequence (seq2seq) models, so that context can be considered while generating sentences. Three seq2seq models, which memorize a fix-sized contextual vector from hidden input, hidden input/output and a gated contextual attention structure respectively, have been trained and tested on a dataset of labeled question-answering pairs in Chinese. The model with contextual attention outperforms others including the state-of-the-art seq2seq models on perplexity test. The novel contextual model generates diverse and robust responses, and is able to carry out conversations on a wide range of topics appropriately.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge