Neural Contextual Conversation Learning with Labeled Question-Answering Pairs

Paper and Code

Jul 20, 2016

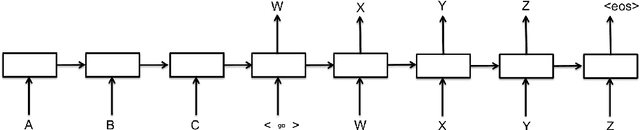

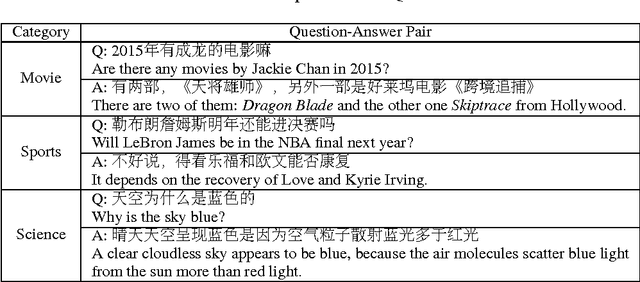

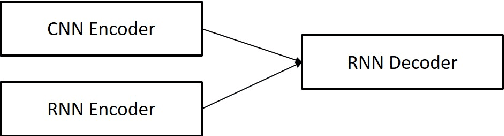

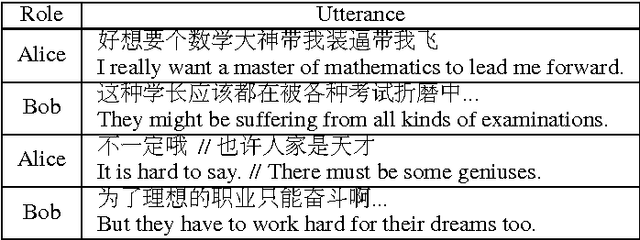

Neural conversational models tend to produce generic or safe responses in different contexts, e.g., reply \textit{"Of course"} to narrative statements or \textit{"I don't know"} to questions. In this paper, we propose an end-to-end approach to avoid such problem in neural generative models. Additional memory mechanisms have been introduced to standard sequence-to-sequence (seq2seq) models, so that context can be considered while generating sentences. Three seq2seq models, which memorize a fix-sized contextual vector from hidden input, hidden input/output and a gated contextual attention structure respectively, have been trained and tested on a dataset of labeled question-answering pairs in Chinese. The model with contextual attention outperforms others including the state-of-the-art seq2seq models on perplexity test. The novel contextual model generates diverse and robust responses, and is able to carry out conversations on a wide range of topics appropriately.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge