Yuki Noguchi

Robust Imitation Learning for Mobile Manipulator Focusing on Task-Related Viewpoints and Regions

Oct 02, 2024

Abstract: We study how to generalize the visuomotor policy of a mobile manipulator from the perspective of visual observations. A mobile manipulator is prone to occlusion by its own body when only a single viewpoint is employed, and to significant domain shift when deployed in diverse situations. However, to the best of the authors' knowledge, no study has addressed occlusion and domain shift simultaneously and proposed a robust policy. In this paper, we propose a robust imitation learning method for mobile manipulators that focuses on task-related viewpoints and their spatial regions when observing multiple viewpoints. The multiple-viewpoint policy includes an attention mechanism, which is learned with an augmented dataset and yields optimal viewpoints and visual embeddings that are robust against occlusion and domain shift. Comparison of our results for different tasks and environments with those of previous studies revealed that our proposed method improves the success rate by up to 29.3 points. We also conduct ablation studies using our proposed method. Learning task-related viewpoints from the multiple-viewpoint dataset yields greater robustness to occlusion than using a uniquely defined viewpoint. Focusing on task-related regions contributes up to a 33.3-point improvement in the success rate against domain shift.

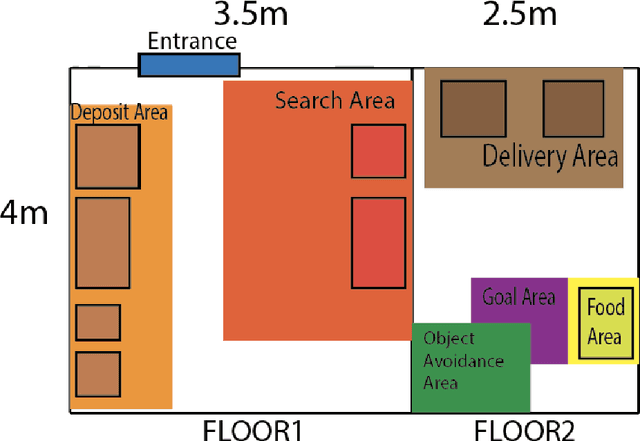

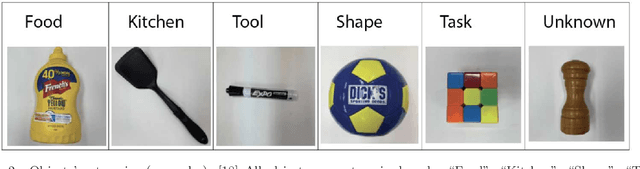

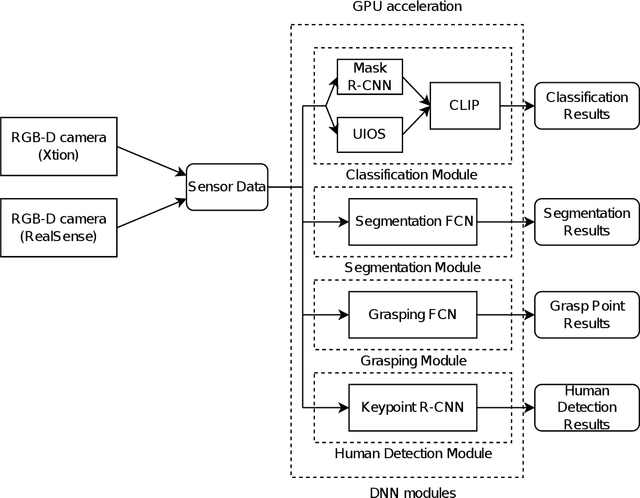

World Robot Challenge 2020 -- Partner Robot: A Data-Driven Approach for Room Tidying with Mobile Manipulator

Jul 22, 2022

Abstract: Tidying up a household environment using a mobile manipulator poses various challenges in robotics, such as adaptation to large real-world environmental variations, and safe and robust deployment in the presence of humans. The Partner Robot Challenge in World Robot Challenge (WRC) 2020, a global competition held in September 2021, benchmarked tidying tasks in real home environments and, importantly, tested full-system performance. For this challenge, we developed an entire household service robot system, which leverages a data-driven approach to adapt to the numerous edge cases that occur during execution, instead of classical manually pre-programmed solutions. In this paper, we describe the core ingredients of the proposed robot system, including visual recognition, object manipulation, and motion planning. Our robot system won the second prize, verifying the effectiveness and potential of data-driven robot systems for mobile manipulation in home environments.

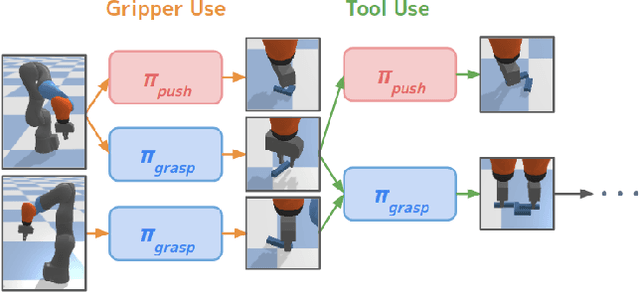

Tool as Embodiment for Recursive Manipulation

Dec 01, 2021

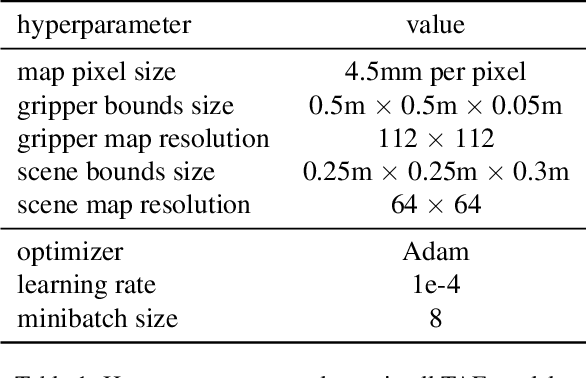

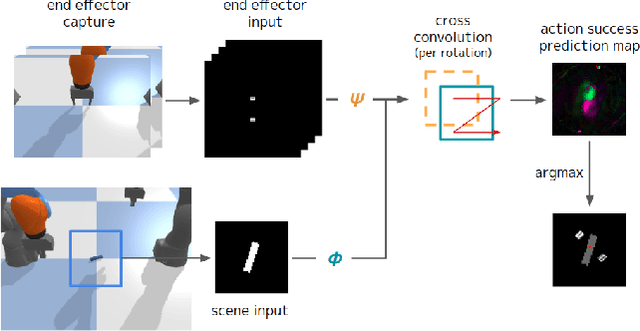

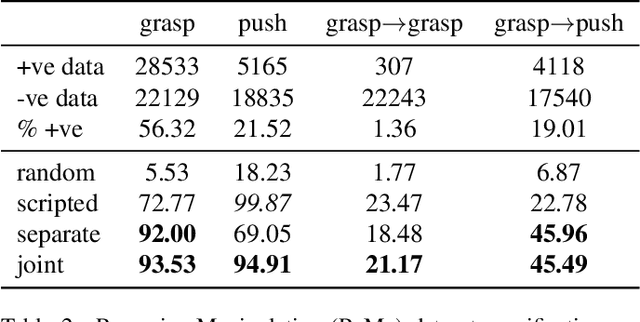

Abstract: Humans and many animals exhibit a robust capability to manipulate diverse objects, often directly with their bodies and sometimes indirectly with tools. Such flexibility is likely enabled by the fundamental consistency in the underlying physics of object manipulation, such as contacts and force closures. Inspired by viewing tools as extensions of our bodies, we present Tool-As-Embodiment (TAE), a parameterization for tool-based manipulation policies that treats hand-object and tool-object interactions in the same representation space. The result is a single policy that can be applied recursively on robots to use end effectors to manipulate objects, and to use objects as tools, i.e., new end effectors, to manipulate other objects. By sharing experiences across different embodiments for grasping or pushing, our policy exhibits higher performance than if separate policies were trained. Our framework could channel all experiences from different resolutions of tool-enabled embodiments into a single generic policy for each manipulation skill. Videos at https://sites.google.com/view/recursivemanipulation
