Yingnan Sun

Mining Discriminative Food Regions for Accurate Food Recognition

Jul 08, 2022

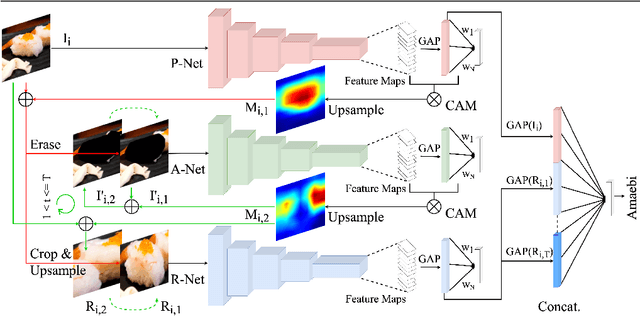

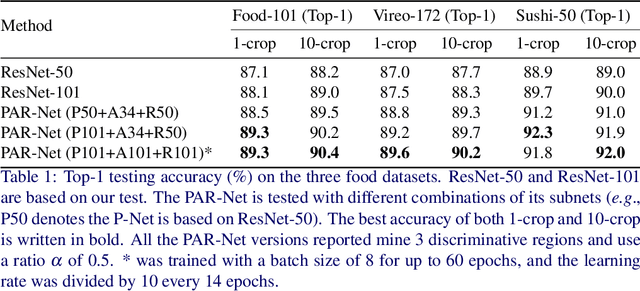

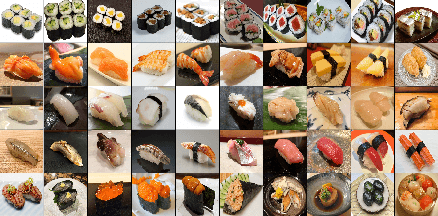

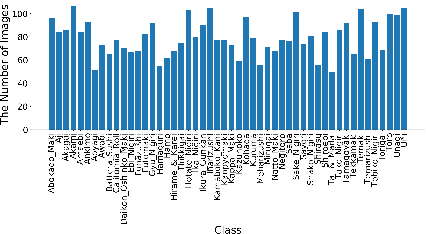

Abstract:Automatic food recognition is the very first step towards passive dietary monitoring. In this paper, we address the problem of food recognition by mining discriminative food regions. Taking inspiration from Adversarial Erasing, a strategy that progressively discovers discriminative object regions for weakly supervised semantic segmentation, we propose a novel network architecture in which a primary network maintains the base accuracy of classifying an input image, an auxiliary network adversarially mines discriminative food regions, and a region network classifies the resulting mined regions. The global (the original input image) and the local (the mined regions) representations are then integrated for the final prediction. The proposed architecture denoted as PAR-Net is end-to-end trainable, and highlights discriminative regions in an online fashion. In addition, we introduce a new fine-grained food dataset named as Sushi-50, which consists of 50 different sushi categories. Extensive experiments have been conducted to evaluate the proposed approach. On three food datasets chosen (Food-101, Vireo-172, and Sushi-50), our approach performs consistently and achieves state-of-the-art results (top-1 testing accuracy of $90.4\%$, $90.2\%$, $92.0\%$, respectively) compared with other existing approaches. Dataset and code are available at https://github.com/Jianing-Qiu/PARNet

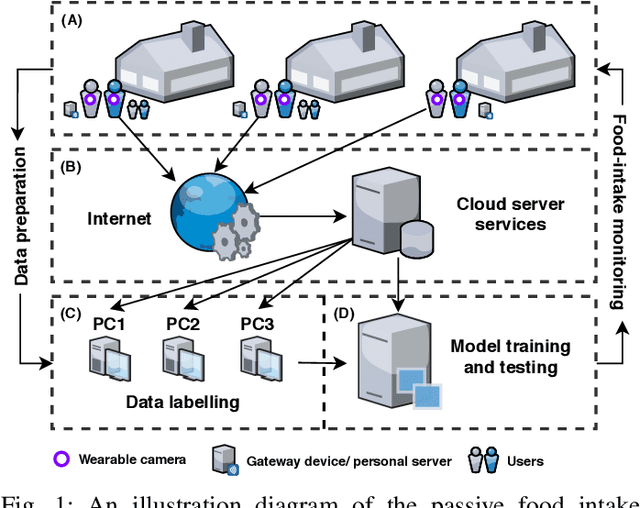

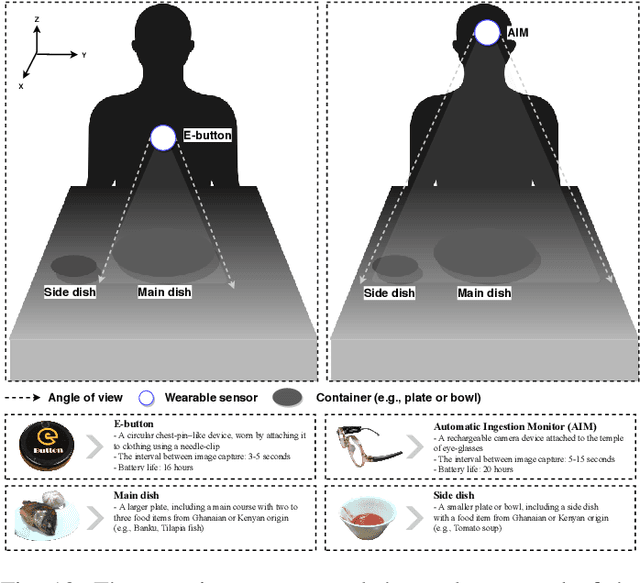

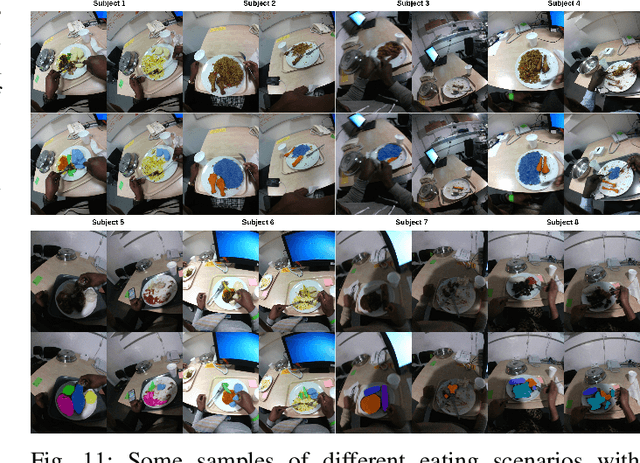

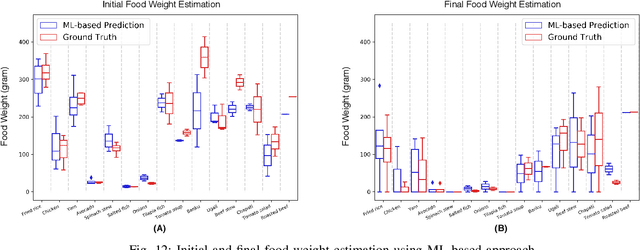

An Intelligent Passive Food Intake Assessment System with Egocentric Cameras

May 07, 2021

Abstract:Malnutrition is a major public health concern in low-and-middle-income countries (LMICs). Understanding food and nutrient intake across communities, households and individuals is critical to the development of health policies and interventions. To ease the procedure in conducting large-scale dietary assessments, we propose to implement an intelligent passive food intake assessment system via egocentric cameras particular for households in Ghana and Uganda. Algorithms are first designed to remove redundant images for minimising the storage memory. At run time, deep learning-based semantic segmentation is applied to recognise multi-food types and newly-designed handcrafted features are extracted for further consumed food weight monitoring. Comprehensive experiments are conducted to validate our methods on an in-the-wild dataset captured under the settings which simulate the unique LMIC conditions with participants of Ghanaian and Kenyan origin eating common Ghanaian/Kenyan dishes. To demonstrate the efficacy, experienced dietitians are involved in this research to perform the visual portion size estimation, and their predictions are compared to our proposed method. The promising results have shown that our method is able to reliably monitor food intake and give feedback on users' eating behaviour which provides guidance for dietitians in regular dietary assessment.

Indoor Future Person Localization from an Egocentric Wearable Camera

Mar 06, 2021

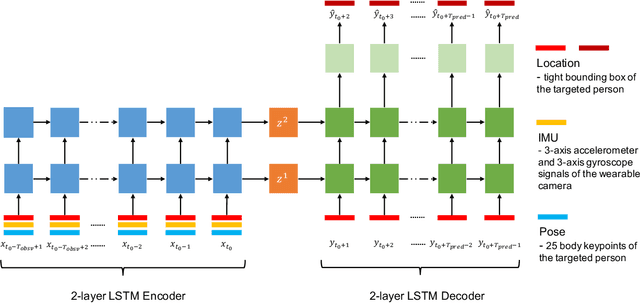

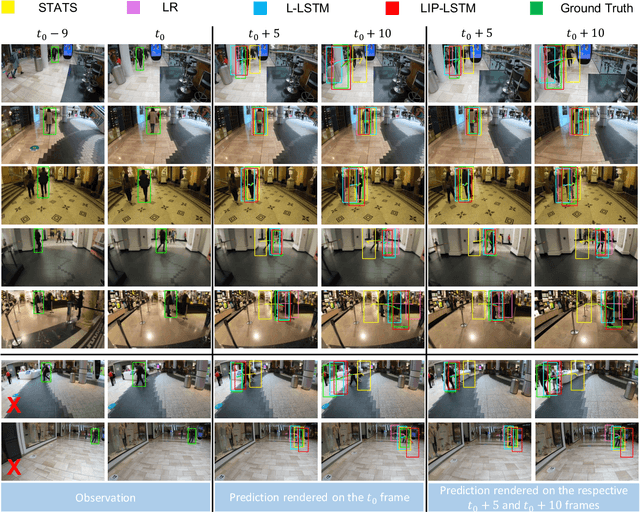

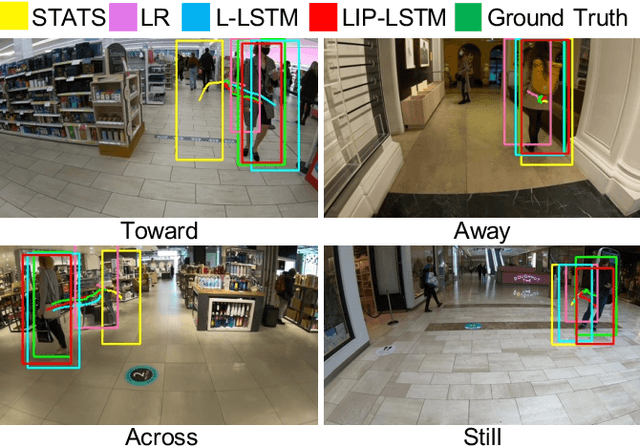

Abstract:Accurate prediction of future person location and movement trajectory from an egocentric wearable camera can benefit a wide range of applications, such as assisting visually impaired people in navigation, and the development of mobility assistance for people with disability. In this work, a new egocentric dataset was constructed using a wearable camera, with 8,250 short clips of a targeted person either walking 1) toward, 2) away, or 3) across the camera wearer in indoor environments, or 4) staying still in the scene, and 13,817 person bounding boxes were manually labelled. Apart from the bounding boxes, the dataset also contains the estimated pose of the targeted person as well as the IMU signal of the wearable camera at each time point. An LSTM-based encoder-decoder framework was designed to predict the future location and movement trajectory of the targeted person in this egocentric setting. Extensive experiments have been conducted on the new dataset, and have shown that the proposed method is able to reliably and better predict future person location and trajectory in egocentric videos captured by the wearable camera compared to three baselines.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge