Yigit Demirag

Analog Alchemy: Neural Computation with In-Memory Inference, Learning and Routing

Dec 30, 2024

Abstract:As neural computation is revolutionizing the field of Artificial Intelligence (AI), rethinking the ideal neural hardware is becoming the next frontier. Fast and reliable von Neumann architecture has been the hosting platform for neural computation. Although capable, its separation of memory and computation creates the bottleneck for the energy efficiency of neural computation, contrasting the biological brain. The question remains: how can we efficiently combine memory and computation, while exploiting the physics of the substrate, to build intelligent systems? In this thesis, I explore an alternative way with memristive devices for neural computation, where the unique physical dynamics of the devices are used for inference, learning and routing. Guided by the principles of gradient-based learning, we selected functions that need to be materialized, and analyzed connectomics principles for efficient wiring. Despite non-idealities and noise inherent in analog physics, I will provide hardware evidence of adaptability of local learning to memristive substrates, new material stacks and circuit blocks that aid in solving the credit assignment problem and efficient routing between analog crossbars for scalable architectures.

DenRAM: Neuromorphic Dendritic Architecture with RRAM for Efficient Temporal Processing with Delays

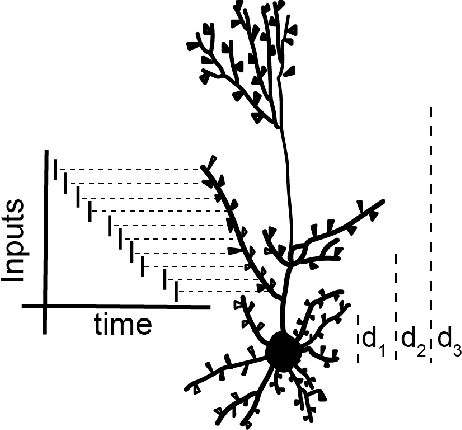

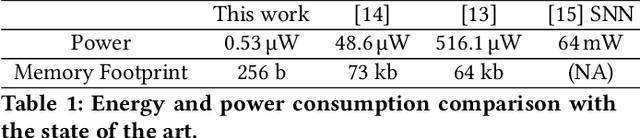

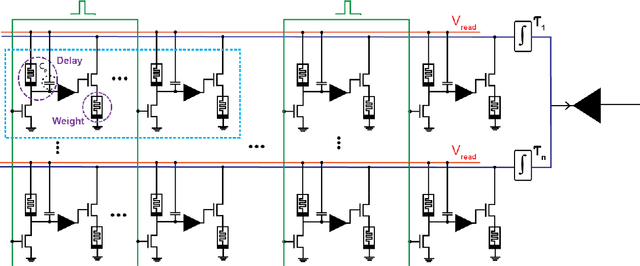

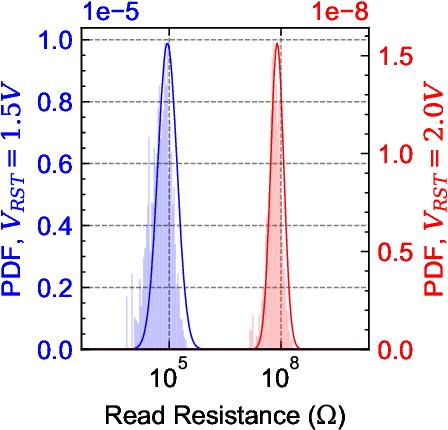

Dec 14, 2023

Abstract:An increasing number of neuroscience studies are highlighting the importance of spatial dendritic branching in pyramidal neurons in the brain for supporting non-linear computation through localized synaptic integration. In particular, dendritic branches play a key role in temporal signal processing and feature detection, using coincidence detection (CD) mechanisms, made possible by the presence of synaptic delays that align temporally disparate inputs for effective integration. Computational studies on spiking neural networks further highlight the significance of delays for CD operations, enabling spatio-temporal pattern recognition within feed-forward neural networks without the need for recurrent architectures. In this work, we present DenRAM, the first realization of a spiking neural network with analog dendritic circuits, integrated into a 130nm technology node coupled with resistive memory (RRAM) technology. DenRAM's dendritic circuits use the RRAM devices to implement both delays and synaptic weights in the network. By configuring the RRAM devices to reproduce bio-realistic timescales, and through exploiting their heterogeneity, we experimentally demonstrate DenRAM's capability to replicate synaptic delay profiles, and efficiently implement CD for spatio-temporal pattern recognition. To validate the architecture, we conduct comprehensive system-level simulations on two representative temporal benchmarks, highlighting DenRAM's resilience to analog hardware noise, and its superior accuracy compared to recurrent architectures with an equivalent number of parameters. DenRAM not only brings rich temporal processing capabilities to neuromorphic architectures, but also reduces the memory footprint of edge devices, provides high accuracy on temporal benchmarks, and represents a significant step forward in low-power real-time signal processing technologies.
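The coincidence-detection idea in this abstract can be illustrated with a small software sketch. This is not the DenRAM circuit: the exponential post-synaptic response, the time constant `tau`, the spike times, the delay values, and the threshold are all assumptions chosen for illustration. It only shows how per-synapse delays can align temporally disparate input spikes so that their summed response crosses a threshold.

```python
import math

def dendritic_peak(spike_times, delays, weights, tau=2.0, t_end=30.0, dt=0.1):
    """Return the peak of the summed, delayed synaptic responses (times in ms).

    Each input spike arrives at the dendrite at (spike time + delay) and
    contributes weight * exp(-(t - arrival) / tau) from then on.
    The exponential kernel and tau are illustrative assumptions.
    """
    peak = 0.0
    steps = int(round(t_end / dt))
    for k in range(steps + 1):
        t = k * dt
        v = 0.0
        for t_spk, delay, w in zip(spike_times, delays, weights):
            arrival = t_spk + delay
            if t >= arrival:
                v += w * math.exp(-(t - arrival) / tau)
        peak = max(peak, v)
    return peak

# Hypothetical input pattern: three spikes spread over 10 ms.
spikes = [0.0, 5.0, 10.0]
weights = [1.0, 1.0, 1.0]
threshold = 2.5  # hypothetical firing threshold

# Delays matched to the pattern make all three spikes arrive together.
matched = dendritic_peak(spikes, [10.0, 5.0, 0.0], weights)
# Without delays the responses decay before the next spike arrives.
unmatched = dendritic_peak(spikes, [0.0, 0.0, 0.0], weights)

print("matched delays fire:", matched > threshold)
print("no delays fire:", unmatched > threshold)
```

With matched delays the three responses add up at the same instant, so a purely feed-forward unit can detect the temporal pattern; with zero delays the same spikes never overlap enough to reach the threshold.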

Dendritic Computation through Exploiting Resistive Memory as both Delays and Weights

May 11, 2023

Abstract:Biological neurons can detect complex spatio-temporal features in spiking patterns via their synapses spread across their dendritic branches. This is achieved by modulating the efficacy of the individual synapses, and by exploiting the temporal delays of their response to input spikes, depending on their position on the dendrite. Inspired by this mechanism, we propose a neuromorphic hardware architecture equipped with multiscale dendrites, each of which has synapses with tunable weight and delay elements. Weights and delays are both implemented using Resistive Random Access Memory (RRAM). We exploit the variability in the high resistance state of RRAM to implement a distribution of delays in the millisecond range for enabling spatio-temporal detection of sensory signals. We demonstrate the validity of the approach with an RRAM-aware simulation of a heartbeat anomaly detection task. In particular, we show that, by incorporating delays directly into the network, the network's power and memory footprint can be reduced by up to 100x compared to equivalent state-of-the-art spiking recurrent networks with no delays.

NeuroBench: Advancing Neuromorphic Computing through Collaborative, Fair and Representative Benchmarking

Apr 15, 2023

Abstract:The field of neuromorphic computing holds great promise in terms of advancing computing efficiency and capabilities by following brain-inspired principles. However, the rich diversity of techniques employed in neuromorphic research has resulted in a lack of clear standards for benchmarking, hindering effective evaluation of the advantages and strengths of neuromorphic methods compared to traditional deep-learning-based methods. This paper presents a collaborative effort, bringing together members from academia and the industry, to define benchmarks for neuromorphic computing: NeuroBench. The goals of NeuroBench are to be a collaborative, fair, and representative benchmark suite developed by the community, for the community. In this paper, we discuss the challenges associated with benchmarking neuromorphic solutions, and outline the key features of NeuroBench. We believe that NeuroBench will be a significant step towards defining standards that can unify the goals of neuromorphic computing and drive its technological progress. Please visit neurobench.ai for the latest updates on the benchmark tasks and metrics.

Online Training of Spiking Recurrent Neural Networks with Phase-Change Memory Synapses

Aug 04, 2021

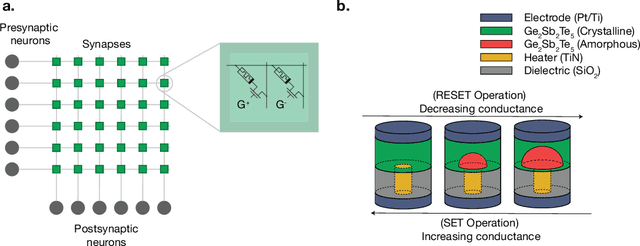

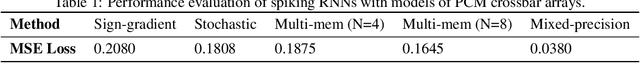

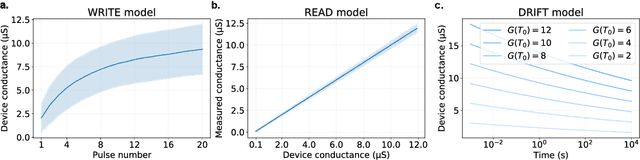

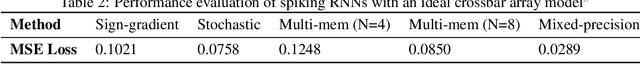

Abstract:Spiking recurrent neural networks (RNNs) are a promising tool for solving a wide variety of complex cognitive and motor tasks, due to their rich temporal dynamics and sparse processing. However, training spiking RNNs on dedicated neuromorphic hardware is still an open challenge. This is due mainly to the lack of local, hardware-friendly learning mechanisms that can solve the temporal credit assignment problem and ensure stable network dynamics, even when the weight resolution is limited. These challenges are further accentuated if one resorts to using memristive devices for in-memory computing to resolve the von Neumann bottleneck problem, at the expense of a substantial increase in variability in both the computation and the working memory of the spiking RNNs. To address these challenges and enable online learning in memristive neuromorphic RNNs, we present a simulation framework of differential-architecture crossbar arrays based on an accurate and comprehensive Phase-Change Memory (PCM) device model. We train a spiking RNN whose weights are emulated in the presented simulation framework, using a recently proposed e-prop learning rule. Although e-prop locally approximates the ideal synaptic updates, it is difficult to implement the updates on the memristive substrate due to substantial PCM non-idealities. We compare several widely adopted weight update schemes that primarily aim to cope with these device non-idealities and demonstrate that accumulating gradients can enable online and efficient training of spiking RNNs on memristive substrates.
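As a rough illustration of gradient accumulation on a differential synapse, the sketch below stores a weight as the difference of two conductances and buffers small updates until they are large enough to justify one programming pulse. It is an idealized toy, not the paper's PCM device model or simulation framework: the class name, the fixed conductance `step` per pulse, the saturation value `g_max`, and the absence of noise and drift are all assumptions.

```python
class DifferentialSynapse:
    """Toy differential synapse: weight = g_plus - g_minus.

    Small weight updates are summed in an accumulator and only turned into a
    programming pulse once they reach the (hypothetical) conductance step that
    a single pulse produces. Noise, drift and nonlinearity are ignored.
    """

    def __init__(self, step=0.1, g_max=1.0):
        self.g_plus = 0.0
        self.g_minus = 0.0
        self.step = step    # assumed conductance change per pulse
        self.g_max = g_max  # assumed conductance saturation
        self.acc = 0.0      # accumulated update not yet written to a device
        self.pulses = 0     # number of programming pulses issued

    @property
    def weight(self):
        return self.g_plus - self.g_minus

    def accumulate(self, delta_w):
        """Add a small update; program a device only when enough has built up."""
        self.acc += delta_w
        while abs(self.acc) >= self.step:
            if self.acc > 0:
                # Positive update: potentiate the positive device.
                self.g_plus = min(self.g_plus + self.step, self.g_max)
                self.acc -= self.step
            else:
                # Negative update: potentiate the negative device instead.
                self.g_minus = min(self.g_minus + self.step, self.g_max)
                self.acc += self.step
            self.pulses += 1


syn = DifferentialSynapse()
for _ in range(50):
    syn.accumulate(0.013)  # many updates, each smaller than one pulse step

print(syn.weight, syn.acc, syn.pulses)
```

In this toy setting, 50 small updates result in only a handful of programming pulses, with the remainder kept in the accumulator rather than lost to the limited update resolution.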
