Yajun Chen

Scalable Class-Incremental Learning Based on Parametric Neural Collapse

Dec 26, 2025Abstract:Incremental learning often encounter challenges such as overfitting to new data and catastrophic forgetting of old data. Existing methods can effectively extend the model for new tasks while freezing the parameters of the old model, but ignore the necessity of structural efficiency to lead to the feature difference between modules and the class misalignment due to evolving class distributions. To address these issues, we propose scalable class-incremental learning based on parametric neural collapse (SCL-PNC) that enables demand-driven, minimal-cost backbone expansion by adapt-layer and refines the static into a dynamic parametric Equiangular Tight Frame (ETF) framework according to incremental class. This method can efficiently handle the model expansion question with the increasing number of categories in real-world scenarios. Additionally, to counteract feature drift in serial expansion models, the parallel expansion framework is presented with a knowledge distillation algorithm to align features across expansion modules. Therefore, SCL-PNC can not only design a dynamic and extensible ETF classifier to address class misalignment due to evolving class distributions, but also ensure feature consistency by an adapt-layer with knowledge distillation between extended modules. By leveraging neural collapse, SCL-PNC induces the convergence of the incremental expansion model through a structured combination of the expandable backbone, adapt-layer, and the parametric ETF classifier. Experiments on standard benchmarks demonstrate the effectiveness and efficiency of our proposed method. Our code is available at https://github.com/zhangchuangxin71-cyber/dynamic_ ETF2. Keywords: Class incremental learning; Catastrophic forgetting; Neural collapse;Knowledge distillation; Expanded model.

Robust Anti-jamming Communications with DMA-Based Reconfigurable Heterogeneous Array

Oct 14, 2023

Abstract:In the future commercial and military communication systems, anti-jamming remains a critical issue. Existing homogeneous or heterogeneous arrays with a limited degrees of freedom (DoF) and high consumption are unable to meet the requirements of communication in rapidly changing and intense jamming environments. To address these challenges, we propose a reconfigurable heterogeneous array (RHA) architecture based on dynamic metasurface antenna (DMA), which will increase the DoF and further improve anti-jamming capabilities. We propose a two-step anti-jamming scheme based on RHA, where the multipaths are estimated by an atomic norm minimization (ANM) based scheme, and then the received signal-to-interference-plus-noise ratio (SINR) is maximized by jointly designing the phase shift of each DMA element and the weights of the array elements. To solve the challenging non-convex discrete fractional problem along with the estimation error in the direction of arrival (DoA) and channel state information (CSI), we propose a robust alternative algorithm based on the S-procedure to solve the lower-bound SINR maximization problem. Simulation results demonstrate that the proposed RHA architecture and corresponding schemes have superior performance in terms of jamming immunity and robustness.

Deep graph learning for semi-supervised classification

May 29, 2020

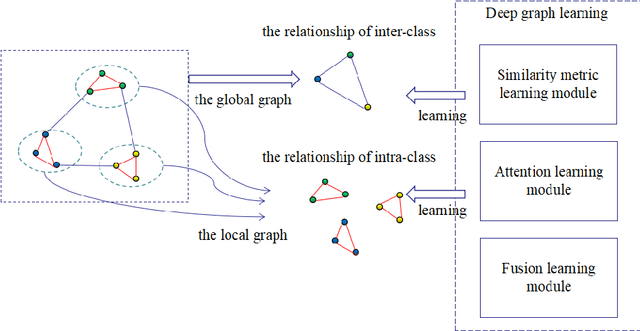

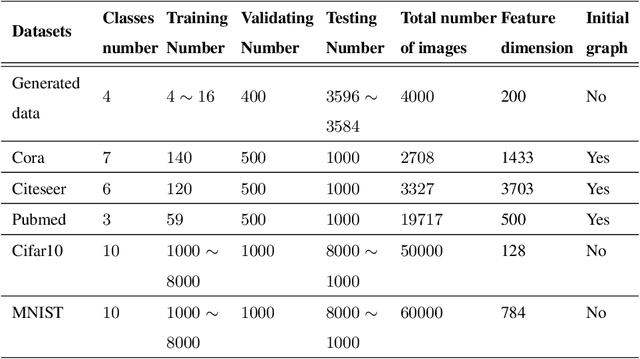

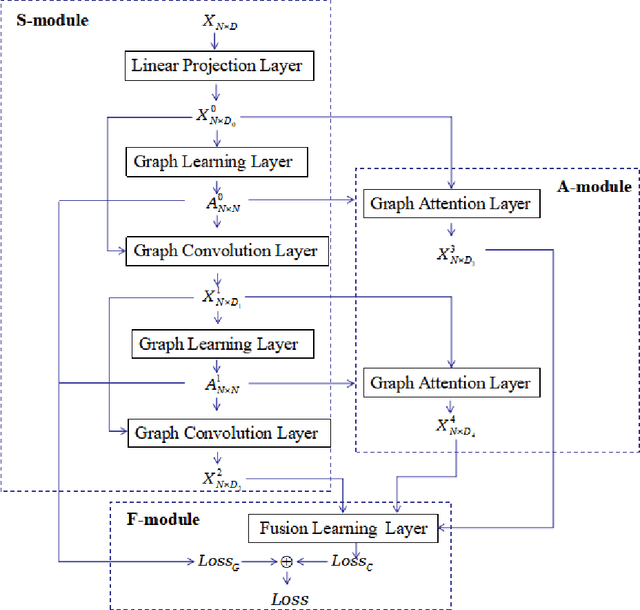

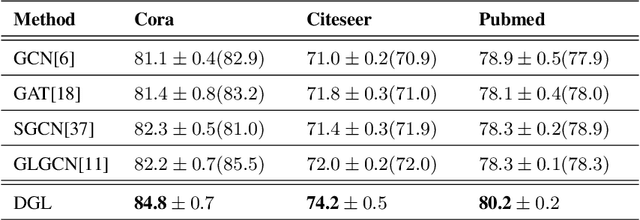

Abstract:Graph learning (GL) can dynamically capture the distribution structure (graph structure) of data based on graph convolutional networks (GCN), and the learning quality of the graph structure directly influences GCN for semi-supervised classification. Existing methods mostly combine the computational layer and the related losses into GCN for exploring the global graph(measuring graph structure from all data samples) or local graph (measuring graph structure from local data samples). Global graph emphasises on the whole structure description of the inter-class data, while local graph trend to the neighborhood structure representation of intra-class data. However, it is difficult to simultaneously balance these graphs of the learning process for semi-supervised classification because of the interdependence of these graphs. To simulate the interdependence, deep graph learning(DGL) is proposed to find the better graph representation for semi-supervised classification. DGL can not only learn the global structure by the previous layer metric computation updating, but also mine the local structure by next layer local weight reassignment. Furthermore, DGL can fuse the different structures by dynamically encoding the interdependence of these structures, and deeply mine the relationship of the different structures by the hierarchical progressive learning for improving the performance of semi-supervised classification. Experiments demonstrate the DGL outperforms state-of-the-art methods on three benchmark datasets (Citeseer,Cora, and Pubmed) for citation networks and two benchmark datasets (MNIST and Cifar10) for images.

Class label autoencoder for zero-shot learning

Jan 25, 2018

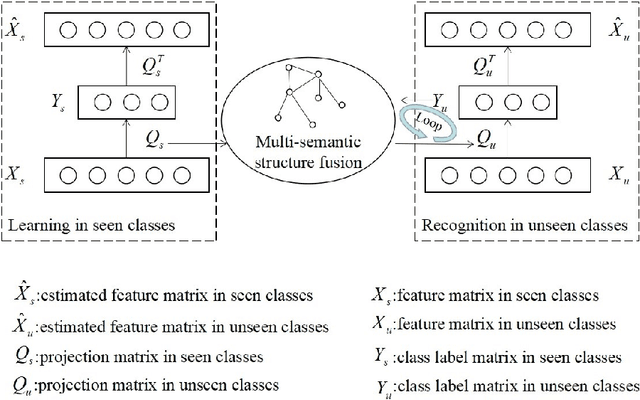

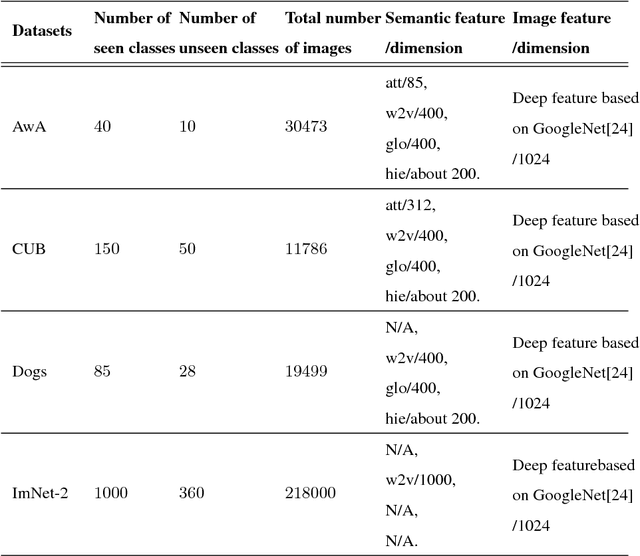

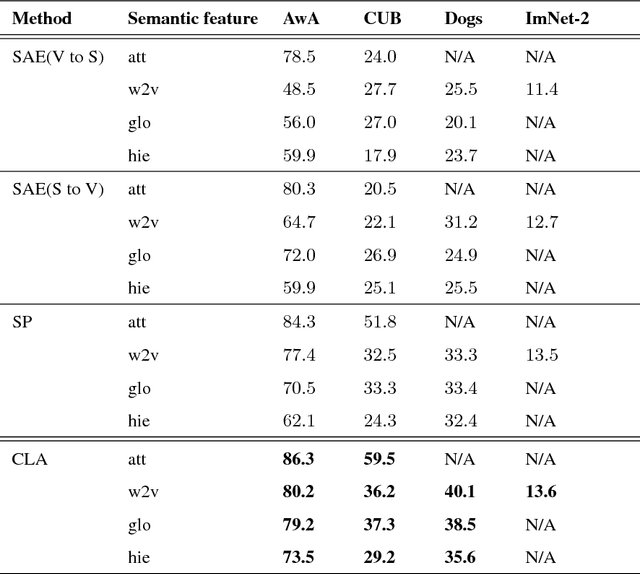

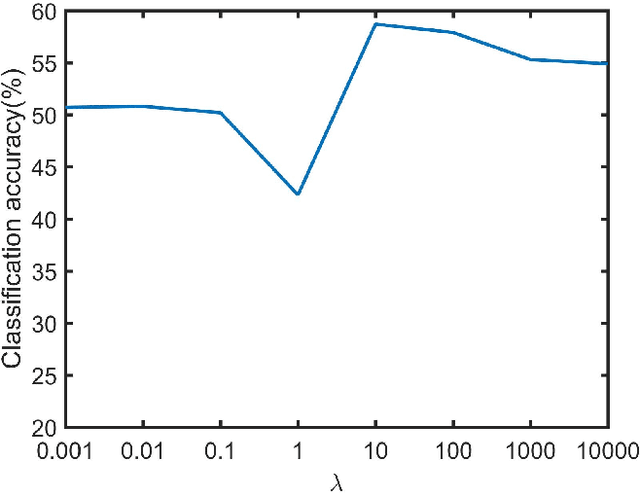

Abstract:Existing zero-shot learning (ZSL) methods usually learn a projection function between a feature space and a semantic embedding space(text or attribute space) in the training seen classes or testing unseen classes. However, the projection function cannot be used between the feature space and multi-semantic embedding spaces, which have the diversity characteristic for describing the different semantic information of the same class. To deal with this issue, we present a novel method to ZSL based on learning class label autoencoder (CLA). CLA can not only build a uniform framework for adapting to multi-semantic embedding spaces, but also construct the encoder-decoder mechanism for constraining the bidirectional projection between the feature space and the class label space. Moreover, CLA can jointly consider the relationship of feature classes and the relevance of the semantic classes for improving zero-shot classification. The CLA solution can provide both unseen class labels and the relation of the different classes representation(feature or semantic information) that can encode the intrinsic structure of classes. Extensive experiments demonstrate the CLA outperforms state-of-art methods on four benchmark datasets, which are AwA, CUB, Dogs and ImNet-2.

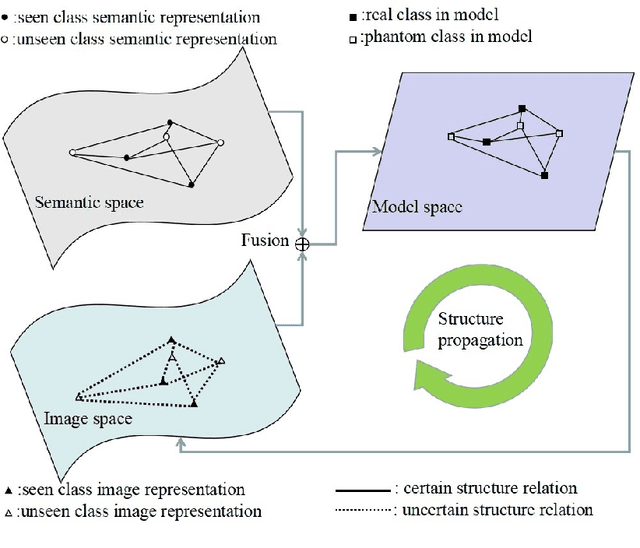

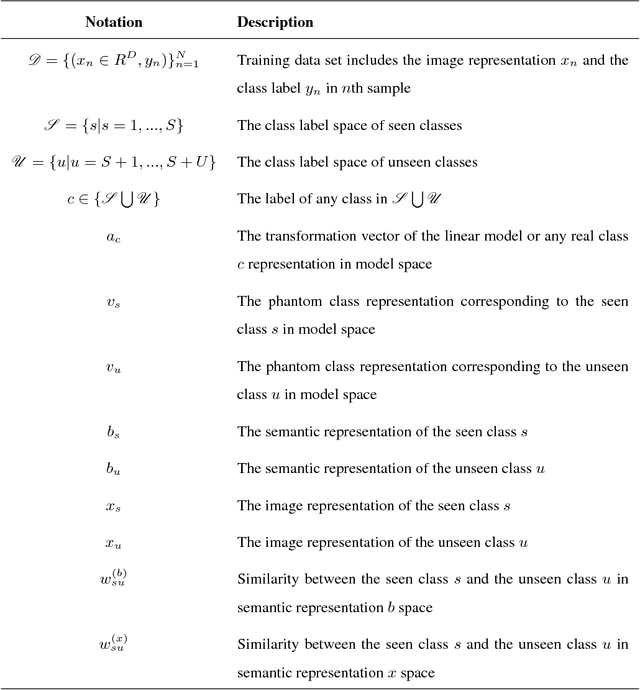

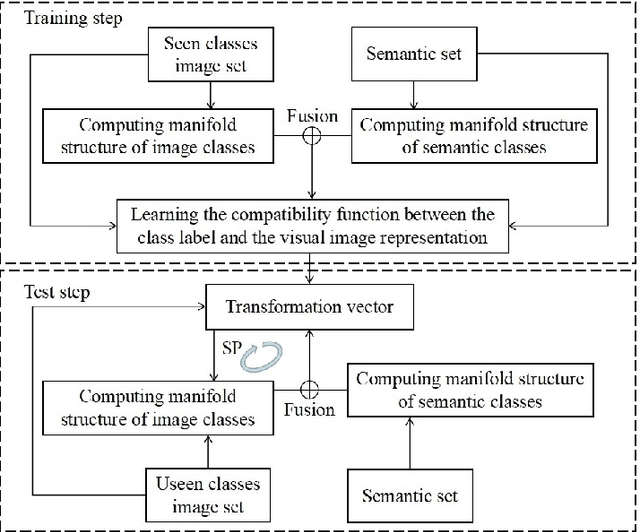

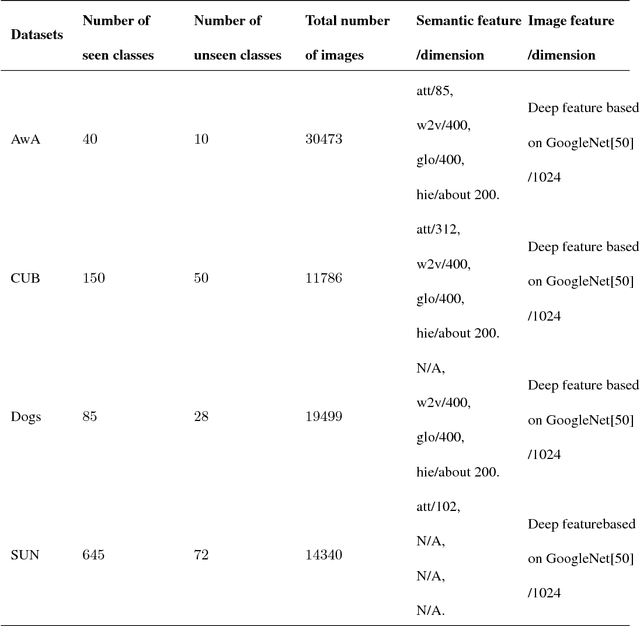

Structure propagation for zero-shot learning

Nov 27, 2017

Abstract:The key of zero-shot learning (ZSL) is how to find the information transfer model for bridging the gap between images and semantic information (texts or attributes). Existing ZSL methods usually construct the compatibility function between images and class labels with the consideration of the relevance on the semantic classes (the manifold structure of semantic classes). However, the relationship of image classes (the manifold structure of image classes) is also very important for the compatibility model construction. It is difficult to capture the relationship among image classes due to unseen classes, so that the manifold structure of image classes often is ignored in ZSL. To complement each other between the manifold structure of image classes and that of semantic classes information, we propose structure propagation (SP) for improving the performance of ZSL for classification. SP can jointly consider the manifold structure of image classes and that of semantic classes for approximating to the intrinsic structure of object classes. Moreover, the SP can describe the constrain condition between the compatibility function and these manifold structures for balancing the influence of the structure propagation iteration. The SP solution provides not only unseen class labels but also the relationship of two manifold structures that encode the positive transfer in structure propagation. Experimental results demonstrate that SP can attain the promising results on the AwA, CUB, Dogs and SUN databases.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge