Guangfeng Lin

Boundary and Position Information Mining for Aerial Small Object Detection

Jan 23, 2026

Abstract: Unmanned Aerial Vehicle (UAV) applications have become increasingly prevalent in aerial photography and object recognition. However, accurately capturing small targets in object detection remains challenging because of imbalanced object scales and blurred edges. To address these issues, a boundary and position information mining (BPIM) framework is proposed for capturing object edge and location cues. BPIM includes a position information guidance (PIG) module for obtaining location information, a boundary information guidance (BIG) module for extracting object edges, a cross scale fusion (CSF) module for gradually assembling shallow-layer image features, a three feature fusion (TFF) module for progressively combining position and boundary information, and an adaptive weight fusion (AWF) module for flexibly merging deep-layer semantic features. BPIM can therefore integrate boundary, position, and scale information in an image for small object detection using attention mechanisms and cross-scale feature fusion strategies. Furthermore, BPIM not only improves the discrimination of contextual features through adaptive weight fusion with boundary information, but also enhances small object perception through cross-scale position fusion. On the VisDrone2021, DOTA1.0, and WiderPerson datasets, experimental results show that BPIM outperforms the baseline Yolov5-P2 and performs competitively with state-of-the-art methods at a comparable computational load.

Scalable Class-Incremental Learning Based on Parametric Neural Collapse

Dec 26, 2025

Abstract: Incremental learning often encounters challenges such as overfitting to new data and catastrophic forgetting of old data. Existing methods can effectively extend the model for new tasks while freezing the parameters of the old model, but they overlook structural efficiency, which leads to feature differences between modules and to class misalignment caused by evolving class distributions. To address these issues, we propose scalable class-incremental learning based on parametric neural collapse (SCL-PNC), which enables demand-driven, minimal-cost backbone expansion through an adapt-layer and turns the static Equiangular Tight Frame (ETF) into a dynamic parametric ETF framework that follows the incremental classes. This method can efficiently handle the model expansion problem as the number of categories increases in real-world scenarios. Additionally, to counteract feature drift in serial expansion models, a parallel expansion framework is presented with a knowledge distillation algorithm that aligns features across expansion modules. SCL-PNC can therefore not only provide a dynamic and extensible ETF classifier that addresses class misalignment due to evolving class distributions, but also ensure feature consistency through an adapt-layer with knowledge distillation between extended modules. By leveraging neural collapse, SCL-PNC induces the convergence of the incremental expansion model through a structured combination of the expandable backbone, the adapt-layer, and the parametric ETF classifier. Experiments on standard benchmarks demonstrate the effectiveness and efficiency of our proposed method. Our code is available at https://github.com/zhangchuangxin71-cyber/dynamic_ ETF2.

Keywords: Class incremental learning; Catastrophic forgetting; Neural collapse; Knowledge distillation; Expanded model.
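For readers unfamiliar with the ETF classifier mentioned in the abstract, the following is a minimal sketch of the fixed simplex ETF commonly used in the neural collapse literature. It is not the dynamic parametric variant of SCL-PNC, which the abstract does not specify. The function names `simplex_etf` and `column_gram` are hypothetical, and the sketch assumes the feature dimension is at least the number of classes.

```python
import math


def simplex_etf(num_classes, feat_dim):
    """Return a feat_dim x num_classes matrix (list of rows) whose columns
    form a simplex equiangular tight frame: unit-norm class prototypes with
    pairwise cosine similarity -1 / (num_classes - 1).

    Assumption for this sketch: the orthonormal basis is the first
    num_classes coordinate axes; any rotation of the result is also valid.
    """
    k = num_classes
    if k < 2 or feat_dim < k:
        raise ValueError("need 2 <= num_classes <= feat_dim")
    scale = math.sqrt(k / (k - 1))
    rows = []
    for i in range(feat_dim):
        if i < k:
            # Row i of sqrt(k / (k - 1)) * (I - (1/k) * ones)
            rows.append(
                [scale * ((1.0 if i == j else 0.0) - 1.0 / k) for j in range(k)]
            )
        else:
            # Remaining feature dimensions are unused in this construction
            rows.append([0.0] * k)
    return rows


def column_gram(matrix):
    """Gram matrix of the columns, used to check the ETF property."""
    k = len(matrix[0])
    return [
        [sum(row[a] * row[b] for row in matrix) for b in range(k)]
        for a in range(k)
    ]
```

With such prototypes, the Gram matrix has ones on the diagonal and the same negative value everywhere else, so every pair of classes is separated by the same angle.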

Gabor-guided transformer for single image deraining

Mar 12, 2024

Abstract: Image deraining has gained a great deal of attention as a way to address the challenges that harsh weather conditions pose for visual tasks. While convolutional neural networks (CNNs) are popular, their limited ability to capture global information may result in ineffective rain removal. Transformer-based methods with self-attention mechanisms improve on this, but they tend to distort high-frequency details that are crucial for image fidelity. To solve this problem, we propose the Gabor-guided transformer (Gabformer) for single image deraining. Incorporating the information processed by the Gabor filter into the query vector strengthens the focus on local texture features, and the properties of the filter also improve the robustness of the model to noise. Extensive experiments on the benchmarks demonstrate that our method outperforms state-of-the-art approaches.
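As background for the Gabor filter named above, here is a minimal sketch of the standard real-valued 2D Gabor kernel (a Gaussian envelope multiplied by a cosine carrier). How Gabformer injects the filter response into the query vector is not described in the abstract and is not sketched here. The function name `gabor_kernel` and all parameter defaults are assumptions chosen for illustration.

```python
import math


def gabor_kernel(size, sigma, theta, wavelength, gamma=0.5, psi=0.0):
    """Return a size x size real Gabor kernel as a list of rows.

    sigma: standard deviation of the Gaussian envelope
    theta: orientation of the carrier, in radians
    wavelength: wavelength of the cosine carrier, in pixels
    gamma: spatial aspect ratio of the envelope
    psi: phase offset of the carrier
    """
    if size % 2 == 0:
        raise ValueError("size must be odd so the kernel has a center pixel")
    half = size // 2
    cos_t, sin_t = math.cos(theta), math.sin(theta)
    kernel = []
    for y in range(-half, half + 1):
        row = []
        for x in range(-half, half + 1):
            # Rotate coordinates so the carrier runs along direction theta
            xr = x * cos_t + y * sin_t
            yr = -x * sin_t + y * cos_t
            envelope = math.exp(-(xr * xr + (gamma * yr) ** 2) / (2.0 * sigma ** 2))
            carrier = math.cos(2.0 * math.pi * xr / wavelength + psi)
            row.append(envelope * carrier)
        kernel.append(row)
    return kernel
```

Convolving an image with a small bank of such kernels at several orientations gives orientation-selective texture responses, which is the kind of local high-frequency cue the abstract refers to.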

High-order structure preserving graph neural network for few-shot learning

May 29, 2020

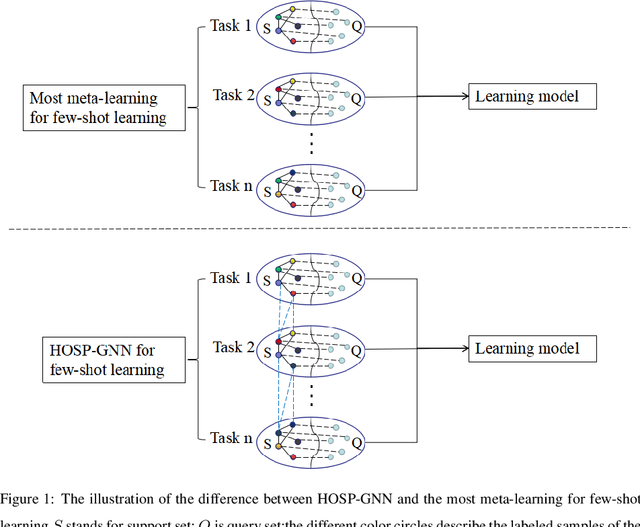

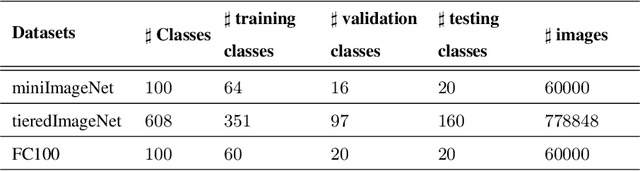

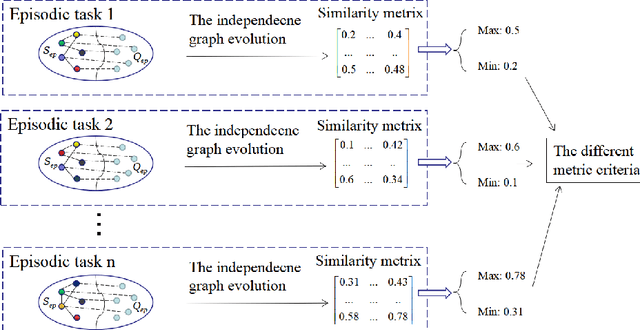

Abstract: Few-shot learning uses the similarity metric of meta-learning to find latent structure between prior knowledge and queried data, and builds a discriminative model for recognizing new categories from only a few labeled samples. Most existing methods model the similarity relationships of samples within a task and generalize the model to identify new categories. However, relationships between samples in separate tasks are difficult to take into account because each task uses a different metric criterion. In contrast, the proposed high-order structure preserving graph neural network (HOSP-GNN) further explores the rich structure of the samples to predict the labels of the queried data on a graph. The graph lets the structure evolve so that categories are explicitly discriminated, by iteratively updating the high-order structure relationship (a relative metric over multiple samples instead of a pairwise sample metric) under manifold structure constraints. HOSP-GNN not only mines the high-order structure to complement the relevance between samples that may be divided into different tasks in meta-learning, but also generates the structure updating rule from the manifold constraint. Furthermore, HOSP-GNN does not need to retrain the learning model to recognize new classes, and its high-order structure generalizes well, which supports model adaptability. Experiments show that HOSP-GNN outperforms state-of-the-art methods on supervised and semi-supervised few-shot learning on three benchmark datasets: miniImageNet, tieredImageNet, and FC100.

Deep graph learning for semi-supervised classification

May 29, 2020

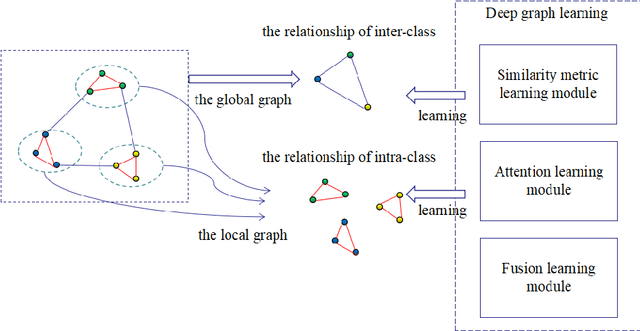

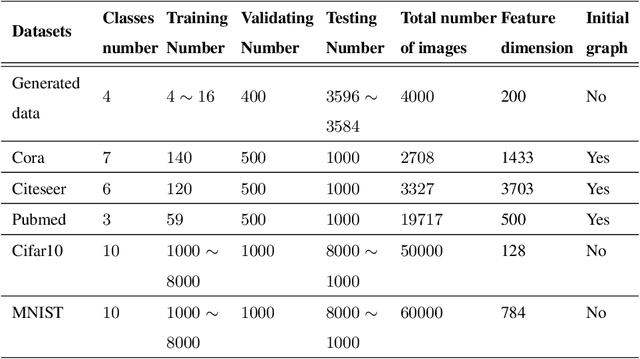

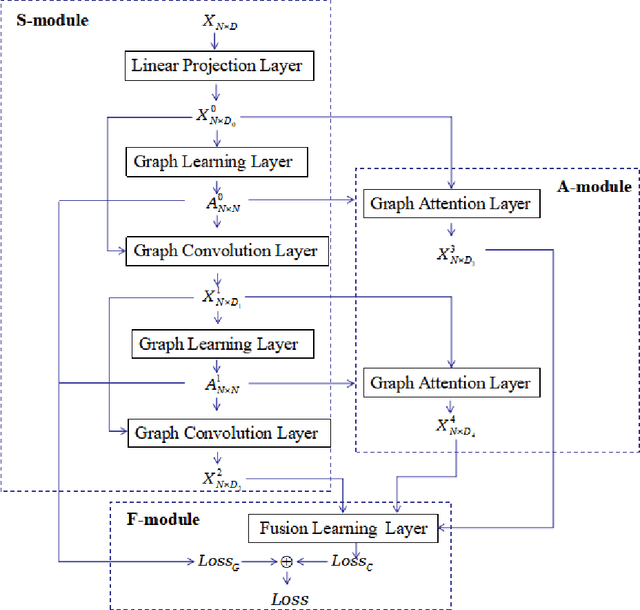

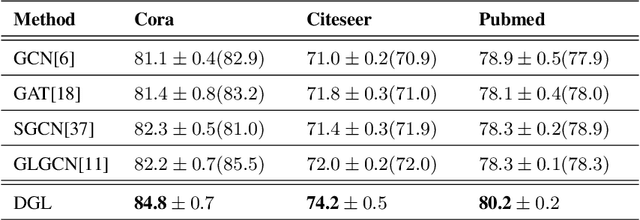

Abstract: Graph learning (GL) can dynamically capture the distribution structure (graph structure) of data based on graph convolutional networks (GCN), and the quality of the learned graph structure directly influences GCN performance in semi-supervised classification. Existing methods mostly add a computational layer and related losses to GCN to explore the global graph (graph structure measured from all data samples) or the local graph (graph structure measured from local data samples). The global graph emphasizes the overall structure of inter-class data, while the local graph tends toward the neighborhood structure of intra-class data. However, these graphs are interdependent, so it is difficult to balance them simultaneously during learning. To model this interdependence, deep graph learning (DGL) is proposed to find a better graph representation for semi-supervised classification. DGL not only learns the global structure by updating the metric computation of the previous layer, but also mines the local structure by reassigning local weights in the next layer. Furthermore, DGL fuses the different structures by dynamically encoding their interdependence, and mines the relationship between them more deeply through hierarchical progressive learning to improve semi-supervised classification. Experiments demonstrate that DGL outperforms state-of-the-art methods on three benchmark citation network datasets (Citeseer, Cora, and Pubmed) and two benchmark image datasets (MNIST and Cifar10).

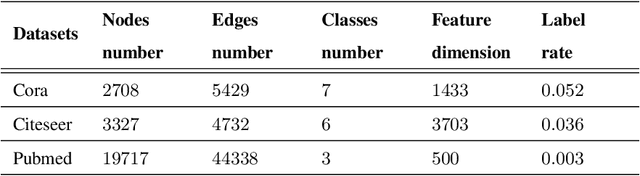

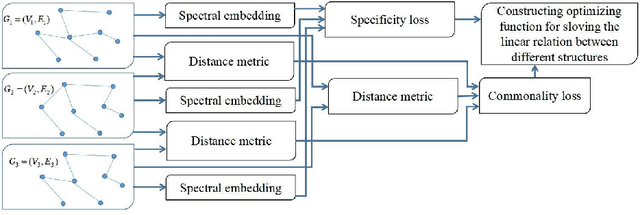

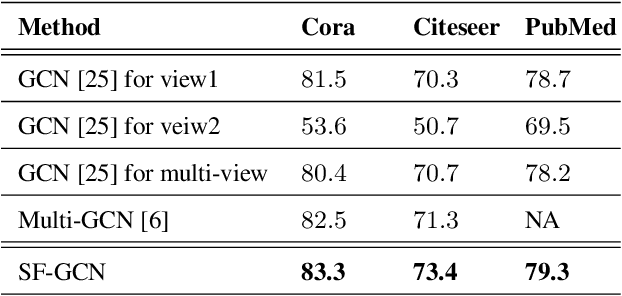

Structure fusion based on graph convolutional networks for semi-supervised classification

Jul 02, 2019

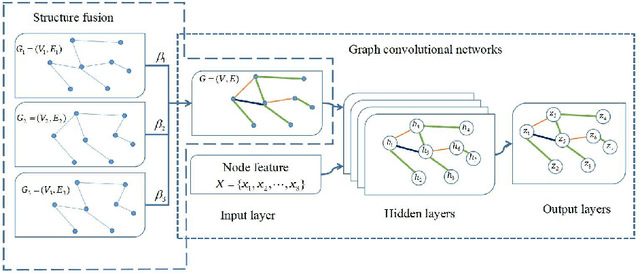

Abstract: Faced with the diversity and complexity of multi-view data in semi-supervised classification, most existing graph convolutional networks focus on constructing the network architecture or preserving the salient graph structure, and ignore the contribution of the complete graph structure to semi-supervised classification. To mine a more complete distribution structure from multi-view data while accounting for both specificity and commonality, we propose structure fusion based on graph convolutional networks (SF-GCN) to improve semi-supervised classification. SF-GCN not only retains the special characteristics of each view through spectral embedding, but also captures the common style of multi-view data through a distance metric between the multi-graph structures. Assuming a linear relationship between the multi-graph structures, we construct the optimization function of the structure fusion model by balancing the specificity loss and the commonality loss. Solving this function simultaneously yields the fused spectral embedding of the multi-view data and the fused structure, which serves as the adjacency matrix input to graph convolutional networks for semi-supervised classification. Experiments demonstrate that SF-GCN outperforms the state of the art on three challenging citation network datasets: Cora, Citeseer, and Pubmed.
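To illustrate how an adjacency matrix is typically consumed by a graph convolutional network, the sketch below applies the widely used symmetric normalization with self-loops and one propagation step. This is the standard GCN preprocessing, not the structure fusion optimization of SF-GCN, and the learned weight matrix and nonlinearity of a full layer are omitted. The names `normalize_adjacency` and `propagate` are hypothetical.

```python
import math


def normalize_adjacency(adj):
    """Return D^{-1/2} (A + I) D^{-1/2} for a square adjacency matrix
    given as a list of rows, where D is the degree matrix of A + I."""
    n = len(adj)
    # Add self-loops so each node keeps its own features
    a_hat = [
        [adj[i][j] + (1.0 if i == j else 0.0) for j in range(n)]
        for i in range(n)
    ]
    deg = [sum(row) for row in a_hat]
    return [
        [a_hat[i][j] / math.sqrt(deg[i] * deg[j]) for j in range(n)]
        for i in range(n)
    ]


def propagate(adj_norm, features):
    """One propagation step: multiply the normalized adjacency by the node
    feature matrix (no weights or activation in this sketch)."""
    n = len(adj_norm)
    d = len(features[0])
    return [
        [sum(adj_norm[i][k] * features[k][c] for k in range(n)) for c in range(d)]
        for i in range(n)
    ]
```

For a two-node graph with a single edge, every entry of the normalized matrix is 0.5, so one propagation step replaces each node's feature with the average of the two.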

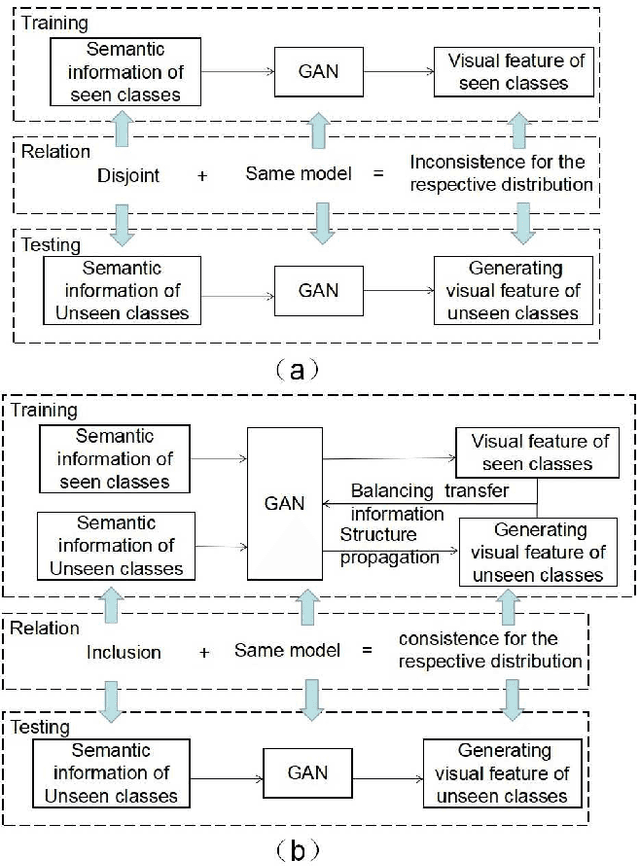

Transfer feature generating networks with semantic classes structure for zero-shot learning

Mar 06, 2019

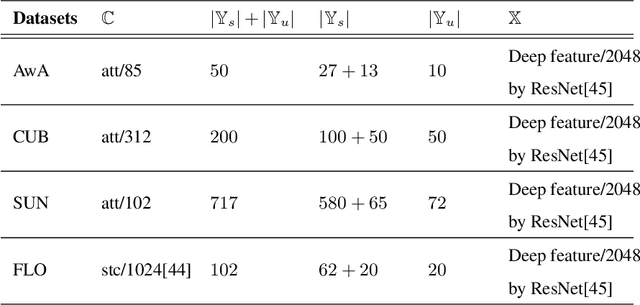

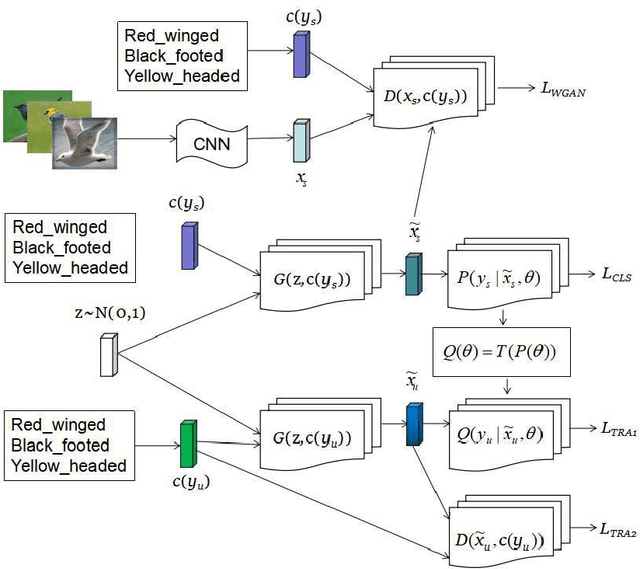

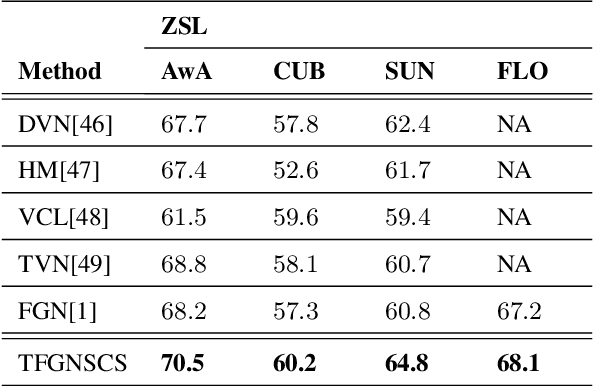

Abstract: Because features generated by a model trained on seen classes are inconsistent with the distribution of unseen classes, most existing feature generating networks, which adversarially learn the distribution of semantic classes, struggle to achieve satisfactory performance on challenging generalized zero-shot learning (GZSL). To alleviate the negative influence of this inconsistency on zero-shot learning (ZSL), transfer feature generating networks with semantic classes structure (TFGNSCS) are proposed to construct a network model that improves the performance of ZSL and GZSL. TFGNSCS not only considers the semantic structure relationship between seen and unseen classes, but also learns the difference between generated features by balancing the information transferred between seen and unseen classes in the network. The proposed method integrates a Wasserstein generative adversarial network with a classification loss and a transfer loss to generate sufficient CNN features, on which softmax classifiers are trained for ZSL and GZSL. Experiments demonstrate that TFGNSCS outperforms the state of the art on four challenging datasets in GZSL: CUB, FLO, SUN, and AWA.

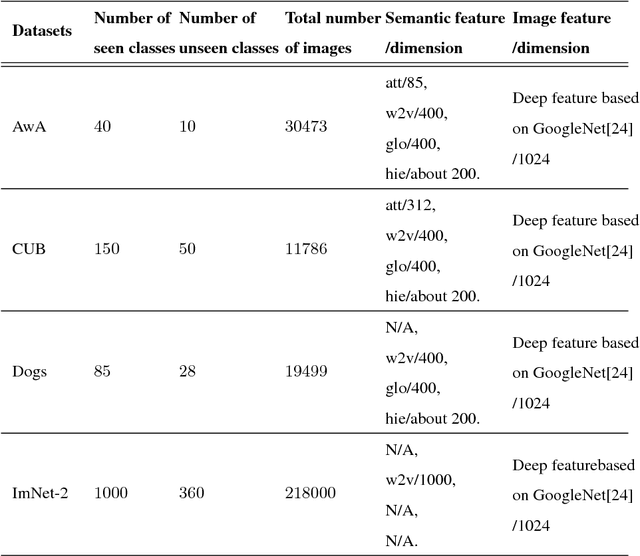

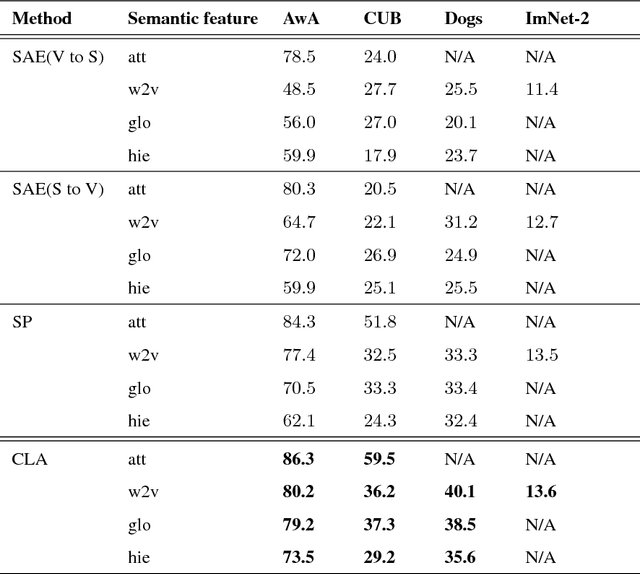

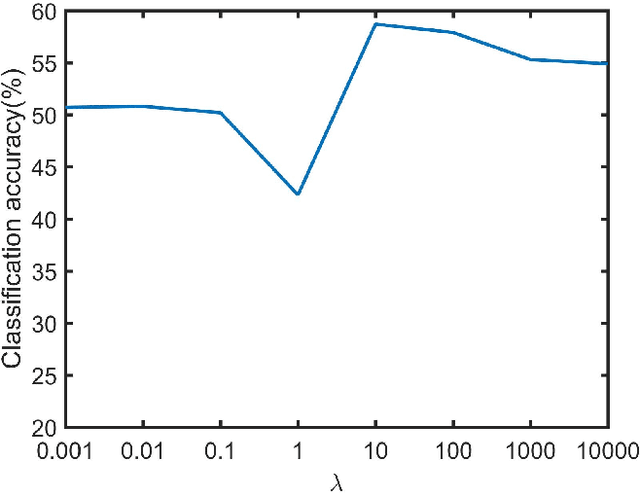

Class label autoencoder for zero-shot learning

Jan 25, 2018

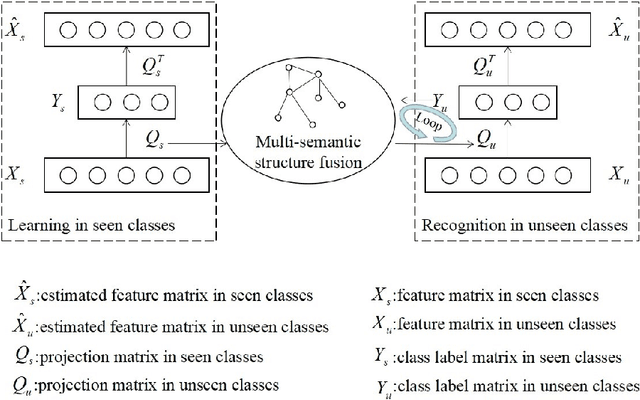

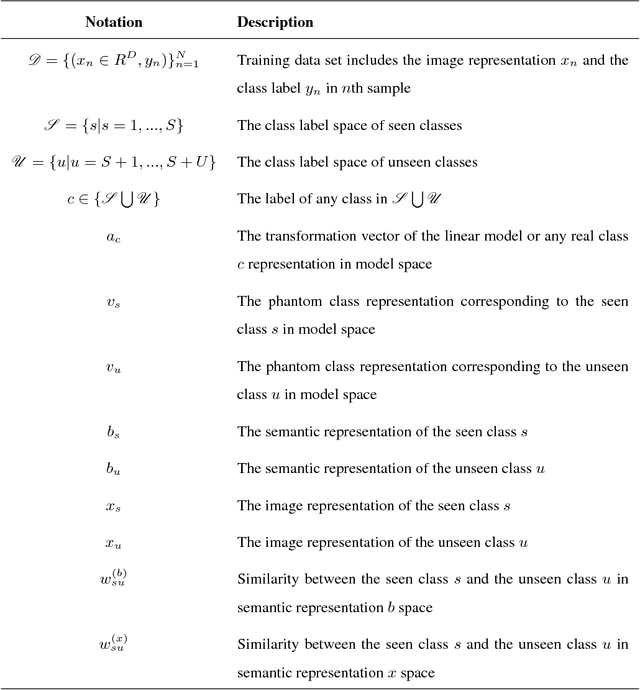

Abstract: Existing zero-shot learning (ZSL) methods usually learn a projection function between a feature space and a semantic embedding space (text or attribute space) on the seen training classes or the unseen testing classes. However, this projection function cannot be used between the feature space and multiple semantic embedding spaces, which are diverse because they describe different semantic information about the same class. To deal with this issue, we present a novel ZSL method based on learning a class label autoencoder (CLA). CLA not only builds a uniform framework that adapts to multiple semantic embedding spaces, but also constructs an encoder-decoder mechanism that constrains the bidirectional projection between the feature space and the class label space. Moreover, CLA jointly considers the relationship of feature classes and the relevance of semantic classes to improve zero-shot classification. The CLA solution provides both unseen class labels and the relation between the different class representations (feature or semantic information), which can encode the intrinsic structure of classes. Extensive experiments demonstrate that CLA outperforms state-of-the-art methods on four benchmark datasets: AwA, CUB, Dogs, and ImNet-2.

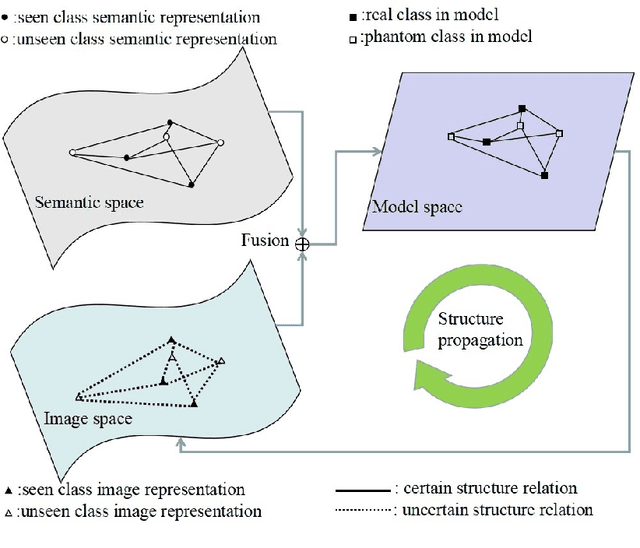

Structure propagation for zero-shot learning

Nov 27, 2017

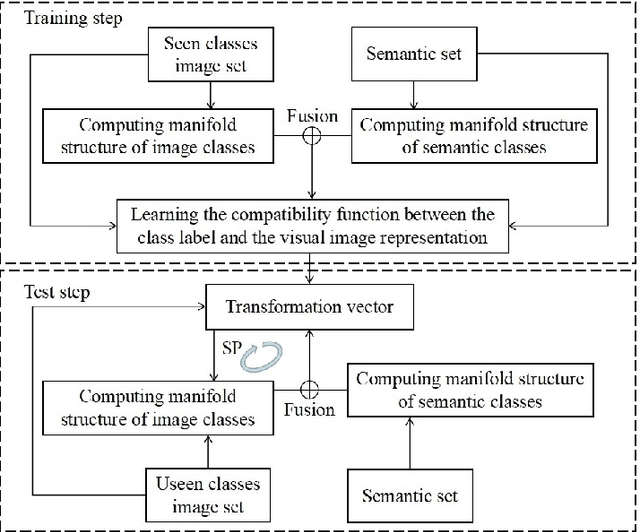

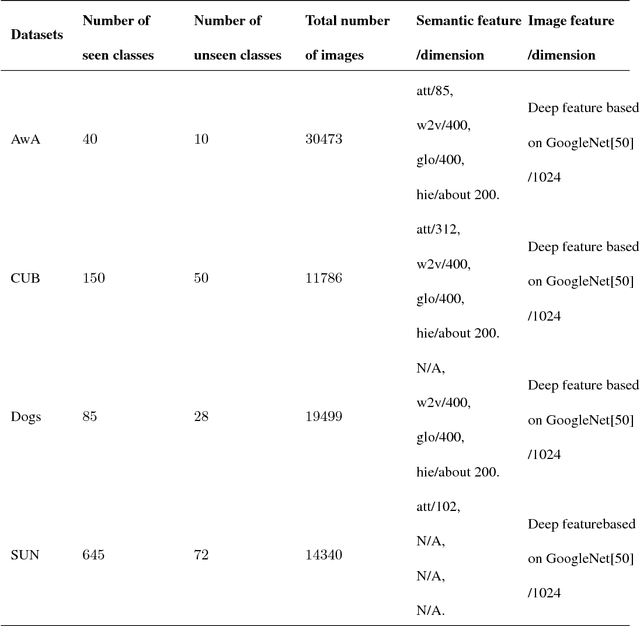

Abstract: The key to zero-shot learning (ZSL) is finding an information transfer model that bridges the gap between images and semantic information (texts or attributes). Existing ZSL methods usually construct the compatibility function between images and class labels while considering the relevance of the semantic classes (the manifold structure of semantic classes). However, the relationship of image classes (the manifold structure of image classes) is also very important for constructing the compatibility model. Because unseen classes make the relationship among image classes difficult to capture, the manifold structure of image classes is often ignored in ZSL. So that the manifold structure of image classes and that of semantic classes complement each other, we propose structure propagation (SP) to improve ZSL classification performance. SP jointly considers the manifold structure of image classes and that of semantic classes to approximate the intrinsic structure of object classes. Moreover, SP describes the constraint condition between the compatibility function and these manifold structures to balance the influence of the structure propagation iterations. The SP solution provides not only unseen class labels but also the relationship between the two manifold structures, which encodes the positive transfer in structure propagation. Experimental results demonstrate that SP attains promising results on the AwA, CUB, Dogs, and SUN databases.
