Xingyilang Yin

MLLM-4D: Towards Visual-based Spatial-Temporal Intelligence

Feb 28, 2026

Abstract:Humans are born with vision-based 4D spatial-temporal intelligence, which enables us to perceive and reason about the evolution of 3D space over time from purely visual inputs. Despite its importance, this capability remains a significant bottleneck for current multimodal large language models (MLLMs). To tackle this challenge, we introduce MLLM-4D, a comprehensive framework designed to bridge the gaps in training data curation and model post-training for spatiotemporal understanding and reasoning. On the data front, we develop a cost-efficient data curation pipeline that repurposes existing stereo video datasets into high-quality 4D spatiotemporal instructional data. This results in the MLLM4D-2M and MLLM4D-R1-30k datasets for Supervised Fine-Tuning (SFT) and Reinforcement Fine-Tuning (RFT), alongside MLLM4D-Bench for comprehensive evaluation. Regarding model training, our post-training strategy establishes a foundational 4D understanding via SFT and further catalyzes 4D reasoning capabilities by employing Group Relative Policy Optimization (GRPO) with specialized Spatiotemporal Chain of Thought (ST-CoT) prompting and Spatiotemporal reward functions (ST-reward) without involving the modification of architecture. Extensive experiments demonstrate that MLLM-4D achieves state-of-the-art spatial-temporal understanding and reasoning capabilities from purely 2D RGB inputs. Project page: https://github.com/GVCLab/MLLM-4D.
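As a rough illustration of the group-relative idea behind GRPO mentioned in the abstract above, the sketch below normalizes each sampled response's reward against the other responses drawn for the same prompt. It is a minimal sketch, not the MLLM-4D implementation: the function name, the reward values, the use of the population standard deviation, and the `eps` stabilizer are all assumptions, and the paper's ST-reward is not reproduced here.

```python
from statistics import mean, pstdev


def group_relative_advantages(rewards, eps=1e-8):
    """Normalize each reward against the mean and spread of its own group.

    `rewards` holds one scalar per response sampled for the same prompt.
    `eps` (an assumption) avoids division by zero when all rewards match.
    """
    mu = mean(rewards)
    sigma = pstdev(rewards)  # population std; an implementation choice
    return [(r - mu) / (sigma + eps) for r in rewards]


# Hypothetical rewards for four responses to one spatiotemporal question.
rewards = [1.0, 0.0, 0.5, 0.5]
advantages = group_relative_advantages(rewards)
```

Responses that score above the group mean receive a positive advantage and those below receive a negative one, so no separate value model is needed to form the baseline.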

SA3DIP: Segment Any 3D Instance with Potential 3D Priors

Nov 06, 2024

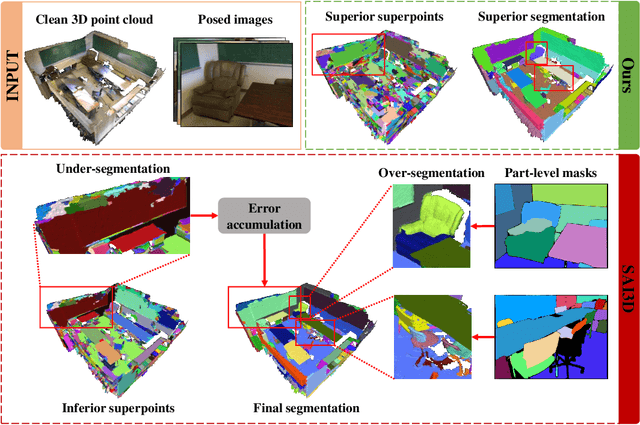

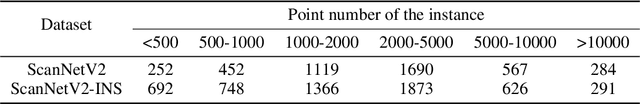

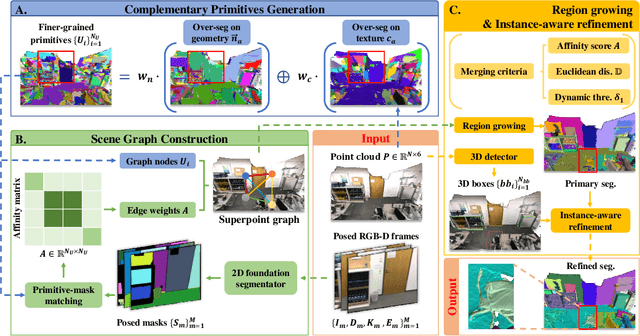

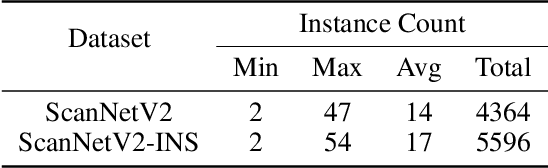

Abstract:The proliferation of 2D foundation models has sparked research into adapting them for open-world 3D instance segmentation. Recent methods introduce a paradigm that leverages superpoints as geometric primitives and incorporates 2D multi-view masks from the Segment Anything Model (SAM) as merging guidance, achieving outstanding zero-shot instance segmentation results. However, the limited use of 3D priors restricts the segmentation performance. Previous methods calculate the 3D superpoints solely based on normals estimated from spatial coordinates, resulting in under-segmentation for instances with similar geometry. Besides, the heavy reliance on SAM and hand-crafted algorithms in 2D space leads to over-segmentation due to SAM's inherent part-level segmentation tendency. To address these issues, we propose SA3DIP, a novel method for Segmenting Any 3D Instances via exploiting potential 3D Priors. Specifically, on one hand, we generate complementary 3D primitives based on both geometric and textural priors, which reduces the initial errors that accumulate in subsequent procedures. On the other hand, we introduce supplemental constraints from the 3D space by using a 3D detector to guide a further merging process. Furthermore, we notice a considerable portion of low-quality ground truth annotations in the ScanNetV2 benchmark, which affects fair evaluation. Thus, we present ScanNetV2-INS with complete ground truth labels and supplementary instances for 3D class-agnostic instance segmentation. Experimental evaluations on various 2D-3D datasets demonstrate the effectiveness and robustness of our approach. Our code and proposed ScanNetV2-INS dataset are available HERE.

Point Deformable Network with Enhanced Normal Embedding for Point Cloud Analysis

Dec 20, 2023

Abstract:Recently, MLP-based methods have shown strong performance in point cloud analysis. Simple MLP architectures are able to learn geometric features in local point groups yet fail to model long-range dependencies directly. In this paper, we propose Point Deformable Network (PDNet), a concise MLP-based network that can capture long-range relations with strong representation ability. Specifically, we put forward the Point Deformable Aggregation Module (PDAM) to improve representation capability in both long-range dependency and adaptive aggregation among points. For each query point, PDAM aggregates information from deformable reference points rather than points in limited local areas. The deformable reference points are generated in a data-dependent manner, and we initialize them according to the input point positions. Additional offsets and modulation scalars are learned on the whole point features, which shift the deformable reference points to the regions of interest. We also suggest estimating the normal vector for point clouds and applying Enhanced Normal Embedding (ENE) to the geometric extractors to improve the representation ability of single points. Extensive experiments and ablation studies on various benchmarks demonstrate the effectiveness and superiority of our PDNet.

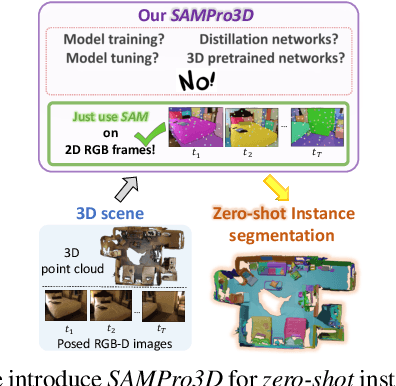

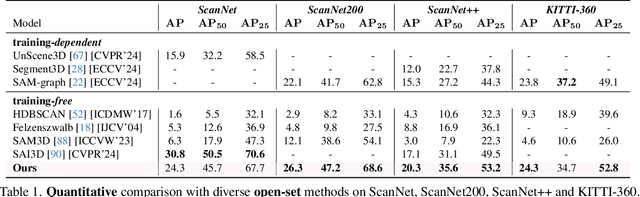

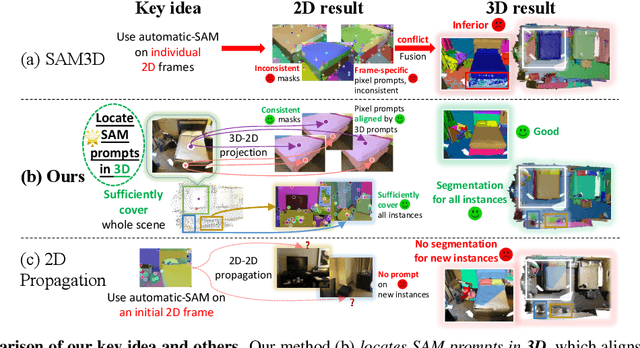

SAMPro3D: Locating SAM Prompts in 3D for Zero-Shot Scene Segmentation

Nov 29, 2023

Abstract:We introduce SAMPro3D for zero-shot 3D indoor scene segmentation. Given the 3D point cloud and multiple posed 2D frames of 3D scenes, our approach segments 3D scenes by applying the pretrained Segment Anything Model (SAM) to 2D frames. Our key idea involves locating 3D points in scenes as natural 3D prompts to align their projected pixel prompts across frames, ensuring frame-consistency in both pixel prompts and their SAM-predicted masks. Moreover, we suggest filtering out low-quality 3D prompts based on feedback from all 2D frames to enhance segmentation quality. We also propose to consolidate different 3D prompts if they are segmenting the same object, yielding a more comprehensive segmentation. Notably, our method does not require any additional training on domain-specific data, enabling us to preserve the zero-shot power of SAM. Extensive qualitative and quantitative results show that our method consistently achieves higher quality and more diverse segmentation than previous zero-shot or fully supervised approaches, and in many cases even surpasses human-level annotations. The project page can be accessed at https://mutianxu.github.io/sampro3d/.
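To make the notion of a "projected pixel prompt" in the abstract above concrete, here is a minimal sketch of projecting one 3D point into a posed frame. It assumes a standard pinhole camera model; the function name, the 3x4 `[R | t]` pose layout, and the intrinsic values are hypothetical and not taken from the SAMPro3D code.

```python
def project_point(point_world, world_to_cam, fx, fy, cx, cy):
    """Project a 3D world point to pixel coordinates (pinhole model).

    `world_to_cam` is a 3x4 row-major [R | t] matrix (assumed layout).
    Returns (u, v, depth), or None if the point lies behind the camera.
    """
    x, y, z = point_world
    # Transform the point from world coordinates to camera coordinates.
    xc, yc, zc = (r[0] * x + r[1] * y + r[2] * z + r[3] for r in world_to_cam)
    if zc <= 0:
        return None  # not visible in this frame
    # Perspective division followed by the intrinsic scaling and offset.
    return (fx * xc / zc + cx, fy * yc / zc + cy, zc)


# Hypothetical frame: identity pose, 640x480 image, focal length 500 px.
identity_pose = [[1, 0, 0, 0], [0, 1, 0, 0], [0, 0, 1, 0]]
pixel = project_point((0.5, -0.25, 2.0), identity_pose,
                      fx=500, fy=500, cx=320, cy=240)
```

Projecting the same 3D point with each frame's pose yields one pixel location per frame, which is what allows a single 3D prompt to stay consistent across views. A real pipeline would also need a bounds check and an occlusion test against the depth map, both omitted here.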
