Xiaoteng Zhou

A Data-Driven Paradigm-Based Image Denoising and Mosaicking Approach for High-Resolution Acoustic Camera

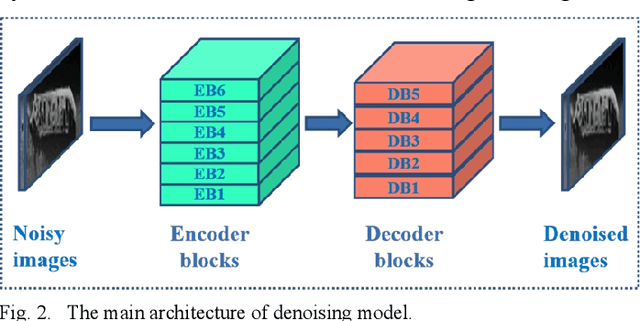

Feb 19, 2025

Abstract: In this work, an approach based on a data-driven paradigm to denoise and mosaic acoustic camera images is proposed. Acoustic cameras, also known as 2D forward-looking sonar, can collect high-resolution acoustic images in dark and turbid water. However, due to the unique sensor imaging mechanism, the main vision-based processing methods, such as image denoising and mosaicking, are still in their early stages. Because of the complex noise interference in acoustic images and the narrow field of view of acoustic cameras, it is difficult to restore the entire detection scene even if enough acoustic images are collected. Related research addressing these issues focuses on designing handcrafted operators for acoustic image processing based on prior knowledge and sensor models. However, such methods lack robustness due to noise interference and insufficient feature detail in acoustic images. This study proposes an acoustic image denoising and mosaicking method based on a data-driven paradigm and tests it experimentally on collected acoustic camera images. The results demonstrate the effectiveness of the proposal.

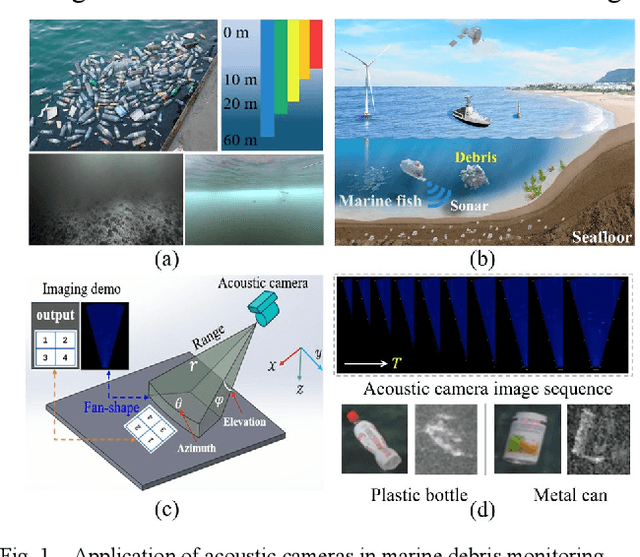

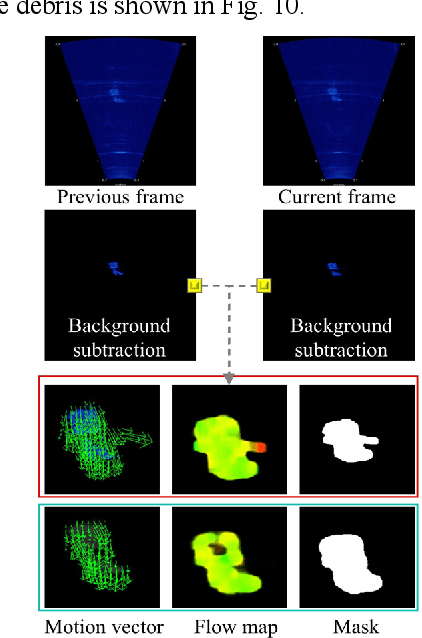

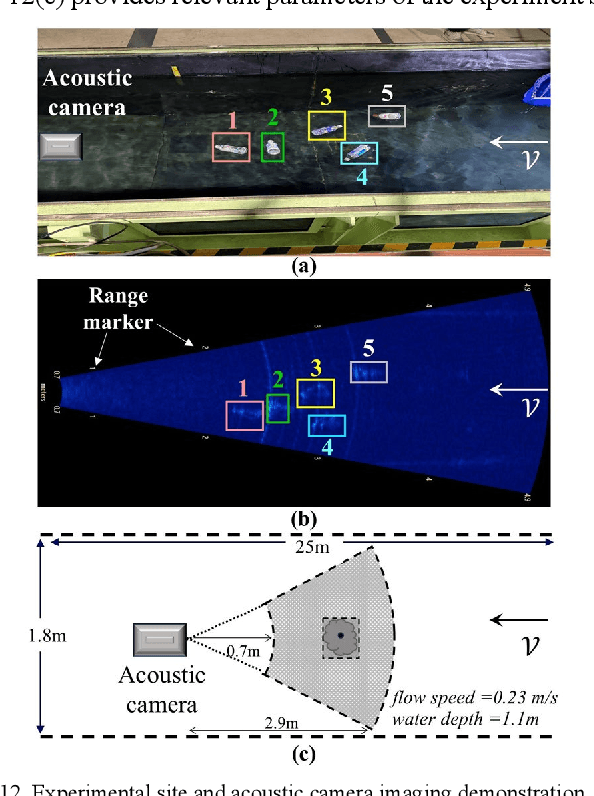

Enhancing Marine Debris Acoustic Monitoring by Optical Flow-Based Motion Vector Analysis

Dec 28, 2024

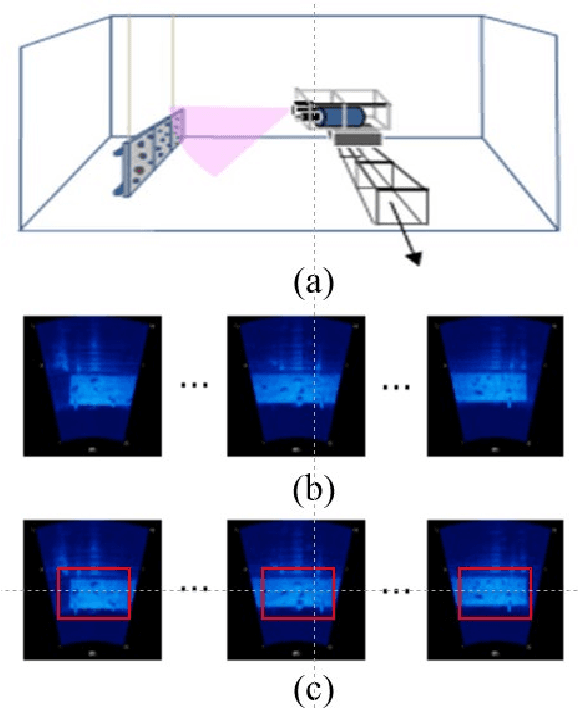

Abstract: With the development of coastal construction, a large amount of human-generated waste, particularly plastic debris, is continuously entering the ocean, posing a severe threat to marine ecosystems. The key to effectively addressing plastic pollution lies in the ability to autonomously monitor such debris. Currently, marine debris monitoring relies primarily on optical sensors, but their applicability to underwater and seafloor areas is limited by low visibility. The acoustic camera, also known as high-resolution forward-looking sonar (FLS), has demonstrated considerable potential for the autonomous monitoring of marine debris, as it is unaffected by water turbidity and darkness. However, the appearance of targets in sonar images changes with the imaging viewpoint, and challenges such as low signal-to-noise ratio, weak textures, and imaging distortions present significant obstacles to debris monitoring based on prior class labels. This paper proposes an optical flow-based method for marine debris monitoring that aims to fully utilize the time series information captured by the acoustic camera, enhancing monitoring performance without relying on prior category labels of the targets. The proposed method was validated through experiments conducted in a circulating water tank, demonstrating its feasibility and robustness. This approach holds promise for providing novel insights into the spatial and temporal distribution of debris.
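To illustrate the general idea of label-free monitoring from motion vectors, the following is a minimal sketch, not the method from the paper. It assumes a dense flow field has already been computed between two consecutive sonar frames by some optical flow estimator; the function names `moving_pixel_mask` and `mean_motion_vector` and the threshold `min_magnitude` are hypothetical and chosen only for this example.

```python
import math


def moving_pixel_mask(flow, min_magnitude=1.0):
    """Mark pixels whose motion vector is at least min_magnitude long.

    flow is a list of rows; each row is a list of (dx, dy) displacements
    in pixels per frame. The threshold value is an assumed tuning knob.
    """
    return [
        [math.hypot(dx, dy) >= min_magnitude for dx, dy in row]
        for row in flow
    ]


def mean_motion_vector(flow, mask):
    """Average the motion vectors of the masked pixels, or None if empty."""
    selected = [
        vec
        for flow_row, mask_row in zip(flow, mask)
        for vec, keep in zip(flow_row, mask_row)
        if keep
    ]
    if not selected:
        return None
    count = len(selected)
    return (
        sum(dx for dx, _ in selected) / count,
        sum(dy for _, dy in selected) / count,
    )


# Toy 2x2 flow field: two nearly static pixels and two moving ones.
flow = [
    [(0.0, 0.0), (3.0, 4.0)],
    [(0.1, 0.1), (1.0, 0.0)],
]
mask = moving_pixel_mask(flow, min_magnitude=1.0)
drift = mean_motion_vector(flow, mask)
```

Thresholding on vector magnitude needs no class labels, which is the property the abstract emphasizes; a practical system would also have to handle sensor ego-motion and noise-induced flow, which this sketch ignores.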

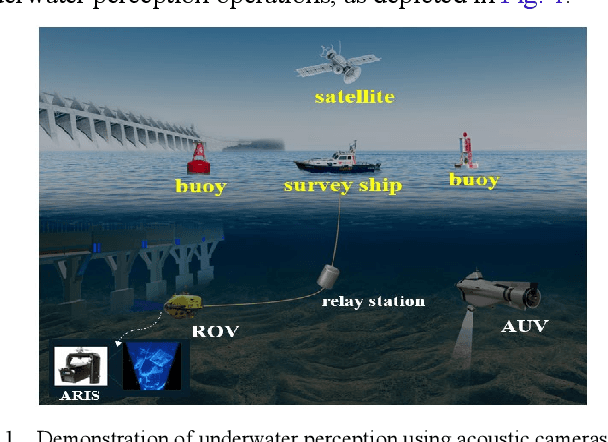

Target Height Estimation Using a Single Acoustic Camera for Compensation in 2D Seabed Mosaicking

Nov 19, 2024

Abstract: This letter proposes a novel approach for compensating target height data in 2D seabed mosaicking for low-visibility underwater perception. Acoustic cameras are effective sensors for sensing marine environments due to their high-resolution imaging capabilities and robustness to darkness and turbidity. However, the loss of the elevation angle during the imaging process means that the original acoustic camera images lack target height information, which reduces seabed mosaicking to a simplistic 2D representation. When perceiving cluttered and unexplored marine environments, target height data is crucial for marine robots to avoid collisions. This study proposes a novel approach for estimating seabed target height using a single acoustic camera and integrates the height data into 2D seabed mosaicking to compensate for the missing third dimension of seabed targets. Unlike classic methods that model the loss of the elevation angle to achieve 3D seabed reconstruction, this study focuses on utilizing available acoustic cast shadow cues and simple sensor motion to quickly estimate target height. The feasibility of the proposal is verified through a water tank experiment and a simulation experiment.
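As background on why a cast shadow carries height information, the sketch below uses the textbook similar-triangles relation for a sonar above a flat seabed. It is not the estimation procedure from the letter, which also exploits sensor motion. The assumptions are a flat seabed, a known sensor altitude, and slant ranges read off the image; the function name `height_from_shadow` and its parameters are hypothetical.

```python
def height_from_shadow(sensor_altitude, range_to_target_top, range_to_shadow_end):
    """Estimate target height from an acoustic cast shadow.

    Assumes a flat seabed. The ray from the sensor that grazes the top of
    the target reaches the seabed at the far end of the shadow, so height
    above the seabed falls linearly along that ray:

        height = altitude * (R_end - R_top) / R_end

    All arguments are in the same length unit (for example, meters).
    """
    if sensor_altitude <= 0:
        raise ValueError("sensor altitude must be positive")
    if not 0 < range_to_target_top < range_to_shadow_end:
        raise ValueError("need 0 < range_to_target_top < range_to_shadow_end")
    if range_to_shadow_end < sensor_altitude:
        # The shadow end lies on the seabed, so it cannot be closer
        # to the sensor than the sensor's own altitude.
        raise ValueError("shadow end cannot be closer than the sensor altitude")
    shadow_length = range_to_shadow_end - range_to_target_top
    return sensor_altitude * shadow_length / range_to_shadow_end


# Sensor 2 m above the seabed, target top at 3 m slant range,
# shadow ending at 4 m slant range.
estimated_height = height_from_shadow(2.0, 3.0, 4.0)
```

With these example numbers the shadow spans 1 m of a 4 m ray from a 2 m altitude, so the estimate is 0.5 m. The relation degrades on sloped or rough seabeds, where the flat-seabed assumption does not hold.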

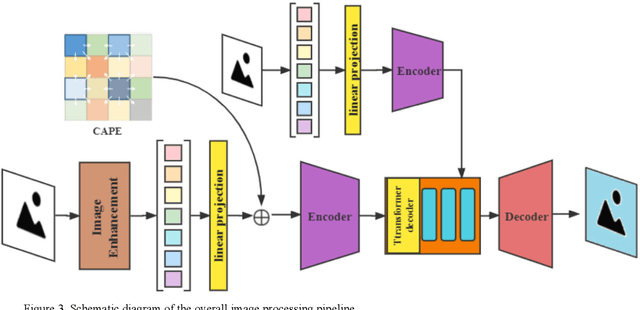

A Self-Supervised Denoising Strategy for Underwater Acoustic Camera Imageries

Jun 05, 2024

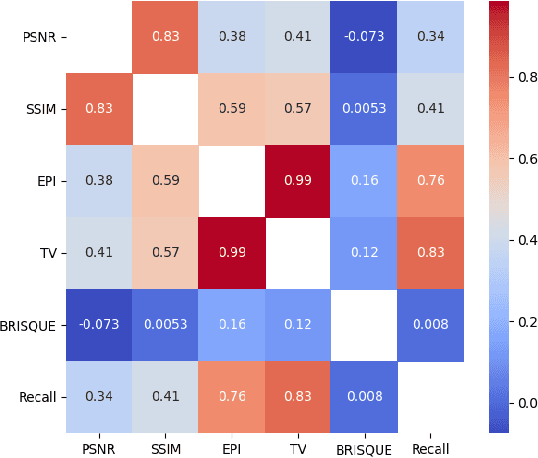

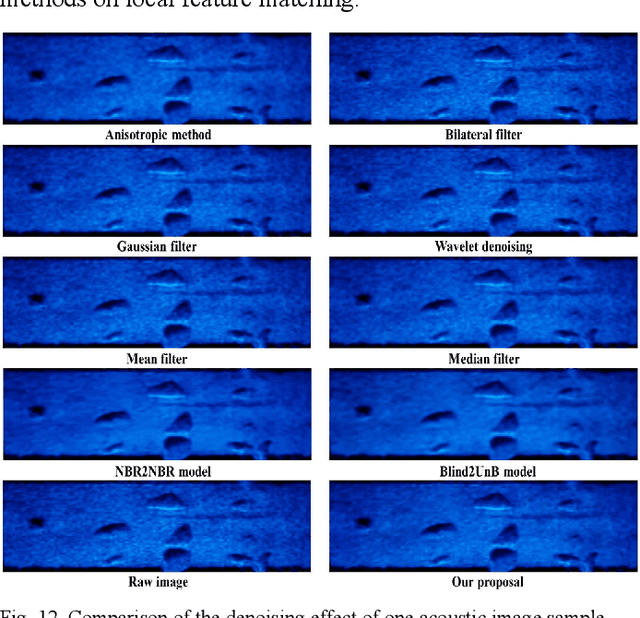

Abstract: In low-visibility marine environments characterized by turbidity and darkness, acoustic cameras serve as visual sensors capable of generating high-resolution 2D sonar images. However, acoustic camera images are corrupted by complex noise and are difficult for downstream visual algorithms to ingest directly. This paper introduces a novel strategy for denoising acoustic camera images using deep learning techniques, which comprises two principal components: a self-supervised denoising framework and a fine feature-guided block. Additionally, the study explores the relationship between the level of image denoising and the improvement in feature-matching performance. Experimental results show that the proposed denoising strategy can effectively filter acoustic camera images without prior knowledge of the noise model. The denoising process is nearly end-to-end, without complex parameter tuning or post-processing. It successfully removes noise while preserving fine feature details, thereby enhancing the performance of local feature matching.

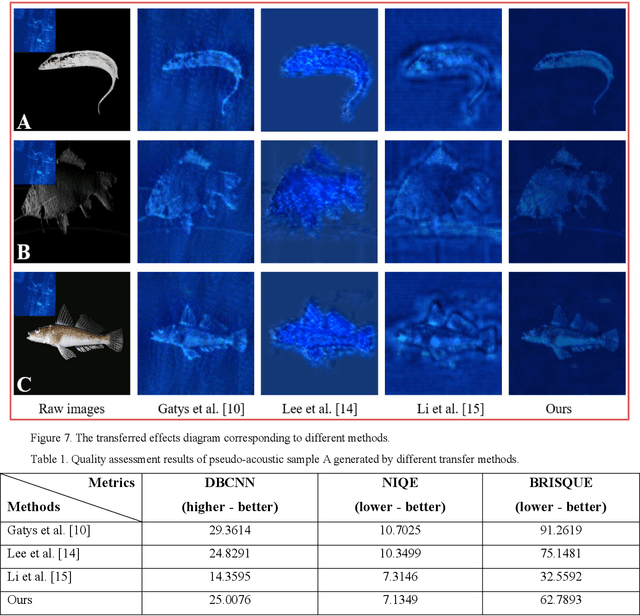

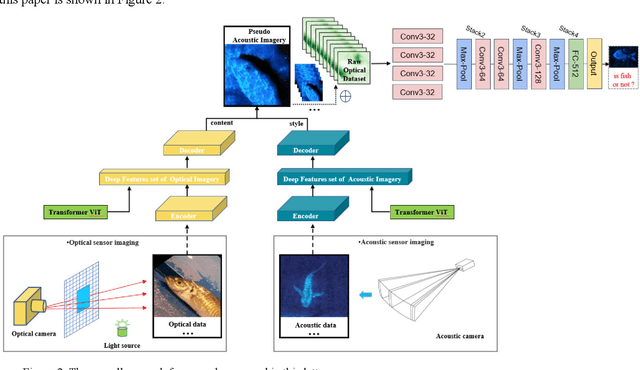

Learning Visual Representation of Underwater Acoustic Imagery Using Transformer-Based Style Transfer Method

Nov 10, 2022

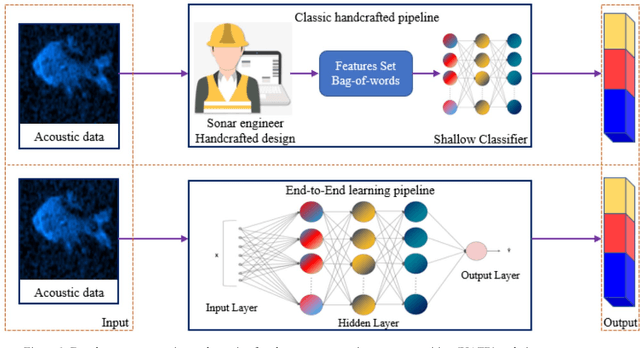

Abstract: Underwater automatic target recognition (UATR) has been a challenging research topic in ocean engineering. Although deep learning has brought opportunities for target recognition on land and in the air, underwater target recognition techniques based on deep learning have lagged due to limited sensor performance and the small size of trainable datasets. This letter proposes a framework for learning the visual representation of underwater acoustic imagery, built around a transformer-based style transfer model. It can replace the low-level texture features of optical images with the visual features of underwater acoustic imagery while preserving their original high-level semantic content. The proposed framework can make full use of rich optical image datasets to generate a pseudo-acoustic image dataset and use it as the initial sample set to train an underwater acoustic target recognition model. The experiments use the dual-frequency identification sonar (DIDSON) as the underwater acoustic data source and take fish, the most common marine creature, as the research subject. Experimental results show that the proposed method can generate high-quality, high-fidelity pseudo-acoustic samples, achieve acoustic data augmentation, and support research on domain transfer between underwater acoustic and optical images.

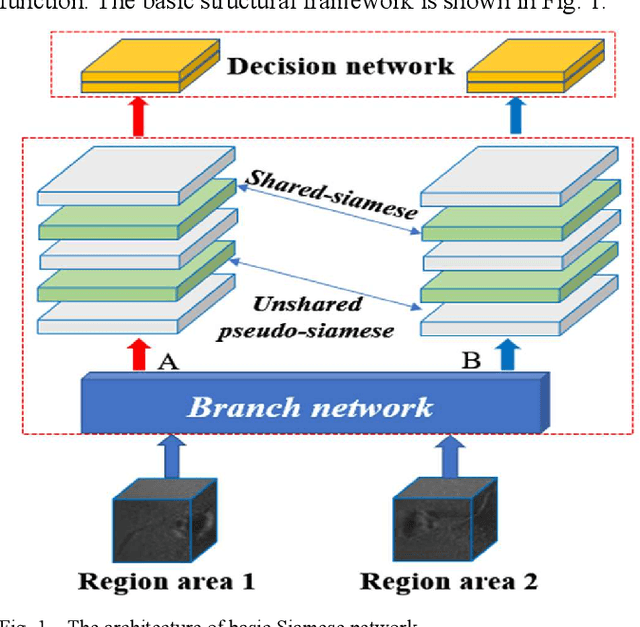

Nonlinear Intensity Sonar Image Matching based on Deep Convolution Features

Nov 29, 2021

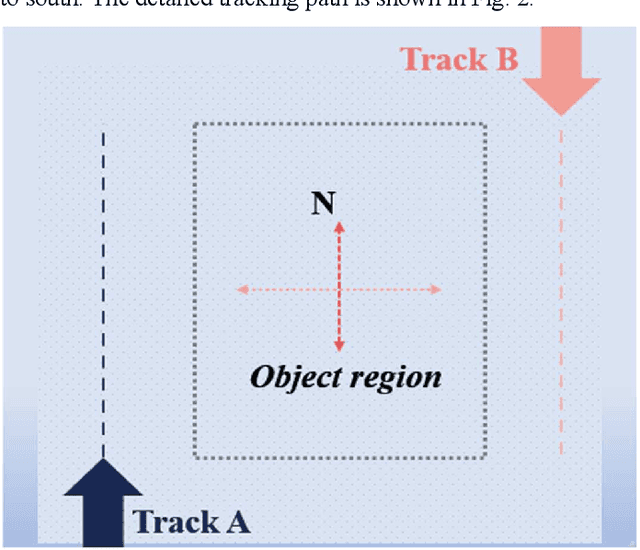

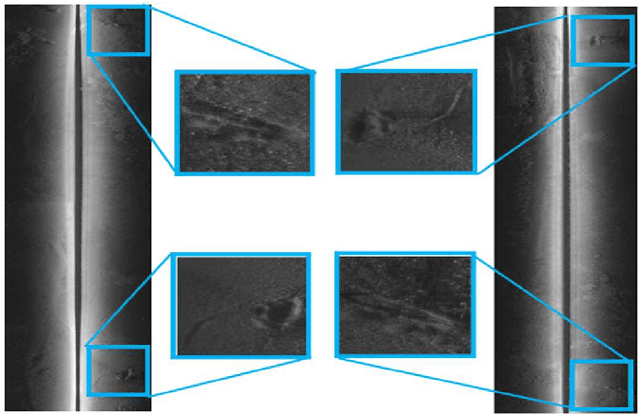

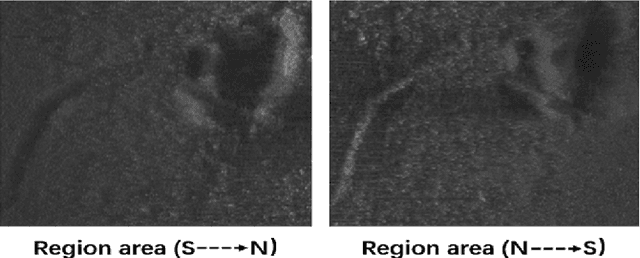

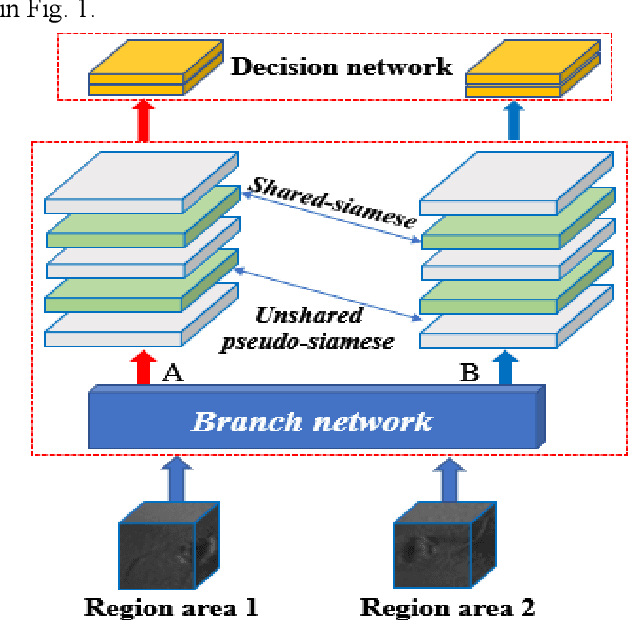

Abstract: In the field of deep-sea exploration, sonar is presently the only efficient long-distance sensing device. The complicated underwater environment, with factors such as noise interference, low target intensity, and background dynamics, has many negative effects on sonar imaging. Among them, the problem of nonlinear intensity is extremely prevalent. It is also known as the anisotropy of acoustic imaging: when AUVs carry sonar to detect the same target from different angles, the intensity difference between image pairs is sometimes very large, which makes traditional matching algorithms almost ineffective. However, image matching is the basis of comprehensive tasks such as navigation, positioning, and mapping, so obtaining robust and accurate matching results is very valuable. This paper proposes a combined matching method based on phase information and deep convolution features. It has two notable advantages: first, deep convolution features can be used to measure the similarity of the local and global positions of the sonar image; second, local feature matching can be performed at the key target position of the sonar image. The method does not need complex manual design and completes the matching task for nonlinear intensity sonar images in a nearly end-to-end manner. Feature matching experiments are carried out on deep-sea sonar images captured by AUVs, and the results show that the proposal has good matching accuracy and robustness.

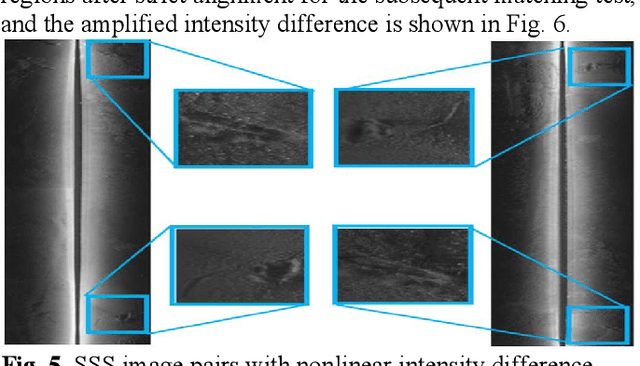

Nonlinear Intensity Underwater Sonar Image Matching Method Based on Phase Information and Deep Convolution Features

Nov 29, 2021

Abstract: In the field of deep-sea exploration, sonar is presently the only efficient long-distance sensing device. The complicated underwater environment, with factors such as noise interference, low target intensity, and background dynamics, has many negative effects on sonar imaging. Among them, the problem of nonlinear intensity is extremely prevalent. It is also known as the anisotropy of acoustic sensor imaging: when autonomous underwater vehicles (AUVs) carry sonar to detect the same target from different angles, the intensity variation between image pairs is sometimes very large, which makes traditional matching algorithms almost ineffective. However, image matching is the basis of comprehensive tasks such as navigation, positioning, and mapping, so obtaining robust and accurate matching results is very valuable. This paper proposes a combined matching method based on phase information and deep convolution features. It has two notable advantages: first, the deep convolution features can be used to measure the similarity of the local and global positions of the sonar image; second, local feature matching can be performed at the key target position of the sonar image. The method does not need complex manual design and completes the matching task for nonlinear intensity sonar images in a nearly end-to-end manner. Feature matching experiments are carried out on deep-sea sonar images captured by AUVs, and the results show that the proposal has excellent matching accuracy and robustness.

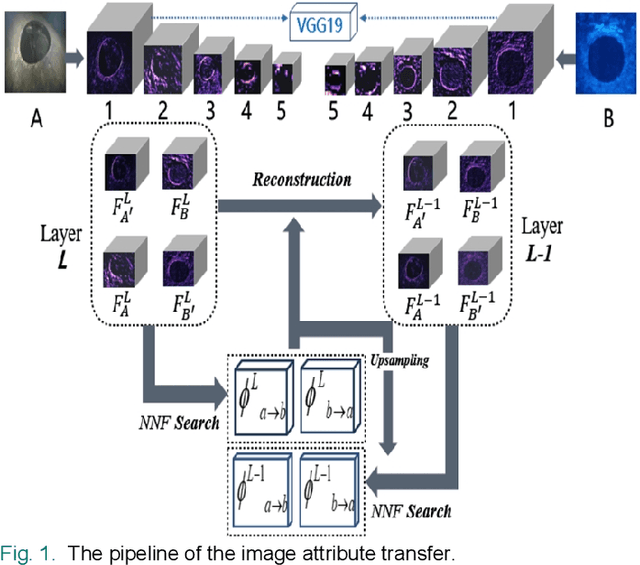

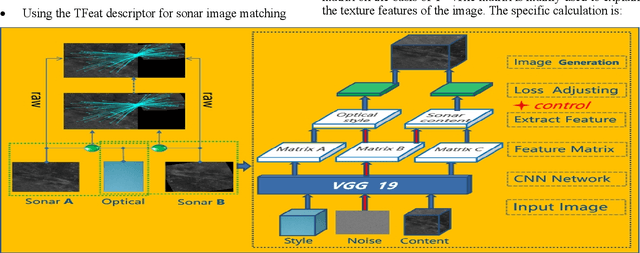

A Matching Algorithm based on Image Attribute Transfer and Local Features for Underwater Acoustic and Optical Images

Aug 27, 2021

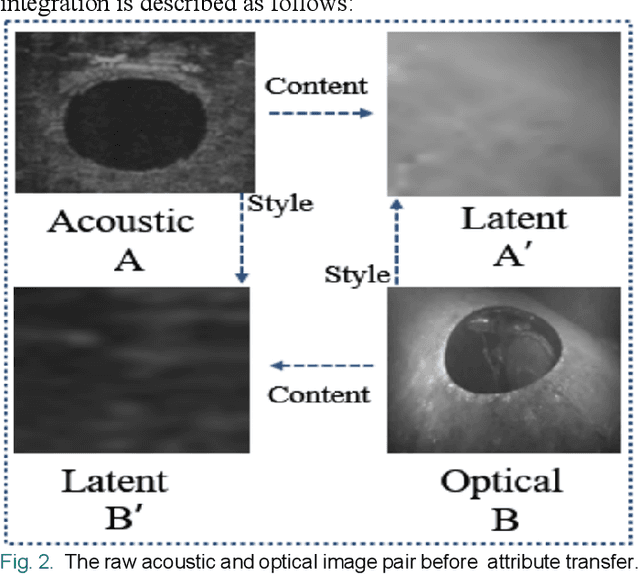

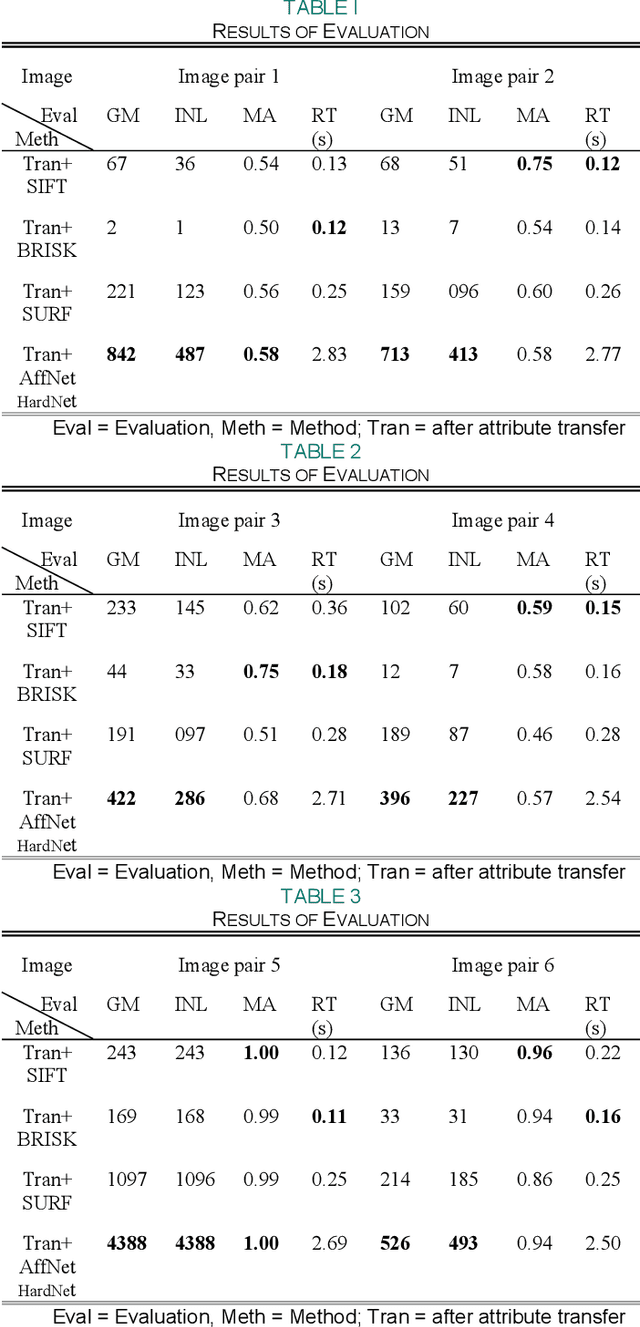

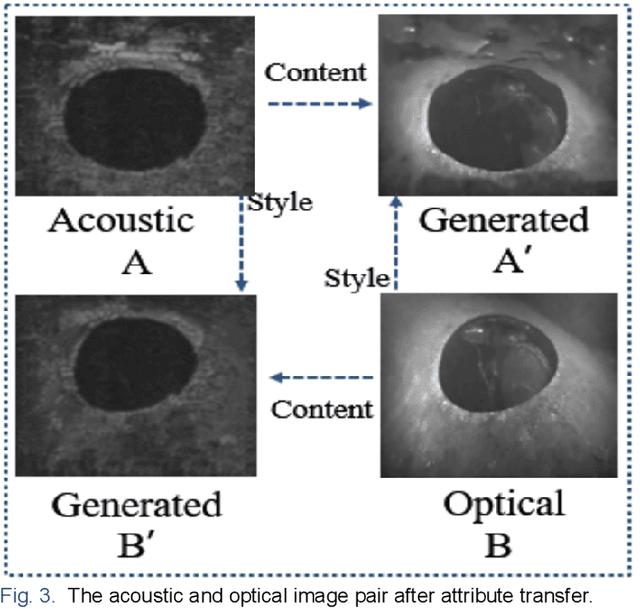

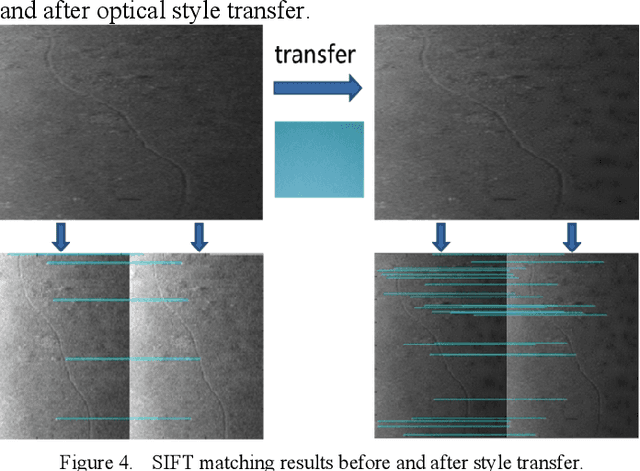

Abstract: In the field of underwater vision research, image matching between sonar sensors and optical cameras has always been a challenging problem. Because their imaging mechanisms differ, the gray values, texture, contrast, and other properties of acoustic and optical images also vary locally, which invalidates traditional matching methods designed for optical images. The difficulty and high cost of underwater data acquisition further slow research on acousto-optic data fusion. To maximize the use of underwater sensor data and promote the development of multi-sensor information fusion (MSIF), this study applies a deep learning-based image attribute transfer method to the problem of acousto-optic image matching, the core of which is to eliminate the imaging differences between the two modalities as much as possible. At the same time, an advanced local feature descriptor is introduced to solve the challenging acousto-optic matching problem. Experimental results show that the proposed method can preprocess acousto-optic images effectively and obtain accurate matching results. Additionally, the method is based on a combination of deep semantic image layers, and it can indirectly display the local feature matching relationship between the original image pair, which provides a new solution to the underwater multi-sensor image matching problem.

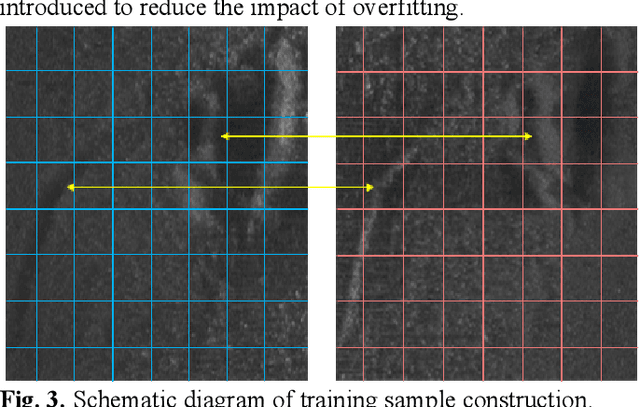

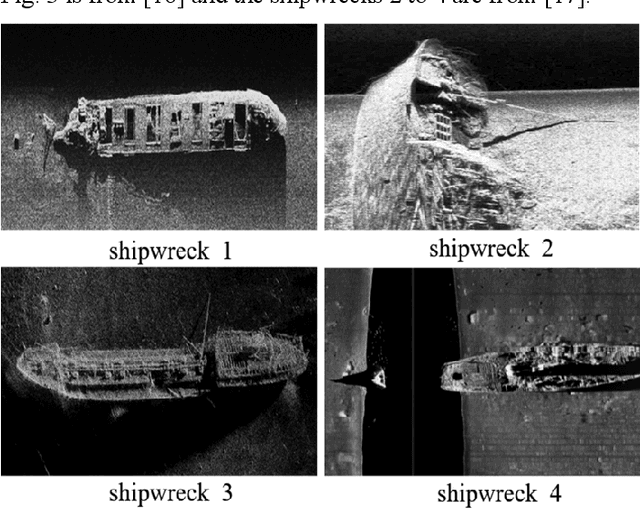

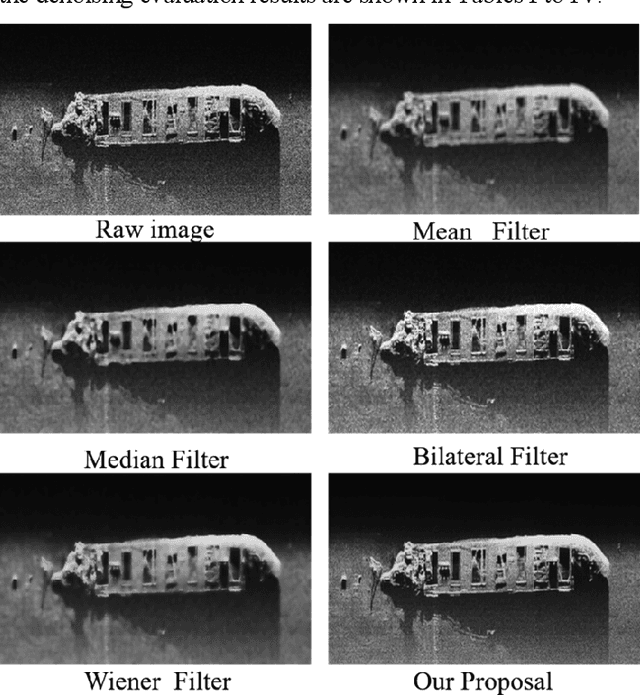

Deep Denoising Method for Side Scan Sonar Images without High-quality Reference Data

Aug 27, 2021

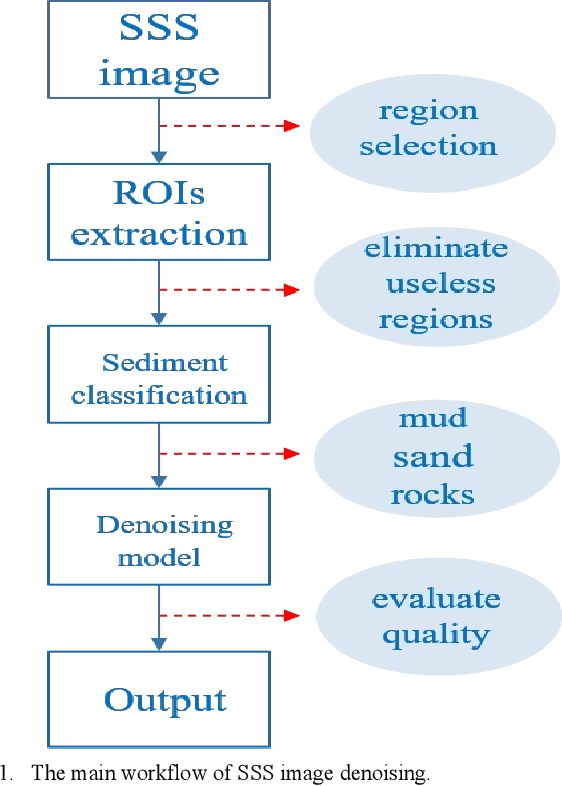

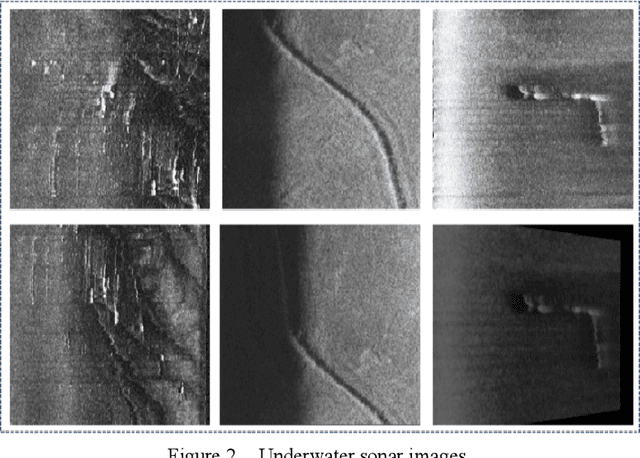

Abstract: Subsea images measured by side scan sonars (SSSs) are necessary visual data in deep-sea exploration with autonomous underwater vehicles (AUVs). They can vividly reflect the topography of the seabed but are usually accompanied by complex and severe noise. This paper proposes a deep denoising method for SSS images without high-quality reference data, which uses a single noisy SSS image to perform self-supervised denoising. Compared with classical hand-designed filters, the deep denoising method shows clear advantages. The denoising experiments are performed on real seabed SSS images, and the results demonstrate that the proposed method can effectively reduce the noise in SSS images while minimizing the loss of image quality and detail.

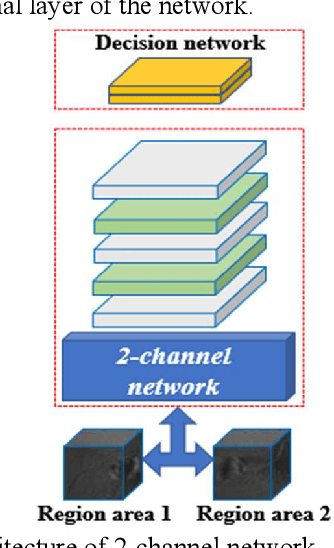

Matching Underwater Sonar Images by the Learned Descriptor Based on Style Transfer Method

Aug 27, 2021

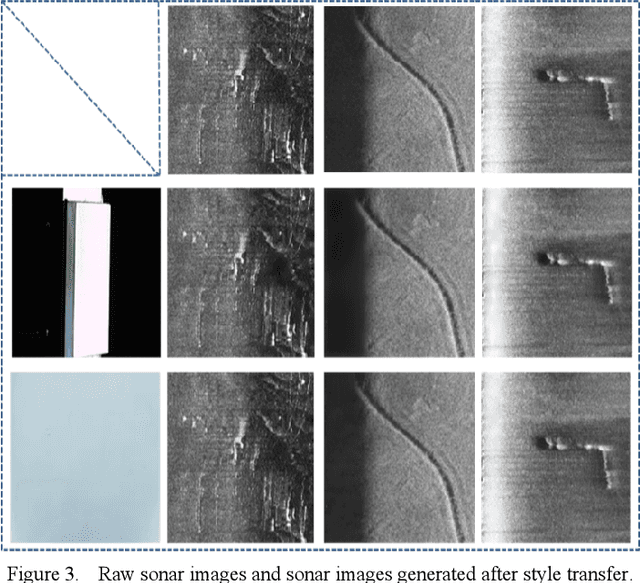

Abstract: This paper proposes a method that combines a style transfer technique and a learned descriptor to enhance the matching performance of underwater sonar images. In the field of underwater vision, sonar is currently the most effective long-distance detection sensor; it performs excellently in map building and target search tasks. However, traditional image matching algorithms were all developed for optical images. To resolve this mismatch, the style transfer method is used to convert sonar images into an optical style, and a learned descriptor with excellent expressiveness for sonar image matching is introduced. Experiments show that this method significantly enhances the matching quality of sonar images. In addition, it provides new ideas for preprocessing underwater sonar images using the style transfer approach.
