Xiaohong Huang

Edge-Enabled VIO with Long-Tracked Features for High-Accuracy Low-Altitude IoT Navigation

May 10, 2025

Abstract:This paper presents a visual-inertial odometry (VIO) method using long-tracked features. Long-tracked features can constrain more visual frames, reducing localization drift. However, they may also lead to accumulated matching errors and drift in feature tracking. Current VIO methods adjust observation weights based on re-projection errors, yet this approach has flaws. Re-projection errors depend on estimated camera poses and map points, so increased errors might come from estimation inaccuracies, not actual feature tracking errors. This can mislead the optimization process and make long-tracked features ineffective for suppressing localization drift. Furthermore, long-tracked features constrain a larger number of frames, which poses a significant challenge to the real-time performance of the system. To tackle these issues, we propose an active decoupling mechanism for accumulated errors in long-tracked feature utilization. We introduce a visual reference frame reset strategy to eliminate accumulated tracking errors and a depth prediction strategy to leverage the long-term constraint. To ensure real-time performance, we implement three strategies for efficient system state estimation: a parallel elimination strategy based on a predefined elimination order, an inverse-depth elimination simplification strategy, and an elimination skipping strategy. Experiments on various datasets show that our method offers higher positioning accuracy with relatively low time consumption, making it more suitable for edge-enabled low-altitude IoT navigation, where high-accuracy positioning and real-time operation on edge devices are required. The code will be published on GitHub.
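The abstract's point that re-projection errors depend on the estimated pose, not only on tracking quality, can be illustrated with a minimal sketch. It assumes a standard pinhole camera model and a world-to-camera transform `p_c = R p_w + t`; the function names and intrinsics (`fx`, `fy`, `cx`, `cy`) are hypothetical and are not taken from the paper's code.

```python
import math
from typing import Sequence, Tuple

Vec3 = Sequence[float]
Mat3 = Sequence[Sequence[float]]


def project(point_cam: Vec3, fx: float, fy: float, cx: float, cy: float) -> Tuple[float, float]:
    """Project a 3D point in the camera frame to pixel coordinates (pinhole model)."""
    x, y, z = point_cam
    if z <= 0:
        raise ValueError("point must lie in front of the camera (z > 0)")
    return (fx * x / z + cx, fy * y / z + cy)


def reprojection_error(R: Mat3, t: Vec3, point_world: Vec3,
                       observed_px: Tuple[float, float],
                       fx: float, fy: float, cx: float, cy: float) -> float:
    """Pixel distance between an observation and the projected map point.

    Assumes the pose maps world to camera coordinates: p_c = R @ p_w + t.
    """
    p_c = [sum(R[i][j] * point_world[j] for j in range(3)) + t[i] for i in range(3)]
    u, v = project(p_c, fx, fy, cx, cy)
    return math.hypot(u - observed_px[0], v - observed_px[1])


# Illustrative values only: a perfectly tracked feature still shows a nonzero
# re-projection error once the estimated pose is slightly wrong.
IDENTITY = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
landmark = (0.0, 0.0, 2.0)
observation = (320.0, 240.0)  # exact projection under the true pose

err_true_pose = reprojection_error(IDENTITY, (0.0, 0.0, 0.0), landmark, observation,
                                   100.0, 100.0, 320.0, 240.0)
err_bad_pose = reprojection_error(IDENTITY, (0.1, 0.0, 0.0), landmark, observation,
                                  100.0, 100.0, 320.0, 240.0)
print(err_true_pose, err_bad_pose)
```

In this toy setup the observation is exact, so any error under the perturbed pose comes purely from the pose estimate. Down-weighting the feature on that basis would penalize a correctly tracked point, which is the failure mode the abstract describes.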

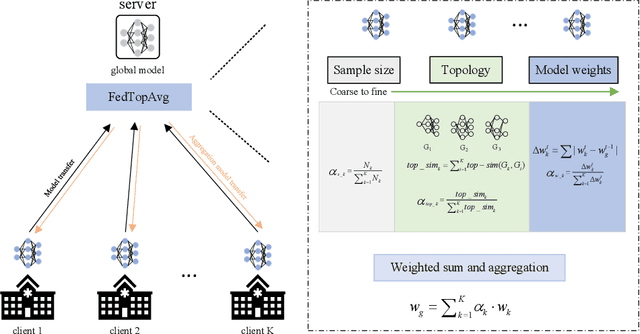

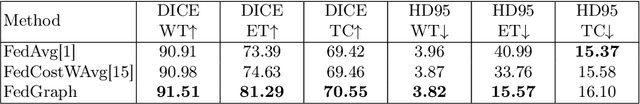

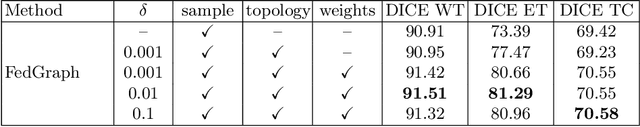

FedGraph: an Aggregation Method from Graph Perspective

Oct 06, 2022

Abstract:With increasingly strict data privacy regulations and the difficulty of centralizing data, Federated Learning (FL) has become an effective solution for collaboratively training a model while preserving each client's privacy. FedAvg is a standard aggregation algorithm that uses each client's share of the total dataset size as its aggregation weight. However, it cannot handle non-independent and identically distributed (non-i.i.d.) data well because its aggregation weights are fixed and it neglects the data distribution. In this paper, we propose an aggregation strategy that can effectively deal with non-i.i.d. datasets, namely FedGraph, which adjusts the aggregation weights adaptively according to the training condition of the local models throughout the training process. FedGraph takes three factors into account, from coarse to fine: the proportion of each local dataset size, the topology factor of model graphs, and the model weights. We calculate the gravitational force between local models by transforming the local models into topology graphs. FedGraph can better explore the internal correlation between local models through the weighted combination of the proportion of each local dataset, the topology structure, and the model weights. The proposed FedGraph has been applied to the MICCAI Federated Tumor Segmentation Challenge 2021 (FeTS) datasets, and the validation results show that our method surpasses the previous state-of-the-art by 2.76 mean Dice Similarity Score. The source code will be available on GitHub.
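For context, the FedAvg baseline that the abstract contrasts against can be sketched as a dataset-size-weighted average of client parameters. This is a minimal illustration of that baseline only, not of FedGraph; the function name `fedavg` and the flat-list representation of model parameters are assumptions made for the example.

```python
from typing import Dict, List

Params = Dict[str, List[float]]


def fedavg(client_params: List[Params], client_sizes: List[int]) -> Params:
    """Average client parameters, weighting each client by its dataset size."""
    if not client_params or len(client_params) != len(client_sizes):
        raise ValueError("need one dataset size per client")
    total = sum(client_sizes)
    if total <= 0:
        raise ValueError("total dataset size must be positive")

    # Fixed aggregation weights: each client's share of the total data.
    coeffs = [n / total for n in client_sizes]

    aggregated: Params = {}
    for name, reference in client_params[0].items():
        aggregated[name] = [
            sum(c * params[name][i] for c, params in zip(coeffs, client_params))
            for i in range(len(reference))
        ]
    return aggregated


# Illustrative values only: two clients holding 1 and 3 samples.
clients = [{"w": [0.0, 4.0]}, {"w": [4.0, 0.0]}]
global_params = fedavg(clients, [1, 3])
print(global_params)
```

The coefficients depend only on dataset sizes and never change during training, which is the fixed-weight behavior the abstract identifies as a weakness on non-i.i.d. data.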

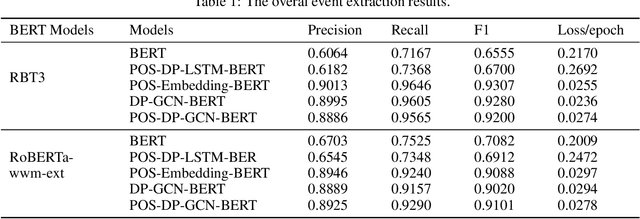

Syntactic-GCN Bert based Chinese Event Extraction

Dec 18, 2021

Abstract:With the rapid development of information technology, online platforms (e.g., news portals and social media) generate enormous amounts of web information every moment. Therefore, it is crucial to extract structured representations of events from social streams. Generally, existing event extraction research utilizes pattern matching, machine learning, or deep learning methods to perform event extraction tasks. However, the performance of Chinese event extraction is not as good as that of English due to the unique characteristics of the Chinese language. In this paper, we propose an integrated framework to perform Chinese event extraction. The proposed approach is a multi-channel input neural framework that integrates semantic features and syntactic features. The semantic features are captured by a BERT architecture. The Part of Speech (POS) features and Dependency Parsing (DP) features are captured by profiling embeddings and a Graph Convolutional Network (GCN), respectively. We also evaluate our model on a real-world dataset. Experimental results show that the proposed method outperforms the benchmark approaches significantly.
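To make the GCN component concrete, here is a minimal sketch of one graph convolution over a dependency graph. The abstract does not specify the layer used, so this assumes the widely used propagation rule with self-loops and symmetric normalization, `H' = ReLU(D^{-1/2} (A + I) D^{-1/2} H W)`; the function names and the toy graph are hypothetical.

```python
import math
from typing import List

Matrix = List[List[float]]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    """Plain dense matrix product."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
            for i in range(len(a))]


def gcn_layer(adj: Matrix, feats: Matrix, weight: Matrix) -> Matrix:
    """One graph convolution: ReLU(D^-1/2 (A + I) D^-1/2 H W)."""
    n = len(adj)
    # Add self-loops so each token keeps its own features.
    a_hat = [[adj[i][j] + (1.0 if i == j else 0.0) for j in range(n)] for i in range(n)]
    deg = [sum(row) for row in a_hat]
    # Symmetric degree normalization.
    norm = [[a_hat[i][j] / math.sqrt(deg[i] * deg[j]) for j in range(n)] for i in range(n)]
    hidden = matmul(matmul(norm, feats), weight)
    return [[max(0.0, x) for x in row] for row in hidden]


# Illustrative values only: two tokens joined by one dependency arc,
# each with a one-dimensional feature.
adjacency = [[0.0, 1.0], [1.0, 0.0]]
token_feats = [[1.0], [3.0]]
layer_out = gcn_layer(adjacency, token_feats, [[1.0]])
print(layer_out)
```

Each token's output mixes its own features with those of its syntactic neighbors, which is how dependency structure can be injected alongside the BERT-derived semantic features.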

Forecasting Crude Oil Price Using Event Extraction

Nov 14, 2021

Abstract:Research on crude oil price forecasting has attracted tremendous attention from scholars and policymakers due to its significant effect on the global economy. Besides supply and demand, crude oil prices are largely influenced by various factors, such as economic development, financial markets, conflicts, wars, and political events. Most previous research treats crude oil price forecasting as a time series or econometric variable prediction problem. Although some recent studies consider the effects of real-time news events, most of them use raw news headlines or topic models to extract text features without exploring the event information in depth. In this study, a novel crude oil price forecasting framework, AGESL, is proposed to deal with this problem. In our approach, an open-domain event extraction algorithm is utilized to extract underlying related events, and a text sentiment analysis algorithm is used to extract sentiment from massive news. Then a deep neural network integrating the news event features, sentiment features, and historical price features is built to predict future crude oil prices. Empirical experiments are performed on West Texas Intermediate (WTI) crude oil price data, and the results show that our approach obtains superior performance compared with several benchmark methods.

* 14 pages, 5 figures, 5 tables

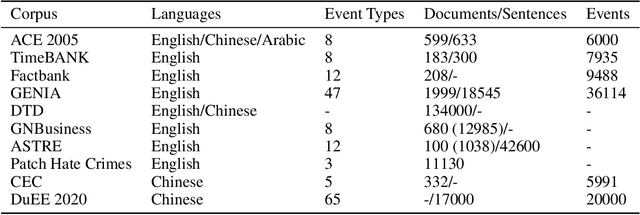

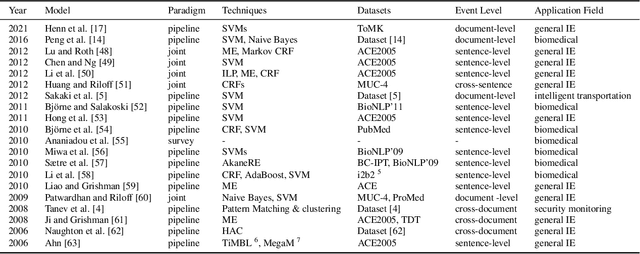

An overview of event extraction and its applications

Nov 05, 2021

Abstract:With the rapid development of information technology, online platforms have produced enormous text resources. As a particular form of Information Extraction (IE), Event Extraction (EE) has gained increasing popularity due to its ability to automatically extract events from human language. However, there are few literature surveys on event extraction. Existing review works either spend much effort describing the details of various approaches or focus on a particular field. This study provides a comprehensive overview of state-of-the-art methods for event extraction from text and their applications, including closed-domain and open-domain event extraction. A distinguishing trait of this survey is that it provides an overview of moderate complexity and avoids going into too many details of particular approaches. This study focuses on discussing the common characteristics, application fields, advantages, and disadvantages of representative works, ignoring the specificities of individual approaches. Finally, we summarize the common issues, current solutions, and future research directions. We hope this work can help researchers and practitioners obtain a quick overview of recent event extraction research.

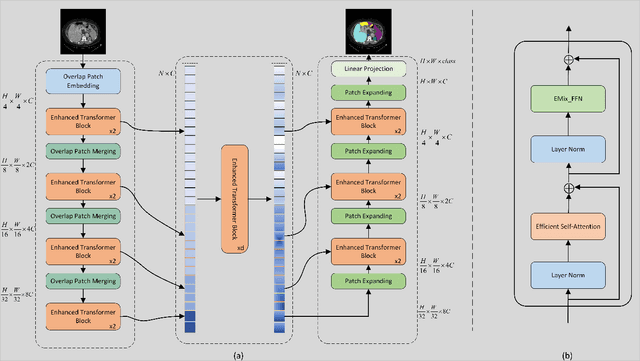

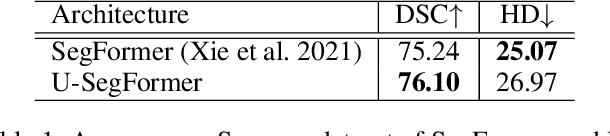

MISSFormer: An Effective Medical Image Segmentation Transformer

Sep 15, 2021

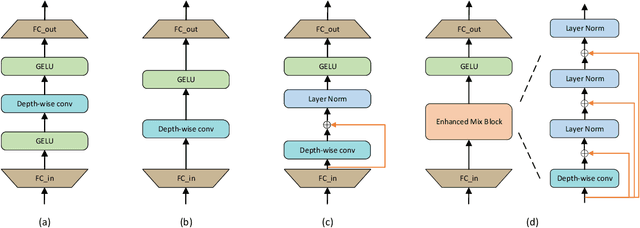

Abstract:CNN-based methods have achieved impressive results in medical image segmentation, but they fail to capture long-range dependencies because of the inherent locality of the convolution operation. Transformer-based methods have recently become popular in vision tasks because they can model long-range dependencies and achieve promising performance. However, they are weak at modeling local context. Some works embed convolutional layers to overcome this problem and achieve some improvement, but doing so makes the features inconsistent and fails to leverage the natural multi-scale features of hierarchical transformers, which limits model performance. In this paper, taking medical image segmentation as an example, we present MISSFormer, an effective and powerful Medical Image Segmentation tranSFormer. MISSFormer is a hierarchical encoder-decoder network with two appealing designs: 1) a feed-forward network is redesigned with the proposed Enhanced Transformer Block, which aligns features adaptively and enhances both long-range dependencies and local context; 2) we propose the Enhanced Transformer Context Bridge, a context bridge built on the enhanced transformer block, to model the long-range dependencies and local context of the multi-scale features generated by our hierarchical transformer encoder. Driven by these two designs, MISSFormer shows a strong capacity to capture more valuable dependencies and context in medical image segmentation. Experiments on multi-organ and cardiac segmentation tasks demonstrate the superiority, effectiveness, and robustness of MISSFormer. The experimental results of MISSFormer trained from scratch even outperform state-of-the-art methods pretrained on ImageNet, and the core designs can be generalized to other visual segmentation tasks. The code will be released on GitHub.

Low-latency Federated Learning and Blockchain for Edge Association in Digital Twin empowered 6G Networks

Nov 17, 2020

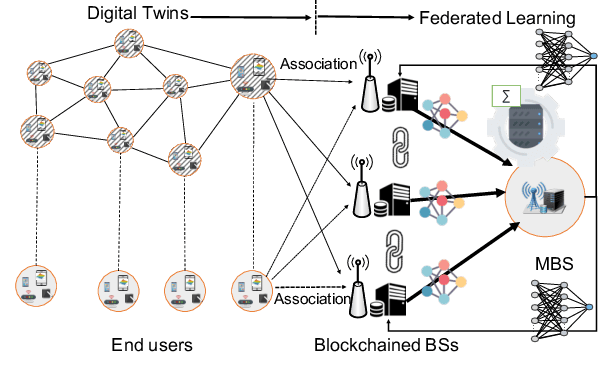

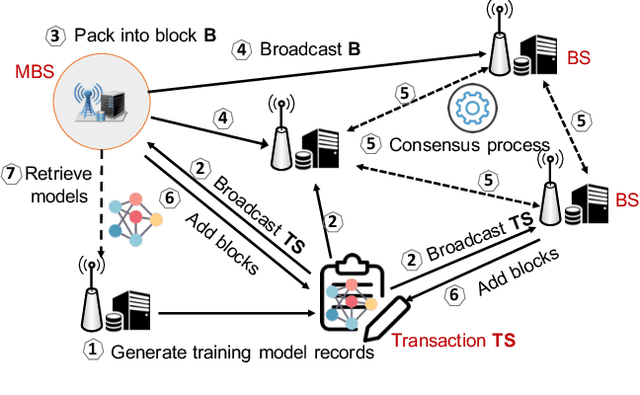

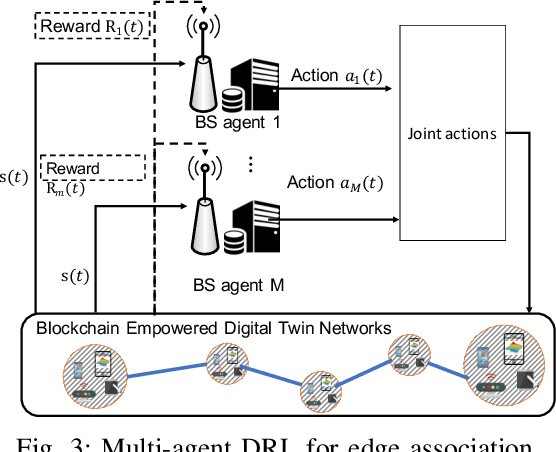

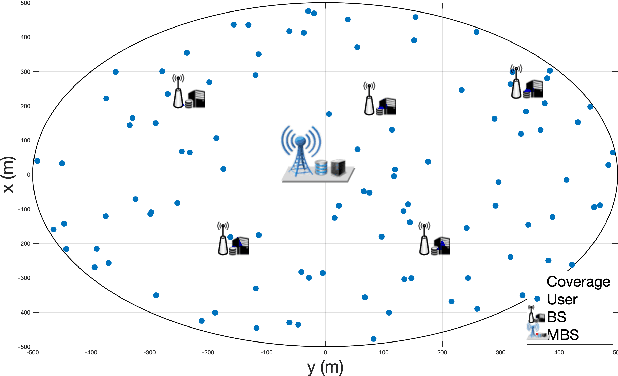

Abstract:Emerging technologies such as digital twins and 6th Generation mobile networks (6G) have accelerated the realization of edge intelligence in the Industrial Internet of Things (IIoT). The integration of digital twins and 6G bridges the physical system with the digital space and enables robust instant wireless connectivity. With increasing concerns about data privacy, federated learning has been regarded as a promising solution for deploying distributed data processing and learning in wireless networks. However, unreliable communication channels, limited resources, and a lack of trust among users hinder the effective application of federated learning in IIoT. In this paper, we introduce Digital Twin Wireless Networks (DTWN), which incorporate digital twins into wireless networks to migrate real-time data processing and computation to the edge plane. Then, we propose a blockchain-empowered federated learning framework running in the DTWN for collaborative computing, which improves the reliability and security of the system and enhances data privacy. Moreover, to balance the learning accuracy and time cost of the proposed scheme, we formulate an optimization problem for edge association by jointly considering digital twin association, training data batch size, and bandwidth allocation. We exploit multi-agent reinforcement learning to find an optimal solution to the problem. Numerical results on a real-world dataset show that the proposed scheme yields improved efficiency and reduced cost compared to benchmark learning methods.
