Wuxuan Jiang

Spin: An Efficient Secure Computation Framework with GPU Acceleration

Feb 04, 2024

Abstract:Accuracy and efficiency remain challenges for multi-party computation (MPC) frameworks. Spin is a GPU-accelerated MPC framework that supports multiple computation parties and a dishonest majority adversarial setup. We propose optimized protocols for non-linear functions that are critical for machine learning, as well as several novel optimizations specific to attention that is the fundamental unit of Transformer models, allowing Spin to perform non-trivial CNNs training and Transformer inference without sacrificing security. At the backend level, Spin leverages GPU, CPU, and RDMA-enabled smart network cards for acceleration. Comprehensive evaluations demonstrate that Spin can be up to $2\times$ faster than the state-of-the-art for deep neural network training. For inference on a Transformer model with 18.9 million parameters, our attention-specific optimizations enable Spin to achieve better efficiency, less communication, and better accuracy.

Wishart Mechanism for Differentially Private Principal Components Analysis

Nov 19, 2015

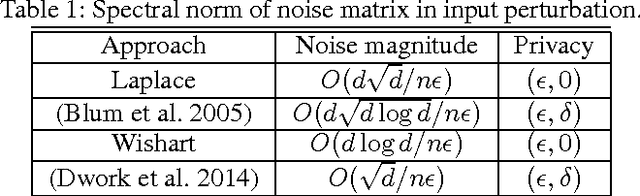

Abstract:We propose a new input perturbation mechanism for publishing a covariance matrix to achieve $(\epsilon,0)$-differential privacy. Our mechanism uses a Wishart distribution to generate matrix noise. In particular, We apply this mechanism to principal component analysis. Our mechanism is able to keep the positive semi-definiteness of the published covariance matrix. Thus, our approach gives rise to a general publishing framework for input perturbation of a symmetric positive semidefinite matrix. Moreover, compared with the classic Laplace mechanism, our method has better utility guarantee. To the best of our knowledge, Wishart mechanism is the best input perturbation approach for $(\epsilon,0)$-differentially private PCA. We also compare our work with previous exponential mechanism algorithms in the literature and provide near optimal bound while having more flexibility and less computational intractability.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge