William McNally

Ice hockey player identification via transformers

Nov 22, 2021

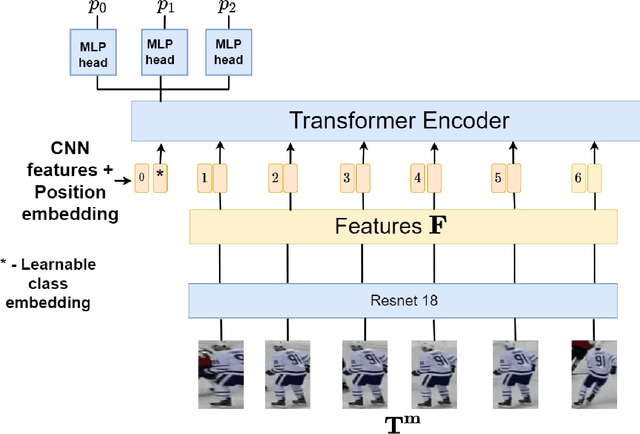

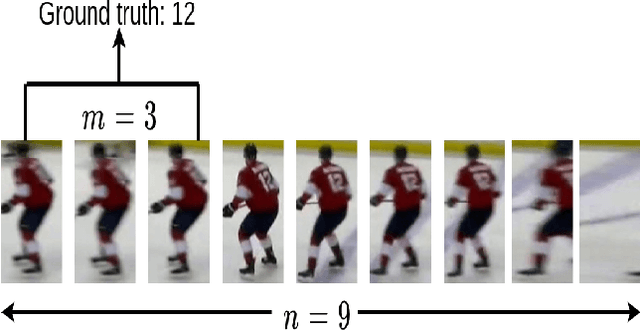

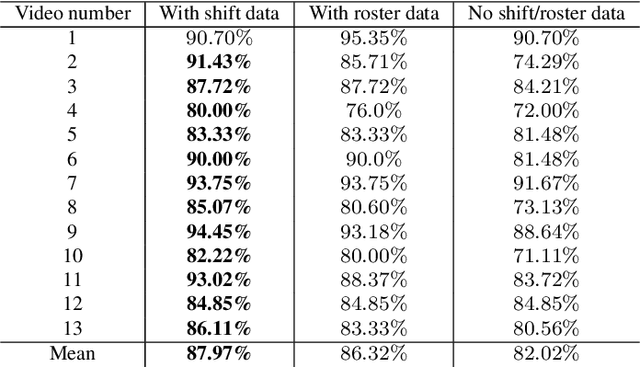

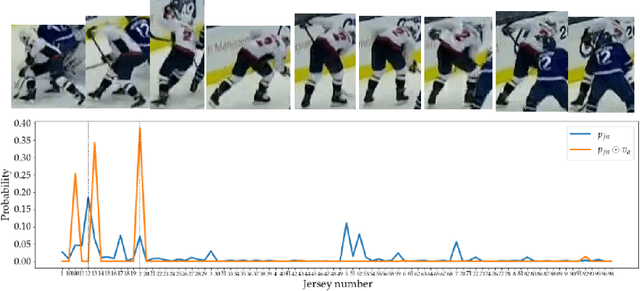

Abstract: Identifying players in video is a foundational step in computer vision-based sports analytics. Obtaining player identities is essential for analyzing the game and is used in downstream tasks such as game event recognition. Transformers are the existing standard in Natural Language Processing (NLP) and are swiftly gaining traction in computer vision. Motivated by the increasing success of transformers in computer vision, in this paper, we introduce a transformer network for recognizing players through their jersey numbers in broadcast National Hockey League (NHL) videos. The transformer takes temporal sequences of player frames (also called player tracklets) as input and outputs the probabilities of jersey numbers present in the frames. The proposed network performs better than the previous benchmark on the dataset used. We implement a weakly-supervised training approach by generating approximate frame-level labels for jersey number presence and use the frame-level labels for faster training. We also utilize player shifts available in the NHL play-by-play data by reading the game time using optical character recognition (OCR) to get the players on the ice rink at a certain game time. Using player shifts improved the player identification accuracy by 6%.
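The abstract does not say how the shift data is combined with the network output. One plausible reading, sketched here purely as an assumption, is to discard jersey numbers that are not on the ice at the OCR-read game time and renormalize what remains. The function name `filter_by_shift` and the example values are hypothetical.

```python
def filter_by_shift(probs, on_ice):
    """Keep only jersey numbers that are on the ice, then renormalize.

    probs:  dict mapping jersey number -> predicted probability (assumed format)
    on_ice: set of jersey numbers on the ice at the current game time
    """
    masked = {num: p for num, p in probs.items() if num in on_ice}
    total = sum(masked.values())
    if total == 0:
        # No predicted number is on the ice: fall back to the raw prediction.
        return dict(probs)
    return {num: p / total for num, p in masked.items()}


# Hypothetical tracklet prediction and shift roster.
probs = {8: 0.40, 88: 0.35, 3: 0.25}
on_ice = {88, 3, 19}

filtered = filter_by_shift(probs, on_ice)
best = max(filtered, key=filtered.get)
print(best, round(filtered[best], 3))
```

In this toy case the raw prediction favours number 8, but 8 is not on the ice, so the filtered prediction switches to 88.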

Rethinking Keypoint Representations: Modeling Keypoints and Poses as Objects for Multi-Person Human Pose Estimation

Nov 17, 2021

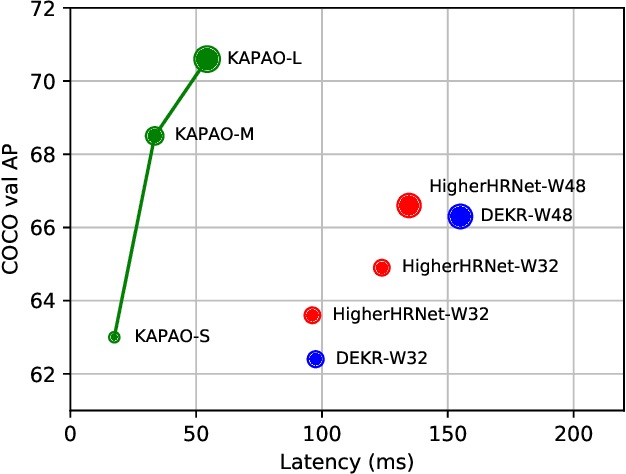

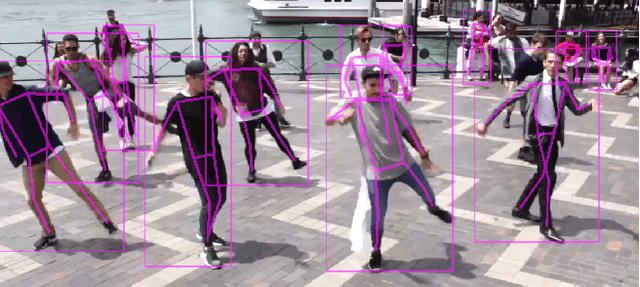

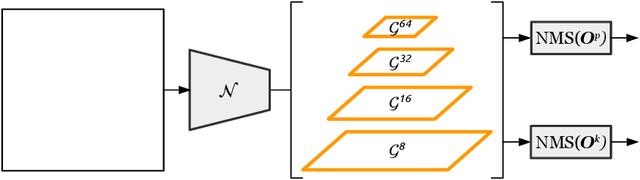

Abstract: In keypoint estimation tasks such as human pose estimation, heatmap-based regression is the dominant approach despite possessing notable drawbacks: heatmaps intrinsically suffer from quantization error and require excessive computation to generate and post-process. Motivated to find a more efficient solution, we propose a new heatmap-free keypoint estimation method in which individual keypoints and sets of spatially related keypoints (i.e., poses) are modeled as objects within a dense single-stage anchor-based detection framework. Hence, we call our method KAPAO (pronounced "Ka-Pow!") for Keypoints And Poses As Objects. We apply KAPAO to the problem of single-stage multi-person human pose estimation by simultaneously detecting human pose objects and keypoint objects and fusing the detections to exploit the strengths of both object representations. In experiments, we observe that KAPAO is significantly faster and more accurate than previous methods, which suffer greatly from heatmap post-processing. Moreover, the accuracy-speed trade-off is especially favourable in the practical setting when not using test-time augmentation. Our large model, KAPAO-L, achieves an AP of 70.6 on the Microsoft COCO Keypoints validation set without test-time augmentation while being 2.5x faster than the next best single-stage model, whose accuracy is 4.0 AP less. Furthermore, KAPAO excels in the presence of heavy occlusion. On the CrowdPose test set, KAPAO-L achieves new state-of-the-art accuracy for a single-stage method with an AP of 68.9.
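The quantization error mentioned in the abstract can be illustrated with a small sketch. It assumes a heatmap whose cells are `stride` pixels apart and a decoder that simply takes the nearest cell; the function name and the numbers are illustrative and not taken from the paper.

```python
def heatmap_quantization_error(x, stride):
    """Error (in pixels) from snapping coordinate x to the nearest heatmap cell."""
    cell = round(x / stride)      # index of the nearest heatmap cell
    decoded = cell * stride       # coordinate recovered from that cell
    return abs(decoded - x)


# A keypoint at x = 101.3 px on a heatmap with a stride of 4 px.
err = heatmap_quantization_error(101.3, 4)
print(f"{err:.2f} px")
```

Under these assumptions the error can reach half the stride, which is why heatmap methods typically rely on high-resolution heatmaps or sub-pixel refinement in post-processing.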

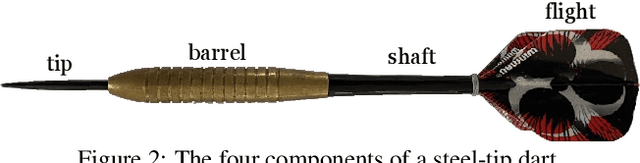

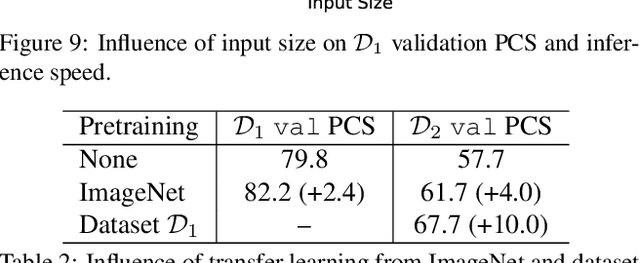

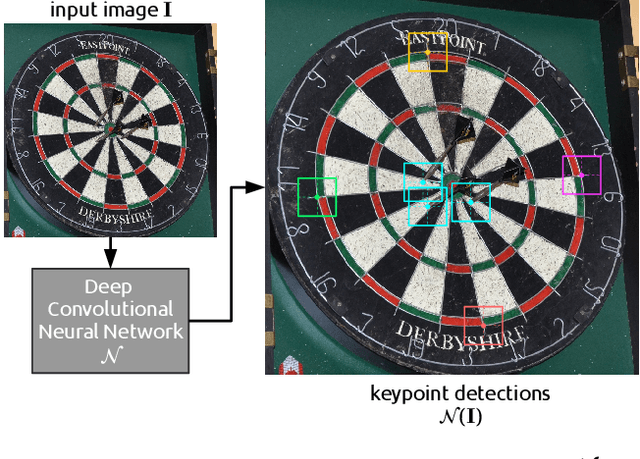

DeepDarts: Modeling Keypoints as Objects for Automatic Scorekeeping in Darts using a Single Camera

May 20, 2021

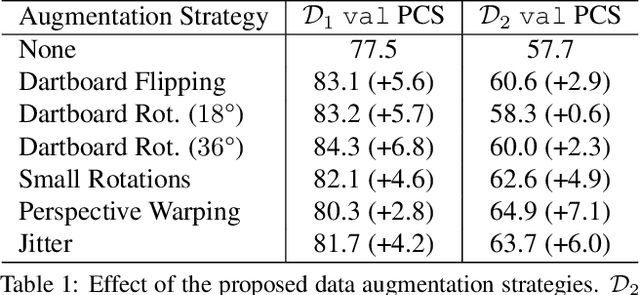

Abstract: Existing multi-camera solutions for automatic scorekeeping in steel-tip darts are very expensive and thus inaccessible to most players. Motivated to develop a more accessible low-cost solution, we present a new approach to keypoint detection and apply it to predict dart scores from a single image taken from any camera angle. This problem involves detecting multiple keypoints that may be of the same class and positioned in close proximity to one another. The widely adopted framework for regressing keypoints using heatmaps is not well-suited for this task. To address this issue, we instead propose to model keypoints as objects. We develop a deep convolutional neural network around this idea and use it to predict dart locations and dartboard calibration points within an overall pipeline for automatic dart scoring, which we call DeepDarts. Additionally, we propose several task-specific data augmentation strategies to improve the generalization of our method. As a proof of concept, two datasets comprising 16k images originating from two different dartboard setups were manually collected and annotated to evaluate the system. In the primary dataset containing 15k images captured from a face-on view of the dartboard using a smartphone, DeepDarts predicted the total score correctly in 94.7% of the test images. In a second more challenging dataset containing limited training data (830 images) and various camera angles, we utilize transfer learning and extensive data augmentation to achieve a test accuracy of 84.0%. Because DeepDarts relies only on single images, it has the potential to be deployed on edge devices, giving anyone with a smartphone access to an automatic dart scoring system for steel-tip darts. The code and datasets are available.
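Once dart locations have been mapped into the dartboard plane using the calibration points, scoring reduces to a geometric lookup. The sketch below is not the authors' implementation. It assumes coordinates in millimetres relative to the board centre, with y pointing up towards the 20 segment, and uses nominal ring radii for a standard steel-tip board.

```python
import math

# Segment values clockwise, starting from the top of the board.
SECTORS = [20, 1, 18, 4, 13, 6, 10, 15, 2, 17, 3, 19, 7, 16, 8, 11, 14, 9, 12, 5]

# Nominal radii in millimetres (assumed standard board dimensions).
BULL, OUTER_BULL = 6.35, 15.9
TREBLE_IN, TREBLE_OUT = 99.0, 107.0
DOUBLE_IN, DOUBLE_OUT = 162.0, 170.0


def dart_score(x_mm, y_mm):
    """Score of a single dart at (x_mm, y_mm) in board coordinates."""
    r = math.hypot(x_mm, y_mm)
    if r <= BULL:
        return 50
    if r <= OUTER_BULL:
        return 25
    if r > DOUBLE_OUT:
        return 0  # outside the scoring area
    # Angle measured clockwise from the top; each segment spans 18 degrees
    # and the 20 segment is centred on 0 degrees.
    angle = math.degrees(math.atan2(x_mm, y_mm)) % 360
    sector = SECTORS[int(((angle + 9) % 360) // 18)]
    if TREBLE_IN <= r <= TREBLE_OUT:
        return 3 * sector
    if DOUBLE_IN <= r <= DOUBLE_OUT:
        return 2 * sector
    return sector


# Hypothetical dart positions: treble 20, double 6, single 3.
darts = [(0.0, 103.0), (166.0, 0.0), (0.0, -50.0)]
total = sum(dart_score(x, y) for x, y in darts)
print(total)
```

The total score for an image is the sum over the detected darts, so a single misplaced detection near a ring boundary is enough to change the result.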

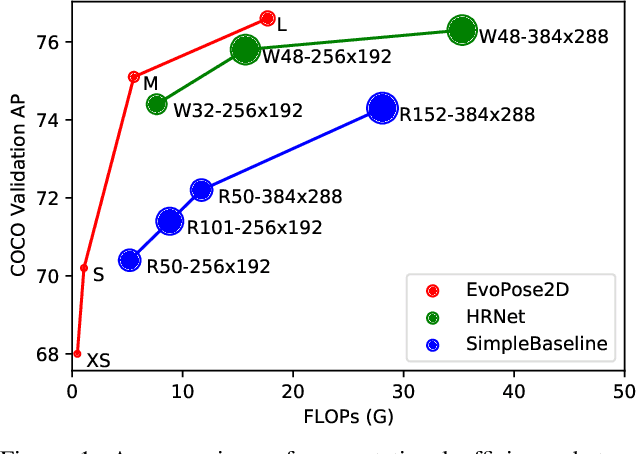

EvoPose2D: Pushing the Boundaries of 2D Human Pose Estimation using Neuroevolution

Nov 17, 2020

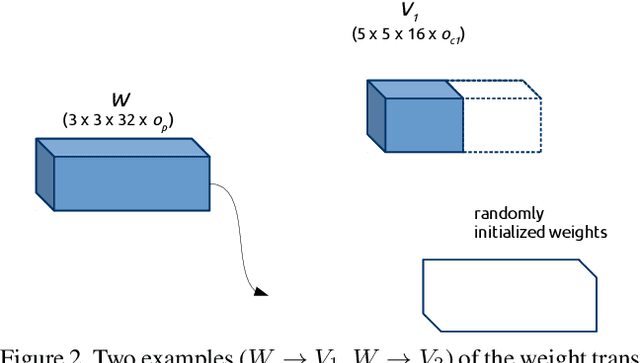

Abstract: Neural architecture search has proven to be highly effective in the design of computationally efficient, task-specific convolutional neural networks across several areas of computer vision. In 2D human pose estimation, however, its application has been limited by high computational demands. Hypothesizing that neural architecture search holds great potential for 2D human pose estimation, we propose a new weight transfer scheme that relaxes function-preserving mutations, enabling us to accelerate neuroevolution in a flexible manner. Our method produces 2D human pose network designs that are more efficient and more accurate than state-of-the-art hand-designed networks. In fact, the generated networks can process images at higher resolutions using less computation than previous networks at lower resolutions, permitting us to push the boundaries of 2D human pose estimation. Our baseline network designed using neuroevolution, which we refer to as EvoPose2D-S, provides comparable accuracy to SimpleBaseline while using 4.9x fewer floating-point operations and 13.5x fewer parameters. Our largest network, EvoPose2D-L, achieves new state-of-the-art accuracy on the Microsoft COCO Keypoints benchmark while using 2.0x fewer operations and 4.3x fewer parameters than its nearest competitor.

PuckNet: Estimating hockey puck location from broadcast video

Dec 11, 2019

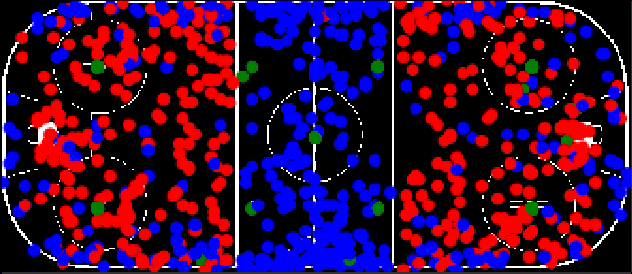

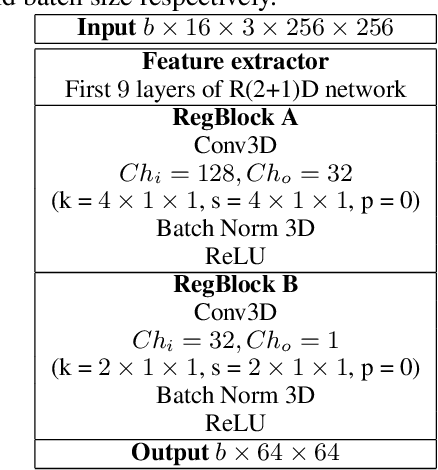

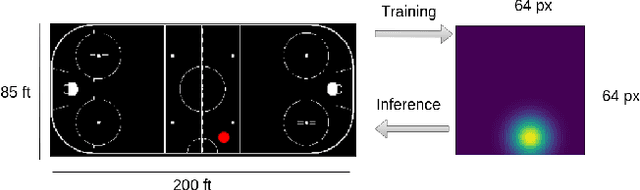

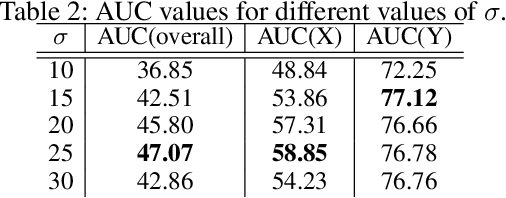

Abstract: Puck location in ice hockey is essential for hockey analysts for determining the location of play and analyzing game events. However, because of the difficulty involved in obtaining accurate annotations due to the extremely low visibility and commonly occurring occlusions of the puck, the problem is very challenging. The problem becomes even more challenging in broadcast videos with changing camera angles. We introduce a novel methodology for determining puck location from approximate puck location annotations in broadcast video. Our method uniquely leverages the existing puck location information that is publicly available in existing hockey event data and uses the corresponding one-second broadcast video clips as input to the network. The rationale behind using video as input instead of static images is that with video, the temporal information can be utilized to handle puck occlusions. The network outputs a heatmap representing the probability of the puck location using a 3D CNN based architecture. The network is able to regress the puck location from broadcast hockey video clips with varying camera angles. Experimental results demonstrate the capability of the method, achieving 47.07% AUC on the test dataset. The network is also able to estimate the puck location in defensive/offensive zones with an accuracy of greater than 80%.
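A heatmap output of this kind is usually turned into a single location by taking its highest-probability cell. The abstract does not describe the decoding step, so the sketch below is only an assumed, minimal version operating on a plain nested list; the function name and the heatmap values are made up.

```python
def decode_heatmap(heatmap):
    """Return (row, col) of the highest-valued cell in a 2D heatmap."""
    best_value, best_cell = float("-inf"), None
    for r, row in enumerate(heatmap):
        for c, value in enumerate(row):
            if value > best_value:
                best_value, best_cell = value, (r, c)
    return best_cell


# Hypothetical 3x4 heatmap over a coarse rink grid.
heatmap = [
    [0.01, 0.02, 0.05, 0.01],
    [0.03, 0.10, 0.60, 0.04],
    [0.02, 0.05, 0.06, 0.01],
]
location = decode_heatmap(heatmap)
print(location)
```

Mapping the resulting cell to rink coordinates, or to a defensive or offensive zone, would additionally require the grid-to-rink correspondence, which is not given here.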

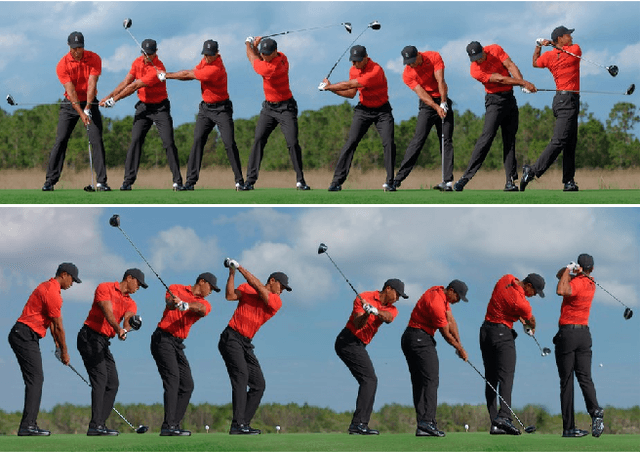

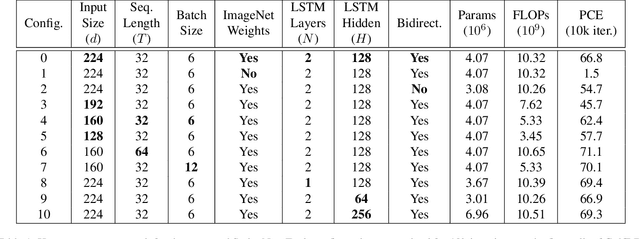

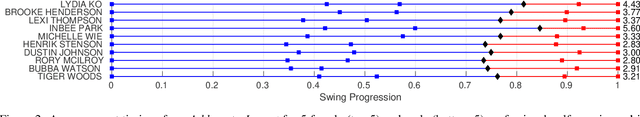

GolfDB: A Video Database for Golf Swing Sequencing

Mar 15, 2019

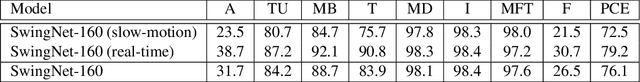

Abstract: The golf swing is a complex movement requiring considerable full-body coordination to execute proficiently. As such, it is the subject of frequent scrutiny and extensive biomechanical analyses. In this paper, we introduce the notion of golf swing sequencing for detecting key events in the golf swing and facilitating golf swing analysis. To enable consistent evaluation of golf swing sequencing performance, we also introduce the benchmark database GolfDB, consisting of 1400 high-quality golf swing videos, each labeled with event frames, bounding box, player name and sex, club type, and view type. Furthermore, to act as a reference baseline for evaluating golf swing sequencing performance on GolfDB, we propose a lightweight deep neural network called SwingNet, which possesses a hybrid deep convolutional and recurrent neural network architecture. SwingNet correctly detects eight golf swing events at an average rate of 76.1%, and six out of eight events at a rate of 91.8%. In line with the proposed baseline SwingNet, we advocate the use of computationally efficient models in future research to promote in-the-field analysis via deployment on readily-available mobile devices.
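A detection rate like the one reported above can be computed by counting an event as correct when its predicted frame falls close enough to the labeled frame. The abstract does not state the criterion, so the tolerance of one frame used below is an assumption, as are the function name and the frame numbers.

```python
def event_detection_rate(pred_frames, true_frames, tolerance=1):
    """Fraction of events whose predicted frame is within `tolerance` frames."""
    hits = sum(abs(p - t) <= tolerance for p, t in zip(pred_frames, true_frames))
    return hits / len(true_frames)


# Hypothetical predicted and labeled frames for eight swing events.
pred = [10, 25, 40, 52, 60, 66, 71, 90]
true = [10, 24, 43, 52, 60, 65, 71, 95]

rate = event_detection_rate(pred, true)
print(f"{rate:.1%}")
```

Averaging this rate over all test videos gives a dataset-level figure, and restricting the lists to a subset of events gives the corresponding per-subset rate.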

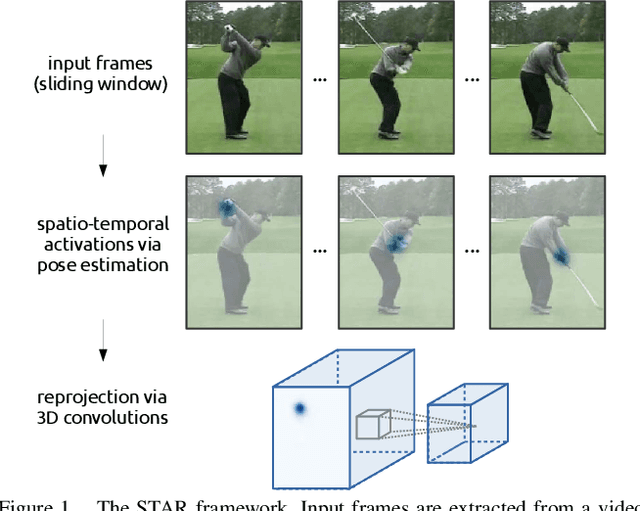

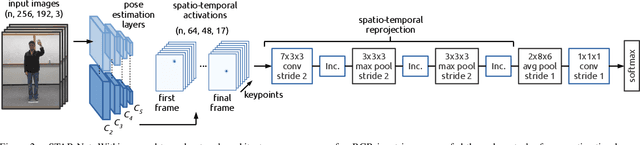

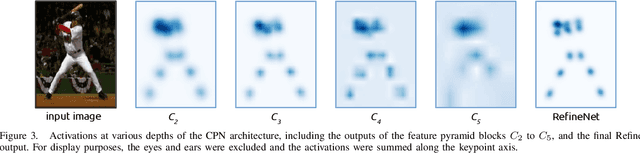

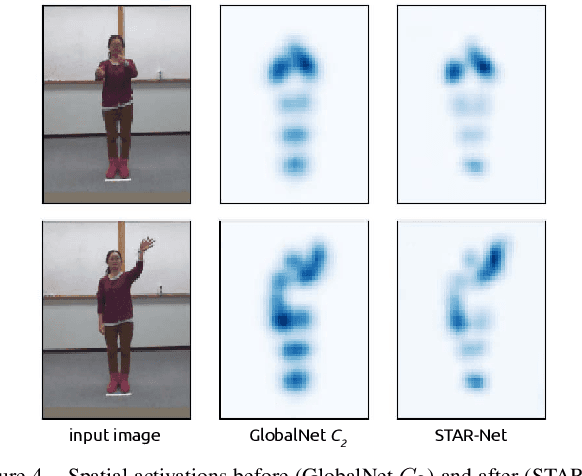

STAR-Net: Action Recognition using Spatio-Temporal Activation Reprojection

Feb 26, 2019

Abstract: While depth cameras and inertial sensors have been frequently leveraged for human action recognition, these sensing modalities are impractical in many scenarios where cost or environmental constraints prohibit their use. As such, there has been recent interest in human action recognition using low-cost, readily-available RGB cameras via deep convolutional neural networks. However, many of the deep convolutional neural networks proposed for action recognition thus far have relied heavily on learning global appearance cues directly from imaging data, resulting in highly complex network architectures that are computationally expensive and difficult to train. Motivated to reduce network complexity and achieve higher performance, we introduce the concept of spatio-temporal activation reprojection (STAR). More specifically, we reproject the spatio-temporal activations generated by human pose estimation layers in space and time using a stack of 3D convolutions. Experimental results on UTD-MHAD and J-HMDB demonstrate that an end-to-end architecture based on the proposed STAR framework (which we nickname STAR-Net) is proficient in single-environment and small-scale applications. On UTD-MHAD, STAR-Net outperforms several methods using richer data modalities such as depth and inertial sensors.
