Weihua Ou

Towards Multiple Missing Values-resistant Unsupervised Graph Anomaly Detection

Nov 13, 2025

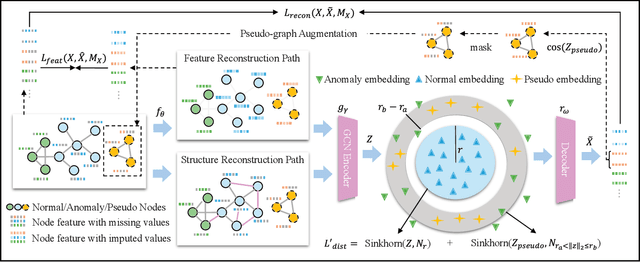

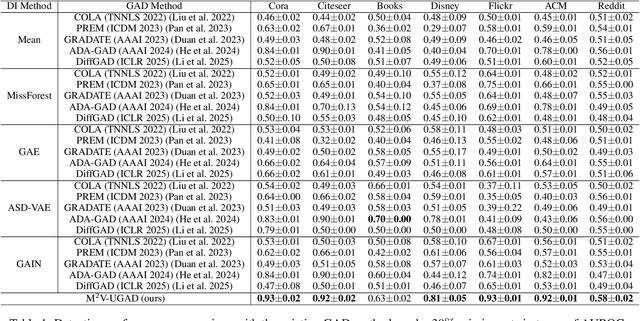

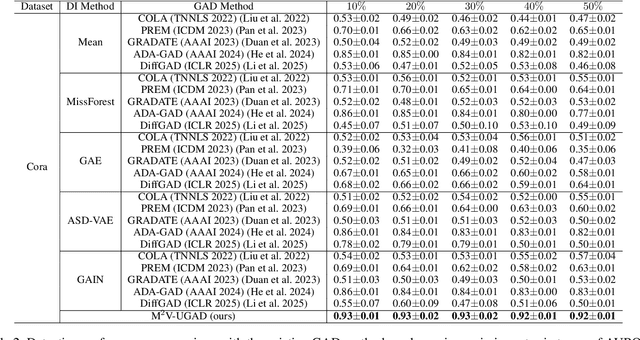

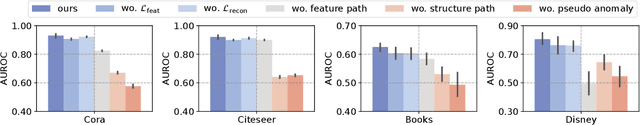

Abstract: Unsupervised graph anomaly detection (GAD), which aims to identify anomalous patterns using only unlabeled node information from graph-structured data, has received increasing attention in recent years. However, prevailing unsupervised GAD methods typically presuppose complete node attributes and structure information, a condition rarely satisfied in real-world scenarios owing to privacy constraints, collection errors, or dynamic node arrivals. Standard imputation schemes risk "repairing" rare anomalous nodes so that they appear normal, thereby introducing imputation bias into the detection process. In addition, when node attributes and edges are missing simultaneously, estimation errors in one view can contaminate the other, causing cross-view interference that further undermines detection performance. To overcome these challenges, we propose M$^2$V-UGAD, a multiple missing values-resistant unsupervised GAD framework for incomplete graphs. Specifically, a dual-pathway encoder first reconstructs missing node attributes and graph structure independently, thereby preventing errors in one view from propagating to the other. The two pathways are then fused and regularized in a joint latent space so that normal nodes occupy a compact inner manifold while anomalies reside on an outer shell. Lastly, to mitigate imputation bias, we sample latent codes just outside the normal region and decode them into realistic node features and subgraphs, providing hard negative examples that sharpen the decision boundary. Experiments on seven public benchmarks demonstrate that M$^2$V-UGAD consistently outperforms existing unsupervised GAD methods across varying missing rates.
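The idea of sampling latent codes "just outside the normal region" can be illustrated with a minimal sketch. It assumes, purely for illustration, that the normal region is a hypersphere with a known center and radius; the abstract does not specify the region's shape, and the function name `sample_outside_normal_region` and the `margin` parameter are hypothetical. Decoding the sampled codes into node features and subgraphs is not shown.

```python
import math
import random

def sample_outside_normal_region(center, radius, margin=0.2, rng=random):
    """Sample one latent code in the thin shell just outside a hypersphere.

    Assumption (not from the paper): the normal region is the ball of the
    given radius around `center`; the shell spans (radius, radius * (1 + margin)].
    """
    # Draw a random direction from an isotropic Gaussian and normalize it.
    direction = [rng.gauss(0.0, 1.0) for _ in center]
    norm = math.sqrt(sum(x * x for x in direction))
    # Pick a distance strictly greater than the radius, at most radius * (1 + margin).
    frac = 1.0 - rng.random()  # lies in (0, 1]
    dist = radius * (1.0 + margin * frac)
    return [c + dist * x / norm for c, x in zip(center, direction)]

# Usage: draw a pseudo-anomalous code near the boundary of the normal region.
rng = random.Random(0)
code = sample_outside_normal_region([0.0, 0.0, 0.0], radius=1.0, margin=0.2, rng=rng)
```

Keeping the shell thin is what makes such samples "hard" negatives: they sit close to the normal region rather than far away from it.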

Towards Effective Open-set Graph Class-incremental Learning

Jul 23, 2025

Abstract: Graph class-incremental learning (GCIL) allows graph neural networks (GNNs) to adapt to evolving graph analytical tasks by incrementally learning new class knowledge while retaining knowledge of old classes. Existing GCIL methods primarily rely on a closed-set assumption, where all test samples are presumed to belong to previously known classes. Such an assumption restricts their applicability in real-world scenarios, where unknown classes naturally emerge during inference but are absent during training. In this paper, we explore a more challenging open-set graph class-incremental learning scenario with two intertwined challenges: catastrophic forgetting of old classes, which impairs the detection of unknown classes, and inadequate open-set recognition, which destabilizes the retention of learned knowledge. To address these problems, we propose a novel OGCIL framework, which uses pseudo-sample embedding generation to mitigate catastrophic forgetting and enable robust detection of unknown classes. Specifically, a prototypical conditional variational autoencoder is designed to synthesize node embeddings for old classes, enabling knowledge replay without storing raw graph data. To handle unknown classes, we employ a mixing-based strategy to generate out-of-distribution (OOD) samples from pseudo in-distribution and current node embeddings. A novel prototypical hypersphere classification loss is further proposed, which anchors in-distribution embeddings to their respective class prototypes while repelling OOD embeddings. Instead of assigning all unknown samples to one cluster, our proposed objective function explicitly models them as outliers through prototype-aware rejection regions, ensuring robust open-set recognition. Extensive experiments on five benchmarks demonstrate the effectiveness of OGCIL over existing GCIL and open-set GNN methods.
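A minimal sketch of the two ideas named in the abstract, mixing-based OOD generation and prototype-aware rejection, might look as follows. The convex-combination mixing rule, the fixed `radius`, and the function names `mix_embeddings` and `predict_open_set` are illustrative assumptions; the abstract does not give the actual mixing scheme or loss.

```python
import math

def mix_embeddings(pseudo_old, current, lam=0.5):
    """Build a pseudo-OOD embedding as a convex mix of two embeddings.

    Assumption: a simple mixup-style interpolation stands in for the
    paper's mixing strategy, which the abstract does not detail.
    """
    return [lam * p + (1.0 - lam) * c for p, c in zip(pseudo_old, current)]

def predict_open_set(embedding, prototypes, radius):
    """Return the nearest class label, or None if outside every hypersphere.

    `prototypes` maps a class label to its prototype vector; an embedding
    farther than `radius` from all prototypes is rejected as unknown.
    """
    best_label, best_dist = None, float("inf")
    for label, proto in prototypes.items():
        dist = math.sqrt(sum((e - p) ** 2 for e, p in zip(embedding, proto)))
        if dist < best_dist:
            best_label, best_dist = label, dist
    return best_label if best_dist <= radius else None

# Usage: a point midway between two class prototypes falls in the rejection region.
prototypes = {"a": [0.0, 0.0], "b": [2.0, 4.0]}
ood = mix_embeddings(prototypes["a"], prototypes["b"], lam=0.5)
label = predict_open_set(ood, prototypes, radius=1.0)
```

Here each class has its own rejection boundary around its prototype, so unknown samples are treated as outliers rather than being grouped into a single extra class.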

Deep Manifold Hashing: A Divide-and-Conquer Approach for Semi-Paired Unsupervised Cross-Modal Retrieval

Sep 26, 2022

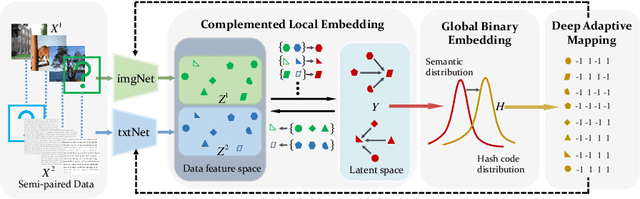

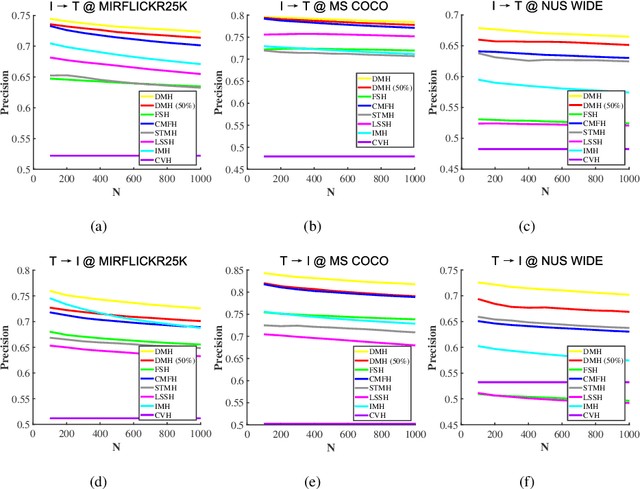

Abstract: Hashing, which projects data into binary codes, has proven highly effective in cross-modal retrieval due to its low storage cost and high query speed. Despite their empirical success in some scenarios, existing cross-modal hashing methods usually fail to bridge the modality gap when fully paired data with plentiful label information is unavailable. To circumvent this drawback, motivated by the divide-and-conquer strategy, we propose Deep Manifold Hashing (DMH), a novel method that divides the problem of semi-paired unsupervised cross-modal retrieval into three sub-problems and builds one simple yet efficient model for each sub-problem. Specifically, the first model obtains modality-invariant features by completing semi-paired data based on manifold learning, whereas the second and third models learn hash codes and hash functions, respectively. Extensive experiments on three benchmarks demonstrate the superiority of DMH over state-of-the-art fully paired and semi-paired unsupervised cross-modal hashing methods.
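To illustrate why binary codes give low storage and fast queries, the following minimal sketch binarizes feature vectors with sign projections and ranks database items by Hamming distance. This is generic hashing-based retrieval, not the DMH method: the fixed projections stand in for learned hash functions, and the names `sign_hash`, `hamming`, and `retrieve` are hypothetical.

```python
def sign_hash(vec, projections):
    """Binarize a real-valued vector: one bit per projection (sign of the dot product)."""
    bits = 0
    for w in projections:
        dot = sum(v * wi for v, wi in zip(vec, w))
        bits = (bits << 1) | (1 if dot >= 0 else 0)
    return bits

def hamming(a, b):
    """Hamming distance between two codes stored as integers (XOR, then count set bits)."""
    return bin(a ^ b).count("1")

def retrieve(query_code, database_codes, k=1):
    """Return the indices of the k database codes closest to the query."""
    ranked = sorted(range(len(database_codes)),
                    key=lambda i: hamming(query_code, database_codes[i]))
    return ranked[:k]

# Usage: codes from one modality (e.g. images) queried with a code from another (e.g. text).
database_codes = [0b0101, 0b1011, 0b1010]
top2 = retrieve(0b1010, database_codes, k=2)  # indices ordered by Hamming distance
```

Each item costs only a few bits to store, and comparing two items reduces to an XOR and a bit count, which is what makes large-scale retrieval cheap.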

Deep Supervised Information Bottleneck Hashing for Cross-modal Retrieval based Computer-aided Diagnosis

May 06, 2022

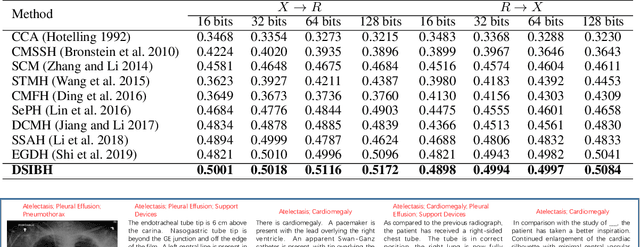

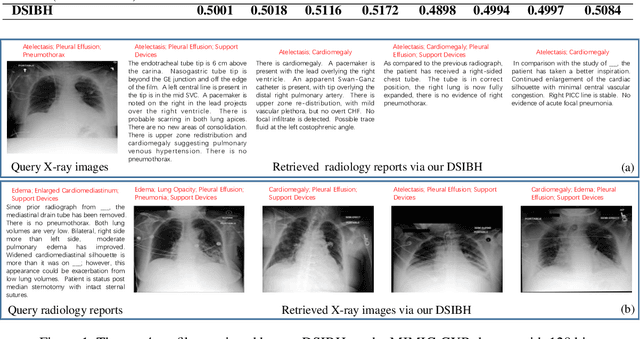

Abstract: Mapping X-ray images, radiology reports, and other medical data to binary codes in a common space, which can help clinicians retrieve pathology-related data from heterogeneous modalities (i.e., hashing-based cross-modal medical data retrieval), provides a new way to promote computer-aided diagnosis. Nevertheless, a barrier to improving medical retrieval accuracy remains: how to reveal the ambiguous semantics of medical data without the distraction of superfluous information. To overcome this barrier, we propose Deep Supervised Information Bottleneck Hashing (DSIBH), which effectively strengthens the discriminability of hash codes. Specifically, the Deep Deterministic Information Bottleneck (Yu, Yu, and Principe 2021) for a single modality is extended to the cross-modal scenario. As a result, superfluous information is reduced, which improves the discriminability of hash codes. Experimental results demonstrate the superior accuracy of the proposed DSIBH compared with state-of-the-art methods in cross-modal medical data retrieval tasks.

* 7 pages, 1 figure
