Weiguang Ding

Multimodal and Multiscale Deep Neural Networks for the Early Diagnosis of Alzheimer's Disease using structural MR and FDG-PET images

Oct 13, 2017

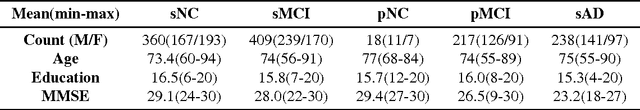

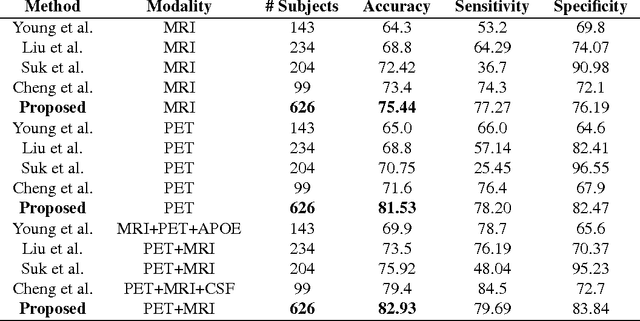

Abstract: Alzheimer's Disease (AD) is a progressive neurodegenerative disease. Amnestic mild cognitive impairment (MCI) is a common first symptom before the conversion to clinical impairment, where the individual becomes unable to perform activities of daily living independently. Although there is currently no treatment available, the earlier a conclusive diagnosis is made, the earlier interventions can begin that may delay or perhaps even prevent progression to full-blown AD. Structural images acquired by MRI and metabolism images obtained by FDG-PET provide an in-vivo view into the structure and function (glucose metabolism) of the living brain. It is hypothesized that combining different image modalities could better characterize changes in the human brain and result in a more accurate early diagnosis of AD. In this paper, we propose a novel framework to discriminate normal control (NC) subjects from subjects with AD pathology (AD subjects and MCI subjects who convert to AD in the future). Our approach, which uses a multimodal and multiscale deep neural network, achieved 85.68% accuracy in classifying subjects who are within 3 years of conversion. Cross-validation experiments showed that it has better discrimination ability than results reported in the existing published literature.

Automatic Moth Detection from Trap Images for Pest Management

Feb 24, 2016

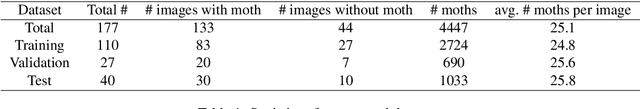

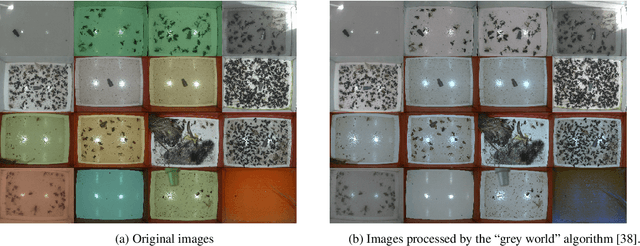

Abstract: Monitoring the number of insect pests is a crucial component in pheromone-based pest management systems. In this paper, we propose an automatic detection pipeline based on deep learning for identifying and counting pests in images taken inside field traps. Applied to a commercial codling moth dataset, our method shows promising performance both qualitatively and quantitatively. Compared to previous attempts at pest detection, our approach uses no pest-specific engineering, which enables it to adapt to other species and environments with minimal human effort. It is amenable to implementation on parallel hardware and is therefore capable of deployment in settings where real-time performance is required.
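
The abstract does not say how individual detections are turned into a count. One common approach, assumed here and not confirmed by the abstract, is to score candidate windows with a classifier and then apply greedy non-maximum suppression (NMS) so that overlapping windows on the same moth are counted only once. The box format, the function names `iou` and `count_pests`, and both thresholds below are hypothetical.

```python
from typing import List, Tuple

# Hypothetical box format: (x1, y1, x2, y2) in pixel coordinates.
Box = Tuple[float, float, float, float]


def iou(a: Box, b: Box) -> float:
    """Intersection-over-union of two axis-aligned boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def count_pests(boxes: List[Box], scores: List[float],
                score_thresh: float = 0.5, iou_thresh: float = 0.3) -> int:
    """Count detections that survive a score threshold and greedy NMS.

    Assumption: boxes and scores come from some window classifier; the
    thresholds are illustrative, not values from the paper.
    """
    # Keep only confident windows, highest score first.
    order = sorted((i for i, s in enumerate(scores) if s >= score_thresh),
                   key=lambda i: scores[i], reverse=True)
    kept: List[int] = []
    for i in order:
        # Suppress a window that overlaps an already-kept, higher-scoring one.
        if all(iou(boxes[i], boxes[j]) <= iou_thresh for j in kept):
            kept.append(i)
    return len(kept)


# Usage: two overlapping windows on one moth, one separate moth, one weak window.
boxes = [(0, 0, 10, 10), (1, 1, 11, 11), (50, 50, 60, 60), (100, 100, 110, 110)]
scores = [0.9, 0.8, 0.7, 0.2]
print(count_pests(boxes, scores))  # → 2
```

Greedy NMS keeps the highest-scoring window in each overlapping cluster, so the count reflects distinct objects rather than raw window hits.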

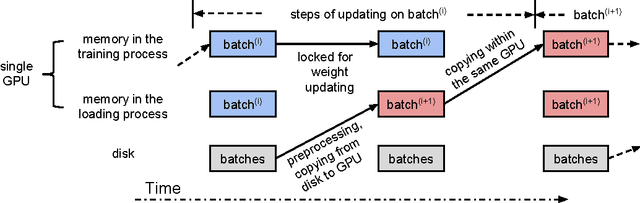

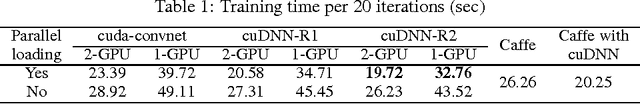

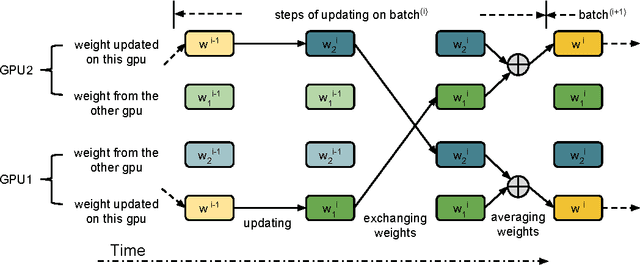

Theano-based Large-Scale Visual Recognition with Multiple GPUs

Apr 06, 2015

Abstract: In this report, we describe a Theano-based AlexNet (Krizhevsky et al., 2012) implementation and its naive data parallelism on multiple GPUs. Our performance on 2 GPUs is comparable with that of the state-of-the-art Caffe library (Jia et al., 2014) run on 1 GPU. To the best of our knowledge, this is the first open-source Python-based AlexNet implementation to date.
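
The report's Theano multi-GPU code is not reproduced here. As a generic illustration of synchronous data parallelism (assumed, not taken from the report), the sketch below splits a minibatch across simulated workers standing in for GPUs, computes each worker's gradient for a toy linear model, averages the gradients and applies one shared update. The toy model and the names `grad_mse` and `data_parallel_step` are hypothetical.

```python
from typing import Sequence, Tuple


def grad_mse(w: float, b: float, xs: Sequence[float],
             ys: Sequence[float]) -> Tuple[float, float]:
    """Gradient of the mean squared error of y ≈ w*x + b over one shard."""
    n = len(xs)
    residuals = [w * x + b - y for x, y in zip(xs, ys)]
    gw = sum(2 * r * x for r, x in zip(residuals, xs)) / n
    gb = sum(2 * r for r in residuals) / n
    return gw, gb


def data_parallel_step(w: float, b: float, xs: Sequence[float],
                       ys: Sequence[float], n_workers: int = 2,
                       lr: float = 0.1) -> Tuple[float, float]:
    """One synchronous data-parallel SGD step with simulated workers (hypothetical)."""
    if len(xs) % n_workers != 0:
        raise ValueError("minibatch size must be divisible by n_workers")
    size = len(xs) // n_workers
    # Each "worker" (a stand-in for one GPU) computes a gradient on its own shard.
    grads = [grad_mse(w, b, xs[k * size:(k + 1) * size], ys[k * size:(k + 1) * size])
             for k in range(n_workers)]
    # Average the per-worker gradients (the role an all-reduce plays across GPUs).
    gw = sum(g[0] for g in grads) / n_workers
    gb = sum(g[1] for g in grads) / n_workers
    # Every replica applies the same update, so parameters stay identical.
    return w - lr * gw, b - lr * gb


# Usage: fit y = 3x + 1 with 2 simulated workers.
xs = [i / 8 for i in range(8)]
ys = [3 * x + 1 for x in xs]
w, b = 0.0, 0.0
for _ in range(2000):
    w, b = data_parallel_step(w, b, xs, ys, n_workers=2, lr=0.1)
print(round(w, 3), round(b, 3))
```

With equal-sized shards, the averaged gradient equals the full-minibatch gradient, so each step matches single-device SGD on the same minibatch. For plain SGD starting from identical replicas, averaging the updated weights instead of the gradients gives the same result.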

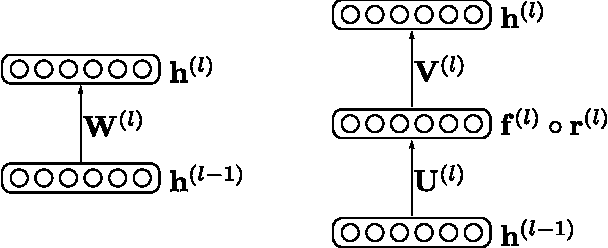

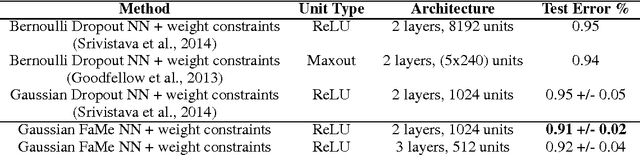

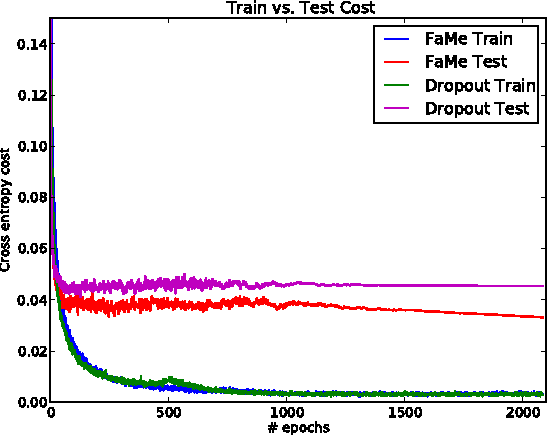

Neural Network Regularization via Robust Weight Factorization

Jan 05, 2015

Abstract: Regularization is essential when training large neural networks. Because deep neural networks can be mathematically interpreted as universal function approximators, they are effective at memorizing sampling noise in the training data, which results in poor generalization to unseen data. It is therefore no surprise that a new regularization technique, Dropout, was partially responsible for the winning entry to ImageNet 2012 by the University of Toronto, an approach that has since become ubiquitous. Currently, Dropout and related methods such as DropConnect are the most effective means of regularizing large neural networks. These methods amount to efficiently visiting a large number of related models at training time while aggregating them into a single predictor at test time. The proposed FaMe model aims to apply a similar strategy, but learns a factorization of each weight matrix such that the factors are robust to noise.
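
The abstract describes Dropout as visiting many related models during training and aggregating them into one predictor at test time. A minimal sketch of that train/test asymmetry follows, using the common "inverted" variant that rescales surviving units during training (the original Dropout formulation instead scales the weights at test time). It illustrates Dropout only, not FaMe, whose factorization the abstract does not specify; the function name and signature are hypothetical.

```python
import random
from typing import List, Optional


def dropout(h: List[float], p: float, training: bool,
            rng: Optional[random.Random] = None) -> List[float]:
    """Inverted dropout on a vector of activations (hypothetical helper)."""
    if not 0.0 <= p < 1.0:
        raise ValueError("p must be in [0, 1)")
    if not training or p == 0.0:
        # Test time: the single aggregated predictor uses every unit unchanged.
        return list(h)
    if rng is None:
        rng = random.Random()
    keep = 1.0 - p
    # Training time: each call samples one "thinned" sub-network by zeroing
    # units with probability p; dividing survivors by `keep` preserves each
    # unit's expected value, so no rescaling is needed at test time.
    return [x / keep if rng.random() < keep else 0.0 for x in h]


# Usage: averaged over many units, the training-time output matches test time.
rng = random.Random(0)
out = dropout([1.0] * 10000, p=0.5, training=True, rng=rng)
print(sum(out) / len(out))
```

Each training call corresponds to one randomly sampled sub-network; the deterministic test-time pass is the single predictor that approximately averages them.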

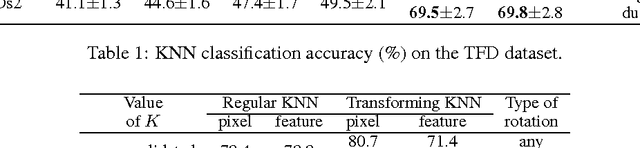

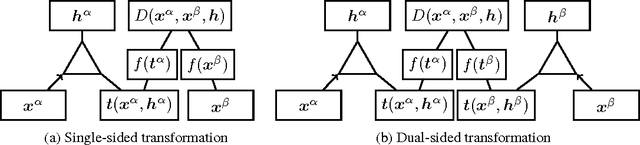

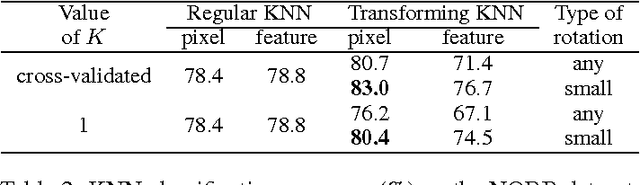

"Mental Rotation" by Optimizing Transforming Distance

Dec 05, 2014

Abstract: The human visual system is able to recognize objects despite transformations that can drastically alter their appearance. Accordingly, much effort has been devoted to the invariance properties of recognition systems. Invariance can be engineered (e.g., convolutional nets), or learned from data explicitly (e.g., temporal coherence) or implicitly (e.g., by data augmentation). One idea that has not, to date, been explored is the integration of latent variables that permit a search over a learned space of transformations. Motivated by evidence that people mentally simulate transformations in space while comparing examples, so-called "mental rotation", we propose a transforming distance. Here, a trained relational model actively transforms pairs of examples so that they are maximally similar in some feature space yet respect the learned transformational constraints. We apply our method to nearest-neighbour problems on the Toronto Face Database and NORB.
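
The abstract does not give the form of the relational model. As a much simpler stand-in, assumed here purely for illustration, the sketch below replaces the learned transformation space with a hand-specified grid of 2-D rotations of point sets with known point correspondence, defines the distance as the best match over that grid, and uses it for nearest-neighbour classification. It transforms only the query rather than both examples, and `rotate`, `transforming_distance`, `nearest_neighbour` and the toy shapes are hypothetical.

```python
import math
from typing import List, Sequence, Tuple

Point = Tuple[float, float]
Shape = Sequence[Point]


def rotate(points: Shape, degrees: float) -> List[Point]:
    """Rotate 2-D points about the origin."""
    t = math.radians(degrees)
    c, s = math.cos(t), math.sin(t)
    return [(c * x - s * y, s * x + c * y) for x, y in points]


def sq_dist(a: Shape, b: Shape) -> float:
    """Sum of squared distances between corresponding points."""
    return sum((ax - bx) ** 2 + (ay - by) ** 2 for (ax, ay), (bx, by) in zip(a, b))


def transforming_distance(x: Shape, y: Shape, step: int = 1) -> float:
    """Smallest distance over a grid of rotations applied to x."""
    # Assumption: a fixed rotation family stands in for a learned one.
    return min(sq_dist(rotate(x, d), y) for d in range(0, 360, step))


def nearest_neighbour(query: Shape, train: Sequence[Tuple[Shape, str]]) -> str:
    """Label of the training example closest to query under transforming_distance."""
    return min(train, key=lambda ex: transforming_distance(query, ex[0]))[1]


# Usage: toy "L" shapes; the distractor has a longer vertical arm.
L_shape = [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0), (0.0, 1.0)]
L_tall = [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0), (0.0, 1.5)]
train = [(rotate(L_shape, 180), "L"), (rotate(L_tall, 30), "L-tall")]
query = rotate(L_shape, 30)
print(min(train, key=lambda ex: sq_dist(query, ex[0]))[1])  # → L-tall
print(nearest_neighbour(query, train))  # → L
```

Plain nearest-neighbour matching picks the distractor because it shares the query's orientation, while the transforming distance recovers the correct label by first searching over rotations of the query.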
