Vinay Shet

Accurate Deep Direct Geo-Localization from Ground Imagery and Phone-Grade GPS

Apr 20, 2018

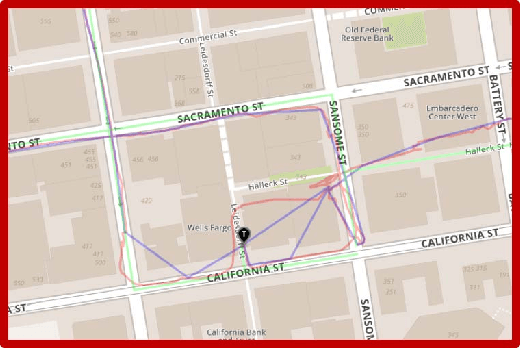

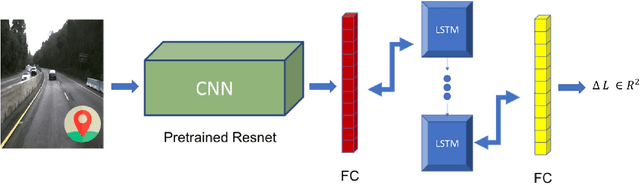

Abstract: One of the most critical problems in autonomous driving and ride-sharing technology is accurately localizing vehicles in the world frame. In addition to common multi-view camera systems, this usually relies on industrial-grade sensors such as LiDAR, differential GPS, and high-precision IMUs. In this paper, we develop an effective solution to this problem. We propose a method to train a geo-spatial deep neural network (CNN+LSTM) to predict accurate geo-locations (latitude and longitude) using only ordinary ground imagery and low-accuracy phone-grade GPS. We evaluate our approach on the open dataset released during the ACM Multimedia 2017 Grand Challenge. With ground-truth locations available for training, we reach nearly lane-level accuracy. We also evaluate the proposed method on our own images collected in downtown San Francisco, an area often described as a "downtown canyon" where consumer GPS signals are extremely inaccurate. The results show that, using only phone-grade GPS, the model predicts locations accurate enough for real business applications such as ride-sharing. Unlike classic visual localization or recent PoseNet-like methods, which may work well in indoor or small-scale outdoor environments, we avoid using a map or an SfM (structure-from-motion) model entirely. More importantly, the proposed method can be scaled up without concern over potential failures of 3D reconstruction.
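
A claim such as "nearly lane-level accuracy" is naturally checked by measuring the distance between each predicted (latitude, longitude) and its ground-truth location. The sketch below is one common way to do that, not the paper's evaluation code: it assumes a spherical Earth of radius 6,371 km and uses the haversine great-circle distance. The function names and the threshold in the usage note are illustrative assumptions.

```python
import math
import statistics

# Mean Earth radius in meters; spherical-Earth approximation (assumption).
EARTH_RADIUS_M = 6_371_000.0


def haversine_m(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance in meters between two points given in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    # Clamp guards against tiny floating-point overshoot above 1.0.
    return 2 * EARTH_RADIUS_M * math.asin(min(1.0, math.sqrt(a)))


def localization_report(preds, truths, threshold_m):
    """Return (median error in meters, fraction of predictions within threshold_m).

    preds and truths are parallel lists of (lat, lon) tuples in degrees.
    """
    errors = [haversine_m(p[0], p[1], t[0], t[1]) for p, t in zip(preds, truths)]
    within = sum(e <= threshold_m for e in errors) / len(errors)
    return statistics.median(errors), within
```

For example, `localization_report(preds, truths, threshold_m=3.7)` reports the share of predictions within roughly one lane width; 3.7 m is an assumed lane width, not a figure from the paper. For city-scale errors of a few meters the spherical approximation is usually adequate.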

Large Scale Business Discovery from Street Level Imagery

Feb 02, 2016

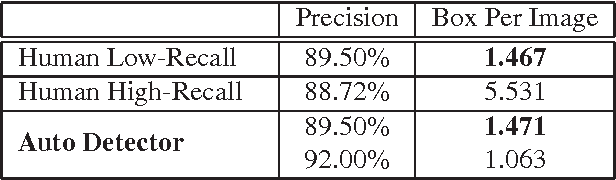

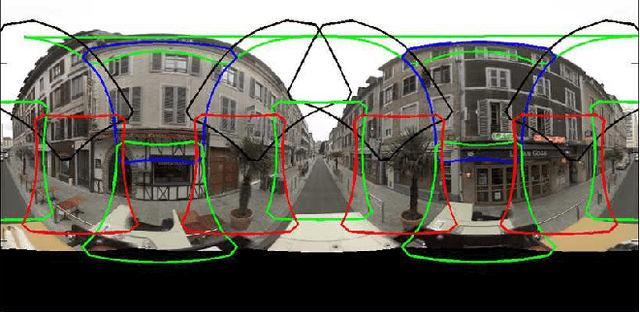

Abstract: Search with local intent is becoming increasingly useful due to the popularity of mobile devices. Creating and maintaining accurate listings of local businesses worldwide is time-consuming and expensive. In this paper, we propose an approach to automatically discover businesses that are visible in street-level imagery. Precise storefront detection enables accurate geo-location of businesses and further provides input for business categorization, listing generation, and so on. The large variety of business categories across different countries makes this a very challenging problem, and the scale of the problem makes manual annotation prohibitive. We propose a MultiBox-based approach that takes image pixels as input and directly outputs storefront bounding boxes. This end-to-end learning approach leverages large labeled training datasets and removes the need to hand-model either the proposal generation phase or the post-processing phase. We demonstrate that our approach outperforms state-of-the-art detection techniques by a large margin in both detection performance and run-time efficiency. In the evaluation, we show that this approach achieves human-level accuracy in low-recall settings. We also provide an end-to-end evaluation of business discovery in the real world.
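
The abstract compares detectors at different operating points, for example human-level accuracy at low recall. A minimal sketch of how box detections of this kind are commonly scored, assuming boxes given as `(x_min, y_min, x_max, y_max)` and a 0.5 intersection-over-union (IoU) match threshold (a widespread convention, e.g. PASCAL VOC, not something the abstract states): detections are sorted by confidence, greedily matched to unmatched ground-truth boxes, and precision and recall are recorded after each one. The function names are hypothetical, and the paper's own protocol may differ.

```python
def iou(a, b):
    """Intersection-over-union of two boxes (x_min, y_min, x_max, y_max)."""
    ix = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    iy = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = ix * iy
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def pr_curve(detections, ground_truth, iou_threshold=0.5):
    """Trace (precision, recall) as the score threshold is lowered.

    detections: list of (score, box); ground_truth: list of boxes.
    Each ground-truth box can be matched by at most one detection.
    """
    matched = [False] * len(ground_truth)
    tp = 0
    curve = []
    ranked = sorted(detections, key=lambda d: d[0], reverse=True)
    for k, (_score, box) in enumerate(ranked, start=1):
        # Find the best still-unmatched ground-truth box above the threshold.
        best_j, best_iou = -1, iou_threshold
        for j, gt in enumerate(ground_truth):
            if not matched[j]:
                overlap = iou(box, gt)
                if overlap >= best_iou:
                    best_j, best_iou = j, overlap
        if best_j >= 0:
            matched[best_j] = True
            tp += 1
        recall = tp / len(ground_truth) if ground_truth else 0.0
        curve.append((tp / k, recall))
    return curve
```

Reading the curve at its low-recall end (high score thresholds) gives the kind of operating point at which the abstract reports human-level accuracy.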

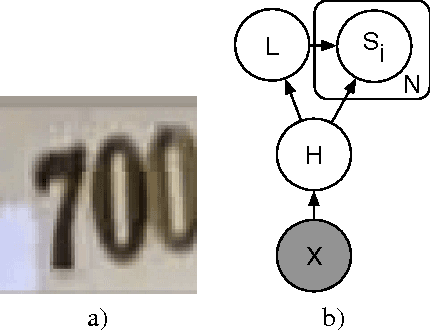

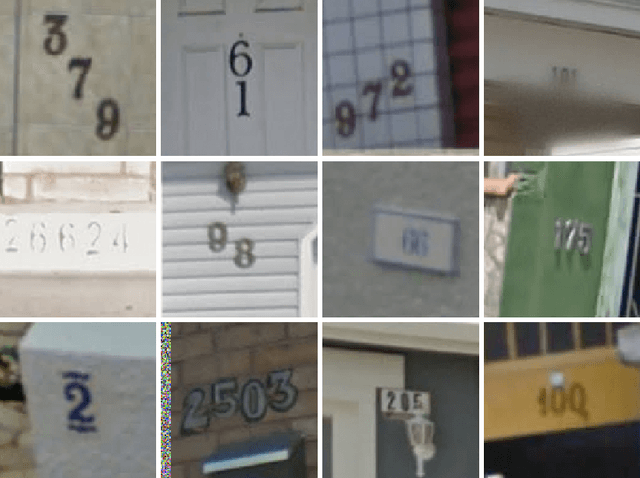

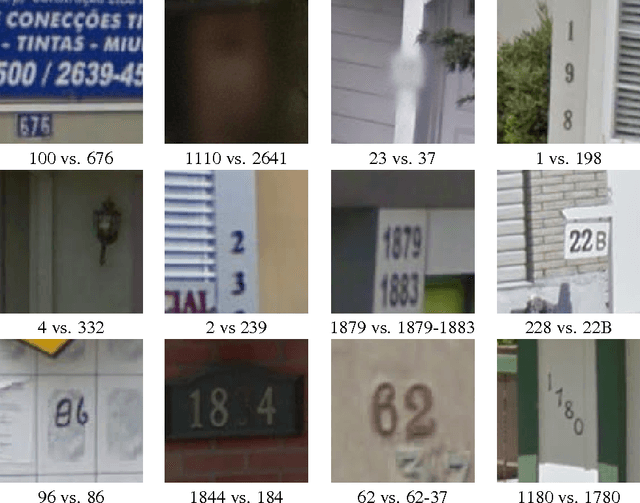

Multi-digit Number Recognition from Street View Imagery using Deep Convolutional Neural Networks

Apr 14, 2014

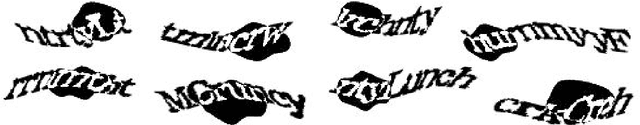

Abstract: Recognizing arbitrary multi-character text in unconstrained natural photographs is a hard problem. In this paper, we address an equally hard sub-problem in this domain, viz. recognizing arbitrary multi-digit numbers from Street View imagery. Traditional approaches to this problem typically separate out the localization, segmentation, and recognition steps. We propose a unified approach that integrates these three steps via a deep convolutional neural network that operates directly on the image pixels. We employ the DistBelief implementation of deep neural networks to train large, distributed neural networks on high-quality images. We find that the performance of this approach increases with the depth of the convolutional network, with the best performance occurring in the deepest architecture we trained, which has eleven hidden layers. We evaluate this approach on the publicly available SVHN dataset and achieve over $96\%$ accuracy in recognizing complete street numbers. On a per-digit recognition task, we improve upon the state of the art, achieving $97.84\%$ accuracy. We also evaluate this approach on an even more challenging dataset generated from Street View imagery containing several tens of millions of street number annotations, and achieve over $90\%$ accuracy. To further explore the applicability of the proposed system to broader text recognition tasks, we apply it to synthetic distorted text from reCAPTCHA, one of the most secure reverse Turing tests, which uses distorted text to distinguish humans from bots. We report $99.8\%$ accuracy on the hardest category of reCAPTCHA. Our evaluations on both tasks indicate that at specific operating thresholds, the performance of the proposed system is comparable to, and in some cases exceeds, that of human operators.
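
The abstract quotes two different figures on SVHN: accuracy on complete street numbers (over $96\%$) and per-digit accuracy ($97.84\%$). The sketch below illustrates why the whole-sequence figure is the stricter one: a number counts as correct only if every digit matches. The position-aligned per-digit definition used here, including how length mismatches are handled, is an assumption for illustration; the paper's exact protocol may differ, and the function names are hypothetical.

```python
def sequence_accuracy(preds, truths):
    """Fraction of numbers predicted exactly (every digit and the length correct)."""
    return sum(p == t for p, t in zip(preds, truths)) / len(truths)


def per_digit_accuracy(preds, truths):
    """Fraction of ground-truth digit positions predicted correctly.

    Assumption: digits are compared position by position; ground-truth positions
    with no corresponding predicted digit count as errors.
    """
    correct = total = 0
    for p, t in zip(preds, truths):
        total += len(t)
        correct += sum(pc == tc for pc, tc in zip(p, t))
    return correct / total
```

For instance, with predictions `["123", "45", "7"]` against ground truth `["123", "46", "78"]`, only one of three numbers is fully correct, while five of seven digits are, so the per-digit score is noticeably higher than the sequence score.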
