Trikay Nalamada

A Penalized Shared-parameter Algorithm for Estimating Optimal Dynamic Treatment Regimens

Jul 13, 2021

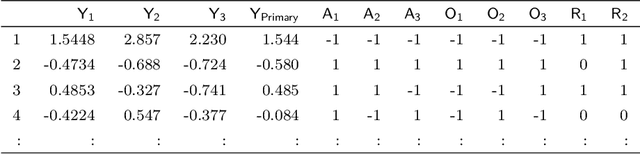

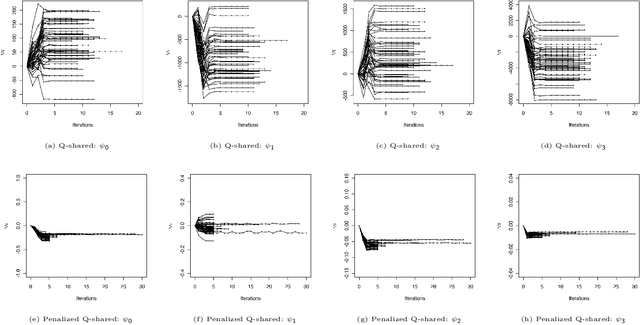

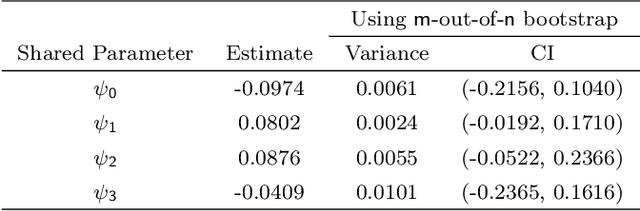

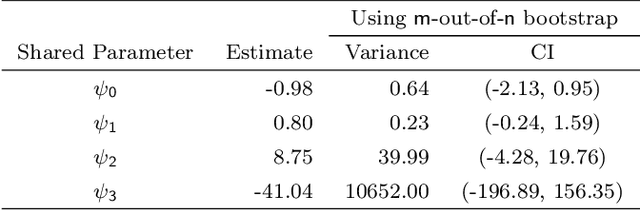

Abstract: A dynamic treatment regimen (DTR) is a set of decision rules to personalize treatments for an individual using their medical history. The Q-learning based Q-shared algorithm has been used to develop DTRs that involve decision rules shared across multiple stages of intervention. We show that the existing Q-shared algorithm can suffer from non-convergence due to the use of linear models in the Q-learning setup, and identify the condition in which Q-shared fails. Leveraging properties from expansion-constrained ordinary least-squares, we give a penalized Q-shared algorithm that not only converges in settings that violate the condition, but can outperform the original Q-shared algorithm even when the condition is satisfied. We give evidence for the proposed method in a real-world application and several synthetic simulations.

Rotate to Attend: Convolutional Triplet Attention Module

Oct 06, 2020

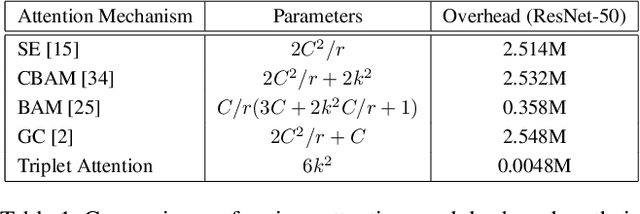

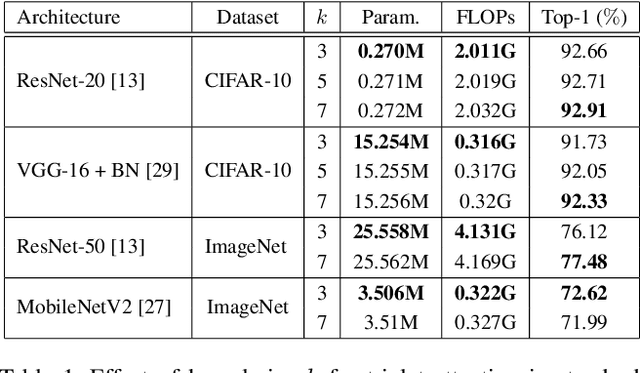

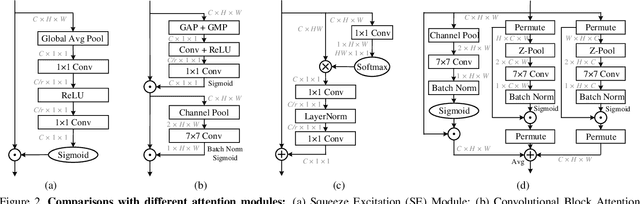

Abstract: Benefiting from the capability of building inter-dependencies among channels or spatial locations, attention mechanisms have been extensively studied and broadly used in a variety of computer vision tasks recently. In this paper, we investigate light-weight but effective attention mechanisms and present triplet attention, a novel method for computing attention weights by capturing cross-dimension interaction using a three-branch structure. For an input tensor, triplet attention builds inter-dimensional dependencies by the rotation operation followed by residual transformations and encodes inter-channel and spatial information with negligible computational overhead. Our method is simple as well as efficient and can be easily plugged into classic backbone networks as an add-on module. We demonstrate the effectiveness of our method on various challenging tasks including image classification on ImageNet-1k and object detection on MSCOCO and PASCAL VOC datasets. Furthermore, we provide extensive insight into the performance of triplet attention by visually inspecting the GradCAM and GradCAM++ results. The empirical evaluation of our method supports our intuition on the importance of capturing dependencies across dimensions when computing attention weights. Code for this paper can be publicly accessed at https://github.com/LandskapeAI/triplet-attention
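The three-branch, rotate-then-gate idea in the abstract above can be illustrated with a minimal NumPy sketch. It is not the authors' implementation (that is in the linked repository). The abstract does not specify the pooling step, the sigmoid gate, or the averaging of branches, so `z_pool`, `branch`, and `triplet_attention_sketch` are hypothetical names and assumed design choices. The learned convolution a real module would use is replaced here by a fixed weighted sum.

```python
import numpy as np


def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


def z_pool(x):
    # Assumed pooling: stack the max and the mean over the leading axis.
    # Input (A, B, C) -> output (2, B, C).
    return np.stack([x.max(axis=0), x.mean(axis=0)], axis=0)


def branch(x, perm, weights):
    # "Rotate" the tensor so that a chosen axis becomes the leading one.
    rotated = np.transpose(x, perm)
    pooled = z_pool(rotated)
    # Stand-in for a learned convolution (an assumption of this sketch):
    # a fixed weighted sum of the two pooled maps.
    logits = weights[0] * pooled[0] + weights[1] * pooled[1]
    # Gate every slice along the leading axis with the same 2-D attention map.
    gated = rotated * sigmoid(logits)[None, :, :]
    # Rotate back to the original (C, H, W) layout.
    return np.transpose(gated, np.argsort(perm))


def triplet_attention_sketch(x, weights=(0.5, 0.5)):
    # x has shape (C, H, W). Each branch pools over a different axis,
    # so the three gates relate (H, W), (C, W), and (H, C) respectively.
    perms = [(0, 1, 2), (1, 0, 2), (2, 1, 0)]
    outputs = [branch(x, p, weights) for p in perms]
    # Assumed aggregation: simple average of the three branches.
    return sum(outputs) / 3.0


rng = np.random.default_rng(0)
x = rng.normal(size=(4, 5, 6))
out = triplet_attention_sketch(x)
```

The output has the same shape as the input, which is what would let a module of this kind be dropped into a backbone network as an add-on. Each branch only rescales activations by a gate in (0, 1).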
