Tianyu Geng

Modulo Video Recovery via Selective Spatiotemporal Vision Transformer

Nov 09, 2025

Abstract: Conventional image sensors have limited dynamic range, causing saturation in high-dynamic-range (HDR) scenes. Modulo cameras address this by folding incident irradiance into a bounded range, yet require specialized unwrapping algorithms to reconstruct the underlying signal. Unlike HDR recovery, which extends dynamic range from conventional sampling, modulo recovery restores actual values from folded samples. Despite being introduced over a decade ago, progress in modulo image recovery has been slow, especially in the use of modern deep learning techniques. In this work, we demonstrate that standard HDR methods are unsuitable for modulo recovery. Transformers, however, can capture global dependencies and spatial-temporal relationships crucial for resolving folded video frames. Still, adapting existing Transformer architectures for modulo recovery demands novel techniques. To this end, we present Selective Spatiotemporal Vision Transformer (SSViT), the first deep learning framework for modulo video reconstruction. SSViT employs a token selection strategy to improve efficiency and concentrate on the most critical regions. Experiments confirm that SSViT produces high-quality reconstructions from 8-bit folded videos and achieves state-of-the-art performance in modulo video recovery.
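To make the folding operation concrete, the following is a minimal sketch of what "folding into a bounded range" means for an 8-bit sensor, together with a naive 1-D unwrapping rule. It is not SSViT or any method from the paper: the function names `fold` and `unwrap_1d`, the sample values, and the assumption that neighbouring true samples differ by less than half a period are all illustrative choices made here.

```python
def fold(values, bit_depth=8):
    """Wrap each non-negative integer sample into [0, 2**bit_depth)."""
    period = 1 << bit_depth
    return [v % period for v in values]


def unwrap_1d(folded, bit_depth=8):
    """Naive unwrapping (illustrative assumption, not the paper's method).

    Assumes the first sample did not wrap and that neighbouring true
    samples differ by less than half a period.
    """
    period = 1 << bit_depth
    half = period // 2
    out = [folded[0]]
    offset = 0
    for prev, cur in zip(folded, folded[1:]):
        diff = cur - prev
        if diff < -half:      # large drop: the true signal rolled over upward
            offset += period
        elif diff > half:     # large jump: the true signal rolled back down
            offset -= period
        out.append(cur + offset)
    return out


# Hypothetical scanline whose true values exceed the 8-bit range.
scene = [100, 180, 250, 300, 420, 520, 480, 380, 270, 200, 90]
folded = fold(scene)
recovered = unwrap_1d(folded)
print(folded)
print(recovered == scene)
```

The smoothness assumption breaks as soon as the true signal changes by half a period or more between neighbours, which hints at why recovery of real folded video needs more spatial and temporal context than a local rule can provide.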

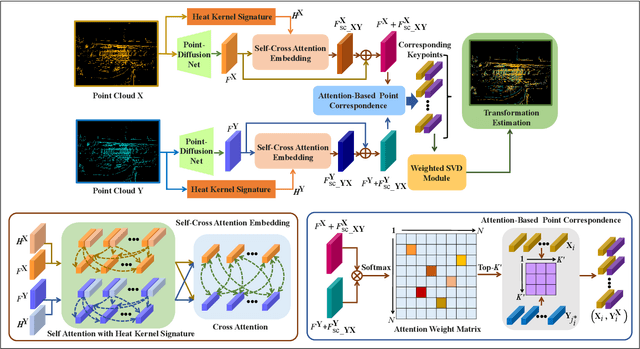

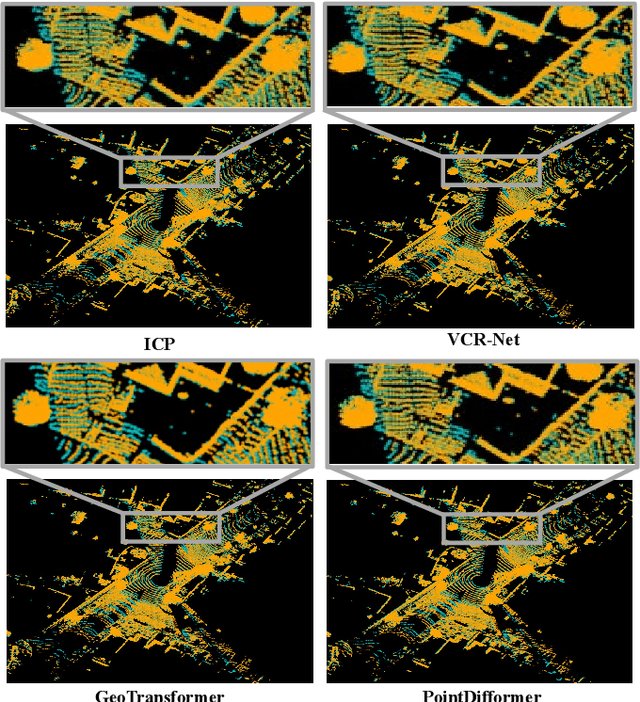

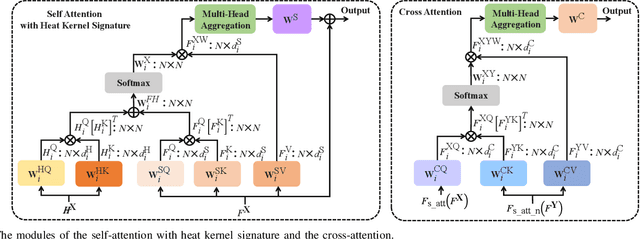

PointDifformer: Robust Point Cloud Registration With Neural Diffusion and Transformer

Apr 22, 2024

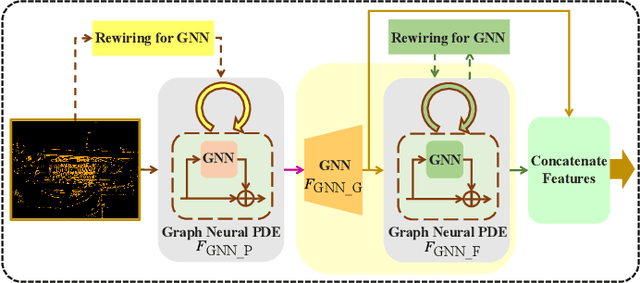

Abstract: Point cloud registration is a fundamental technique in 3-D computer vision with applications in graphics, autonomous driving, and robotics. However, registration tasks under challenging conditions, under which noise or perturbations are prevalent, can be difficult. We propose a robust point cloud registration approach that leverages graph neural partial differential equations (PDEs) and heat kernel signatures. Our method first uses graph neural PDE modules to extract high-dimensional features from point clouds by aggregating information from the 3-D point neighborhood, thereby enhancing the robustness of the feature representations. Then, we incorporate heat kernel signatures into an attention mechanism to efficiently obtain corresponding keypoints. Finally, a singular value decomposition (SVD) module with learnable weights is used to predict the transformation between two point clouds. Empirical experiments on a 3-D point cloud dataset demonstrate that our approach not only achieves state-of-the-art performance for point cloud registration but also exhibits better robustness to additive noise or 3-D shape perturbations.
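As a rough intuition for how a diffusion-style PDE on a graph aggregates neighbourhood information, the sketch below runs explicit Euler steps of a plain heat-like equation on a k-nearest-neighbour graph. This is a stand-in, not the learned graph neural PDE module from the paper: the helper names `knn_graph` and `diffuse`, the step size, and the toy points are assumptions introduced here.

```python
import math


def knn_graph(points, k):
    """Return, for each point, the indices of its k nearest neighbours."""
    neighbours = []
    for i, p in enumerate(points):
        dists = sorted((math.dist(p, q), j) for j, q in enumerate(points) if j != i)
        neighbours.append([j for _, j in dists[:k]])
    return neighbours


def diffuse(features, neighbours, step=0.2, n_steps=10):
    """Explicit Euler steps of dx_i/dt = mean_j(x_j) - x_i over graph neighbours."""
    x = [list(f) for f in features]
    for _ in range(n_steps):
        new_x = []
        for i, xi in enumerate(x):
            nbrs = neighbours[i]
            mean = [sum(x[j][d] for j in nbrs) / len(nbrs) for d in range(len(xi))]
            # Move each feature a fraction `step` of the way toward the local mean.
            new_x.append([a + step * (m - a) for a, m in zip(xi, mean)])
        x = new_x
    return x


# Toy cloud: four coplanar points plus one raised point carrying an outlier feature.
points = [(0, 0, 0), (1, 0, 0), (0, 1, 0), (1, 1, 0), (0.5, 0.5, 1)]
features = [[1.0], [1.0], [1.0], [1.0], [5.0]]
neighbours = knn_graph(points, k=2)
smoothed = diffuse(features, neighbours)
print(smoothed)
```

Because each update is a convex combination of a point's own feature and its neighbours' mean (for `step` between 0 and 1), the outlier feature is pulled toward its neighbourhood while consistent features are left unchanged, which is one simple way to see why diffusion can damp additive noise.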

PosDiffNet: Positional Neural Diffusion for Point Cloud Registration in a Large Field of View with Perturbations

Jan 06, 2024

Abstract: Point cloud registration is a crucial technique in 3D computer vision with a wide range of applications. However, this task can be challenging, particularly in large fields of view with dynamic objects, environmental noise, or other perturbations. To address this challenge, we propose a model called PosDiffNet. Our approach performs hierarchical registration based on window-level, patch-level, and point-level correspondence. We leverage a graph neural partial differential equation (PDE) based on Beltrami flow to obtain high-dimensional features and position embeddings for point clouds. We incorporate position embeddings into a Transformer module based on a neural ordinary differential equation (ODE) to efficiently represent patches within points. We employ the multi-level correspondence derived from the high feature similarity scores to facilitate alignment between point clouds. Subsequently, we use registration methods such as SVD-based algorithms to predict the transformation using corresponding point pairs. We evaluate PosDiffNet on several 3D point cloud datasets, verifying that it achieves state-of-the-art (SOTA) performance for point cloud registration in large fields of view with perturbations. The implementation code of experiments is available at https://github.com/AI-IT-AVs/PosDiffNet.
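The idea of deriving correspondences from high feature similarity scores can be illustrated with a small mutual-nearest-neighbour matcher. This is only a sketch under assumptions made here, not the PosDiffNet pipeline (see the linked repository for the authors' code): cosine similarity, the `min_score` threshold, the function names, and the toy feature vectors are all hypothetical.

```python
import math


def cosine(u, v):
    """Cosine similarity between two non-zero feature vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.hypot(*u) * math.hypot(*v))


def mutual_matches(src_feats, dst_feats, min_score=0.9):
    """Pairs (i, j) that are each other's best match with score >= min_score."""
    scores = [[cosine(u, v) for v in dst_feats] for u in src_feats]
    best_for_src = [max(range(len(dst_feats)), key=row.__getitem__) for row in scores]
    best_for_dst = [
        max(range(len(src_feats)), key=lambda i: scores[i][j])
        for j in range(len(dst_feats))
    ]
    return [
        (i, j)
        for i, j in enumerate(best_for_src)
        if best_for_dst[j] == i and scores[i][j] >= min_score
    ]


# Toy per-point features for a source and a target cloud (hypothetical values).
src = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
dst = [[0.0, 0.9, 0.1], [0.95, 0.05, 0.0], [0.5, 0.5, 0.5]]
matches = mutual_matches(src, dst)
print(matches)
```

Requiring agreement in both directions and a minimum score discards ambiguous pairs (here the third source point, whose best candidate is equally similar to every source feature), so only confident point pairs would be passed on to a transformation estimator such as an SVD-based solver.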
