Taijiang Mu

Generating Animatable 3D Cartoon Faces from Single Portraits

Jul 04, 2023

Abstract: With the rapid growth of virtual reality (VR) technology, there is a growing need for customized 3D avatars. However, traditional methods for 3D avatar modeling are either time-consuming or fail to retain similarity to the person being modeled. We present a novel framework to generate animatable 3D cartoon faces from a single portrait image. We first transfer an input real-world portrait to a stylized cartoon image with a StyleGAN. Then we propose a two-stage reconstruction method to recover the 3D cartoon face with detailed texture, which first makes a coarse estimation based on template models, and then refines the model by non-rigid deformation under landmark supervision. Finally, we propose a semantic-preserving face rigging method based on manually created templates and deformation transfer. Compared with prior art, qualitative and quantitative results show that our method performs better on accuracy, aesthetics, and similarity criteria. Furthermore, we demonstrate the capability of real-time facial animation of our 3D model.

VGPN: Voice-Guided Pointing Robot Navigation for Humans

Apr 03, 2020

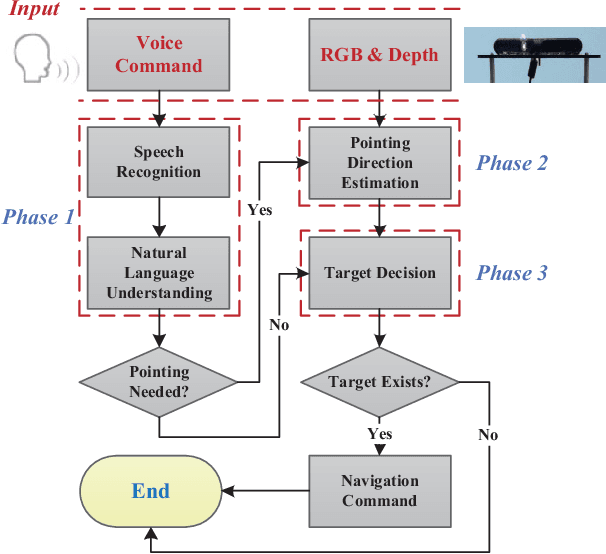

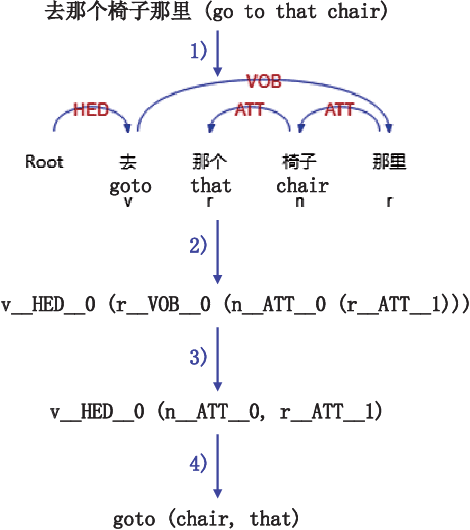

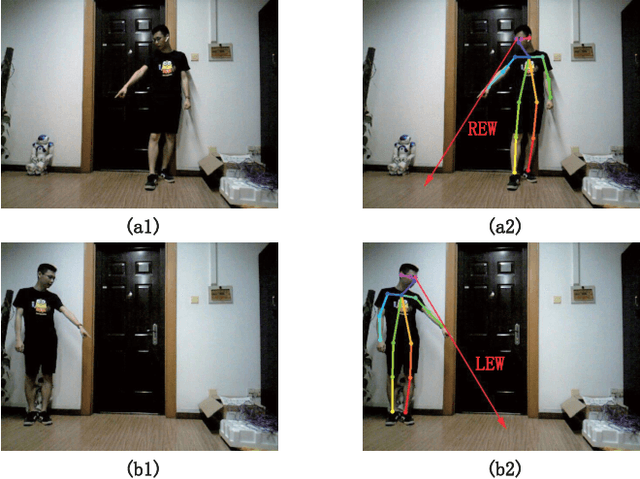

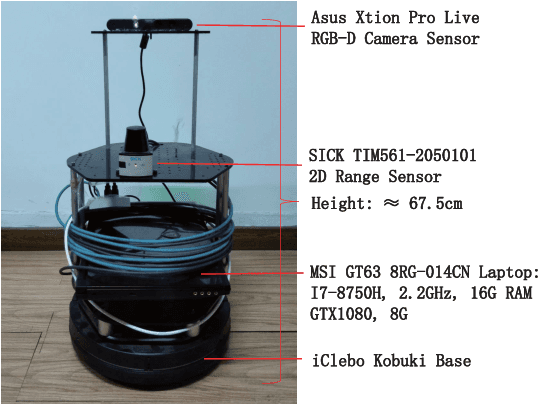

Abstract: Pointing gestures are widely used in robot navigation approaches nowadays. However, most approaches only use pointing gestures, and these have two major limitations. Firstly, they need to recognize pointing gestures all the time, which leads to long processing time and significant system overheads. Secondly, the user's pointing direction may not be very accurate, so the robot may go to an undesired place. To relieve these limitations, we propose a voice-guided pointing robot navigation approach named VGPN, and implement its prototype on a wheeled robot, TurtleBot 2. VGPN recognizes a pointing gesture only if voice information is insufficient for navigation. VGPN also uses voice information as a supplementary channel to help determine the target position of the user's pointing gesture. In the evaluation, we compare VGPN to the pointing-only navigation approach. The results show that VGPN effectively reduces the processing time cost when a pointing gesture is unnecessary, and improves user satisfaction with navigation accuracy.
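The decision rule described in this abstract (run gesture recognition only when speech alone does not determine the goal, and let speech refine a pointed target) can be illustrated with a minimal sketch. This is not the authors' implementation: `VoiceCommand`, `choose_target`, and the `recognize_pointing` and `refine` callbacks are hypothetical names introduced here, and the abstract does not say how voice sufficiency is actually decided.

```python
from dataclasses import dataclass
from typing import Callable, Optional, Tuple

Point = Tuple[float, float]


@dataclass
class VoiceCommand:
    """Hypothetical parsed speech result (an assumption, not from the paper)."""
    target: Optional[Point] = None  # set when speech alone names a goal position
    hint: Optional[str] = None      # e.g. an object word that can refine a pointed target


def choose_target(
    cmd: VoiceCommand,
    recognize_pointing: Callable[[], Point],
    refine: Callable[[Point, str], Point],
) -> Point:
    """Pick a navigation goal, calling the gesture recognizer only when needed."""
    if cmd.target is not None:
        # Voice information is sufficient: skip gesture recognition entirely.
        return cmd.target
    # Voice is insufficient: fall back to the (costly) pointing recognizer.
    pointed = recognize_pointing()
    if cmd.hint is not None:
        # Use the voice hint as a supplementary channel to adjust the pointed target.
        return refine(pointed, cmd.hint)
    return pointed
```

Passing the recognizer as a callback keeps the expensive gesture step lazy, which mirrors the processing-time saving the abstract reports for cases where pointing is unnecessary.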
