Susan G. Hill

SCOUT: A Situated and Multi-Modal Human-Robot Dialogue Corpus

Nov 19, 2024

Abstract:We introduce the Situated Corpus Of Understanding Transactions (SCOUT), a multi-modal collection of human-robot dialogue in the task domain of collaborative exploration. The corpus was constructed from multiple Wizard-of-Oz experiments where human participants gave verbal instructions to a remotely-located robot to move and gather information about its surroundings. SCOUT contains 89,056 utterances and 310,095 words from 278 dialogues averaging 320 utterances per dialogue. The dialogues are aligned with the multi-modal data streams available during the experiments: 5,785 images and 30 maps. The corpus has been annotated with Abstract Meaning Representation and Dialogue-AMR to identify the speaker's intent and meaning within an utterance, and with Transactional Units and Relations to track relationships between utterances to reveal patterns of the Dialogue Structure. We describe how the corpus and its annotations have been used to develop autonomous human-robot systems and enable research in open questions of how humans speak to robots. We release this corpus to accelerate progress in autonomous, situated, human-robot dialogue, especially in the context of navigation tasks where details about the environment need to be discovered.

* 14 pages, 7 figures

Laying Down the Yellow Brick Road: Development of a Wizard-of-Oz Interface for Collecting Human-Robot Dialogue

Oct 17, 2017

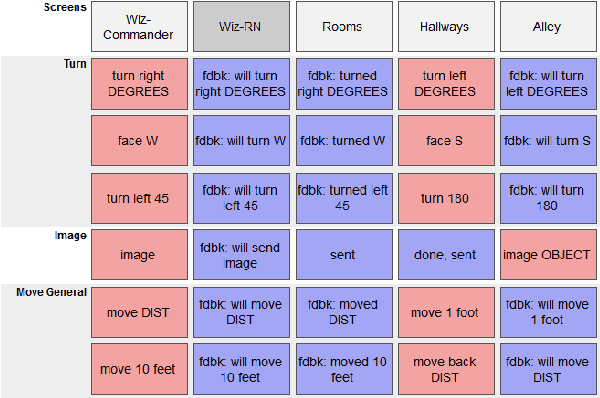

Abstract:We describe the adaptation and refinement of a graphical user interface designed to facilitate a Wizard-of-Oz (WoZ) approach to collecting human-robot dialogue data. The data collected will be used to develop a dialogue system for robot navigation. Building on an interface previously used in the development of dialogue systems for virtual agents and video playback, we add templates with open parameters which allow the wizard to quickly produce a wide variety of utterances. Our research demonstrates that this approach to data collection is viable as an intermediate step in developing a dialogue system for physical robots in remote locations from their users - a domain in which the human and robot need to regularly verify and update a shared understanding of the physical environment. We show that our WoZ interface and the fixed set of utterances and templates therein provide for a natural pace of dialogue with good coverage of the navigation domain.

Applying the Wizard-of-Oz Technique to Multimodal Human-Robot Dialogue

Mar 10, 2017

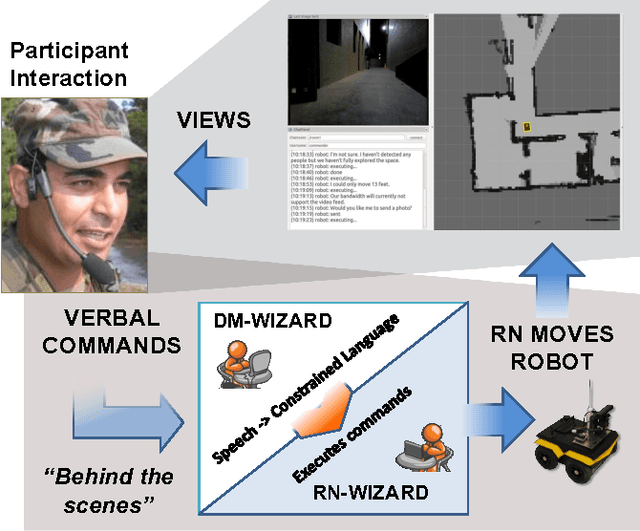

Abstract:Our overall program objective is to provide more natural ways for soldiers to interact and communicate with robots, much like how soldiers communicate with other soldiers today. We describe how the Wizard-of-Oz (WOz) method can be applied to multimodal human-robot dialogue in a collaborative exploration task. While the WOz method can help design robot behaviors, traditional approaches place the burden of decisions on a single wizard. In this work, we consider two wizards to stand in for robot navigation and dialogue management software components. The scenario used to elicit data is one in which a human-robot team is tasked with exploring an unknown environment: a human gives verbal instructions from a remote location and the robot follows them, clarifying possible misunderstandings as needed via dialogue. We found the division of labor between wizards to be workable, which holds promise for future software development.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge