Sung Min Lee

Deep Learning-Based Solvability of Underdetermined Inverse Problems in Medical Imaging

Jan 07, 2020

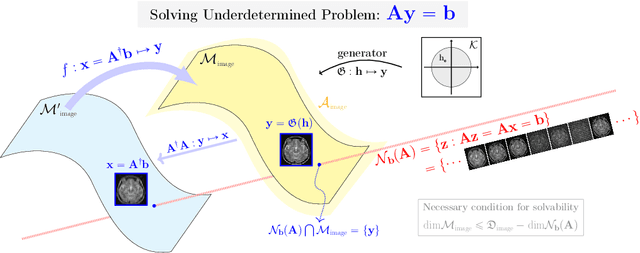

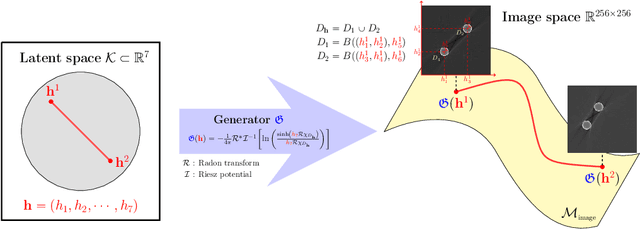

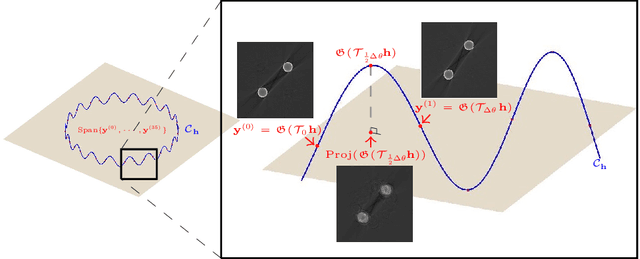

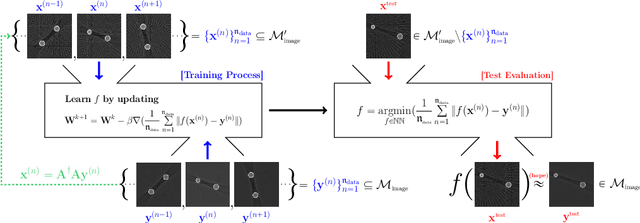

Abstract: Recently, with the significant developments in deep learning techniques, solving underdetermined inverse problems has become one of the major concerns in the medical imaging domain. Typical examples include undersampled magnetic resonance imaging, interior tomography, and sparse-view computed tomography, where deep learning techniques have achieved excellent performance. Although deep learning methods appear to overcome the limitations of existing mathematical methods when handling various underdetermined problems, there is a lack of rigorous mathematical foundations that would allow us to elucidate the reasons for the remarkable performance of deep learning methods. This study focuses on learning the causal relationship regarding the structure of the training data suitable for deep learning, to solve highly underdetermined inverse problems. We observe that a majority of the problems of solving underdetermined linear systems in medical imaging are highly non-linear. Furthermore, we analyze whether a desired reconstruction map can be learned from the training data and the underdetermined system.
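To make the term "underdetermined" concrete, here is a minimal sketch, not taken from the paper, of a toy system with one measurement and two unknowns. The names `measure`, `minimum_norm_solution`, `image_1`, and `image_2` are hypothetical. Two different "images" give the same measurement, and the classical minimum-norm solution recovers neither, which is why extra prior information (for example, from training data) is needed to pick a unique answer.

```python
# Illustrative toy example (an assumption, not the paper's setup):
# a single linear measurement b = a . x of a two-pixel "image" x.

def measure(a, x):
    """Apply the one-row forward operator a to the image x."""
    return sum(ai * xi for ai, xi in zip(a, x))

def minimum_norm_solution(a, b):
    """Minimum-norm solution of a . x = b for a single row a: x = a * b / (a . a)."""
    scale = b / sum(ai * ai for ai in a)
    return [ai * scale for ai in a]

a = [1.0, 1.0]          # forward operator: sum of the two pixels
image_1 = [2.0, 0.0]    # first candidate image
image_2 = [0.0, 2.0]    # second candidate image

b = measure(a, image_1)
same_data = measure(a, image_2) == b   # both images explain the data equally well
x_mn = minimum_norm_solution(a, b)     # consistent with the data, but matches neither image

print(same_data, x_mn)
```

The minimum-norm answer is consistent with the measurement but is simply the solution of smallest Euclidean norm. Nothing in the data alone distinguishes it from `image_1` or `image_2`.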

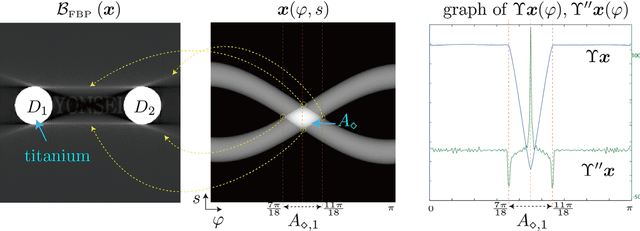

CT sinogram-consistency learning for metal-induced beam hardening correction

Jan 12, 2018

Abstract: This paper proposes a sinogram consistency learning method to deal with beam-hardening related artifacts in polychromatic computerized tomography (CT). The presence of highly attenuating materials in the scan field causes an inconsistent sinogram, which does not match the range space of the Radon transform. When the mismatched data are entered into the range space during CT reconstruction, streaking and shading artifacts are generated owing to the inherent nature of the inverse Radon transform. The proposed learning method aims to repair inconsistent sinograms by removing the primary metal-induced beam-hardening factors along the metal trace in the sinogram. Taking account of the fundamental difficulty in obtaining sufficient training data in a medical environment, the learning method is designed to use simulated training data, and a patient-type specific learning model is used to simplify the learning process. The feasibility of the proposed method is investigated using a dataset consisting of real CT scans of pelvises containing hip prostheses. The anatomical areas in the training and test data are different, in order to demonstrate that the proposed method extracts the beam-hardening features selectively. The results show that our method successfully corrects sinogram inconsistency by extracting beam-hardening sources by means of deep learning. This paper proposes, for the first time, a deep learning method of sinogram correction for beam-hardening reduction in CT. Conventional methods for beam-hardening reduction are based on regularization and have the fundamental drawback of not being able to easily use manifold CT images, while a deep learning approach has the potential to do so.
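One way to see what an "inconsistent sinogram" means is the zeroth-order Helgason-Ludwig condition: for data in the range of the Radon transform, the integral of each projection over the detector coordinate is the same at every angle. The sketch below is an illustration under assumed values, not the paper's method. It uses two hypothetical unit disks, a made-up two-energy spectrum, and the helper names `chord` and `zeroth_moment`. Monochromatic data pass the check, while polychromatic (beam-hardened) data fail it.

```python
import math

def chord(s, center_proj, radius):
    """Length of the ray at detector offset s through a disk whose center projects to center_proj."""
    d2 = radius * radius - (s - center_proj) ** 2
    return 2.0 * math.sqrt(d2) if d2 > 0.0 else 0.0

def zeroth_moment(theta, attenuations, weights, centers,
                  radius=1.0, half_width=4.0, n=8000):
    """Midpoint-rule integral over s of the log-transformed projection at angle theta."""
    nx, ny = math.cos(theta), math.sin(theta)
    h = 2.0 * half_width / n
    total = 0.0
    for i in range(n):
        s = -half_width + (i + 0.5) * h
        # Total path length through all disks along this ray.
        length = sum(chord(s, cx * nx + cy * ny, radius) for cx, cy in centers)
        # Beer-Lambert law summed over the (assumed) energy spectrum.
        transmitted = sum(w * math.exp(-mu * length)
                          for w, mu in zip(weights, attenuations))
        total += -math.log(transmitted) * h
    return total

centers = [(-2.0, 0.0), (2.0, 0.0)]   # two dense disks (hypothetical geometry)

# Monochromatic beam: one energy, attenuation 2.0 (assumed value).
mono_0 = zeroth_moment(0.0, [2.0], [1.0], centers)
mono_90 = zeroth_moment(math.pi / 2, [2.0], [1.0], centers)

# Polychromatic beam: two equally weighted energies (assumed values).
poly_0 = zeroth_moment(0.0, [1.0, 3.0], [0.5, 0.5], centers)
poly_90 = zeroth_moment(math.pi / 2, [1.0, 3.0], [0.5, 0.5], centers)

print(mono_0, mono_90)   # agree up to quadrature error
print(poly_0, poly_90)   # differ: the sinogram left the Radon range
```

At 90 degrees the two disks overlap on the detector, so rays pass through twice as much dense material. Because the polychromatic log-transform is nonlinear in path length, the moment changes with angle. This angle dependence is the kind of mismatch that produces streaking between metallic objects.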

Automatic Estimation of Fetal Abdominal Circumference from Ultrasound Images

Nov 02, 2017

Abstract: Ultrasound diagnosis is routinely used in obstetrics and gynecology for fetal biometry, and owing to its time-consuming process, there has been a great demand for automatic estimation. However, the automated analysis of ultrasound images is complicated because they are patient-specific, operator-dependent, and machine-specific. Among various types of fetal biometry, the accurate estimation of abdominal circumference (AC) is especially difficult to perform automatically because the abdomen has low contrast against surroundings, non-uniform contrast, and irregular shape compared to other parameters. We propose a method for the automatic estimation of the fetal AC from 2D ultrasound data through a specially designed convolutional neural network (CNN), which takes account of doctors' decision process, anatomical structure, and the characteristics of the ultrasound image. The proposed method uses a CNN to classify ultrasound images (stomach bubble, amniotic fluid, and umbilical vein) and the Hough transformation for measuring AC. We test the proposed method using clinical ultrasound data acquired from 56 pregnant women. Experimental results show that, with relatively small training samples, the proposed CNN provides sufficient classification results for AC estimation through the Hough transformation. The proposed method automatically estimates AC from ultrasound images. The method is quantitatively evaluated, and shows stable performance in most cases, even for ultrasound images deteriorated by shadowing artifacts. In experiments for our acceptance check, the accuracies are 0.809 and 0.771 with expert 1 and expert 2, respectively, while the accuracy between the two experts is 0.905. However, for cases of an oversized fetus, when the amniotic fluid is not observed or the abdominal area is distorted, the method could not correctly estimate AC.
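The Hough-transformation step can be sketched in a simplified form. The example below is an assumption-laden illustration, not the authors' implementation. It fits a circle (a real abdomen outline would more plausibly need an ellipse), the edge points are synthetic, and the function name `hough_circle` is hypothetical. Each edge point votes for every (center, radius) triple it is consistent with, and the circumference is read off the winning radius.

```python
import math
from collections import Counter

def hough_circle(points, width, height, r_min, r_max):
    """Brute-force circle Hough transform over integer centers and radii.

    Each edge point votes for (cx, cy, r), where r is its rounded distance
    to the candidate center; the triple with the most votes wins.
    """
    votes = Counter()
    for cx in range(width):
        for cy in range(height):
            for px, py in points:
                r = round(math.hypot(px - cx, py - cy))
                if r_min <= r <= r_max:
                    votes[(cx, cy, r)] += 1
    return votes.most_common(1)[0][0]

# Synthetic edge points on a circle (stand-in for a detected abdominal boundary).
true_cx, true_cy, true_r = 20, 15, 8
points = [
    (true_cx + true_r * math.cos(2 * math.pi * k / 36),
     true_cy + true_r * math.sin(2 * math.pi * k / 36))
    for k in range(36)
]

cx, cy, r = hough_circle(points, width=40, height=30, r_min=4, r_max=12)
circumference = 2 * math.pi * r   # in pixels; a pixel spacing would convert this to mm

print((cx, cy, r), circumference)
```

Voting makes the estimate tolerant of missing boundary segments, which is one plausible reason such a transform suits ultrasound images with shadowing artifacts.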

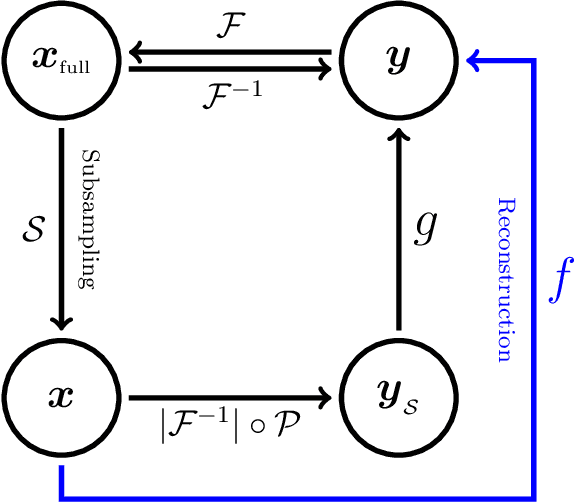

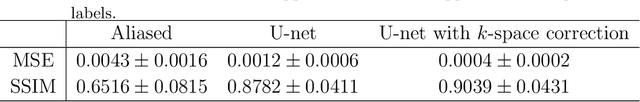

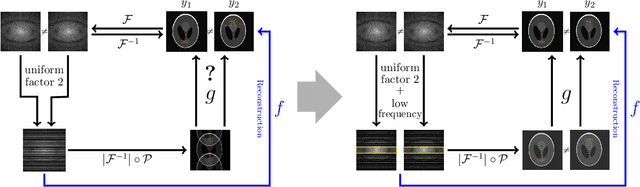

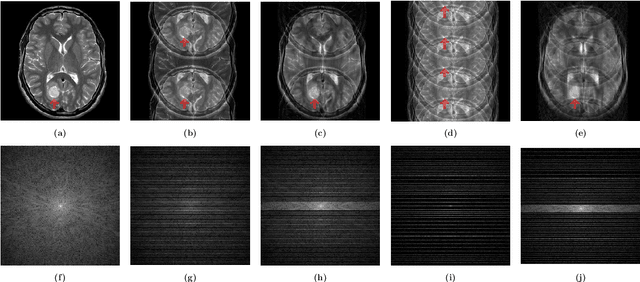

Deep learning for undersampled MRI reconstruction

Sep 11, 2017

Abstract: This paper presents a deep learning method for faster magnetic resonance imaging (MRI) by reducing k-space data with sub-Nyquist sampling strategies and provides a rationale for why the proposed approach works well. Uniform subsampling is used in the time-consuming phase-encoding direction to capture high-resolution image information, while permitting the image-folding problem dictated by the Poisson summation formula. To deal with the localization uncertainty due to image folding, very few low-frequency k-space data are added. Training the deep learning net involves input and output images that are pairs of Fourier transforms of the subsampled and fully sampled k-space data. Numerous experiments show the remarkable performance of the proposed method; with only 29% of the k-space data, it generates high-quality images comparable to standard MRI reconstruction with fully sampled data.
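The image folding caused by uniform subsampling can be reproduced in one dimension. The following is a minimal sketch under assumed values (an 8-sample signal, subsampling factor 2, and hypothetical helper names `dft`, `idft`, and `fold_by_uniform_subsampling`). It is not the paper's pipeline and omits the added low-frequency lines. Keeping every second k-space sample and zero-filling the rest yields the average of the signal and a copy shifted by half the field of view.

```python
import cmath

def dft(x):
    """Naive discrete Fourier transform (image -> k-space)."""
    n = len(x)
    return [sum(x[m] * cmath.exp(-2j * cmath.pi * k * m / n) for m in range(n))
            for k in range(n)]

def idft(X):
    """Naive inverse discrete Fourier transform (k-space -> image)."""
    n = len(X)
    return [sum(X[k] * cmath.exp(2j * cmath.pi * k * m / n) for k in range(n)) / n
            for m in range(n)]

def fold_by_uniform_subsampling(x, factor=2):
    """Keep every `factor`-th k-space sample, zero-fill the rest, and reconstruct."""
    X = dft(x)
    kept = [X[k] if k % factor == 0 else 0 for k in range(len(X))]
    return [v.real for v in idft(kept)]

signal = [0.0, 1.0, 3.0, 1.0, 0.0, 0.0, 0.0, 0.0]   # object occupies the left half
folded = fold_by_uniform_subsampling(signal, factor=2)

# Folding predicted for factor 2: average of the signal and its half-FOV shift.
half = len(signal) // 2
predicted = [0.5 * (signal[m] + signal[(m + half) % len(signal)])
             for m in range(len(signal))]

print([round(v, 6) for v in folded])
print(predicted)
```

From the folded result alone, one cannot tell whether the object sat in the left half, the right half, or both. This is the localization uncertainty that the few added low-frequency samples are meant to resolve.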
