Souvik Hazra

Cross-modal Learning of Graph Representations using Radar Point Cloud for Long-Range Gesture Recognition

Mar 31, 2022

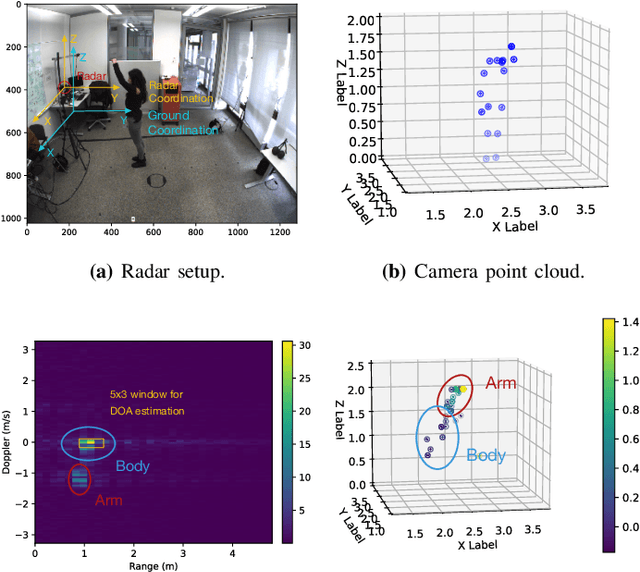

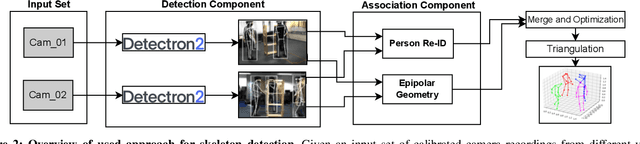

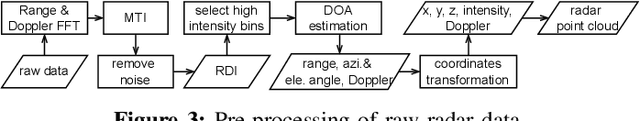

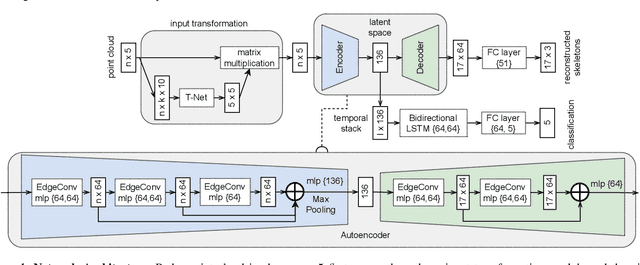

Abstract: Gesture recognition is one of the most intuitive forms of interaction and has attracted particular attention in human-computer interaction. Radar sensors have several intrinsic advantages: they work in low illumination and harsh weather conditions, and they are low-cost and compact, which makes them well suited to gesture recognition. However, most of the literature focuses on solutions with a limited range of less than one meter. We propose a novel architecture for long-range (1 m to 2 m) gesture recognition that leverages point cloud-based cross-learning from a camera point cloud to a 60-GHz FMCW radar point cloud, which allows the model to learn better representations while suppressing noise. We use a variant of Dynamic Graph CNN (DGCNN) for the cross-learning, which lets us model relationships between points at both local and global levels, and we employ a Bi-LSTM network to model the temporal dynamics. In the experimental results section, we demonstrate our model's overall accuracy of 98.4% for five gestures and its generalization capability.
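To illustrate the kind of graph construction a DGCNN-style model relies on, the sketch below builds a k-nearest-neighbor graph over a point cloud and forms per-edge features of the form (x_i, x_j - x_i), followed by a channel-wise max over each point's edges. It is a minimal sketch and not the paper's implementation: the function names, the random stand-in point cloud, and the value of `k` are assumptions, and the learned MLP that DGCNN applies to each edge feature before aggregation is omitted.

```python
import math
import random

def knn_indices(points, k):
    """For each point, return the indices of its k nearest other points."""
    result = []
    for i, p in enumerate(points):
        others = [j for j in range(len(points)) if j != i]
        others.sort(key=lambda j: math.dist(p, points[j]))
        result.append(others[:k])
    return result

def edge_features(points, k):
    """Build per-edge features (x_i, x_j - x_i) over the k-NN graph."""
    feats = []
    for i, nbrs in enumerate(knn_indices(points, k)):
        xi = points[i]
        feats.append(
            [list(xi) + [b - a for a, b in zip(xi, points[j])] for j in nbrs]
        )
    return feats

def max_pool(feats):
    """Channel-wise max over each point's edges (a symmetric aggregation)."""
    return [[max(col) for col in zip(*edges)] for edges in feats]

# Hypothetical stand-in for one radar frame: 32 random 3-D points.
random.seed(0)
cloud = [[random.gauss(0, 1) for _ in range(3)] for _ in range(32)]
feats = edge_features(cloud, k=4)   # 32 points x 4 edges x 6 channels
pooled = max_pool(feats)            # 32 points x 6 channels
```

In a dynamic graph network, the neighbor search is repeated on the features produced by each layer, so the graph changes with depth. A per-frame descriptor obtained this way could then be passed to a recurrent model such as a Bi-LSTM to capture the temporal dynamics.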

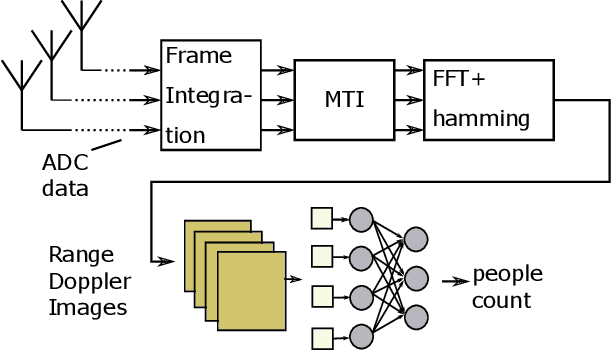

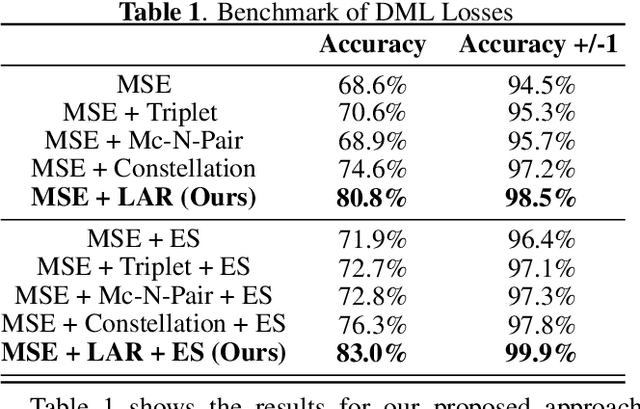

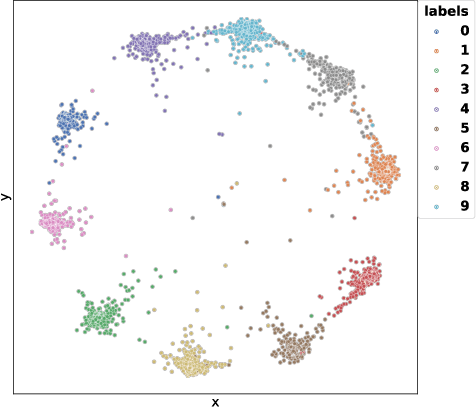

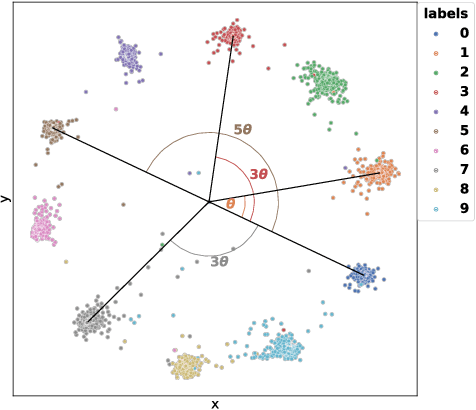

Label-Aware Ranked Loss for robust People Counting using Automotive in-cabin Radar

Oct 12, 2021

Abstract: In this paper, we introduce the Label-Aware Ranked loss, a novel metric loss function. Compared to state-of-the-art Deep Metric Learning losses, this function takes advantage of the ranked ordering of the labels in regression problems. To this end, we first show that the loss is minimised when data points of different labels are ranked and laid out at uniform angles from each other in the embedding space. Then, to measure its performance, we apply the proposed loss to a people-counting regression task with a short-range radar in a challenging scenario, namely a vehicle cabin. The introduced approach improves the accuracy and the neighboring-labels accuracy to as much as 83.0% and 99.9%, an increase of 6.7% and 2.1% over state-of-the-art methods, respectively.
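The abstract does not define the two reported metrics, so the following is only a sketch under an assumption: "accuracy" is taken to mean an exact match between predicted and true people count, and "neighboring labels accuracy" is taken to mean a prediction within one count of the true label. The function name, the `tolerance` parameter, and the example labels are hypothetical.

```python
def count_accuracy(y_true, y_pred, tolerance=0):
    """Fraction of predictions within `tolerance` counts of the true label.

    tolerance=0 gives exact-match accuracy; tolerance=1 gives the assumed
    neighboring-labels accuracy (off-by-one predictions count as correct).
    """
    if len(y_true) != len(y_pred) or not y_true:
        raise ValueError("inputs must be non-empty and of equal length")
    hits = sum(abs(t - p) <= tolerance for t, p in zip(y_true, y_pred))
    return hits / len(y_true)

# Hypothetical labels: number of people in the cabin per sample.
y_true = [0, 1, 2, 3, 4, 5]
y_pred = [0, 1, 3, 3, 2, 5]

exact = count_accuracy(y_true, y_pred)                  # 4 of 6 correct
neighbor = count_accuracy(y_true, y_pred, tolerance=1)  # 5 of 6 correct
```

A tolerance-based metric of this kind only makes sense because the labels are ordered, which is the same property the ranked loss is described as exploiting.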