Sourav De

Convex Clustering Redefined: Robust Learning with the Median of Means Estimator

Nov 12, 2025

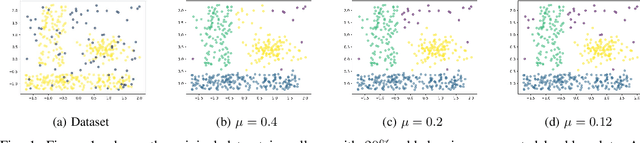

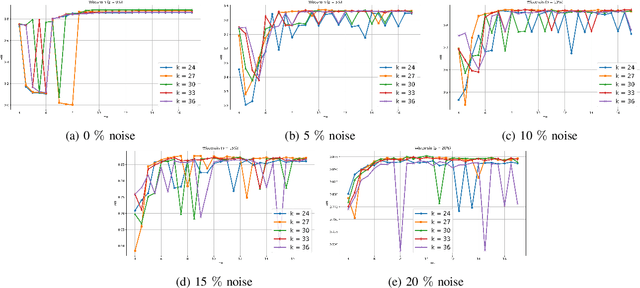

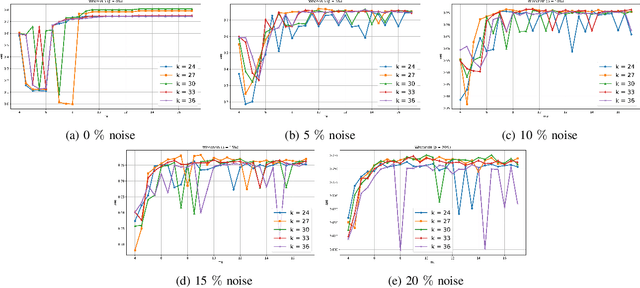

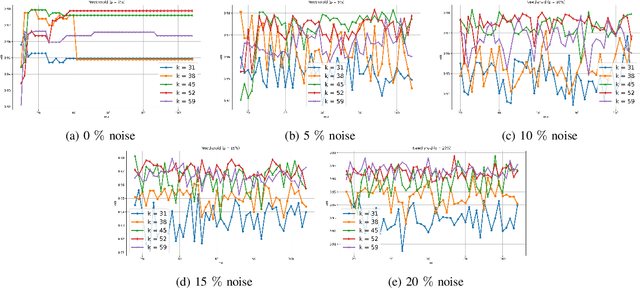

Abstract: Clustering approaches that use convex loss functions have recently attracted growing interest for forming compact data clusters. Although classical methods such as k-means and its many variants remain widely used, they all require the number of clusters k as input, and many are notably sensitive to initialization. Convex clustering offers a more stable alternative by formulating clustering as a convex optimization problem, which guarantees a unique global solution. However, it struggles with high-dimensional data, especially in the presence of noise and outliers. In addition, strong fusion regularization, controlled by the tuning parameter, can hinder effective cluster formation within the convex clustering framework. To overcome these challenges, we introduce a robust approach that integrates convex clustering with the Median of Means (MoM) estimator, yielding an outlier-resistant and efficient clustering framework that does not require prior knowledge of the number of clusters. By combining the robustness of MoM with the stability of convex clustering, our method improves both performance and efficiency, especially on large-scale datasets. Theoretical analysis establishes weak consistency under specific conditions, and experiments on synthetic and real-world datasets show that the method outperforms existing approaches.
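The Median of Means estimator that the method builds on can be sketched in a few lines. This is a minimal illustration, not the authors' implementation: the function name `median_of_means`, the random shuffling before blocking, and the coordinate-wise median used to aggregate multivariate block means are assumptions (other MoM variants aggregate differently, for example with a geometric median), and the way MoM is combined with the convex clustering objective is not reproduced here.

```python
import numpy as np


def median_of_means(x: np.ndarray, n_blocks: int, seed: int = 0) -> np.ndarray:
    """Median-of-Means estimate of the mean of the rows of x.

    Sketch under assumptions: rows are shuffled, split into n_blocks
    roughly equal blocks, each block is averaged, and the coordinate-wise
    median of the block means is returned.
    """
    if not 1 <= n_blocks <= len(x):
        raise ValueError("n_blocks must be between 1 and the number of samples")
    rng = np.random.default_rng(seed)
    perm = rng.permutation(len(x))                 # random assignment of rows to blocks
    blocks = np.array_split(x[perm], n_blocks)     # near-equal block sizes
    block_means = np.stack([b.mean(axis=0) for b in blocks])
    return np.median(block_means, axis=0)          # robust aggregation of block means
```

With 95 inliers equal to 1 and 5 outliers equal to 1000 (as a column vector), the plain mean is 50.95. With 20 blocks, at most 5 blocks can contain an outlier, so the median of the 20 block means comes from clean blocks and equals 1.0. This protection holds only while fewer than half of the blocks are contaminated, which is why the number of blocks is chosen relative to the expected number of outliers.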

CIMulator: A Comprehensive Simulation Platform for Computing-In-Memory Circuit Macros with Low Bit-Width and Real Memory Materials

Jun 26, 2023

Abstract: This paper presents a simulation platform, CIMulator, for quantifying the efficacy of various synaptic devices in neuromorphic accelerators across different neural network architectures. Nonvolatile memory devices, such as resistive random-access memory (RRAM) and ferroelectric field-effect transistors (FeFETs), as well as volatile static random-access memory (SRAM) devices, can be selected as synaptic devices. A multilayer perceptron and convolutional neural networks (CNNs), namely LeNet-5, VGG-16, and a custom CNN named C4W-1, are simulated to evaluate the effects of these synaptic devices on training and inference outcomes. The datasets used in the simulations are MNIST, CIFAR-10, and a white blood cell dataset. By applying batch normalization and appropriate optimizers during training, neuromorphic systems with very low-bit-width or binary weights can achieve pattern recognition rates approaching software-based CNN accuracy. We also introduce spiking neural networks with RRAM-based synaptic devices for recognizing MNIST handwritten digits.
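To make "very low-bit-width or binary weights" concrete, the following sketch maps real-valued weights onto a small number of evenly spaced levels, as a stand-in for a synaptic device with a limited number of conductance states. It is a hypothetical illustration, not part of CIMulator: the function `quantize_weights`, the uniform level spacing, and the symmetric range set by the largest weight magnitude are assumptions, and real device effects such as nonlinear or asymmetric conductance updates are ignored.

```python
import numpy as np


def quantize_weights(w: np.ndarray, bits: int) -> np.ndarray:
    """Map weights onto 2**bits evenly spaced levels in [-w_max, w_max].

    Hypothetical stand-in for programming weights into a device with a
    limited number of conductance states; bits=1 gives binary weights.
    """
    if bits < 1:
        raise ValueError("bits must be >= 1")
    w_max = float(np.max(np.abs(w)))
    if w_max == 0.0:
        return np.zeros_like(w)
    levels = np.linspace(-w_max, w_max, 2 ** bits)
    # For every weight, pick the index of the nearest available level.
    idx = np.abs(w[..., None] - levels).argmin(axis=-1)
    return levels[idx]
```

For `w = [-0.9, -0.2, 0.1, 0.6, 1.0]`, `bits=1` yields the binary weights `[-1, -1, 1, 1, 1]` and `bits=2` yields the four-level weights `[-1, -1/3, 1/3, 1/3, 1]`.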

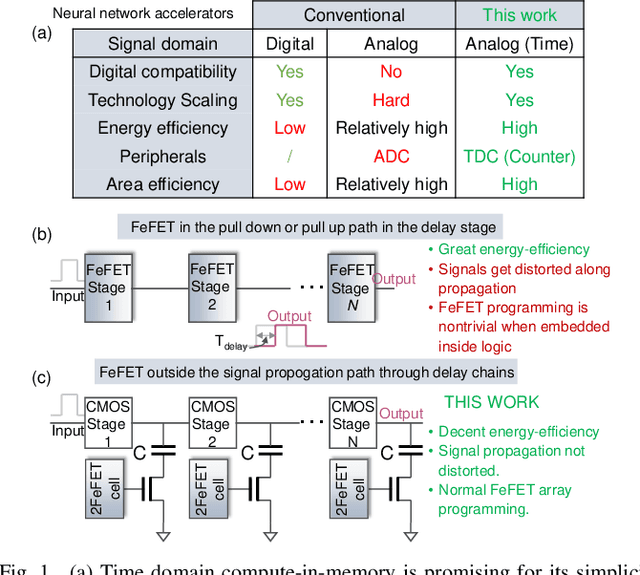

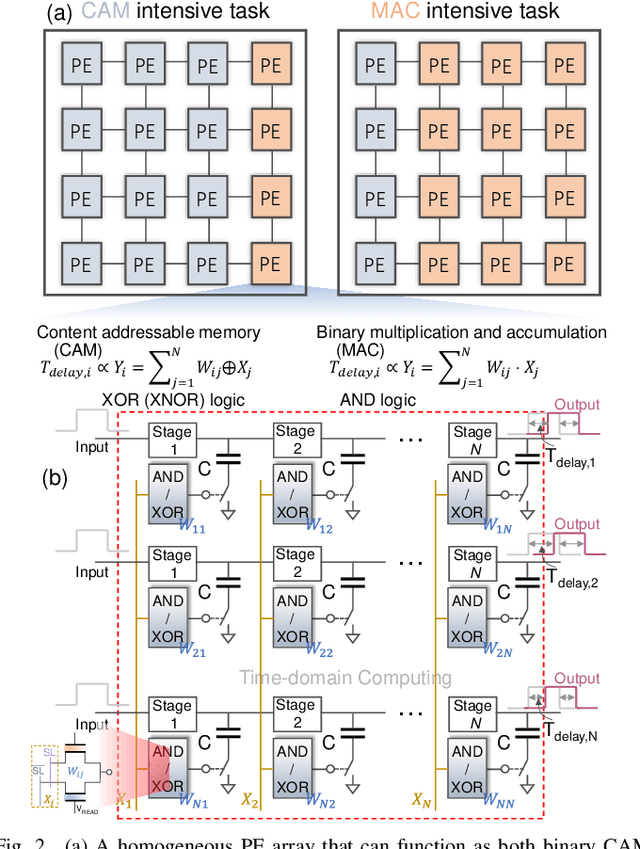

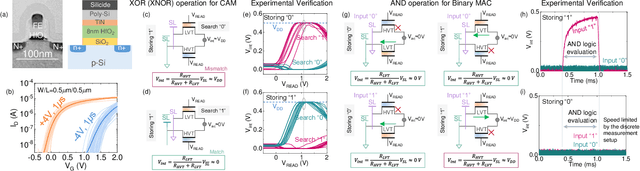

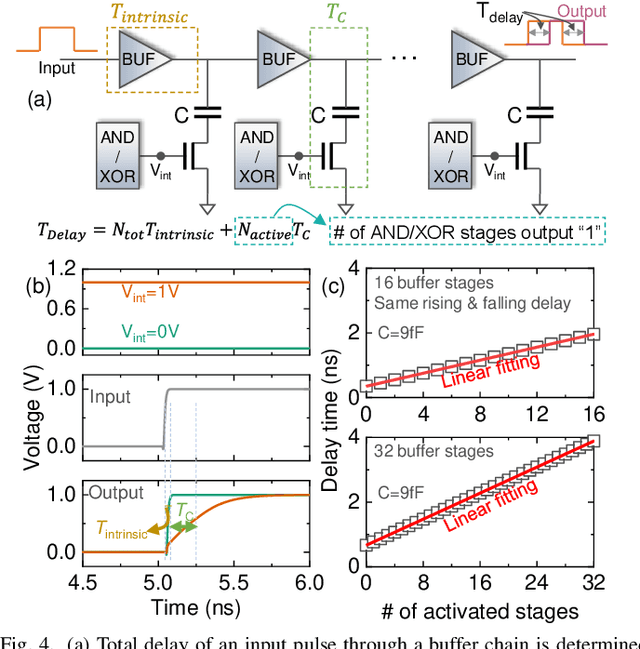

A Homogeneous Processing Fabric for Matrix-Vector Multiplication and Associative Search Using Ferroelectric Time-Domain Compute-in-Memory

Sep 24, 2022

Abstract: In this work, we propose a ferroelectric FET (FeFET) time-domain compute-in-memory (TD-CiM) array as a homogeneous processing fabric for binary multiply-accumulate (MAC) and content-addressable memory (CAM) operations. We demonstrate: i) that the XOR (XNOR)/AND logic function can be realized with a single cell composed of two FeFETs connected in series; ii) a two-phase computation in an inverter chain in which each stage uses the XOR/AND cell to control its capacitor loading, so that the results of binary MAC and CAM are reflected in the delay of the chain's output signal, illustrating full digital compatibility; iii) comprehensive theoretical and experimental validation of the proposed 2FeFET cell and inverter delay chains, including their robustness against FeFET variation; and iv) an application of the homogeneous processing fabric to hyperdimensional computing, showing dynamic, fine-grained allocation of binary MAC and CAM resources across tasks with different demands.
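The delay-based readout can be illustrated with a purely behavioral model that counts how many stages switch in their load capacitor. This is a sketch under stated assumptions, not a circuit model of the proposed array: the names `chain_delay` and `decode`, the per-stage delays `t_stage` and `t_load`, and the convention that a cell output of 1 adds load (so the CAM mode counts mismatches) are hypothetical, and the two-phase operation and FeFET variation are not modeled.

```python
from typing import Sequence


def chain_delay(inputs: Sequence[int], stored: Sequence[int], mode: str,
                t_stage: float = 1.0, t_load: float = 0.5) -> float:
    """Behavioral delay of an inverter chain gated by XOR or AND cells.

    Assumption: a stage whose cell output is 1 switches in its load
    capacitor, adding t_load to that stage's intrinsic delay t_stage.
    """
    if len(inputs) != len(stored):
        raise ValueError("inputs and stored must have the same length")
    if mode == "cam":    # XOR cells: number of mismatches (Hamming distance)
        active = sum(a ^ b for a, b in zip(inputs, stored))
    elif mode == "mac":  # AND cells: binary multiply-accumulate (popcount of a AND b)
        active = sum(a & b for a, b in zip(inputs, stored))
    else:
        raise ValueError("mode must be 'cam' or 'mac'")
    return len(inputs) * t_stage + active * t_load


def decode(delay: float, n_stages: int,
           t_stage: float = 1.0, t_load: float = 0.5) -> int:
    """Recover the number of loaded stages from a measured chain delay."""
    return round((delay - n_stages * t_stage) / t_load)
```

With stored bits `[1, 0, 1, 1]` and an all-ones query, the CAM mode sees one mismatch (delay 4.5 with the default parameters) and the MAC mode sees three active AND terms (delay 5.5); `decode` recovers 1 and 3 from those delays, so the same chain serves both operations and only the cell function differs.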

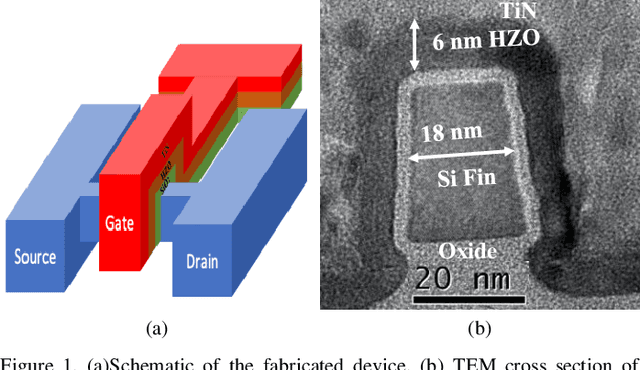

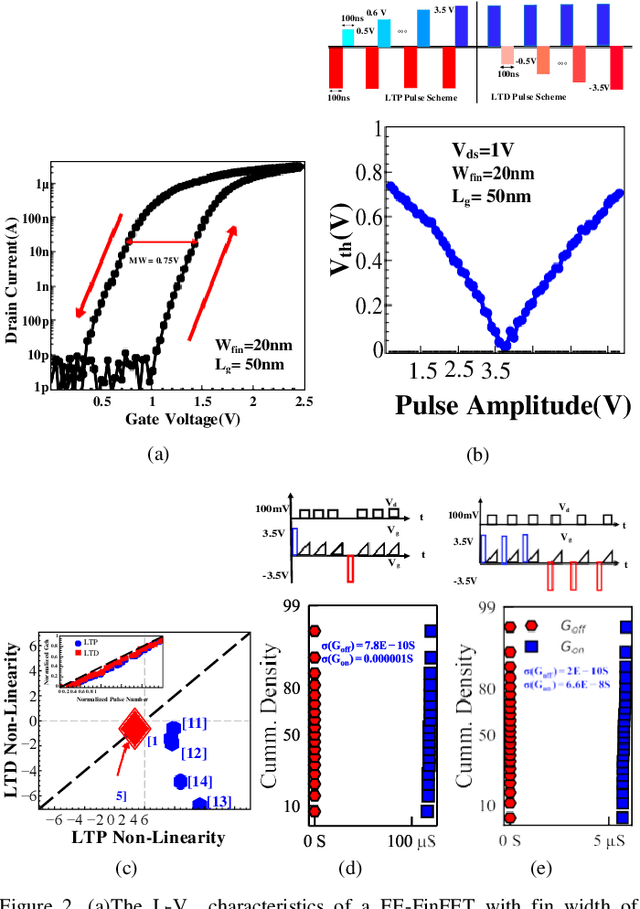

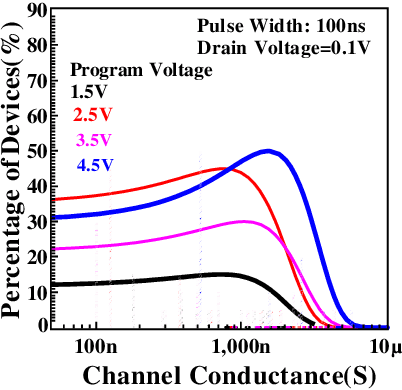

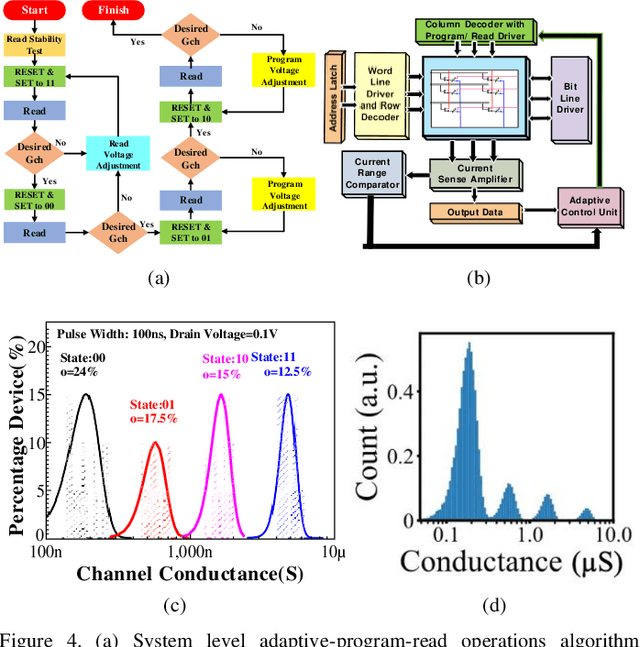

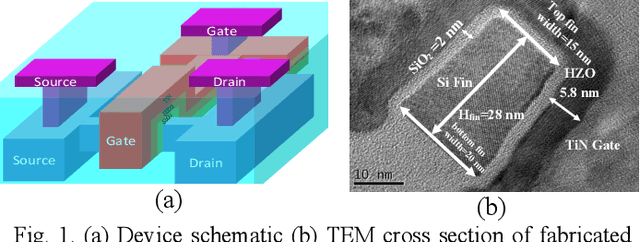

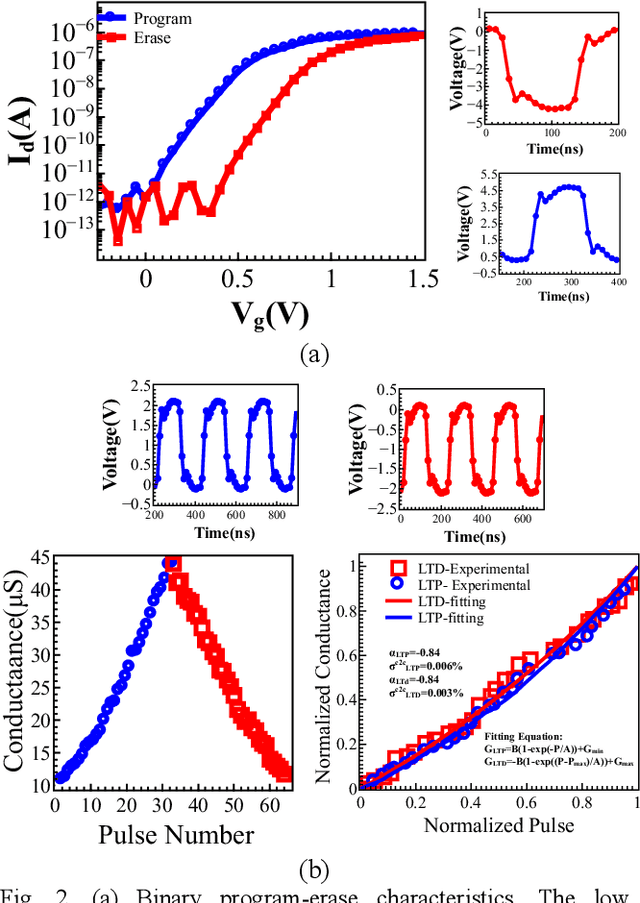

Neuromorphic Computing with Deeply Scaled Ferroelectric FinFET in Presence of Process Variation, Device Aging and Flicker Noise

Mar 05, 2021

Abstract: This paper reports a comprehensive study on the applicability of ultra-scaled ferroelectric FinFETs with a 6 nm thick hafnium zirconium oxide layer for neuromorphic computing in the presence of process variation, flicker noise, and device aging. A detailed study has been conducted of the impact of these variations on the inference accuracy of pre-trained neural networks with analog, quaternary (2-bit/cell), and binary synapses. A pre-trained neural network with 97.5% inference accuracy on the MNIST dataset has been adopted as the baseline. Process variation, flicker noise, and device aging have been characterized, and a statistical model has been developed to capture all these effects during neural network simulation. Extrapolated retention above 10 years has been achieved for the binary read-out procedure. We demonstrate that the impact of (1) retention degradation due to oxide thickness scaling, (2) process variation, and (3) flicker noise can be mitigated in ferroelectric FinFET based binary neural networks, which outperform quaternary and analog neural networks under all of these variations. The performance of a neural network results from the combined performance of device, architecture, and algorithm. This research corroborates the applicability of deeply scaled ferroelectric FinFETs for non-von Neumann computing with an appropriate combination of architecture and algorithm.
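One intuition for why the binary configuration tolerates variation better is read margin: within a fixed conductance window, two levels are spaced three times farther apart than four levels. The sketch below quantifies this with a textbook Gaussian model. It is an illustrative assumption, not the paper's statistical model: the functions `q_function` and `level_error_rate`, evenly spaced levels, independent Gaussian perturbation with standard deviation `sigma`, and read thresholds midway between adjacent levels are all hypothetical, and aging, flicker noise, and analog synapses are not modeled.

```python
import math


def q_function(x: float) -> float:
    """Tail probability P(Z > x) of a standard normal variable."""
    return 0.5 * math.erfc(x / math.sqrt(2.0))


def level_error_rate(n_levels: int, window: float, sigma: float) -> float:
    """Average probability of reading the wrong level.

    Assumptions: n_levels states evenly spaced across a fixed conductance
    window, each perturbed by independent Gaussian noise of std sigma,
    and thresholds placed halfway between adjacent levels.
    """
    if n_levels < 2:
        raise ValueError("need at least two levels")
    spacing = window / (n_levels - 1)
    # Probability of crossing one neighbouring threshold at distance spacing/2.
    p_cross = q_function(spacing / (2.0 * sigma))
    # The two outer levels have one neighbour; interior levels have two.
    total = 2 * p_cross + (n_levels - 2) * 2 * p_cross
    return total / n_levels
```

For a normalized window of 1.0 and `sigma=0.1`, the binary misread probability is on the order of 1e-7, while the quaternary one is roughly 7%, which illustrates the margin advantage of a binary read-out under the same variation.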

Alleviation of Temperature Variation Induced Accuracy Degradation in Ferroelectric FinFET Based Neural Network

Mar 03, 2021

Abstract: This paper reports the impact of temperature variation on the inference accuracy of pre-trained all-ferroelectric FinFET deep neural networks, along with plausible design techniques to mitigate it. We adopted a pre-trained artificial neural network (NN) with 96.4% inference accuracy on the MNIST dataset as the baseline. The temperature-induced conductance drift of a programmed cell was captured by a compact model over a wide range of gate bias. We observe significant inference accuracy degradation in the analog neural network at 233 K for an NN trained at 300 K. Finally, we deployed binary neural networks with "read voltage" optimization to make the NN immune to accuracy degradation under temperature variation, maintaining an inference accuracy of 96.1%.
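The "read voltage" optimization can be framed as a search over candidate read voltages for the one whose ON and OFF conductances stay farthest from the sense threshold across the temperature range. The abstract does not state the actual criterion, so this is only one plausible formulation: the function `pick_read_voltage`, the table layout, the fixed conductance threshold `g_threshold`, and the max-min margin objective are assumptions, and the numbers in the example are made-up, arbitrary-unit values rather than data from the paper.

```python
import math
from typing import Dict, Tuple

# Hypothetical layout: table[(v_read, temperature_K)] = (g_on, g_off)
Table = Dict[Tuple[float, float], Tuple[float, float]]


def pick_read_voltage(table: Table, g_threshold: float) -> float:
    """Choose the read voltage with the largest worst-case sensing margin.

    At one temperature the margin is min(g_on - g_threshold,
    g_threshold - g_off); the worst case is the minimum over all
    tabulated temperatures. A positive worst case means ON and OFF
    states stay on the correct side of the threshold everywhere.
    """
    worst: Dict[float, float] = {}
    for (v_read, _temp), (g_on, g_off) in table.items():
        margin = min(g_on - g_threshold, g_threshold - g_off)
        worst[v_read] = min(worst.get(v_read, math.inf), margin)
    return max(worst, key=worst.get)


if __name__ == "__main__":
    # Made-up, arbitrary-unit values for illustration only.
    example: Table = {
        (0.2, 233.0): (1.0, 0.2),
        (0.2, 300.0): (1.2, 0.45),
        (0.4, 233.0): (1.5, 0.3),
        (0.4, 300.0): (1.8, 0.35),
    }
    print(pick_read_voltage(example, g_threshold=0.5))  # prints 0.4
```

In this toy table the 0.2 V setting leaves the OFF state only 0.05 units below the threshold at 300 K, whereas the 0.4 V setting keeps a worst-case margin of 0.15, so 0.4 is selected.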
