Simon Huber

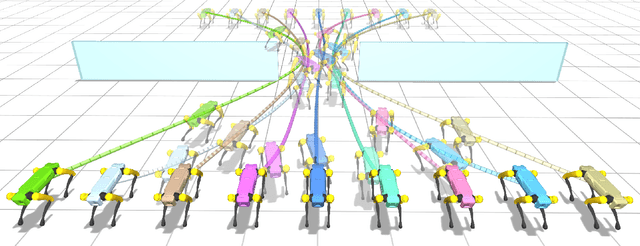

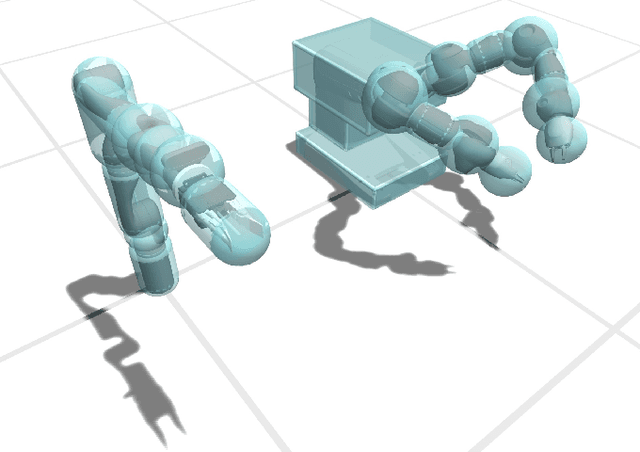

Multi-robot workspace design and motion planning for package sorting

Dec 15, 2024

Abstract: Robotic systems are routinely used in the logistics industry to improve operational efficiency, but designing robot workspaces remains a complex, manual task, which limits how flexibly a system can adapt to changing demands. This paper aims to automate robot workspace design by proposing a computational framework that generates a budget-minimizing layout by selectively placing stationary robots, including robotic arms and conveyor belts, on a floor grid, and that plans their cooperative motions to sort packages between given input and output locations. We propose a hierarchical solving strategy that first optimizes the layout to minimize the hardware budget through a subgraph optimization subject to network-flow constraints, and then performs task allocation and motion planning on the generated layout. In addition, we demonstrate how to model conveyor belts as manipulators with multiple end effectors so that they integrate into our design and planning framework. We evaluated our framework on a set of simulated scenarios and showed that it generates optimal layouts and collision-free motion trajectories while adapting to different available robots, cost assignments, and box payloads.
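As a rough illustration of the layout stage, consider a drastically simplified case: one input location, one output location, each grid cell carrying a hypothetical hardware cost for placing a robot there (or blocked), and packages handed only between robots in 4-adjacent cells. Under those assumptions the cheapest layout is a node-weighted shortest path, which Dijkstra's algorithm finds. This is not the paper's formulation, which solves a subgraph optimization with network-flow constraints over many package flows and then plans motions; the function name `cheapest_robot_chain` and the grid/handover model are assumptions made for this sketch.

```python
import heapq
from typing import Dict, List, Optional, Tuple

Cell = Tuple[int, int]


def cheapest_robot_chain(
    cost: List[List[Optional[float]]], src: Cell, dst: Cell
) -> Tuple[float, List[Cell]]:
    """Node-weighted Dijkstra on a 4-connected grid (simplified, hypothetical model).

    cost[r][c] is the assumed hardware cost of a robot in cell (r, c), or None
    if no robot can be placed there. The returned total is the sum of the
    costs of every cell on the path, including src and dst.
    """
    rows, cols = len(cost), len(cost[0])
    if cost[src[0]][src[1]] is None or cost[dst[0]][dst[1]] is None:
        return float("inf"), []
    best: Dict[Cell, float] = {src: cost[src[0]][src[1]]}
    parent: Dict[Cell, Cell] = {}
    heap = [(best[src], src)]
    while heap:
        d, cell = heapq.heappop(heap)
        if cell == dst:
            break
        if d > best[cell]:
            continue  # stale heap entry: a cheaper route to this cell was found
        r, c = cell
        for nxt in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            nr, nc = nxt
            if 0 <= nr < rows and 0 <= nc < cols and cost[nr][nc] is not None:
                nd = d + cost[nr][nc]  # entering a cell pays for the robot placed there
                if nd < best.get(nxt, float("inf")):
                    best[nxt] = nd
                    parent[nxt] = cell
                    heapq.heappush(heap, (nd, nxt))
    if dst not in best:
        return float("inf"), []  # no chain of placeable cells connects src and dst
    path = [dst]
    while path[-1] != src:
        path.append(parent[path[-1]])
    return best[dst], path[::-1]


if __name__ == "__main__":
    grid = [
        [1.0, 6.0, 1.0],
        [1.0, None, 1.0],  # None: no robot can be placed in the middle cell
        [1.0, 1.0, 1.0],
    ]
    total, path = cheapest_robot_chain(grid, (0, 0), (0, 2))
    print(total, path)
    # prints: 7.0 [(0, 0), (1, 0), (2, 0), (2, 1), (2, 2), (1, 2), (0, 2)]
```

Here the route around the blocked middle cell (seven unit-cost robots, total 7.0) beats the direct route through the expensive cell (1 + 6 + 1 = 8).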

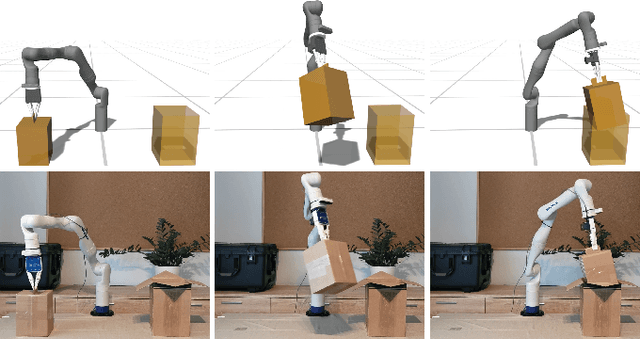

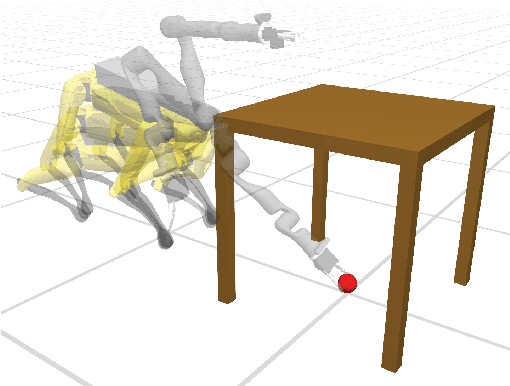

Differentiable Collision Avoidance Using Collision Primitives

Apr 20, 2022

Abstract: A central aspect of robotic motion planning is collision avoidance, for which many different approaches are currently in use. Optimization-based motion planning is one such approach, and it often relies heavily on distance computations between robots and obstacles. These computations can easily become a bottleneck, as they do not scale well with the complexity of the robots or the environment. To improve performance, many methods use collision primitives, i.e., simple shapes that approximate more complex rigid bodies and to which distances are easier to compute. However, each pair of primitives requires its own specialized code, and certain pairs are known to suffer from numerical issues. In this paper, we propose an easy-to-use, unified treatment of a wide variety of primitives. We formulate distance computation as a minimization problem, which we solve iteratively. We show how to take derivatives of this minimization problem, allowing it to be seamlessly integrated into a trajectory optimization method. Our experiments show that our method performs favourably in terms of both timing and trajectory quality. The source code of our implementation will be released upon acceptance.
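To make "distance computation as an iteratively solved minimization" concrete, here is a sketch for a single primitive pair: two capsules (line segments swept by a sphere). The closest points are found by minimizing the squared distance between points on the two axis segments over the segment parameters s, t in [0, 1], using projected gradient descent. Derivatives of the resulting distance with respect to the segment endpoints then follow from the envelope (Danskin) theorem, which holds when the closest points are unique and the axes do not touch. The paper covers many primitive types with one unified solver, and its solver and derivative computation may differ; projected gradient descent, the function name `capsule_distance`, and the fixed iteration count are assumptions of this sketch.

```python
import math
from typing import Dict, Tuple

Vec = Tuple[float, float, float]


def sub(a: Vec, b: Vec) -> Vec:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def dot(a: Vec, b: Vec) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def lerp(a: Vec, b: Vec, s: float) -> Vec:
    return (a[0] + s * (b[0] - a[0]), a[1] + s * (b[1] - a[1]), a[2] + s * (b[2] - a[2]))


def clamp01(x: float) -> float:
    return min(1.0, max(0.0, x))


def capsule_distance(
    a0: Vec, a1: Vec, ra: float, b0: Vec, b1: Vec, rb: float, iters: int = 200
) -> Tuple[float, Dict[str, Vec]]:
    """Signed capsule-capsule distance via projected gradient descent (illustrative sketch).

    Minimizes f(s, t) = 0.5 * |p(s) - q(t)|^2 over the unit square, where
    p(s) = a0 + s (a1 - a0) and q(t) = b0 + t (b1 - b0). Returns the axis
    distance minus both radii (negative means overlap) and its gradients
    with respect to the four segment endpoints.
    """
    u, v = sub(a1, a0), sub(b1, b0)
    # f is a convex quadratic; u.u + v.v (the Hessian's trace) bounds its largest
    # eigenvalue, so a step of 1 / (u.u + v.v) is safe for projected gradient descent.
    lip = dot(u, u) + dot(v, v)
    step = 1.0 / lip if lip > 0 else 0.0
    s, t = 0.5, 0.5
    for _ in range(iters):
        diff = sub(lerp(a0, a1, s), lerp(b0, b1, t))
        # df/ds = diff . u and df/dt = -diff . v; step both, then project onto [0, 1].
        s = clamp01(s - step * dot(diff, u))
        t = clamp01(t + step * dot(diff, v))
    diff = sub(lerp(a0, a1, s), lerp(b0, b1, t))
    axis_dist = math.sqrt(dot(diff, diff))
    # Unit direction between the closest points (undefined if the axes touch).
    n = tuple(x / axis_dist for x in diff) if axis_dist > 0 else (0.0, 0.0, 0.0)
    # Envelope theorem: differentiate |p - q| at the fixed optimal (s, t).
    grads = {
        "a0": tuple((1.0 - s) * x for x in n),
        "a1": tuple(s * x for x in n),
        "b0": tuple(-(1.0 - t) * x for x in n),
        "b1": tuple(-t * x for x in n),
    }
    return axis_dist - ra - rb, grads
```

Because the gradients come from the same solve, a trajectory optimizer can use the distance as a differentiable collision term without a separate derivative routine per primitive pair.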

A Modular Benchmarking Infrastructure for High-Performance and Reproducible Deep Learning

Jan 29, 2019

Abstract: We introduce Deep500: the first customizable benchmarking infrastructure that enables fair comparison of the plethora of deep learning frameworks, algorithms, libraries, and techniques. The key idea behind Deep500 is its modular design, where deep learning is factorized into four distinct levels: operators, network processing, training, and distributed training. Our evaluation illustrates that Deep500 is customizable (it enables combining and benchmarking different deep learning codes) and fair (it uses carefully selected metrics). Moreover, Deep500 is fast (it incurs negligible overheads), verifiable (it offers infrastructure to analyze correctness), and reproducible. Finally, as the first distributed and reproducible benchmarking system for deep learning, Deep500 provides software infrastructure to utilize the most powerful supercomputers for extreme-scale workloads.
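Two of the properties stressed above, fair timing and verifiable correctness, can be illustrated at the operator level with a generic harness: every interchangeable implementation is first checked against a reference, then timed under the same warm-up and repetition policy, reporting the median rather than a single run. This is a plain-Python sketch, not Deep500's API; the names `benchmark` and `run_suite`, the dot-product operators, and the choice of median wall-clock time as the metric are all hypothetical.

```python
import functools
import math
import statistics
import time
from typing import Callable, Dict, Sequence, Tuple

Impl = Callable[[Sequence[float], Sequence[float]], float]


def benchmark(fn: Callable[[], object], warmup: int = 3, reps: int = 20) -> Dict[str, float]:
    """Run fn() a few times untimed, then report median, min, and max wall-clock seconds."""
    for _ in range(warmup):
        fn()
    samples = []
    for _ in range(reps):
        start = time.perf_counter()
        fn()
        samples.append(time.perf_counter() - start)
    return {"median_s": statistics.median(samples), "min_s": min(samples), "max_s": max(samples)}


def run_suite(
    impls: Dict[str, Impl],
    reference: Impl,
    inputs: Tuple[Sequence[float], Sequence[float]],
    rtol: float = 1e-9,
) -> Dict[str, Dict[str, object]]:
    """Check every implementation against the reference, then time it with the same harness."""
    expected = reference(*inputs)
    results: Dict[str, Dict[str, object]] = {}
    for name, impl in impls.items():
        out = impl(*inputs)
        correct = abs(out - expected) <= rtol * max(1.0, abs(expected))
        results[name] = {"correct": correct, **benchmark(functools.partial(impl, *inputs))}
    return results


# Interchangeable "operator" implementations of a dot product (illustrative only).
def dot_reference(x, y):
    return math.fsum(a * b for a, b in zip(x, y))  # exactly rounded sum as the reference


def dot_loop(x, y):
    total = 0.0
    for a, b in zip(x, y):
        total += a * b
    return total


def dot_sum(x, y):
    return sum(a * b for a, b in zip(x, y))


def dot_skips_last(x, y):  # deliberately buggy, so the correctness check has something to catch
    return sum(a * b for a, b in zip(x[:-1], y[:-1]))


if __name__ == "__main__":
    x = [float(i) for i in range(1000)]
    y = [1.0] * 1000
    suite = {"loop": dot_loop, "sum": dot_sum, "skips_last": dot_skips_last}
    for name, stats in run_suite(suite, dot_reference, (x, y)).items():
        print(name, stats["correct"], f"{stats['median_s']:.2e} s")
```

Separating the correctness check from the timing loop keeps the verification cost out of the measured numbers, and running every implementation through one `benchmark` function keeps the comparison policy identical across them.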