Simon Coghlan

Control Search Rankings, Control the World: What is a Good Search Engine?

Feb 05, 2025

Abstract: This paper examines the ethical question, 'What is a good search engine?' Since search engines are gatekeepers of global online information, it is vital they do their job ethically well. While the Internet is now several decades old, the topic remains under-explored from interdisciplinary perspectives. This paper presents a novel role-based approach involving four ethical models of types of search engine behavior: Customer Servant, Librarian, Journalist, and Teacher. It explores these ethical models with reference to the research field of information retrieval, and by means of a case study involving the COVID-19 global pandemic. It also reflects on the four ethical models in terms of the history of search engine development, from earlier crude efforts in the 1990s, to the very recent prospect of Large Language Model-based conversational information seeking systems taking on the roles of established web search engines like Google. Finally, the paper outlines considerations that inform present and future regulation and accountability for search engines as they continue to evolve. The paper should interest information retrieval researchers and others interested in the ethics of search engines.

Readying Medical Students for Medical AI: The Need to Embed AI Ethics Education

Sep 07, 2021

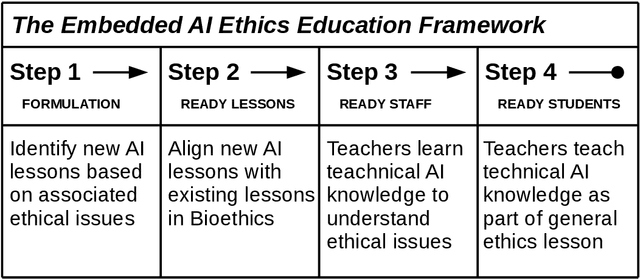

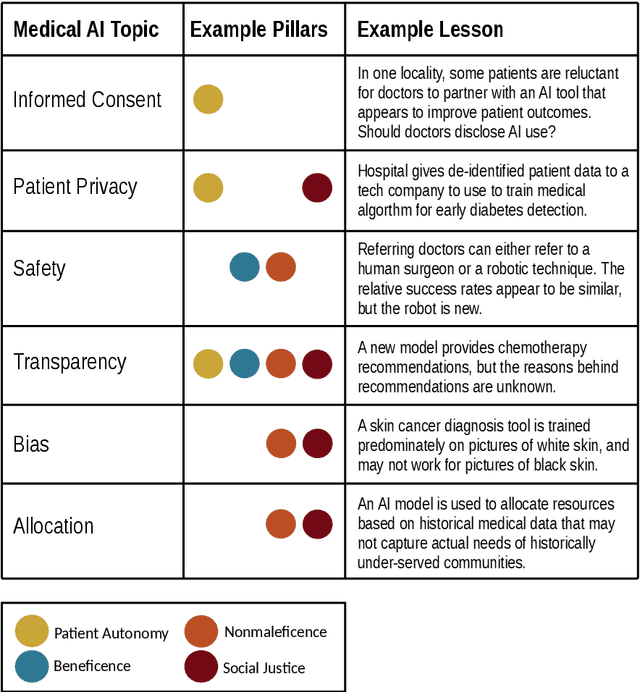

Abstract: Medical students will almost inevitably encounter powerful medical AI systems early in their careers. Yet, contemporary medical education does not adequately equip students with the basic clinical proficiency in medical AI needed to use these tools safely and effectively. Education reform is urgently needed, but not easily implemented, largely due to an already jam-packed medical curriculum. In this article, we propose an education reform framework as an effective and efficient solution, which we call the Embedded AI Ethics Education Framework. Unlike other calls for education reform to accommodate AI teaching that are more radical in scope, our framework is modest and incremental. It leverages existing bioethics or medical ethics curricula to develop and deliver content on the ethical issues associated with medical AI, especially the harms of technology misuse, disuse, and abuse that affect the risk-benefit analyses at the heart of healthcare. In doing so, the framework provides a simple tool for going beyond the "What?" and the "Why?" of medical AI ethics education, to answer the "How?", giving universities, course directors, and/or professors a broad road-map for equipping their students with the necessary clinical proficiency in medical AI.

The Three Ghosts of Medical AI: Can the Black-Box Present Deliver?

Dec 10, 2020

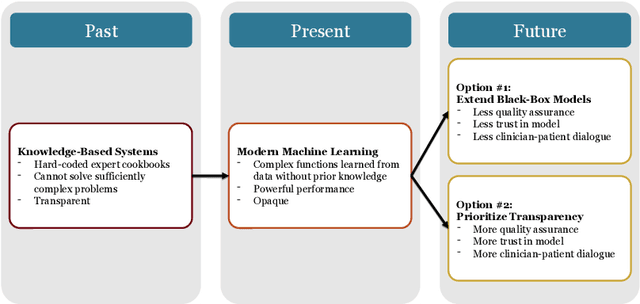

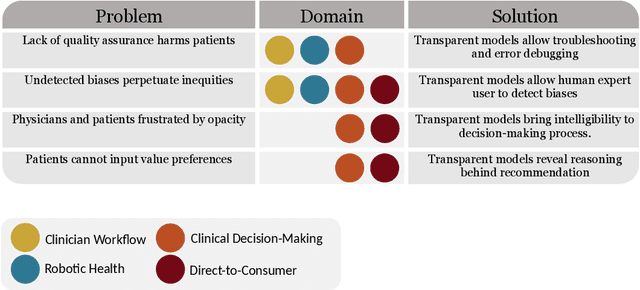

Abstract: Our title alludes to the three Christmas ghosts encountered by Ebenezer Scrooge in *A Christmas Carol*, who guide Ebenezer through the past, present, and future of Christmas holiday events. Similarly, our article will take readers through a journey of the past, present, and future of medical AI. In doing so, we focus on the crux of modern machine learning: the reliance on powerful but intrinsically opaque models. When applied to the healthcare domain, these models fail to meet the needs for transparency that their clinician and patient end-users require. We review the implications of this failure, and argue that opaque models (1) lack quality assurance, (2) fail to elicit trust, and (3) restrict physician-patient dialogue. We then discuss how upholding transparency in all aspects of model design and model validation can help ensure the reliability of medical AI.

Good proctor or "Big Brother"? AI Ethics and Online Exam Supervision Technologies

Nov 15, 2020

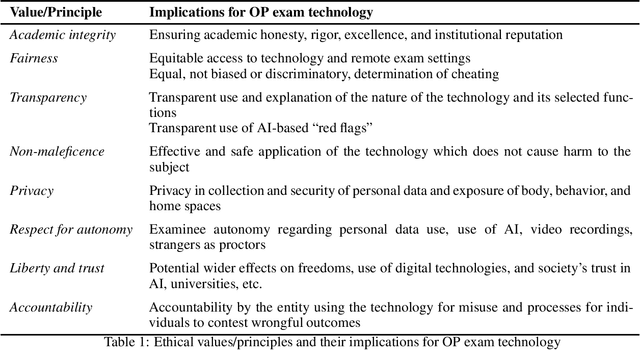

Abstract: This article philosophically analyzes online exam supervision technologies, which have been thrust into the public spotlight due to campus lockdowns during the COVID-19 pandemic and the growing demand for online courses. Online exam proctoring technologies purport to provide effective oversight of students sitting online exams, using artificial intelligence (AI) systems and human invigilators to supplement and review those systems. Such technologies have alarmed some students who see them as 'Big Brother-like', yet some universities defend their judicious use. Critical ethical appraisal of online proctoring technologies is overdue. This article philosophically analyzes these technologies, focusing on the ethical concepts of academic integrity, fairness, non-maleficence, transparency, privacy, respect for autonomy, liberty, and trust. Most of these concepts are prominent in the new field of AI ethics and all are relevant to the education context. The essay provides ethical considerations that educational institutions will need to carefully review before electing to deploy and govern specific online proctoring technologies.

Trust and Medical AI: The challenges we face and the expertise needed to overcome them

Aug 18, 2020

Abstract: Artificial intelligence (AI) is increasingly of tremendous interest in the medical field. However, failures of medical AI could have serious consequences for both clinical outcomes and the patient experience. These consequences could erode public trust in AI, which could in turn undermine trust in our healthcare institutions. This article makes two contributions. First, it describes the major conceptual, technical, and humanistic challenges in medical AI. Second, it proposes a solution that hinges on the education and accreditation of new expert groups who specialize in the development, verification, and operation of medical AI technologies. These groups will be required to maintain trust in our healthcare institutions.
