Shweta Shinde

Quantitative Verification of Neural Networks And its Security Applications

Jun 25, 2019

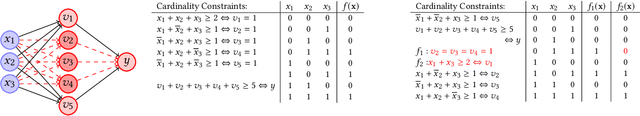

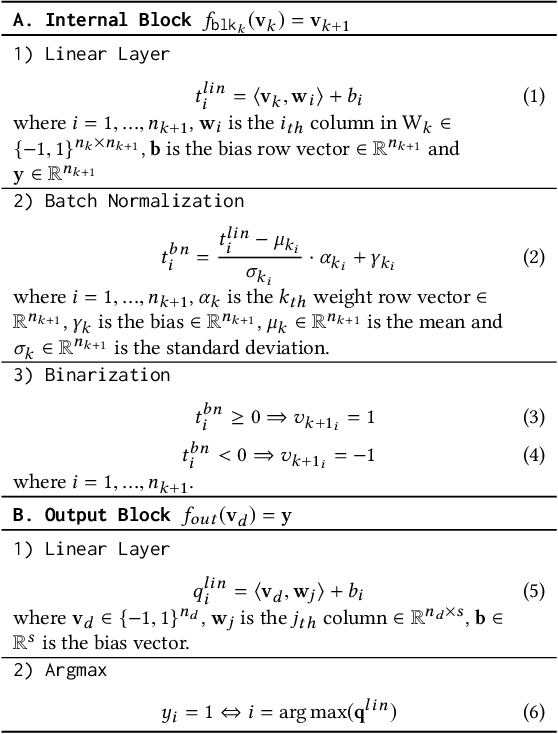

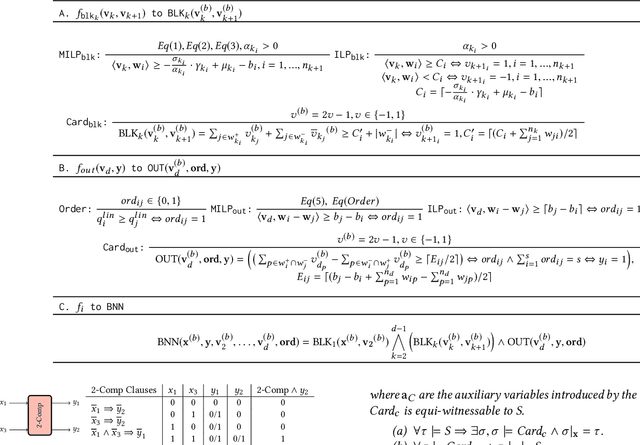

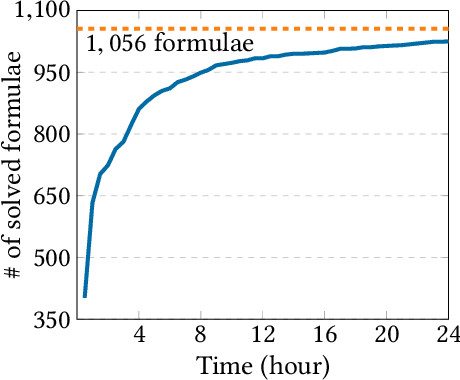

Abstract:Neural networks are increasingly employed in safety-critical domains. This has prompted interest in verifying or certifying logically encoded properties of neural networks. Prior work has largely focused on checking existential properties, wherein the goal is to check whether there exists any input that violates a given property of interest. However, neural network training is a stochastic process, and many questions arising in their analysis require probabilistic and quantitative reasoning, i.e., estimating how many inputs satisfy a given property. To this end, our paper proposes a novel and principled framework to quantitative verification of logical properties specified over neural networks. Our framework is the first to provide PAC-style soundness guarantees, in that its quantitative estimates are within a controllable and bounded error from the true count. We instantiate our algorithmic framework by building a prototype tool called NPAQ that enables checking rich properties over binarized neural networks. We show how emerging security analyses can utilize our framework in 3 concrete point applications: quantifying robustness to adversarial inputs, efficacy of trojan attacks, and fairness/bias of given neural networks.

Privado: Practical and Secure DNN Inference

Oct 01, 2018

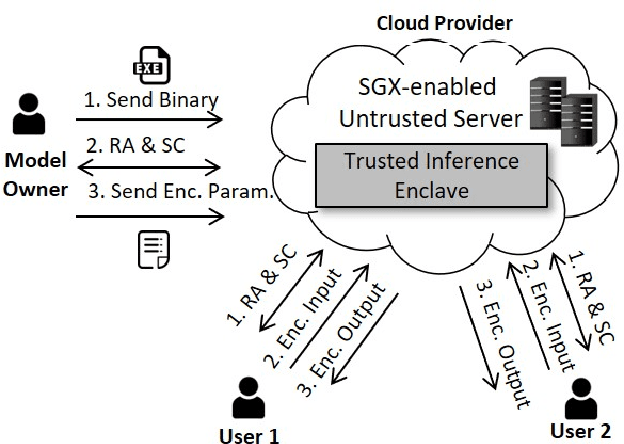

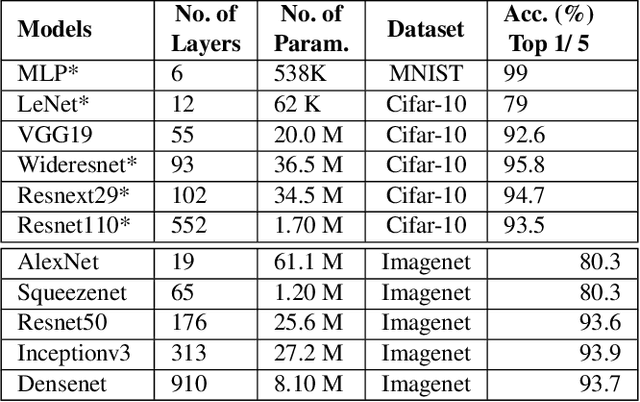

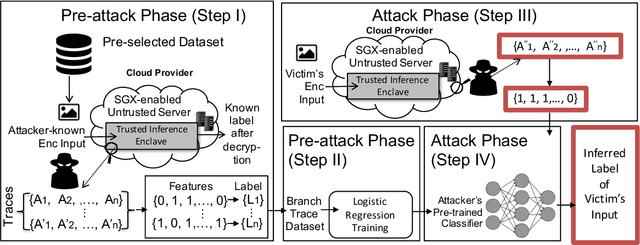

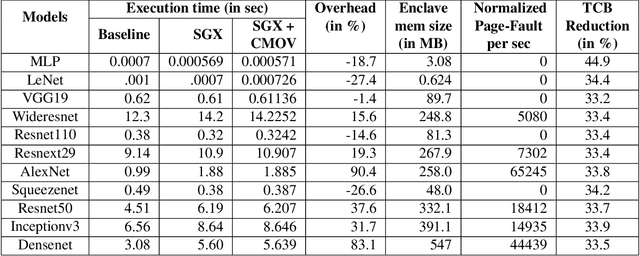

Abstract:Recently, cloud providers have extended support for trusted hardware primitives such as Intel SGX. Simultaneously, the field of deep learning is seeing enormous innovation and increase in adoption. In this paper, we therefore ask the question: "Can third-party cloud services use SGX to provide practical, yet secure DNN Inference-as-a-service? " Our work addresses the three main challenges that SGX-based DNN inferencing faces, namely, security, ease-of-use, and performance. We first demonstrate that side-channel based attacks on DNN models are indeed possible. We show that, by observing access patterns, we can recover inputs to the DNN model. This motivates the need for Privado, a system we have designed for secure inference-as-a-service. Privado is input-oblivious: it transforms any deep learning framework written in C/C++ to be free of input-dependent access patterns. Privado is fully-automated and has a low TCB: with zero developer effort, given an ONNX description, it generates compact C code for the model which can run within SGX-enclaves. Privado has low performance overhead: we have used Privado with Torch, and have shown its overhead to be 20.77\% on average on 10 contemporary networks.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge