Shreyas Goenka

Sequential Community Mode Estimation

Nov 16, 2021

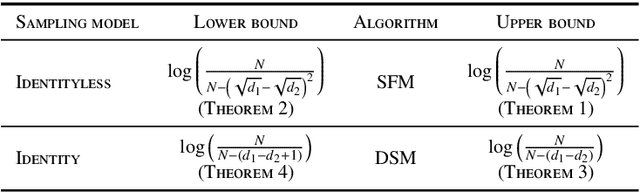

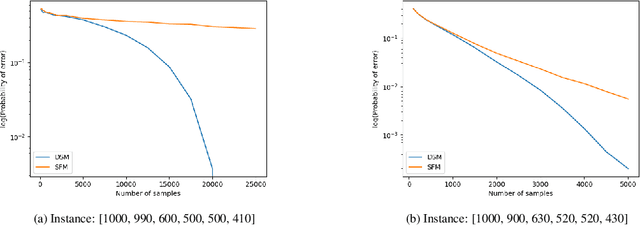

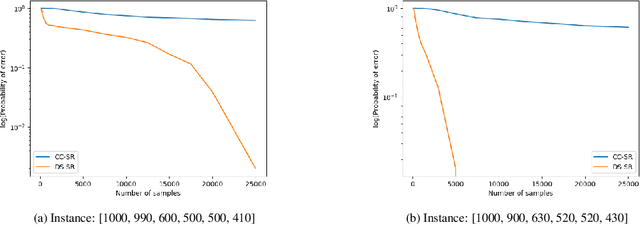

Abstract: We consider a population, partitioned into a set of communities, and study the problem of identifying the largest community within the population via sequential, random sampling of individuals. There are multiple sampling domains, referred to as *boxes*, which also partition the population. Each box may consist of individuals of different communities, and each community may in turn be spread across multiple boxes. The learning agent can, at any time, sample (with replacement) a random individual from any chosen box; when this is done, the agent learns the community the sampled individual belongs to, and also whether or not this individual has been sampled before. The goal of the agent is to minimize the probability of mis-identifying the largest community in a *fixed budget* setting, by optimizing both the sampling strategy and the decision rule. We propose and analyse novel algorithms for this problem, and also establish information-theoretic lower bounds on the probability of error under any algorithm. In several cases of interest, the exponential decay rates of the probability of error under our algorithms are shown to be optimal up to constant factors. The proposed algorithms are further validated via simulations on real-world datasets.
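To make the sampling model concrete, here is a minimal sketch of the feedback the agent receives and of one naive fixed-budget baseline. It is not one of the paper's algorithms: the round-robin allocation, the "most distinct individuals seen" decision rule, and the names `sample_box` and `estimate_mode_uniform` are all assumptions made for illustration.

```python
import random
from collections import Counter


def sample_box(box, seen, rng):
    """Draw one individual uniformly, with replacement, from a box.

    `box` is a list of (individual_id, community) pairs. Returns the
    community and whether this individual had been sampled before.
    """
    individual_id, community = box[rng.randrange(len(box))]
    was_seen = individual_id in seen
    seen.add(individual_id)
    return community, was_seen


def estimate_mode_uniform(boxes, budget, seed=0):
    """Naive baseline (an assumption, not the paper's method).

    Spends the budget round-robin over boxes and returns the community
    with the most distinct individuals observed.
    """
    rng = random.Random(seed)
    seen = set()
    distinct = Counter()
    for t in range(budget):
        community, was_seen = sample_box(boxes[t % len(boxes)], seen, rng)
        if not was_seen:  # count each individual only once
            distinct[community] += 1
    return distinct.most_common(1)[0][0]


# Hypothetical toy population: two boxes, three communities.
boxes = [
    [(0, "a"), (1, "a"), (2, "b")],
    [(3, "b"), (4, "c"), (5, "a"), (6, "a")],
]
estimate = estimate_mode_uniform(boxes, budget=500)
```

With a budget this large relative to the population, essentially every individual is observed, so the baseline recovers the true largest community; the interesting regime studied in the abstract is when the budget is too small for that, and the allocation across boxes matters.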

Federated Action Recognition on Heterogeneous Embedded Devices

Jul 18, 2021

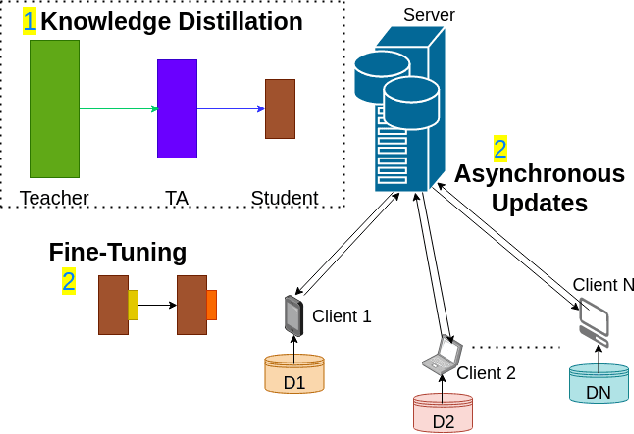

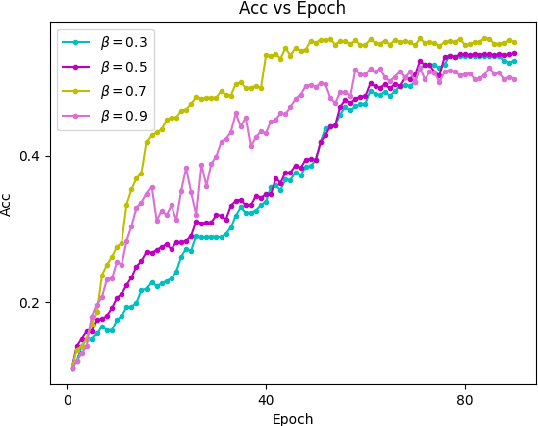

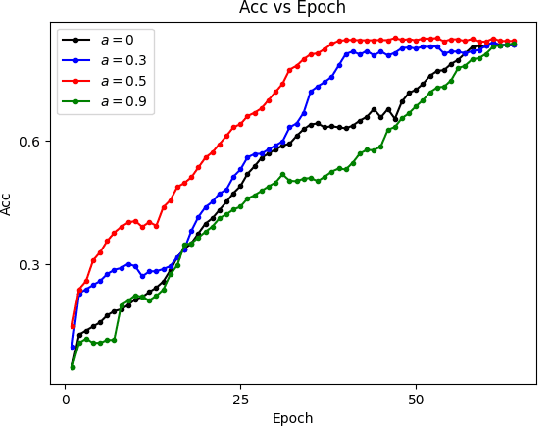

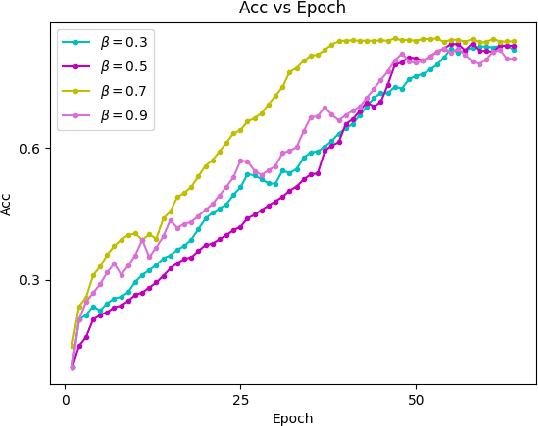

Abstract: Federated learning allows a large number of devices to jointly learn a model without sharing data. In this work, we enable clients with limited computing power to perform action recognition, a computationally heavy task. We first perform model compression at the central server through knowledge distillation on a large dataset. This allows the model to learn complex features and serves as an initialization for model fine-tuning. The fine-tuning is required because the limited data present in smaller datasets is not adequate for action recognition models to learn complex spatio-temporal features. Because the clients are often heterogeneous in their computing resources, we use asynchronous federated optimization, for which we also show a convergence bound. We compare our approach to two baselines: fine-tuning at the central server (no clients) and fine-tuning on (heterogeneous) clients with synchronous federated averaging. We empirically show on a testbed of heterogeneous embedded devices that we can perform action recognition with accuracy comparable to the two baselines above, while our asynchronous learning strategy reduces the training time by 40% relative to synchronous learning.

* 13 pages, 12 figures
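As a rough illustration of what asynchronous federated optimization can look like on the server side, the sketch below mixes each client's returned weights into the global model as soon as they arrive, down-weighting updates computed from an older model version. The abstract does not specify the update rule, so the polynomial staleness discount and the names `AsyncServer`, `staleness_weight`, `alpha`, and `a` are assumptions, not the authors' implementation.

```python
def staleness_weight(alpha, staleness, a=0.5):
    """Mixing coefficient that shrinks as the client's update gets staler."""
    return alpha / (1.0 + staleness) ** a


class AsyncServer:
    """Toy asynchronous server holding a flat list of model weights."""

    def __init__(self, weights, alpha=0.5):
        self.weights = list(weights)
        self.version = 0  # incremented on every accepted update
        self.alpha = alpha

    def pull(self):
        """A client fetches the current model and its version number."""
        return list(self.weights), self.version

    def push(self, client_weights, client_version):
        """Merge one client's weights without waiting for other clients."""
        staleness = self.version - client_version
        mix = staleness_weight(self.alpha, staleness)
        self.weights = [
            (1.0 - mix) * w + mix * cw
            for w, cw in zip(self.weights, client_weights)
        ]
        self.version += 1
        return mix


server = AsyncServer([0.0, 0.0], alpha=0.5)
_, version_seen = server.pull()
# A fast and a slow client both started from the same model version.
fresh_mix = server.push([1.0, 1.0], version_seen)
stale_mix = server.push([1.0, 1.0], version_seen)
```

Because the server never blocks on the slowest device, fast clients can contribute many updates while slow ones finish a single round, which is the property that makes this style of update attractive for heterogeneous hardware.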
