Shida He

Long range teleoperation for fine manipulation tasks under time-delay network conditions

Mar 21, 2019

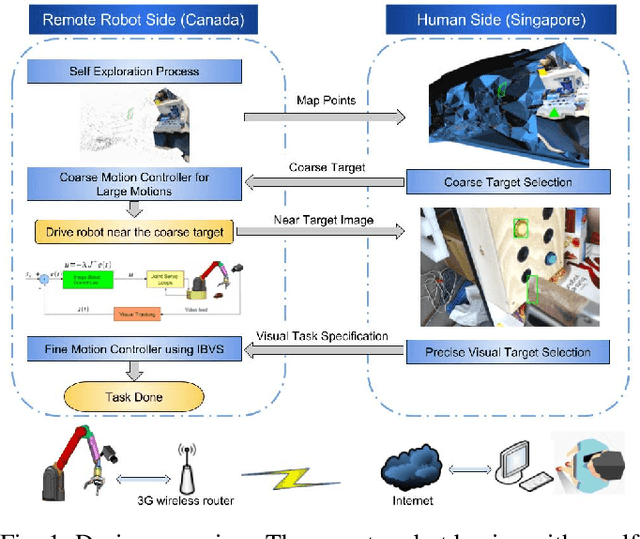

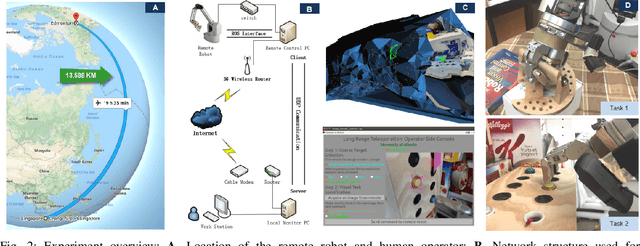

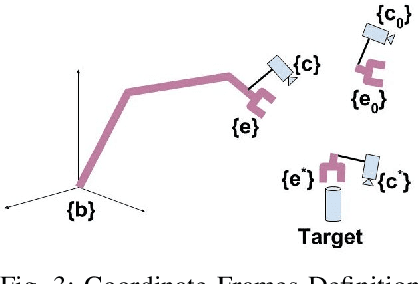

Abstract: We present a semi-autonomous, vision-guided teleoperation system based on a coarse-to-fine approach. The system is optimized for long-range teleoperation tasks under time-delay network conditions and does not require prior knowledge of the remote scene. Our system initializes with a self-exploration behavior that senses the remote surroundings through a freely mounted eye-in-hand webcam. The self-exploration stage estimates the hand-eye calibration and provides a telepresence interface via real-time 3D geometric reconstruction. The human operator specifies a visual task through the interface, and a coarse-to-fine controller guides the remote robot, enabling our system to work in high-latency networks. Large motions are guided by coarse 3D estimation, whereas fine motions use image cues via image-based visual servoing (IBVS). Network data transmission cost is minimized by sending only sparse points and a final image to the human side. Experiments from Singapore to Canada on multiple tasks were conducted to show our system's capability to work in long-range teleoperation tasks.
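The coarse-to-fine split described in the abstract can be illustrated with a minimal sketch. It is not the authors' controller: the function names, the `switch_distance` threshold, and the gains are hypothetical, and the fine stage is restricted to camera x/y translation for a single normalized image point, so the IBVS interaction matrix reduces to `[[-1/Z, 0], [0, -1/Z]]`.

```python
def choose_mode(position, target, switch_distance=0.05):
    """Pick the control stage from the coarse 3D distance to the target.

    switch_distance is a hypothetical threshold in metres.
    """
    dist = sum((t - p) ** 2 for p, t in zip(position, target)) ** 0.5
    return "coarse" if dist > switch_distance else "fine"


def coarse_step(position, target, gain=0.5):
    """Large motion: proportional step toward the coarse 3D target estimate."""
    return [p + gain * (t - p) for p, t in zip(position, target)]


def ibvs_xy_velocity(feature, desired, depth, gain=0.5):
    """Fine motion: translation-only IBVS for one normalized image point.

    With L = [[-1/Z, 0], [0, -1/Z]], the law v = -gain * L^-1 * e
    simplifies to v = gain * Z * e, which makes the image error decay
    exponentially (e_dot = -gain * e).
    """
    ex = feature[0] - desired[0]
    ey = feature[1] - desired[1]
    return (gain * depth * ex, gain * depth * ey)


if __name__ == "__main__":
    pose, goal = [0.0, 0.0, 0.0], [1.0, 2.0, 4.0]
    print(choose_mode(pose, goal))      # far from the goal, so the coarse stage
    print(coarse_step(pose, goal))      # one proportional 3D step
    print(ibvs_xy_velocity((0.1, 0.0), (0.0, 0.0), depth=2.0))
```

In a delayed network, a split of this kind lets the remote side close the fine loop locally on image error, while the operator only needs the coarse 3D estimate to specify the task.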

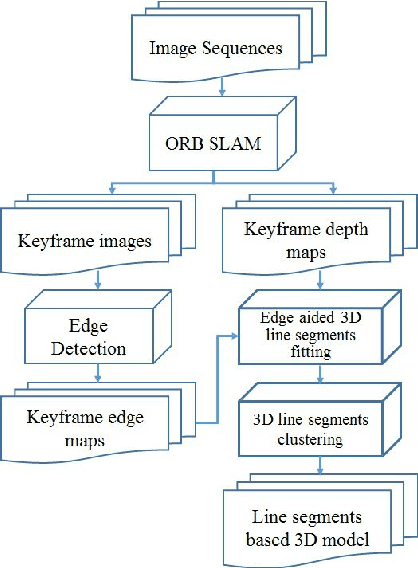

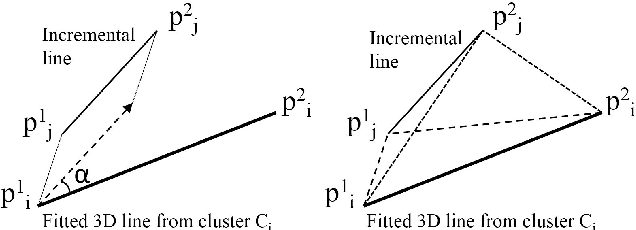

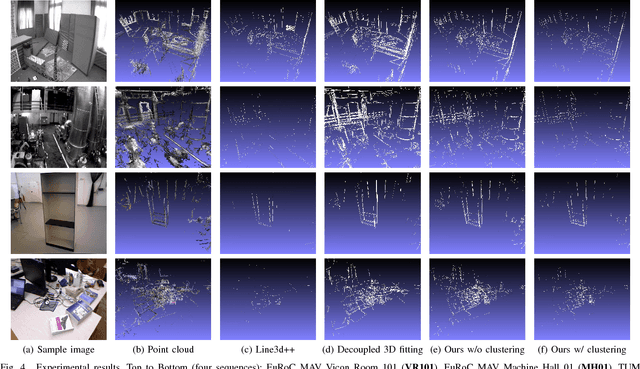

Incremental 3D Line Segment Extraction from Semi-dense SLAM

Apr 26, 2018

Abstract: Although semi-dense Simultaneous Localization and Mapping (SLAM) has become more popular over the last few years, there is a lack of efficient methods for representing and processing the large-scale point clouds it produces. In this paper, we propose using 3D line segments to simplify the point clouds generated by semi-dense SLAM. Specifically, we present a novel incremental approach for 3D line segment extraction. This approach reduces a 3D line segment fitting problem to two 2D line segment fitting problems and takes advantage of both images and depth maps. In our method, 3D line segments are fitted incrementally along detected edge segments by minimizing fitting errors on two planes. By clustering the detected line segments, the resulting 3D representation of the scene achieves a good balance between compactness and completeness. Our experimental results show that the 3D line segments generated by our method are highly accurate. As an application, we demonstrate that these line segments greatly improve the quality of 3D surface reconstruction compared to a feature-point-based baseline.
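The idea of reducing one 3D line fit to two 2D line fits can be sketched as follows. This is a simplified illustration, not the paper's method: it projects the points onto the x-y and x-z coordinate planes rather than the image and depth planes the abstract refers to, it fits a whole point set at once rather than incrementally, and it assumes the line is not perpendicular to the x axis. The function names are hypothetical.

```python
def fit_line_2d(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx  # assumes the xs are not all identical
    return slope, mean_y - slope * mean_x


def fit_line_3d(points):
    """Fit a 3D line as two 2D lines: y = a*x + b and z = c*x + d."""
    xs = [p[0] for p in points]
    ys = [p[1] for p in points]
    zs = [p[2] for p in points]
    return fit_line_2d(xs, ys), fit_line_2d(xs, zs)


if __name__ == "__main__":
    # Synthetic points lying exactly on y = 2x + 1, z = -x + 3.
    pts = [(float(x), 2.0 * x + 1.0, -1.0 * x + 3.0) for x in range(5)]
    (a, b), (c, d) = fit_line_3d(pts)
    print(a, b, c, d)
```

Each 2D fit has a closed-form solution, which is what makes a decomposition of this kind cheap enough to repeat as new points are appended along an edge.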

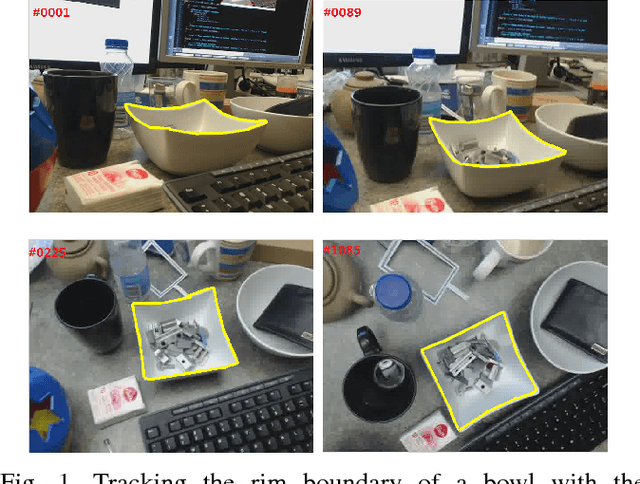

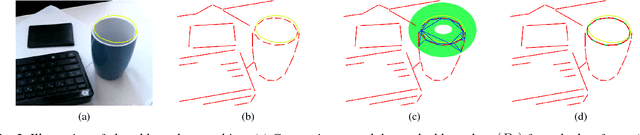

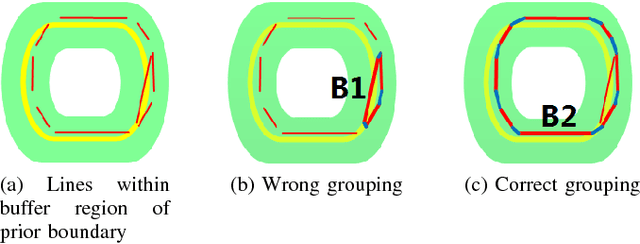

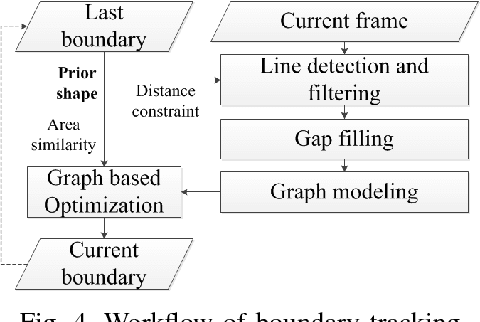

Real-Time Salient Closed Boundary Tracking via Line Segments Perceptual Grouping

Aug 09, 2017

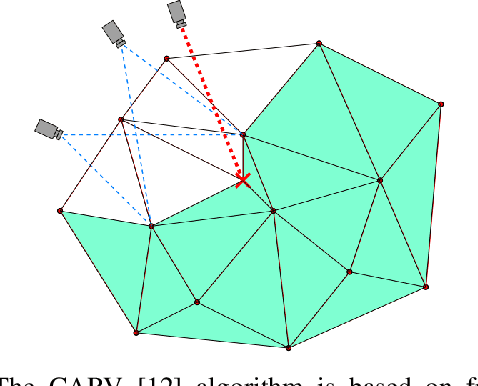

Abstract: This paper presents a novel real-time method for tracking salient closed boundaries in video image sequences. The method operates on a set of straight line segments produced by line detection. The tracking scheme is coherently integrated into a perceptual grouping framework in which the visual tracking problem is tackled by identifying a subset of these line segments and connecting them sequentially to form a closed boundary with the largest saliency and a certain similarity to the previous one. Specifically, we define a new tracking criterion that combines a grouping cost and an area similarity constraint. The proposed criterion makes the resulting boundary tracking more robust to local minima. To achieve real-time tracking performance, we use Delaunay triangulation to build a graph model from the detected line segments and then reduce the tracking problem to finding the optimal cycle in this graph. This is solved by our newly proposed closed-boundary candidate search algorithm, called "Bidirectional Shortest Path (BDSP)". The efficiency and robustness of the proposed method are tested on real video sequences as well as during a robot arm pouring experiment.
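To make the "optimal cycle in a graph" formulation concrete, here is a minimal sketch of one standard way to find the cheapest cycle through a given edge: remove that edge, run Dijkstra between its endpoints, then add the edge back. This is not the paper's BDSP algorithm, and the graph below is a hand-made toy rather than one built by Delaunay triangulation; the function names and edge weights are hypothetical.

```python
import heapq


def shortest_path(graph, source, target, skip_edge):
    """Dijkstra on an undirected dict-of-dicts graph, ignoring one edge."""
    dist = {source: 0.0}
    prev = {}
    heap = [(0.0, source)]
    while heap:
        d, node = heapq.heappop(heap)
        if node == target:
            break
        if d > dist.get(node, float("inf")):
            continue  # stale heap entry
        for nxt, weight in graph[node].items():
            if {node, nxt} == skip_edge:
                continue
            nd = d + weight
            if nd < dist.get(nxt, float("inf")):
                dist[nxt] = nd
                prev[nxt] = node
                heapq.heappush(heap, (nd, nxt))
    if target not in dist:
        return None
    path = [target]
    while path[-1] != source:
        path.append(prev[path[-1]])
    return dist[target], path[::-1]


def best_cycle_through(graph, u, v):
    """Cheapest cycle containing edge (u, v): path v -> u without that edge."""
    result = shortest_path(graph, v, u, skip_edge={u, v})
    if result is None:
        return None
    cost, path = result
    return cost + graph[u][v], path  # the cycle closes via edge (u, v)


if __name__ == "__main__":
    # Toy graph: a unit square a-b-c-d plus a diagonal a-c.
    g = {
        "a": {"b": 1.0, "d": 1.0, "c": 1.5},
        "b": {"a": 1.0, "c": 1.0},
        "c": {"b": 1.0, "d": 1.0, "a": 1.5},
        "d": {"c": 1.0, "a": 1.0},
    }
    print(best_cycle_through(g, "a", "b"))
```

In a tracking setting, edge weights would encode a grouping cost, and a further criterion such as area similarity to the previous boundary would be needed to choose among candidate cycles.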
