Shibani Hamsa

Speaker Identification from emotional and noisy speech data using learned voice segregation and Speech VGG

Oct 23, 2022

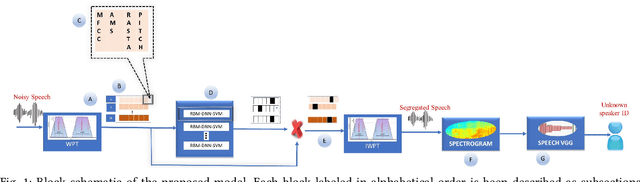

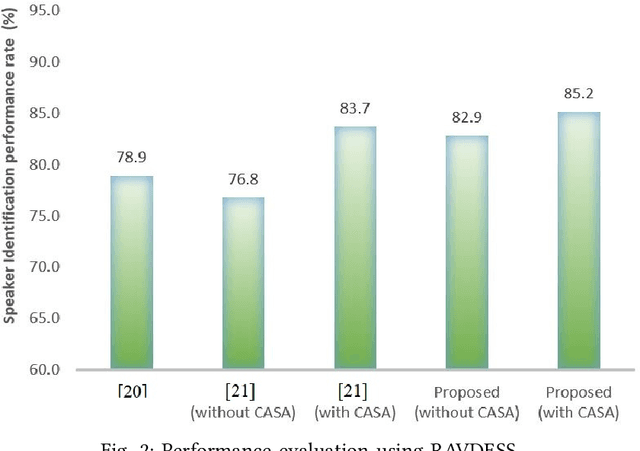

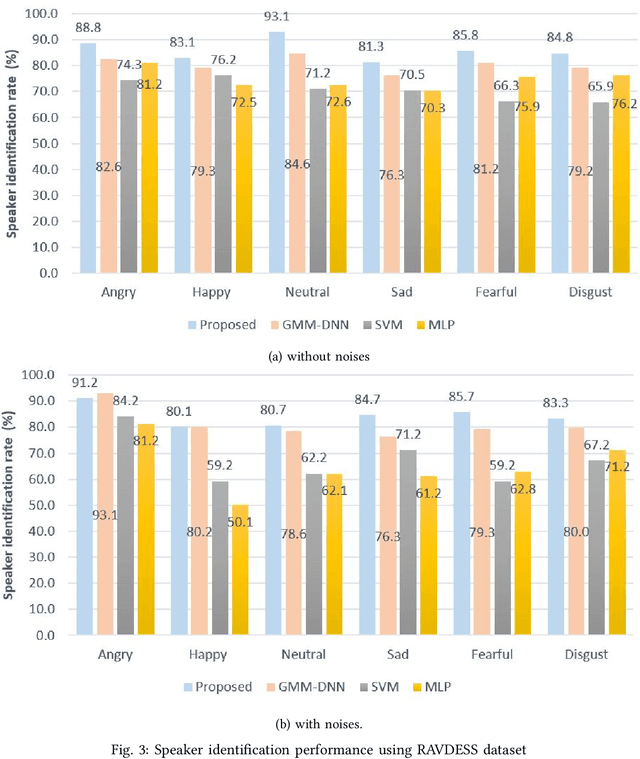

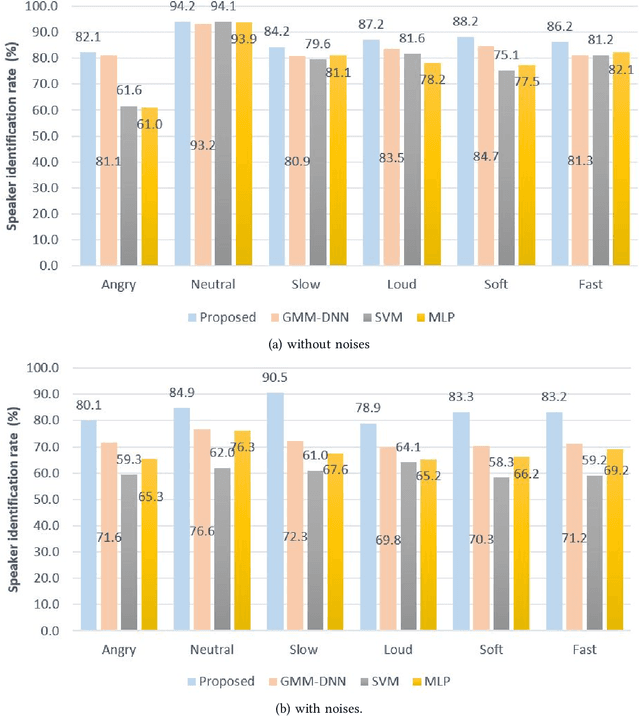

Abstract: Speech signals are subjected to more acoustic interference and emotional factors than other signals. Noisy, emotion-riddled speech data poses a challenge for real-time speech processing applications. It is essential to find an effective way to segregate the dominant signal from other external influences. An ideal system should be able to accurately recognize the required auditory events in a complex scene captured under unfavorable conditions. This paper proposes a novel approach to speaker identification in unfavorable conditions such as emotion and interference, using a pre-trained Deep Neural Network mask and Speech VGG. The proposed model obtained superior performance over the recent literature on English and Arabic emotional speech data and reported average speaker identification rates of 85.2%, 87.0%, and 86.6% using the Ryerson audio-visual dataset (RAVDESS), the Speech Under Simulated and Actual Stress (SUSAS) dataset, and the Emirati-accented Speech dataset (ESD), respectively.

CASA-Based Speaker Identification Using Cascaded GMM-CNN Classifier in Noisy and Emotional Talking Conditions

Feb 11, 2021

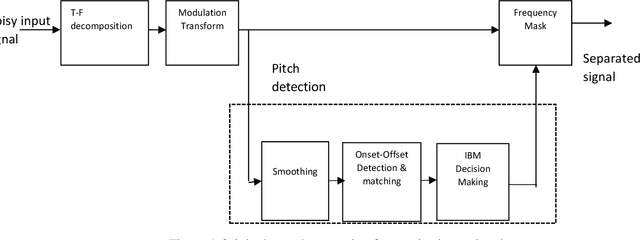

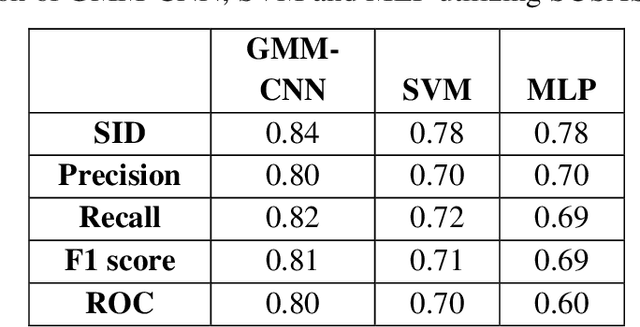

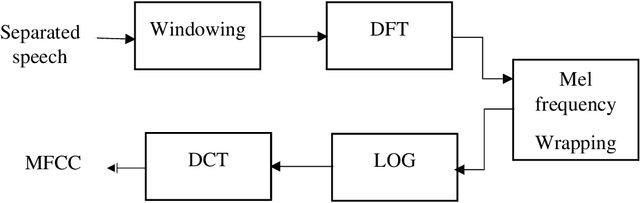

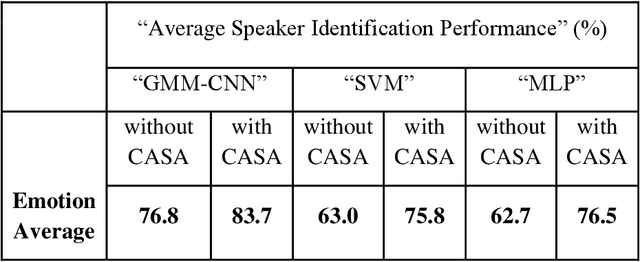

Abstract: This work aims to improve text-independent speaker identification performance in real application situations such as noisy and emotional talking conditions. This is achieved by incorporating two different modules: a Computational Auditory Scene Analysis (CASA)-based pre-processing module for noise reduction and a cascaded Gaussian Mixture Model-Convolutional Neural Network (GMM-CNN) classifier for speaker identification followed by emotion recognition. This research proposes and evaluates a novel algorithm to improve the accuracy of speaker identification in emotional and highly noise-susceptible conditions. Experiments demonstrate that the proposed model yields promising results in comparison with other classifiers when the Speech Under Simulated and Actual Stress (SUSAS) database, the Emirati Speech Database (ESD), the Ryerson Audio-Visual Database of Emotional Speech and Song (RAVDESS), and the Fluent Speech Commands database are used in a noisy environment.

* Published in Applied Soft Computing journal
