Satyavrat Wagle

Multi-Agent Reinforcement Learning for Graph Discovery in D2D-Enabled Federated Learning

Mar 29, 2025

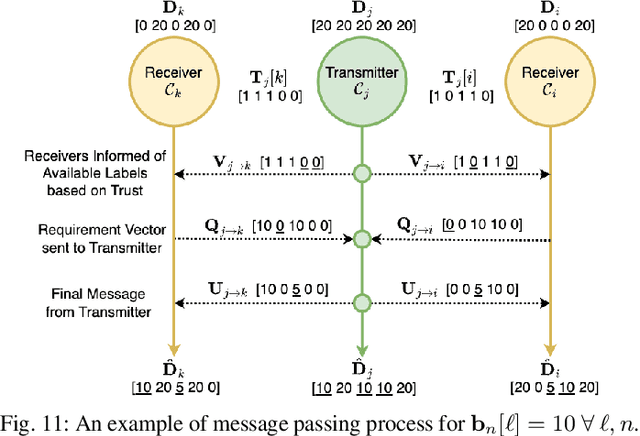

Abstract:Augmenting federated learning (FL) with device-to-device (D2D) communications can help improve convergence speed and reduce model bias through local information exchange. However, data privacy concerns, trust constraints between devices, and unreliable wireless channels each pose challenges in finding an effective yet resource efficient D2D graph structure. In this paper, we develop a decentralized reinforcement learning (RL) method for D2D graph discovery that promotes communication of impactful datapoints over reliable links for multiple learning paradigms, while following both data and device-specific trust constraints. An independent RL agent at each device trains a policy to predict the impact of incoming links in a decentralized manner without exposure of local data or significant communication overhead. For supervised settings, the D2D graph aims to improve device-specific label diversity without compromising system-level performance. For semi-supervised settings, we enable this by incorporating distributed label propagation. For unsupervised settings, we develop a variation-based diversity metric which estimates data diversity in terms of occupied latent space. Numerical experiments on five widely used datasets confirm that the data diversity improvements induced by our method increase convergence speed by up to 3 times while reducing energy consumption by up to 5 times. They also show that our method is resilient to stragglers and changes in the aggregation interval. Finally, we show that our method offers scalability benefits for larger system sizes without increases in relative overhead, and adaptability to various downstream FL architectures and to dynamic wireless environments.

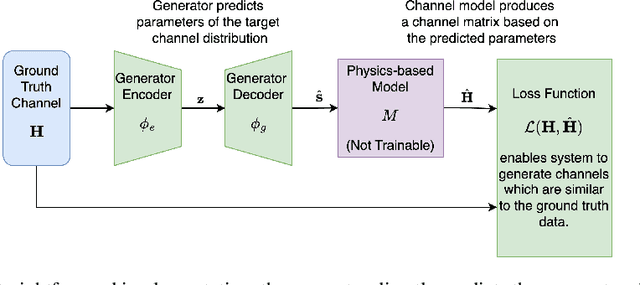

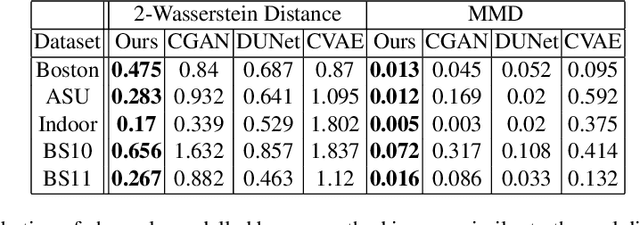

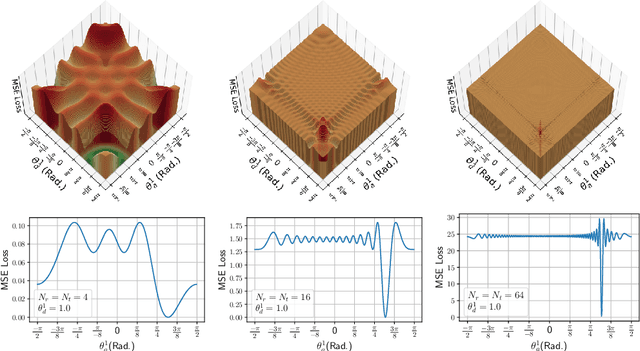

Physics-Informed Generative Approaches for Wireless Channel Modeling

Mar 11, 2025

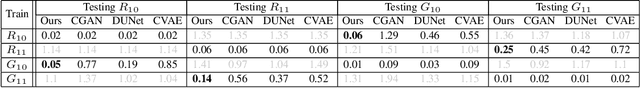

Abstract:In recent years, machine learning (ML) methods have become increasingly popular in wireless communication systems for several applications. A critical bottleneck for designing ML systems for wireless communications is the availability of realistic wireless channel datasets, which are extremely resource intensive to produce. To this end, the generation of realistic wireless channels plays a key role in the subsequent design of effective ML algorithms for wireless communication systems. Generative models have been proposed to synthesize channel matrices, but outputs produced by such methods may not correspond to geometrically viable channels and do not provide any insight into the scenario of interest. In this work, we aim to address both these issues by integrating a parametric, physics-based geometric channel (PBGC) modeling framework with generative methods. To address limitations with gradient flow through the PBGC model, a linearized reformulation is presented, which ensures smooth gradient flow during generative model training, while also capturing insights about the underlying physical environment. We evaluate our model against prior baselines by comparing the generated samples in terms of the 2-Wasserstein distance and through the utility of generated data when used for downstream compression tasks.

Unsupervised Federated Optimization at the Edge: D2D-Enabled Learning without Labels

Apr 15, 2024

Abstract:Federated learning (FL) is a popular solution for distributed machine learning (ML). While FL has traditionally been studied for supervised ML tasks, in many applications, it is impractical to assume availability of labeled data across devices. To this end, we develop Cooperative Federated unsupervised Contrastive Learning ({\tt CF-CL)} to facilitate FL across edge devices with unlabeled datasets. {\tt CF-CL} employs local device cooperation where either explicit (i.e., raw data) or implicit (i.e., embeddings) information is exchanged through device-to-device (D2D) communications to improve local diversity. Specifically, we introduce a \textit{smart information push-pull} methodology for data/embedding exchange tailored to FL settings with either soft or strict data privacy restrictions. Information sharing is conducted through a probabilistic importance sampling technique at receivers leveraging a carefully crafted reserve dataset provided by transmitters. In the implicit case, embedding exchange is further integrated into the local ML training at the devices via a regularization term incorporated into the contrastive loss, augmented with a dynamic contrastive margin to adjust the volume of latent space explored. Numerical evaluations demonstrate that {\tt CF-CL} leads to alignment of latent spaces learned across devices, results in faster and more efficient global model training, and is effective in extreme non-i.i.d. data distribution settings across devices.

Smart Information Exchange for Unsupervised Federated Learning via Reinforcement Learning

Feb 15, 2024

Abstract:One of the main challenges of decentralized machine learning paradigms such as Federated Learning (FL) is the presence of local non-i.i.d. datasets. Device-to-device transfers (D2D) between distributed devices has been shown to be an effective tool for dealing with this problem and robust to stragglers. In an unsupervised case, however, it is not obvious how data exchanges should take place due to the absence of labels. In this paper, we propose an approach to create an optimal graph for data transfer using Reinforcement Learning. The goal is to form links that will provide the most benefit considering the environment's constraints and improve convergence speed in an unsupervised FL environment. Numerical analysis shows the advantages in terms of convergence speed and straggler resilience of the proposed method to different available FL schemes and benchmark datasets.

A Reinforcement Learning-Based Approach to Graph Discovery in D2D-Enabled Federated Learning

Aug 07, 2023

Abstract:Augmenting federated learning (FL) with direct device-to-device (D2D) communications can help improve convergence speed and reduce model bias through rapid local information exchange. However, data privacy concerns, device trust issues, and unreliable wireless channels each pose challenges to determining an effective yet resource efficient D2D structure. In this paper, we develop a decentralized reinforcement learning (RL) methodology for D2D graph discovery that promotes communication of non-sensitive yet impactful data-points over trusted yet reliable links. Each device functions as an RL agent, training a policy to predict the impact of incoming links. Local (device-level) and global rewards are coupled through message passing within and between device clusters. Numerical experiments confirm the advantages offered by our method in terms of convergence speed and straggler resilience across several datasets and FL schemes.

Embedding Alignment for Unsupervised Federated Learning via Smart Data Exchange

Aug 04, 2022

Abstract:Federated learning (FL) has been recognized as one of the most promising solutions for distributed machine learning (ML). In most of the current literature, FL has been studied for supervised ML tasks, in which edge devices collect labeled data. Nevertheless, in many applications, it is impractical to assume existence of labeled data across devices. To this end, we develop a novel methodology, Cooperative Federated unsupervised Contrastive Learning (CF-CL), for FL across edge devices with unlabeled datasets. CF-CL employs local device cooperation where data are exchanged among devices through device-to-device (D2D) communications to avoid local model bias resulting from non-independent and identically distributed (non-i.i.d.) local datasets. CF-CL introduces a push-pull smart data sharing mechanism tailored to unsupervised FL settings, in which, each device pushes a subset of its local datapoints to its neighbors as reserved data points, and pulls a set of datapoints from its neighbors, sampled through a probabilistic importance sampling technique. We demonstrate that CF-CL leads to (i) alignment of unsupervised learned latent spaces across devices, (ii) faster global convergence, allowing for less frequent global model aggregations; and (iii) is effective in extreme non-i.i.d. data settings across the devices.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge