Robert Radwin

A System for Human-Robot Teaming through End-User Programming and Shared Autonomy

Jan 22, 2024

Abstract:Many industrial tasks, such as sanding, installing fasteners, and wire harnessing, are difficult to automate due to task complexity and variability. We instead investigate deploying robots in an assistive role for these tasks, where the robot assumes the physical task burden and the skilled worker provides both the high-level task planning and low-level feedback necessary to effectively complete the task. In this article, we describe the development of a system for flexible human-robot teaming that combines state-of-the-art methods in end-user programming and shared autonomy and its implementation in sanding applications. We demonstrate the use of the system in two types of sanding tasks, situated in aircraft manufacturing, that highlight two potential workflows within the human-robot teaming setup. We conclude by discussing challenges and opportunities in human-robot teaming identified during the development, application, and demonstration of our system.

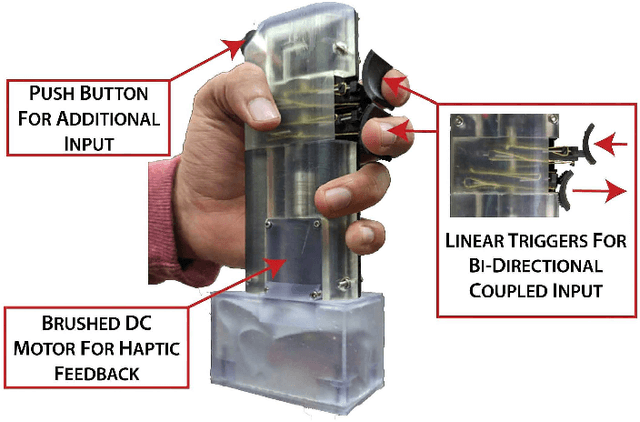

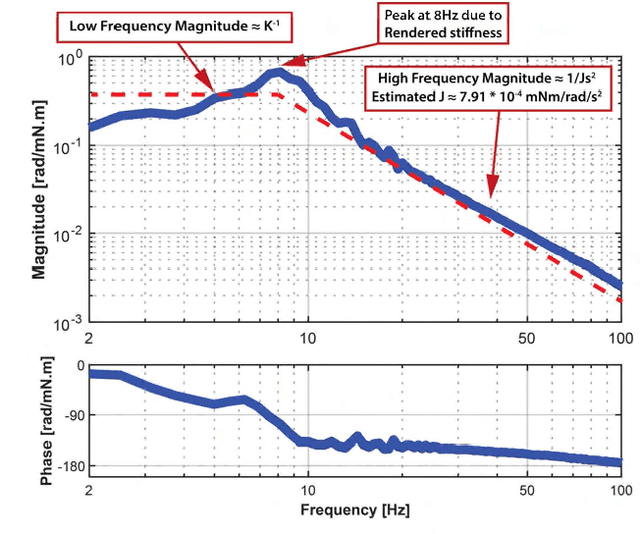

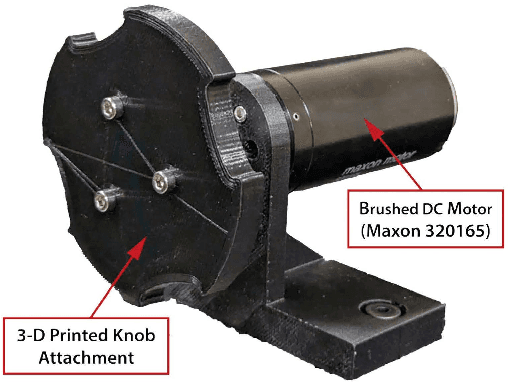

Handheld Haptic Device with Coupled Bidirectional Input

May 30, 2023

Abstract:Handheld kinesthetic haptic interfaces can provide greater mobility and richer tactile information as compared to traditional grounded devices. In this paper, we introduce a new handheld haptic interface which takes input using bidirectional coupled finger flexion. We present the device design motivation and design details and experimentally evaluate its performance in terms of transparency and rendering bandwidth using a handheld prototype device. In addition, we assess the device's functional performance through a user study comparing the proposed device to a commonly used grounded input device in a set of targeting and tracking tasks.

Coordinated Multi-Robot Shared Autonomy Based on Scheduling and Demonstrations

Mar 28, 2023

Abstract:Shared autonomy methods, where a human operator and a robot arm work together, have enabled robots to complete a range of complex and highly variable tasks. Existing work primarily focuses on one human sharing autonomy with a single robot. By contrast, in this paper we present an approach for multi-robot shared autonomy that enables one operator to provide real-time corrections across two coordinated robots completing the same task in parallel. Sharing autonomy with multiple robots presents fundamental challenges. The human can only correct one robot at a time, and without coordination, the human may be left idle for long periods of time. Accordingly, we develop an approach that aligns the robot's learned motions to best utilize the human's expertise. Our key idea is to leverage Learning from Demonstration (LfD) and time warping to schedule the motions of the robots based on when they may require assistance. Our method uses variability in operator demonstrations to identify the types of corrections an operator might apply during shared autonomy, leverages flexibility in how quickly the task was performed in demonstrations to aid in scheduling, and iteratively estimates the likelihood of when corrections may be needed to ensure that only one robot is completing an action requiring assistance. Through a preliminary simulated study, we show that our method can decrease the overall time spent sanding by iteratively estimating the times when each robot could need assistance and generating an optimized schedule that allows the operator to provide corrections to each robot during these times.

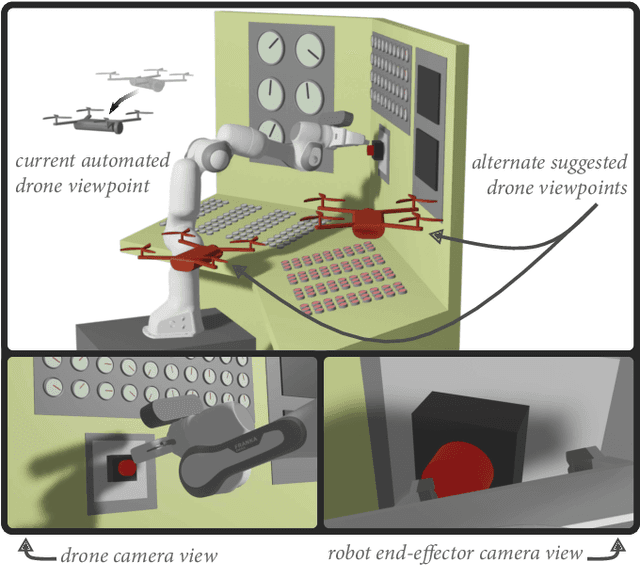

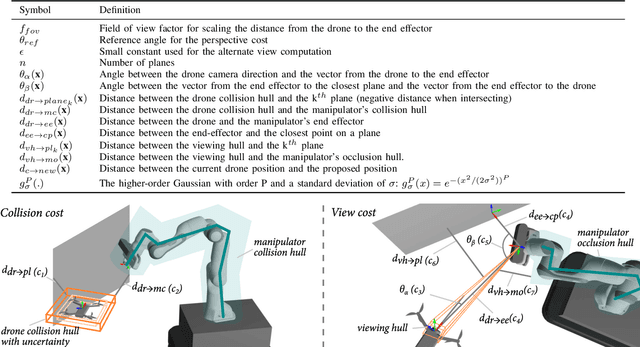

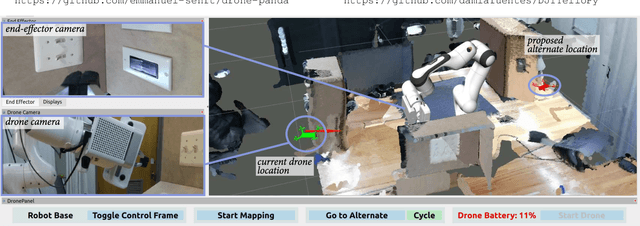

A Method For Automated Drone Viewpoints to Support Remote Robot Manipulation

Aug 08, 2022

Abstract:Drones can provide a minimally-constrained adapting camera view to support robot telemanipulation. Furthermore, the drone view can be automated to reduce the burden on the operator during teleoperation. However, existing approaches do not focus on two important aspects of using a drone as an automated view provider. The first is how the drone should select from a range of quality viewpoints within the workspace (e.g., opposite sides of an object). The second is how to compensate for unavoidable drone pose uncertainty in determining the viewpoint. In this paper, we provide a nonlinear optimization method that yields effective and adaptive drone viewpoints for telemanipulation with an articulated manipulator. Our first key idea is to use sparse human-in-the-loop input to toggle between multiple automatically-generated drone viewpoints. Our second key idea is to introduce optimization objectives that maintain a view of the manipulator while considering drone uncertainty and the impact on viewpoint occlusion and environment collisions. We provide an instantiation of our drone viewpoint method within a drone-manipulator remote teleoperation system. Finally, we provide an initial validation of our method in tasks where we complete common household and industrial manipulations.

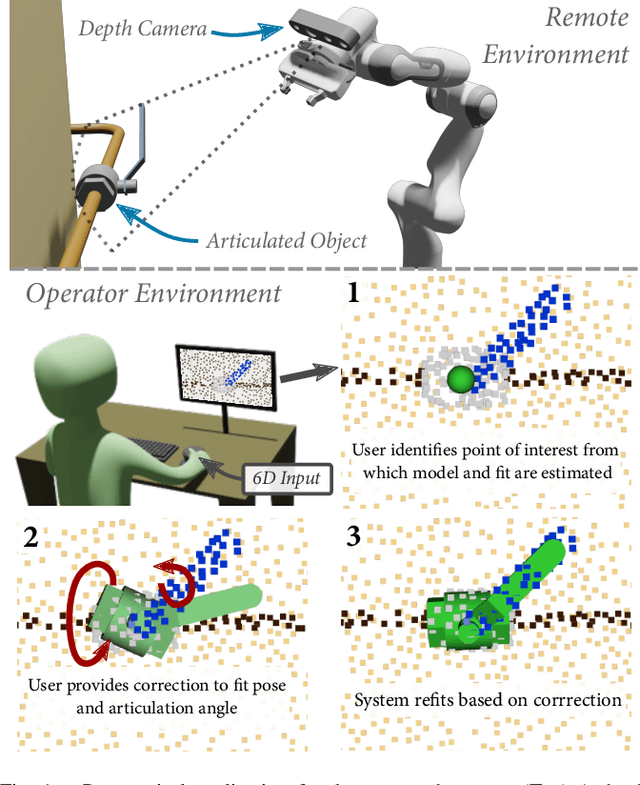

Registering Articulated Objects With Human-in-the-loop Corrections

Mar 11, 2022

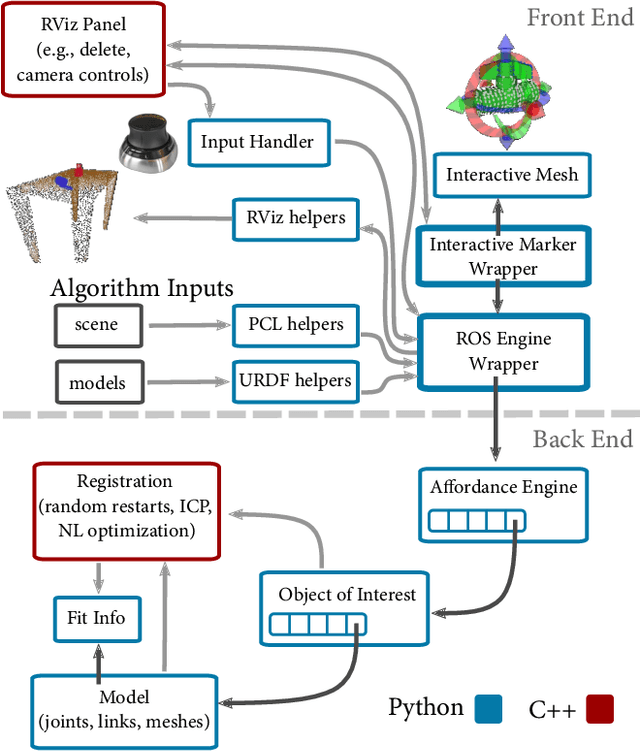

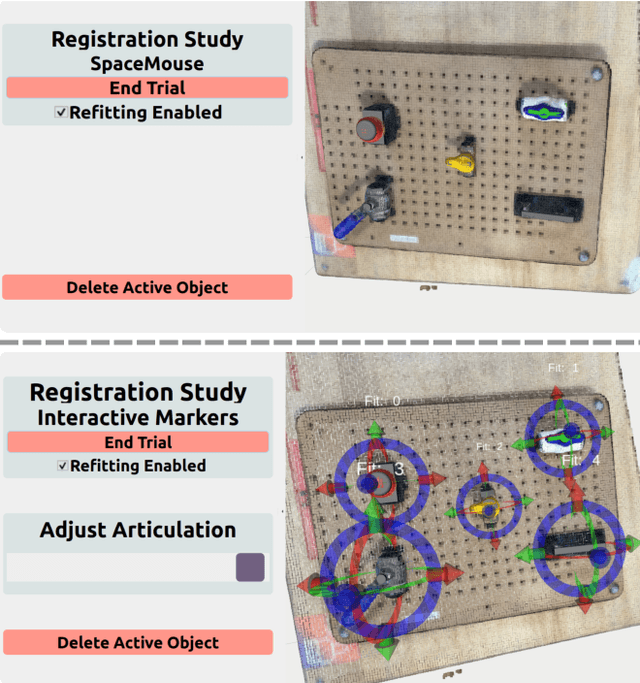

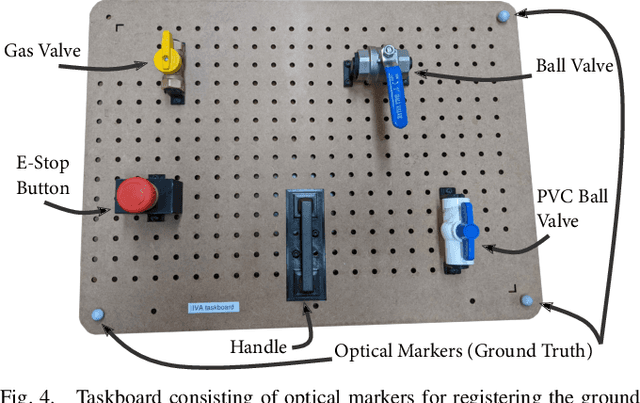

Abstract:Remotely programming robots to execute tasks often relies on registering objects of interest in the robot's environment. Frequently, these tasks involve articulating objects, such as opening or closing a valve. However, existing human-in-the-loop methods for registering objects do not consider articulations and the corresponding impact on the geometry of the object, which can cause the methods to fail. In this work, we present an approach where the registration system attempts to automatically determine the object model, pose, and articulation for user-selected points using a nonlinear iterative closest point algorithm. When the automated fitting is incorrect, the operator can iteratively intervene with corrections, after which the system will refit the object. We present an implementation of our fitting procedure and evaluate it with a user study that shows that it can improve user performance, in measures of time on task and task load, ease of use, and usefulness compared to a manual registration approach. We also present a situated example that demonstrates the integration of our method in an end-to-end system for articulating a remote valve.

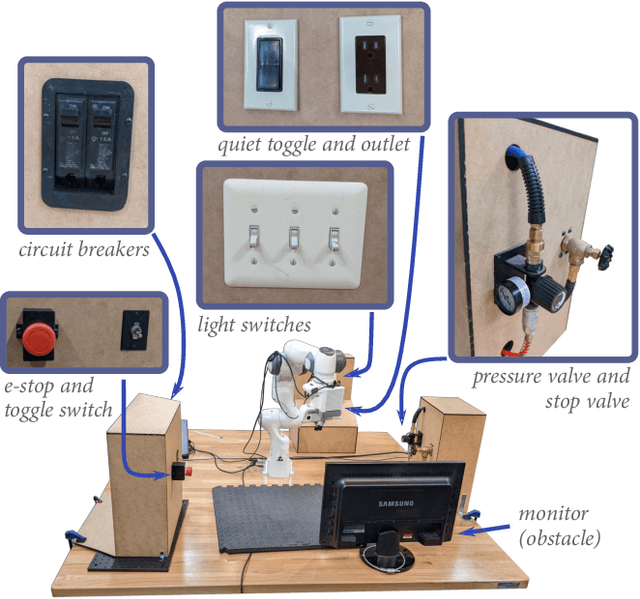

Task-Level Authoring for Remote Robot Teleoperation

Sep 06, 2021

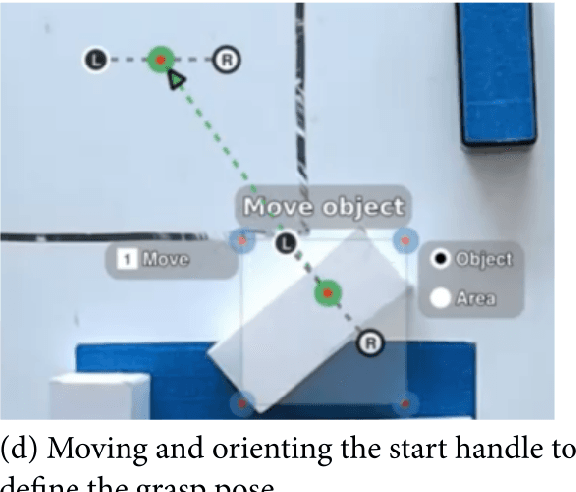

Abstract:Remote teleoperation of robots can broaden the reach of domain specialists across a wide range of industries such as home maintenance, health care, light manufacturing, and construction. However, current direct control methods are impractical, and existing tools for programming robots remotely have focused on users with significant robotic experience. Extending robot remote programming to end users, i.e., users who are experts in a domain but novices in robotics, requires tools that balance the rich features necessary for complex teleoperation tasks with ease of use. The primary challenge to usability is that novice users are unable to specify complete and robust task plans to allow a robot to perform duties autonomously, particularly in highly variable environments. Our solution is to allow operators to specify shorter sequences of high-level commands, which we call task-level authoring, to create periods of variable robot autonomy. This approach allows inexperienced users to create robot behaviors in uncertain environments by interleaving exploration, specification of behaviors, and execution as separate steps. End users are able to break down the specification of tasks and adapt to the current needs of the interaction and environments, combining the reactivity of direct control with asynchronous operation. In this paper, we describe a prototype system contextualized in light manufacturing and its empirical validation in a user study where 18 participants with some programming experience were able to perform a variety of complex telemanipulation tasks with little training. Our results show that our approach allowed users to create flexible periods of autonomy and solve rich manipulation tasks. Furthermore, participants significantly preferred our system over more direct comparison interfaces, demonstrating the potential of our approach.

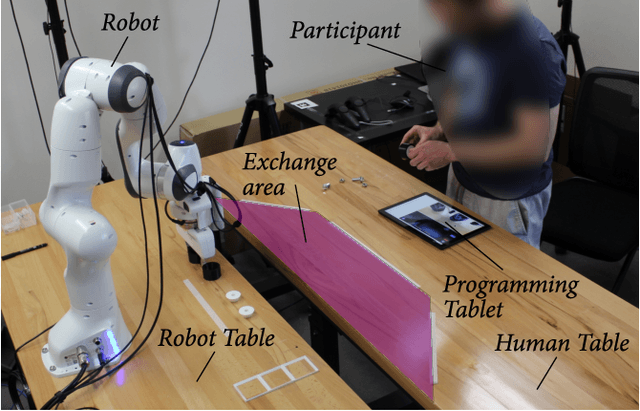

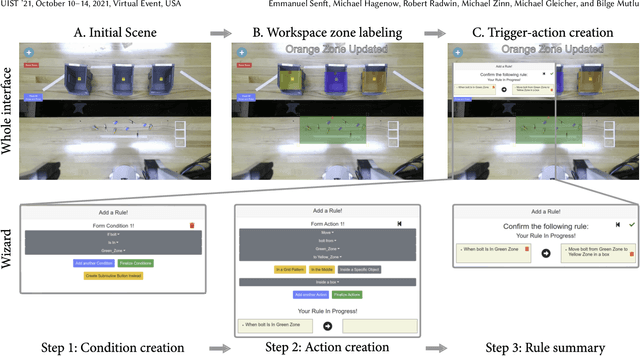

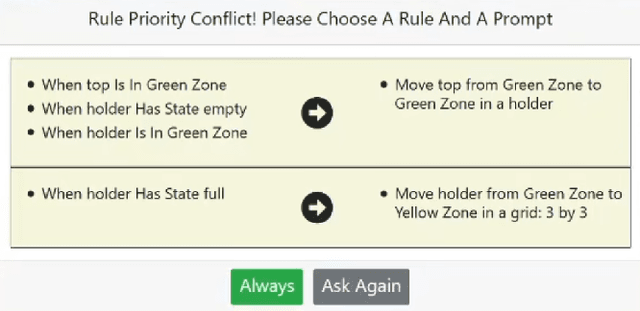

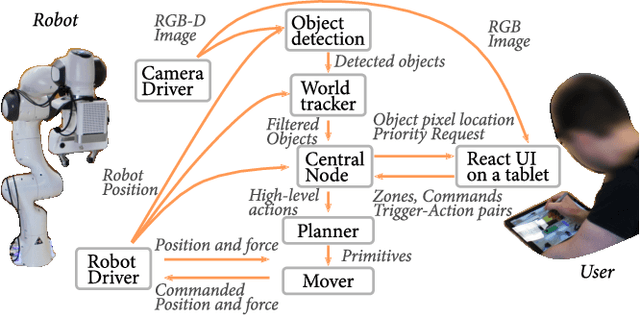

Situated Live Programming for Human-Robot Collaboration

Aug 08, 2021

Abstract:We present situated live programming for human-robot collaboration, an approach that enables users with limited programming experience to program collaborative applications for human-robot interaction. Allowing end users, such as shop floor workers, to program collaborative robots themselves would make it easy to "retask" robots from one process to another, facilitating their adoption by small and medium enterprises. Our approach builds on the paradigm of trigger-action programming (TAP) by allowing end users to create rich interactions through simple trigger-action pairings. It enables end users to iteratively create, edit, and refine a reactive robot program while executing partial programs. This live programming approach enables the user to utilize the task space and objects by incrementally specifying situated trigger-action pairs, substantially lowering the barrier to entry for programming or reprogramming robots for collaboration. We instantiate situated live programming in an authoring system where users can create trigger-action programs by annotating an augmented video feed from the robot's perspective and assign robot actions to trigger conditions. We evaluated this system in a study where participants (n = 10) developed robot programs for solving collaborative light-manufacturing tasks. Results showed that users with little programming experience were able to program HRC tasks in an interactive fashion and our situated live programming approach further supported individualized strategies and workflows. We conclude by discussing opportunities and limitations of the proposed approach, our system implementation, and our study and discuss a roadmap for expanding this approach to a broader range of tasks and applications.
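To make the trigger-action idea in this abstract concrete, here is a minimal sketch of a reactive program built from trigger-action pairs that can be extended while it is already running. It is not the authors' system: the `Rule` and `LiveProgram` names, the dictionary-based world state, and the string "actions" are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

# Hypothetical world state: a flat dictionary of observations.
State = Dict[str, object]


@dataclass
class Rule:
    """One trigger-action pair (illustrative, not from the paper)."""
    name: str
    trigger: Callable[[State], bool]  # condition on the observed state
    action: Callable[[State], str]    # returns a description of the robot action


class LiveProgram:
    """A reactive program whose rules can be added between steps."""

    def __init__(self) -> None:
        self.rules: List[Rule] = []

    def add_rule(self, rule: Rule) -> None:
        self.rules.append(rule)

    def step(self, state: State) -> List[str]:
        # Fire every rule whose trigger holds for the current state.
        return [r.action(state) for r in self.rules if r.trigger(state)]


program = LiveProgram()
program.add_rule(Rule(
    name="hand_over_bracket",
    trigger=lambda s: s.get("part_in_zone") == "bracket",
    action=lambda s: "pick bracket and hand to worker",
))

# Run the partial program once, then refine it by adding a second rule.
first = program.step({"part_in_zone": "bracket"})

program.add_rule(Rule(
    name="retreat_when_hand_near",
    trigger=lambda s: bool(s.get("hand_near_robot")),
    action=lambda s: "retreat to home pose",
))
second = program.step({"part_in_zone": "bracket", "hand_near_robot": True})
idle = program.step({"part_in_zone": None})
```

In this sketch the "live" aspect is only that `add_rule` can be called between calls to `step`; the situated annotation of a video feed described in the abstract is outside its scope.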

Informing Real-time Corrections in Corrective Shared Autonomy Through Expert Demonstrations

Jul 10, 2021

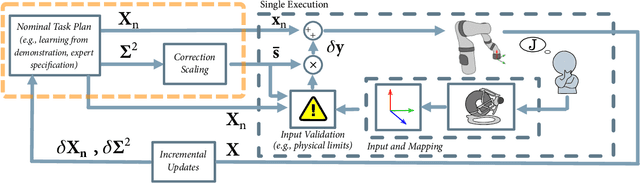

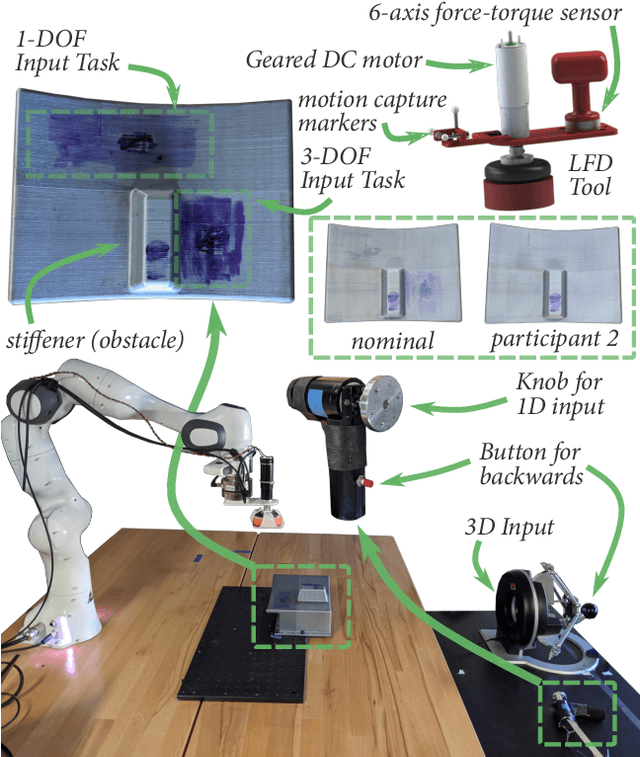

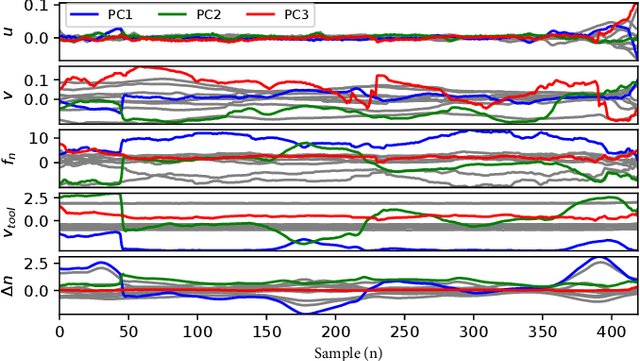

Abstract:Corrective Shared Autonomy is a method where human corrections are layered on top of an otherwise autonomous robot behavior. Specifically, a Corrective Shared Autonomy system leverages an external controller to allow corrections across a range of task variables (e.g., spinning speed of a tool, applied force, path) to address the specific needs of a task. However, this inherent flexibility makes the choice of what corrections to allow at any given instant difficult to determine. This choice of corrections includes determining appropriate robot state variables, scaling for these variables, and a way to allow a user to specify the corrections in an intuitive manner. This paper enables efficient Corrective Shared Autonomy by providing an automated solution based on Learning from Demonstration to both extract the nominal behavior and address these core problems. Our evaluation shows that this solution enables users to successfully complete a surface cleaning task, identifies different strategies users employed in applying corrections, and points to future improvements for our solution.

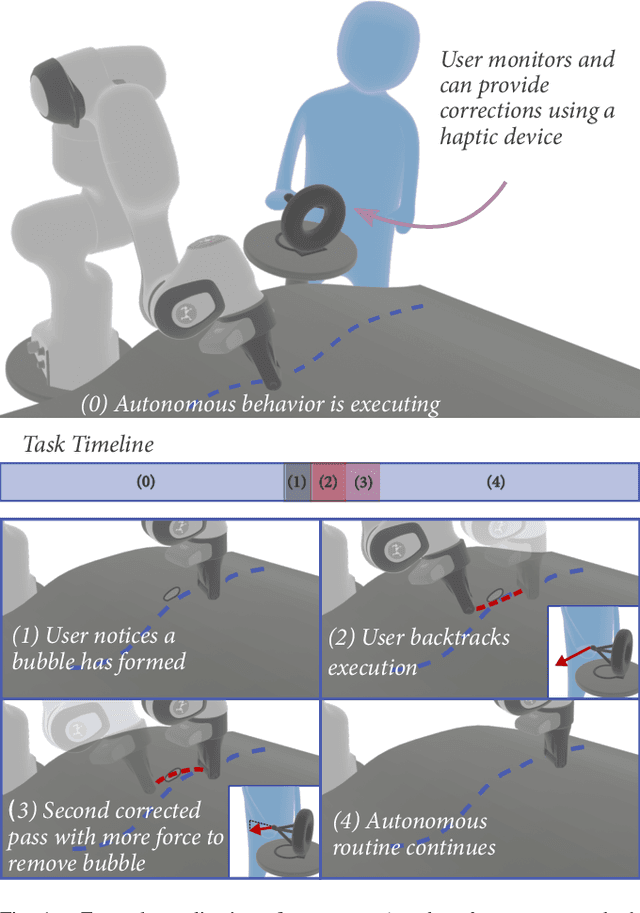

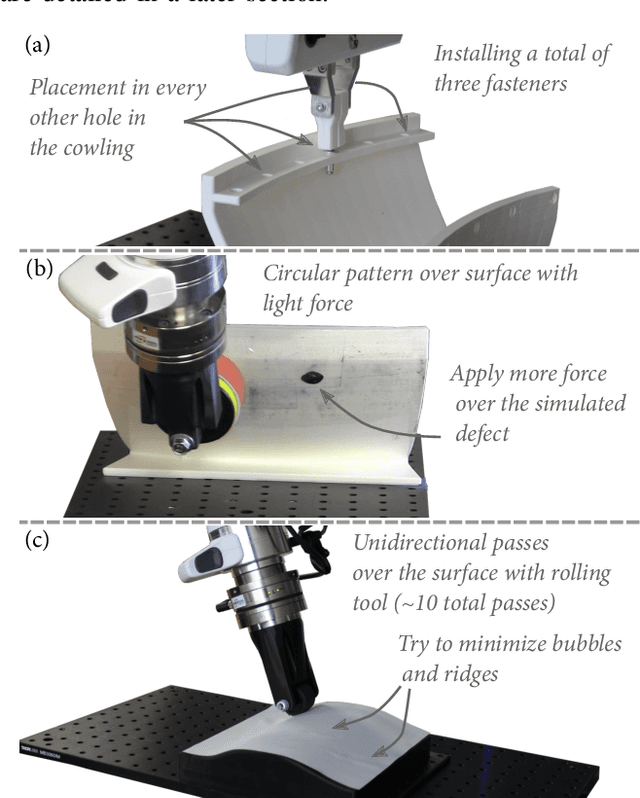

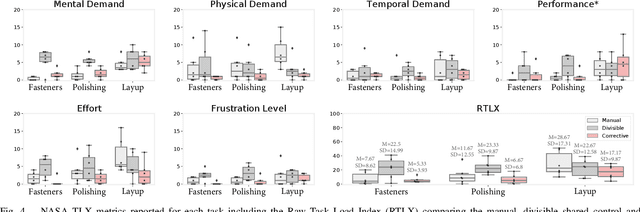

Corrective Shared Autonomy for Addressing Task Variability

Feb 14, 2021

Abstract:Many tasks, particularly those involving interaction with the environment, are characterized by high variability, making robotic autonomy difficult. One flexible solution is to introduce the input of a human with superior experience and cognitive abilities as part of a shared autonomy policy. However, current methods for shared autonomy are not designed to address the wide range of necessary corrections (e.g., positions, forces, execution rate, etc.) that the user may need to provide to address task variability. In this paper, we present corrective shared autonomy, where users provide corrections to key robot state variables on top of an otherwise autonomous task model. We provide an instantiation of this shared autonomy paradigm and demonstrate its viability and benefits such as low user effort and physical demand via a system-level user study on three tasks involving variability situated in aircraft manufacturing.
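As a rough illustration of layering corrections on top of an otherwise autonomous task model, the sketch below adds a scaled user input to nominal values of a few robot state variables. The variable names, the additive form, and the per-variable scaling are assumptions chosen for illustration; the abstract does not specify how the authors combine the two.

```python
from typing import Dict


def apply_correction(
    nominal: Dict[str, float],
    user_input: Dict[str, float],
    scale: Dict[str, float],
) -> Dict[str, float]:
    """Return nominal state variables offset by scaled user corrections.

    Variables with no user input (or no scale entry) keep their nominal value.
    """
    return {
        name: value + scale.get(name, 0.0) * user_input.get(name, 0.0)
        for name, value in nominal.items()
    }


# Hypothetical nominal behavior at one instant of a sanding-like task.
nominal = {"force_n": 10.0, "rate": 1.0, "offset_m": 0.0}

# Hypothetical normalized controller input in [-1, 1] and per-variable scaling.
user_input = {"force_n": 0.5}
scale = {"force_n": 4.0, "rate": 0.5, "offset_m": 0.02}

commanded = apply_correction(nominal, user_input, scale)
```

With no user input the commanded values equal the nominal ones, which matches the idea that the robot behaves autonomously unless the operator intervenes.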
