Randall S. Burd

Exploring Runtime Decision Support for Trauma Resuscitation

Jul 06, 2022

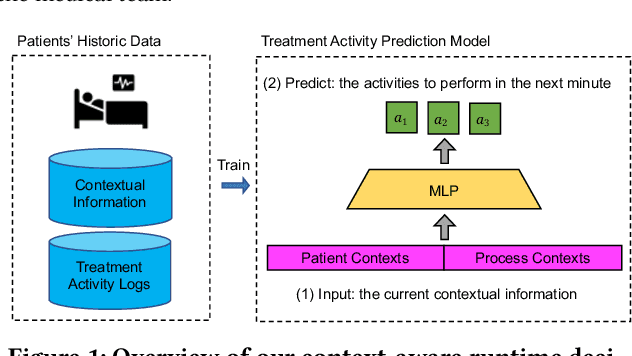

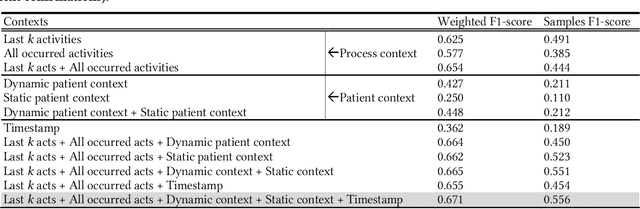

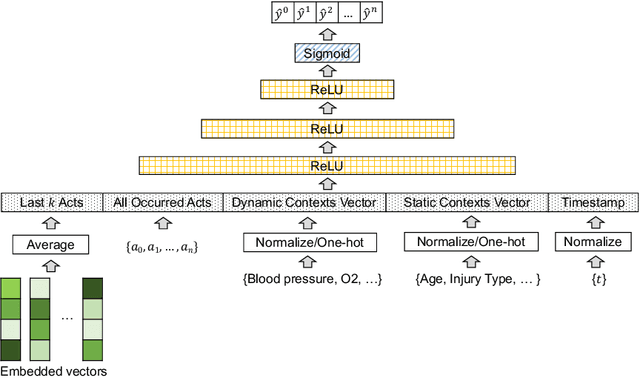

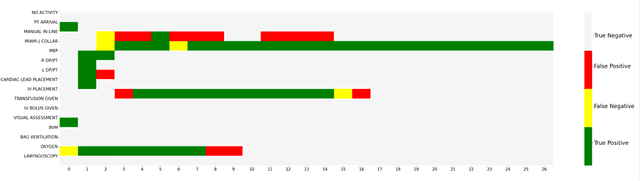

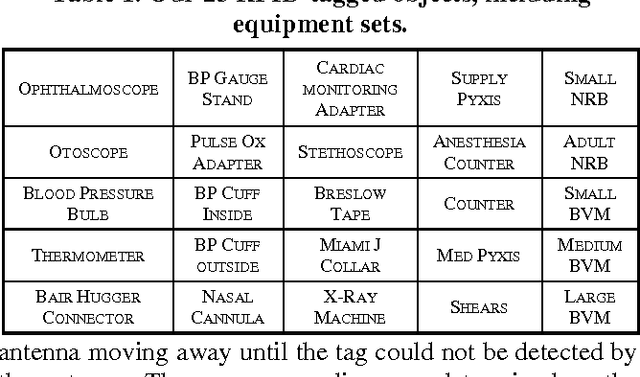

Abstract: AI-based recommender systems have been successfully applied in many domains (e.g., e-commerce, feed ranking). Medical experts believe that incorporating such methods into a clinical decision support system may help reduce medical team errors and improve patient outcomes during treatment processes (e.g., trauma resuscitation, surgical processes). Limited research, however, has been done on automatic, data-driven treatment decision support. We explored the feasibility of building a treatment recommender system that provides runtime next-minute activity predictions. The system uses patient context (e.g., demographics and vital signs) and process context (e.g., activities) to continuously predict the activities that will be performed in the next minute. We evaluated our system on a pre-recorded dataset of trauma resuscitations and conducted an ablation study on different model variants. The best model achieved an average F1-score of 0.67 across 61 activity types. We include medical team feedback and discuss future work.
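The abstract reports an average F1-score over activity types but does not say how the average was taken. The sketch below shows one common reading, a macro average: F1 is computed per activity type and the per-type scores are then averaged with equal weight. The function names and the toy data are hypothetical and are not taken from the paper.

```python
def f1(y_true, y_pred):
    """F1-score for one activity type from 0/1 labels per minute."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    if tp == 0:
        return 0.0  # no true positives: define F1 as 0 to avoid dividing by zero
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


def macro_f1(true_rows, pred_rows):
    """Average the per-activity F1 scores (assumed macro averaging).

    Each row is one minute; each column is one activity type.
    """
    per_activity = [
        f1(true_col, pred_col)
        for true_col, pred_col in zip(zip(*true_rows), zip(*pred_rows))
    ]
    return sum(per_activity) / len(per_activity)


# Toy example: 3 minutes, 2 hypothetical activity types.
true_rows = [[1, 0], [1, 1], [0, 1]]
pred_rows = [[1, 0], [0, 1], [0, 1]]
score = macro_f1(true_rows, pred_rows)
print(round(score, 3))
```

With macro averaging, a rare activity counts as much as a frequent one, so a single headline number can hide weak performance on individual activity types.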

Generating Privacy-Preserving Process Data with Deep Generative Models

Mar 15, 2022

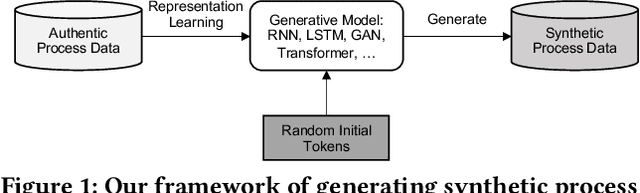

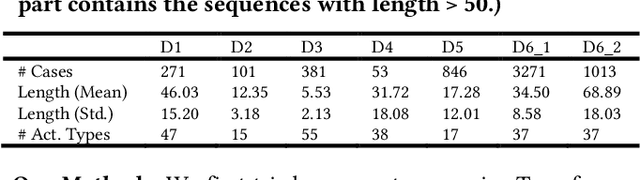

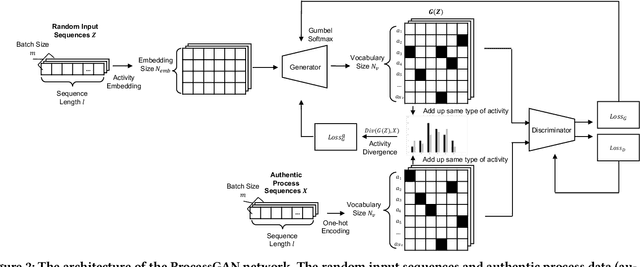

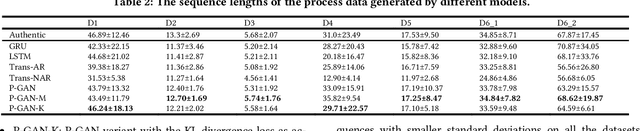

Abstract: Process data containing confidential information cannot be shared publicly, which hinders research in process data mining and analytics. Data encryption methods have been studied to protect such data, but encrypted data may still be decrypted, which can lead to the identification of individuals. We experimented with different representation learning models and used the learned models to generate synthetic process data. We introduced a generative adversarial network for process data generation (ProcessGAN) that uses two Transformer networks, one as the generator and one as the discriminator. We evaluated ProcessGAN and traditional models on six real-world datasets, of which two are public and four were collected in medical domains. We used statistical metrics and supervised learning scores to evaluate the synthetic data. We also used process mining to discover workflows for the authentic and synthetic datasets and had medical experts evaluate the clinical applicability of the synthetic workflows. We found that ProcessGAN outperformed traditional sequential models when trained on small authentic datasets of complex processes. ProcessGAN better represented the long-range dependencies between activities, which is important for complicated processes such as medical processes. Traditional sequential models performed better when trained on large datasets of simple processes. We conclude that ProcessGAN can generate a large amount of sharable synthetic process data indistinguishable from authentic data.

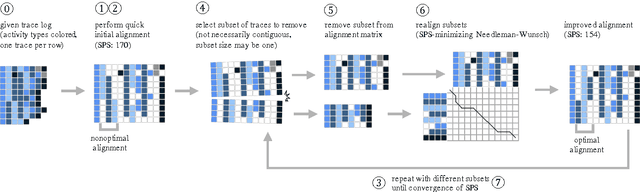

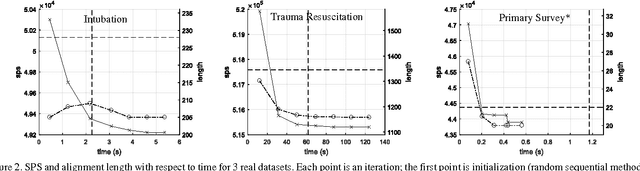

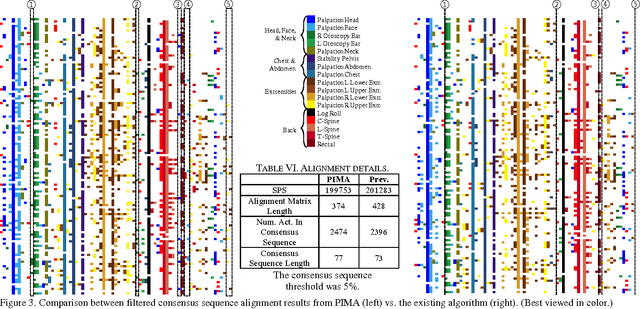

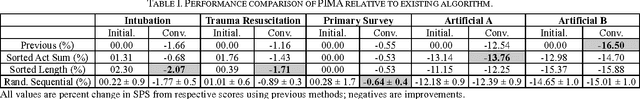

Process-oriented Iterative Multiple Alignment for Medical Process Mining

Sep 16, 2017

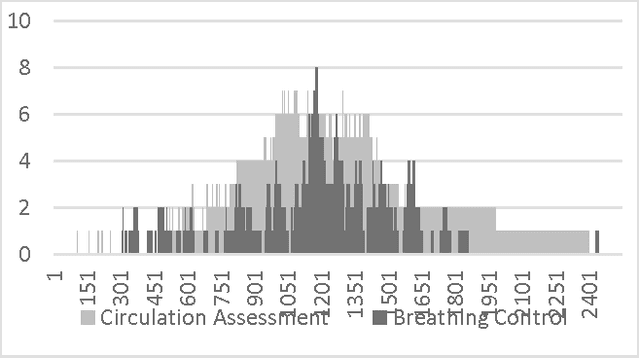

Abstract: Adapted from biological sequence alignment, trace alignment is a process mining technique used to visualize and analyze workflow data. Any analysis done with this method, however, is affected by the alignment quality. The best existing trace alignment techniques use progressive guide-trees to heuristically approximate the optimal alignment in $O(N^2L^2)$ time. These algorithms are heavily dependent on the selected guide-tree metric, often return sum-of-pairs-score-reducing errors that interfere with interpretation, and are computationally intensive for large datasets. To alleviate these issues, we propose process-oriented iterative multiple alignment (PIMA), which contains specialized optimizations to better handle workflow data. We demonstrate that PIMA is a flexible framework capable of achieving better sum-of-pairs score than existing trace alignment algorithms in only $O(NL^2)$ time. We applied PIMA to analyzing medical workflow data, showing how iterative alignment can better represent the data and facilitate the extraction of insights from data visualization.
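The abstract compares alignments by their sum-of-pairs score. As a rough illustration of that metric (not of PIMA itself), the sketch below scores a finished alignment by comparing every pair of traces in every column. The scoring values (`match`, `mismatch`, `gap`) and the convention that gap-gap pairs score zero are assumptions chosen for the example; the paper may use a different scheme.

```python
from itertools import combinations


def sum_of_pairs(alignment, match=1, mismatch=-1, gap=-1, gap_char="-"):
    """Sum-of-pairs score of a multiple alignment given as equal-length rows."""
    if len({len(row) for row in alignment}) > 1:
        raise ValueError("all aligned traces must have the same length")
    total = 0
    for column in zip(*alignment):
        # Compare every unordered pair of traces within this column.
        for a, b in combinations(column, 2):
            if a == gap_char and b == gap_char:
                continue  # assumed convention: gap-gap pairs contribute nothing
            if a == gap_char or b == gap_char:
                total += gap
            elif a == b:
                total += match
            else:
                total += mismatch
    return total


# Three hypothetical traces, one letter per activity, already aligned.
aligned = [
    list("AB-C"),
    list("ABDC"),
    list("A-DC"),
]
print(sum_of_pairs(aligned))  # prints 4
```

A higher score means that more traces agree on the activity in each column, which is why errors that reduce the score make the aligned view harder to interpret.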

Progress Estimation and Phase Detection for Sequential Processes

Jul 14, 2017

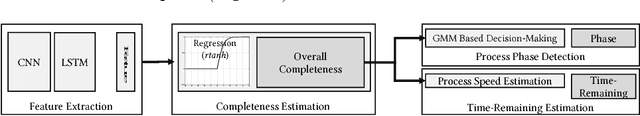

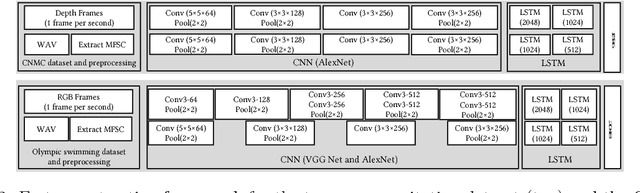

Abstract: Process modeling and understanding are fundamental for advanced human-computer interfaces and automation systems. Most recent research has focused on activity recognition, but little has been done on sensor-based detection of process progress. We introduce a real-time, sensor-based system for modeling, recognizing, and estimating the progress of a work process. We implemented a multimodal deep learning structure to extract the relevant spatio-temporal features from multiple sensory inputs and used a novel deep regression structure for overall completeness estimation. Using process completeness estimation with a Gaussian mixture model, our system can predict the phase of sequential processes. The performance speed, calculated from the completeness estimate, allows online estimation of the remaining time. To train our system, we introduced a novel rectified hyperbolic tangent (rtanh) activation function and a conditional loss. Our system was tested on data from a medical process (trauma resuscitation) and from sports events (Olympic swimming competition). Our system outperformed the existing trauma-resuscitation phase detectors with a phase detection accuracy of over 86%, an F1-score of 0.67, a completeness estimation error of under 12.6%, and a remaining-time estimation error of less than 7.5 minutes. For the Olympic swimming dataset, our system achieved an accuracy of 88%, an F1-score of 0.58, a completeness estimation error of 6.3%, and a remaining-time estimation error of 2.9 minutes.
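The abstract says that a performance speed derived from the completeness estimate allows the remaining time to be estimated online, without giving the formula. One simple reading, shown here purely as an assumption, treats speed as the average completeness gained per minute so far and extrapolates it over the unfinished fraction. The function name and the example numbers are hypothetical.

```python
def remaining_time(elapsed_minutes, completeness):
    """Estimate remaining minutes from elapsed time and estimated completeness.

    Assumes the process continues at its average speed so far; the paper's
    actual speed calculation may differ.
    """
    if elapsed_minutes <= 0:
        raise ValueError("elapsed_minutes must be positive")
    if not 0.0 < completeness <= 1.0:
        raise ValueError("completeness must be in (0, 1]")
    speed = completeness / elapsed_minutes  # fraction completed per minute
    return (1.0 - completeness) / speed


# Hypothetical case: 12 minutes in, the model estimates 40% completeness.
estimate = remaining_time(12.0, 0.4)
print(f"about {estimate:.1f} minutes remaining")
```

Because the estimate divides by the completeness value, small errors in early completeness predictions produce large swings in the remaining-time estimate.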

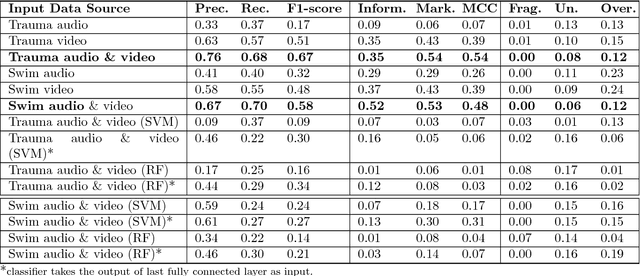

Concurrent Activity Recognition with Multimodal CNN-LSTM Structure

Feb 06, 2017

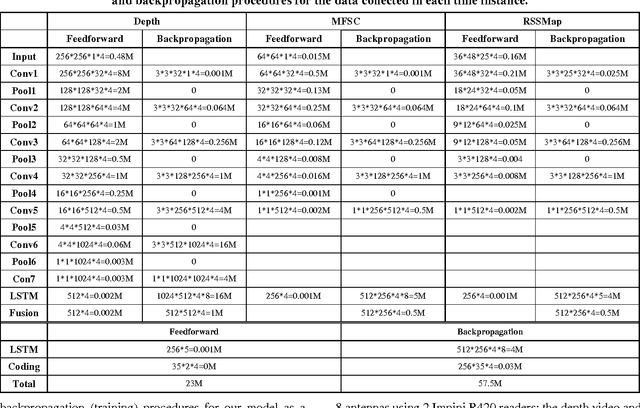

Abstract: We introduce a system that recognizes concurrent activities from real-world data captured by multiple sensors of different types. The recognition is achieved in two steps. First, we extract spatial and temporal features from the multimodal data. We feed each data type into a convolutional neural network that extracts spatial features, followed by a long short-term memory network that extracts temporal information from the sensory data. The extracted features are then fused for decision making in the second step. Second, we achieve concurrent activity recognition with a single classifier that produces a binary output vector whose elements indicate whether the corresponding activity types are currently in progress. We tested our system on three datasets from different domains, recorded using different sensors, and achieved performance comparable to existing systems designed specifically for those domains. Our system is the first to address concurrent activity recognition with multisensory data using a single model, which is scalable, simple to train, and easy to deploy.
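The binary output vector described in the abstract can be illustrated with a small decoding step. The sketch below assumes the classifier emits one raw score (logit) per activity type and that each score is passed through a sigmoid and thresholded independently; the abstract does not state this, and the activity names, the threshold, and the function names are hypothetical.

```python
import math

# Hypothetical activity labels; the real label set is not given in the abstract.
ACTIVITIES = ["activity_a", "activity_b", "activity_c"]


def sigmoid(x):
    """Map a raw score to a value between 0 and 1."""
    return 1.0 / (1.0 + math.exp(-x))


def decode_concurrent(logits, threshold=0.5):
    """Turn one logit per activity into a 0/1 vector (assumed decoding rule)."""
    if len(logits) != len(ACTIVITIES):
        raise ValueError("expected one logit per activity type")
    return [1 if sigmoid(z) >= threshold else 0 for z in logits]


def active_names(binary_vector):
    """List the activities whose bit is set."""
    return [name for name, bit in zip(ACTIVITIES, binary_vector) if bit]


bits = decode_concurrent([2.0, -1.5, 0.3])
print(bits, active_names(bits))  # prints [1, 0, 1] ['activity_a', 'activity_c']
```

Because each element is decided independently, any number of activities can be flagged as in progress at once, which is what separates this setup from single-label classification.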
