Quang-Cuong Pham

Estimating Force Interactions of Deformable Linear Objects from their Shapes

Feb 01, 2026

Abstract:This work introduces an analytical approach for detecting and estimating external forces acting on deformable linear objects (DLOs) using only their observed shapes. In many robot-wire interaction tasks, contact occurs not at the end-effector but at other points along the robot's body. Such scenarios arise when robots manipulate wires indirectly (e.g., by nudging) or when wires act as passive obstacles in the environment. Accurately identifying these interactions is crucial for safe and efficient trajectory planning, helping to prevent wire damage, avoid restricted robot motions, and mitigate potential hazards. Existing approaches often rely on expensive external force-torque sensors or assume that contacts occur at the end-effector for accurate force estimation. Using wire shape information acquired from a depth camera, and under the assumption that the wire is in or near static equilibrium, our method estimates both the location and magnitude of external forces without additional prior knowledge. This is achieved by exploiting derived consistency conditions and solving a system of linear equations based on force-torque balance along the wire. The approach was validated in simulation, where it achieved high accuracy, and in real-world experiments, where accurate estimation was demonstrated in selected interaction scenarios.
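As a rough illustration of the "system of linear equations based on force-torque balance" mentioned above, the following is a minimal planar sketch and not the paper's method. It assumes the contact locations are already known (the paper estimates them as well), treats only the global balance of a body in static equilibrium, and uses a hypothetical helper name, `solve_contact_forces`.

```python
import numpy as np

def solve_contact_forces(contact_points, known_force, known_point):
    """Solve planar force and torque balance for unknown contact forces.

    contact_points: (n, 2) hypothetical contact locations, assumed known here.
    known_force, known_point: a known load (e.g. weight) and where it acts.
    Returns an (n, 2) array of forces; if the system is underdetermined,
    the minimum-norm solution is returned.
    """
    pts = np.asarray(contact_points, dtype=float)
    n = len(pts)
    A = np.zeros((3, 2 * n))
    for i, (x, y) in enumerate(pts):
        A[0, 2 * i] = 1.0        # contribution to the sum of Fx
        A[1, 2 * i + 1] = 1.0    # contribution to the sum of Fy
        A[2, 2 * i] = -y         # torque about the origin: x*Fy - y*Fx
        A[2, 2 * i + 1] = x
    fx, fy = known_force
    px, py = known_point
    # Unknown forces must cancel the known load and its torque.
    b = -np.array([fx, fy, px * fy - py * fx])
    solution, *_ = np.linalg.lstsq(A, b, rcond=None)
    return solution.reshape(n, 2)
```

For example, with contacts at (0, 0) and (1, 0) and a downward load of 2 units acting at (0.25, 0), the sketch returns vertical forces of 1.5 and 0.5, which is the usual lever-arm split.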

Fast Payload Calibration for Sensorless Contact Estimation Using Model Pre-training

Sep 05, 2024

Abstract:Force and torque sensing is crucial in robotic manipulation across both collaborative and industrial settings. Traditional methods for dynamics identification enable the detection and control of external forces and torques without the need for costly sensors. However, these approaches show limitations in scenarios where robot dynamics, particularly the end-effector payload, are subject to changes. Moreover, existing calibration techniques face trade-offs between efficiency and accuracy due to concerns over joint space coverage. In this paper, we introduce a calibration scheme that leverages pre-trained Neural Network models to learn calibrated dynamics across a wide range of joint space in advance. This offline learning strategy significantly reduces the need for online data collection, whether for selection of the optimal model or identification of payload features, necessitating merely a 4-second trajectory for online calibration. This method is particularly effective in tasks that require frequent dynamics recalibration for precise contact estimation. We further demonstrate the efficacy of this approach through applications in sensorless joint and task compliance, accounting for payload variability.

Automatic Fingerpad Customization for Precise and Stable Grasping of 3D-Print Parts

Mar 28, 2024

Abstract:The rise in additive manufacturing comes with unique opportunities and challenges. Massive part customization and rapid design changes are made possible with additive manufacturing. However, manufacturing industries that wish to adopt robotic automation to improve production efficiency could face challenges in gripper design and grasp planning due to the highly complex geometrical shapes resulting from massive part customization. Yet, current gripper designs for such objects are often manual and rely on ad-hoc design intuition. This is limiting, as such grippers lack the ability to grasp different objects or grasp points, which is important for practical implementations. Hence, we introduce a fast, end-to-end approach to customize rigid gripper fingerpads that can achieve precise and stable grasping for different objects at multiple grasp points. Our approach relies on two key components: (i) a method based on set Boolean operations (e.g., intersections, subtractions, and unions) to extract object features and synthesize gripper surfaces that conform to different local shapes to form caging grasps; (ii) a method to evaluate the grasp quality of synthesized grippers. We experimentally demonstrate the validity of our approach by synthesizing fingerpads that, once mounted on a physical robot gripper, are able to grasp different objects at multiple grasp points, all with tightly constrained grasps.

Grasping, Part Identification, and Pose Refinement in One Shot with a Tactile Gripper

Dec 29, 2023

Abstract:The rise in additive manufacturing comes with unique opportunities and challenges. Rapid changes to part design and massive part customization, distinctive to 3D printing (3DP), can be easily achieved. Customized parts that are unique yet exhibit similar features, such as dental moulds, shoe insoles, or engine vanes, could be industrially manufactured with 3DP. However, the opportunity for massive part customization comes with unique challenges for the existing production paradigm of robotics applications, as the current robotics paradigm for part identification and pose refinement is repetitive, and data-driven, object-dependent approaches are often used. Thus, a bottleneck exists in robotics applications for 3DP parts where massive customization is involved, as it is difficult for feature-based deep learning approaches to distinguish between similar parts such as shoe insoles belonging to different people. As such, we propose a method that augments patterns on 3DP parts so that grasping, part identification, and pose refinement can be executed in one shot with a tactile gripper. We also experimentally evaluate our approach from three perspectives, including real insertion tasks that mimic robotic sorting and packing, and achieve excellent classification results, a high insertion success rate of 95%, and sub-millimeter pose refinement accuracy.

Stable Modular Control via Contraction Theory for Reinforcement Learning

Nov 07, 2023

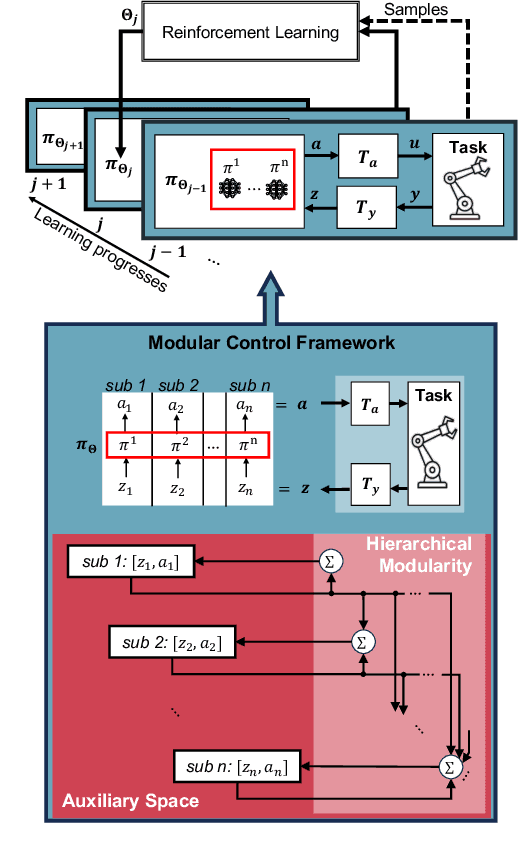

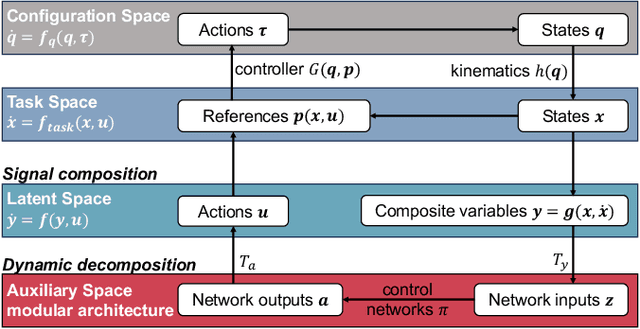

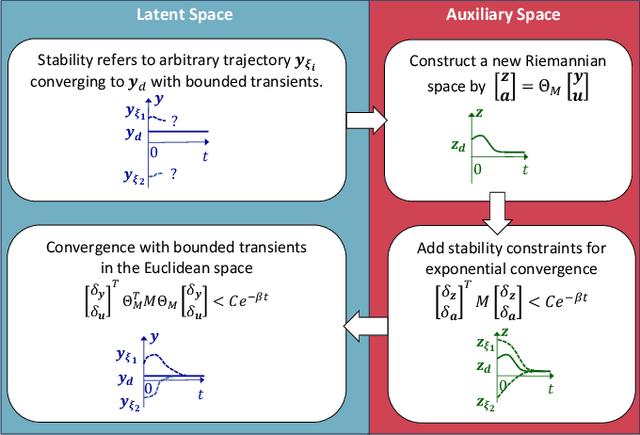

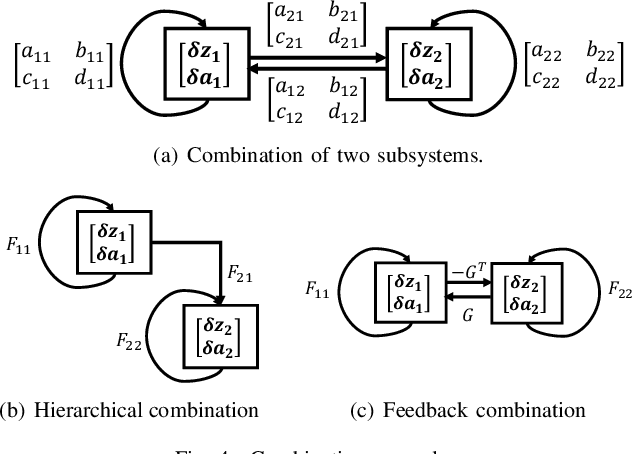

Abstract:We propose a novel way to integrate control techniques with reinforcement learning (RL) for stability, robustness, and generalization: leveraging contraction theory to realize modularity in neural control, which ensures that combining stable subsystems automatically preserves stability. We realize such modularity via signal composition and dynamic decomposition. Signal composition creates the latent space, within which RL is applied to maximize rewards. Dynamic decomposition is realized by a coordinate transformation that creates an auxiliary space, within which the latent signals are coupled in such a way that their combination preserves stability provided each signal, that is, each subsystem, has stable self-feedback. Leveraging modularity, the nonlinear stability problem is decomposed into algebraically solvable ones, namely the stability of the subsystems in the auxiliary space, yielding linear constraints on the input gradients of control networks that can be as simple as switching the signs of network weights. This minimally invasive method for stability allows arguably easy integration into modular neural architectures in machine learning, such as hierarchical RL, and improves their performance. We demonstrate in simulation the necessity and the effectiveness of our method: the necessity for robustness and generalization, and the effectiveness in improving hierarchical RL for manipulation learning.

Dynamic Manipulation of a Deformable Linear Object: Simulation and Learning

Oct 02, 2023

Abstract:We show that it is possible to learn an open-loop policy in simulation for the dynamic manipulation of a deformable linear object (DLO) -- e.g., a rope, wire, or cable -- that can be executed by a real robot without additional training. Our method is enabled by integrating an existing state-of-the-art DLO model (Discrete Elastic Rods) with MuJoCo, a robot simulator. We describe how this integration was done, check that validation results produced in simulation match what we expect from analysis of the physics, and apply policy optimization to train an open-loop policy from data collected only in simulation that uses a robot arm to fling a wire precisely between two obstacles. This policy achieves a success rate of 76.7% when executed by a real robot in hardware experiments without additional training on the real task.

Sensorless Physical Human-robot Interaction Using Deep-Learning

Sep 28, 2023

Abstract:Physical human-robot interaction has been an area of interest for decades. Collaborative tasks, such as joint compliance, demand high-quality joint torque sensing. While external torque sensors are reliable, they come with the drawbacks of being expensive and vulnerable to impacts. To address these issues, studies have been conducted to estimate external torques using only internal signals, such as joint states and current measurements. However, insufficient attention has been given to friction hysteresis approximation, which is crucial for tasks involving extensive dynamic to static state transitions. In this paper, we propose a deep-learning-based method that leverages a novel long-term memory scheme to achieve dynamics identification, accurately approximating the static hysteresis. We also introduce modifications to the well-known Residual Learning architecture, retaining high accuracy while reducing inference time. The robustness of the proposed method is illustrated through a joint compliance and task compliance experiment.

Planning Optimal Trajectories for Mobile Manipulators under End-effector Trajectory Continuity Constraint

Sep 21, 2023

Abstract:Mobile manipulators have been employed in many applications that are usually performed by multiple fixed-base robots or a large-size system, thanks to the mobility of the mobile base. However, the mobile base also brings redundancies to the system, which makes trajectory planning more challenging. One class of problems recently arising from mobile 3D printing is trajectory-continuous tasks, in which the end-effector is required to follow a designed continuous trajectory (time-parametrized path) in task space. This paper formulates and solves the optimal trajectory planning problem for mobile manipulators under an end-effector trajectory continuity constraint, which allows other constraints and trajectory optimization to be considered. To demonstrate our method, a discrete optimal trajectory planning algorithm is proposed to solve mobile 3D printing tasks in multiple experiments.

Real-time Batched Distance Computation for Time-Optimal Safe Path Tracking

Sep 21, 2023

Abstract:In human-robot collaboration, there is a trade-off between the speed of collaborative robots and the safety of human workers. In our previous paper, we introduced a time-optimal path tracking algorithm designed to maximize speed while ensuring safety for human workers. This algorithm runs in real time and provides the fastest safe control input for every cycle with respect to ISO standards. However, true optimality has not been achieved due to inaccurate distance computation resulting from conservative model simplification. To attain true optimality, we require a method that can compute distances (1) at many robot configurations along a trajectory, (2) in real time for online robot control, and (3) as precisely as possible for optimal control. In this paper, we propose a batched, fast, and precise distance checking method based on precomputed link-local SDFs. Our method can check distances for 500 waypoints along a trajectory in less than 1 millisecond using a GPU at runtime, making it suited for time-critical robotic control. Additionally, a neural approximation is proposed to accelerate preprocessing by a factor of 2. Finally, we experimentally demonstrate that our method can navigate a 6-DoF robot earlier than a geometric-primitives-based distance checker in a dynamic and collaborative environment.
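To make the idea of batched queries against a precomputed link-local SDF more concrete, here is a minimal CPU sketch in NumPy. It is not the paper's GPU implementation: the sphere-shaped link, the nearest-neighbour grid lookup, and the function names `build_sphere_sdf` and `batched_distance` are all assumptions chosen for illustration.

```python
import numpy as np

def build_sphere_sdf(radius, half_extent, n):
    """Sample the signed distance to a sphere on an n^3 grid in the link frame.

    The sphere stands in for a real link geometry (an assumption).
    """
    axis = np.linspace(-half_extent, half_extent, n)
    gx, gy, gz = np.meshgrid(axis, axis, axis, indexing="ij")
    return np.sqrt(gx**2 + gy**2 + gz**2) - radius, axis

def batched_distance(sdf, axis, rotations, translations, point):
    """Distance from one world point to the link at each of B link poses.

    rotations: (B, 3, 3) and translations: (B, 3) are link-to-world poses,
    e.g. one per waypoint along a trajectory.
    """
    # World -> link frame for all poses at once: R^T (p - t).
    local = np.einsum("bji,bj->bi", rotations, point - translations)
    # Nearest-neighbour lookup; queries outside the grid are clamped to its edge.
    step = axis[1] - axis[0]
    idx = np.rint((local - axis[0]) / step).astype(int)
    idx = np.clip(idx, 0, len(axis) - 1)
    return sdf[idx[:, 0], idx[:, 1], idx[:, 2]]
```

The grid is built once per link offline, and each runtime query reduces to a frame transform plus an array lookup, which is what makes evaluating many waypoints in a single batch cheap. A real system would likely interpolate between grid cells rather than round to the nearest one.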

Reinforcement Learning with Parameterized Manipulation Primitives for Robotic Assembly

Jun 11, 2023

Abstract:A common theme in robot assembly is the adoption of Manipulation Primitives as the atomic motions from which an assembly strategy is composed, typically in the form of a state machine or a graph. While this approach has shown great performance and robustness in increasingly complex assembly tasks, the state machine has to be engineered manually in most cases. Such hard-coded strategies fail to handle unexpected situations that were not considered in the design. To address this issue, we propose to find dynamic sequences of manipulation primitives through Reinforcement Learning. Leveraging parameterized manipulation primitives, the proposed method greatly improves both assembly performance and sample efficiency of Reinforcement Learning compared to previous work using non-parameterized manipulation primitives. In practice, our method achieves good zero-shot sim-to-real performance on high-precision peg insertion tasks with different geometries, clearances, and materials.
