Qilong Han

Towards Adaptive Humanoid Control via Multi-Behavior Distillation and Reinforced Fine-Tuning

Nov 11, 2025

Abstract:Humanoid robots show promise for learning a diverse set of human-like locomotion behaviors, including standing up, walking, running, and jumping. However, existing methods predominantly require training independent policies for each skill, yielding behavior-specific controllers that exhibit limited generalization and brittle performance when deployed on irregular terrains and in diverse situations. To address this challenge, we propose Adaptive Humanoid Control (AHC), which adopts a two-stage framework to learn an adaptive humanoid locomotion controller across different skills and terrains. Specifically, we first train several primary locomotion policies and perform a multi-behavior distillation process to obtain a basic multi-behavior controller, facilitating adaptive behavior switching based on the environment. Then, we perform reinforced fine-tuning by collecting online feedback while performing adaptive behaviors on more diverse terrains, enhancing the controller's terrain adaptability. We conduct experiments both in simulation and in the real world on Unitree G1 robots. The results show that our method exhibits strong adaptability across various situations and terrains. Project website: https://ahc-humanoid.github.io.

From Pairwise to Ranking: Climbing the Ladder to Ideal Collaborative Filtering with Pseudo-Ranking

Dec 24, 2024

Abstract:Intuitively, an ideal collaborative filtering (CF) model should learn from users' full rankings over all items to make optimal top-K recommendations. Due to the absence of such full rankings in practice, most CF models rely on pairwise loss functions to approximate full rankings, resulting in an immense performance gap. In this paper, we provide a novel analysis using the multiple ordinal classification concept to reveal the inevitable gap between a pairwise approximation and the ideal case. However, bridging the gap in practice encounters two formidable challenges: (1) none of the real-world datasets contains full ranking information; (2) there does not exist a loss function that is capable of consuming ranking information. To overcome these challenges, we propose a pseudo-ranking paradigm (PRP) that addresses the lack of ranking information by introducing pseudo-rankings supervised by an original noise injection mechanism. Additionally, we put forward a new ranking loss function designed to handle ranking information effectively. To ensure our method's robustness against potential inaccuracies in pseudo-rankings, we equip the ranking loss function with a gradient-based confidence mechanism to detect and mitigate abnormal gradients. Extensive experiments on four real-world datasets demonstrate that PRP significantly outperforms state-of-the-art methods.
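To make the "pairwise loss" mentioned in this abstract concrete, here is a minimal sketch of one widely used pairwise objective, the Bayesian Personalized Ranking (BPR) loss. It is an illustrative assumption: the abstract does not say which pairwise losses the paper analyzes, and the function name `bpr_loss` is hypothetical. It is not the ranking loss the paper proposes.

```python
import math


def bpr_loss(pos_score: float, neg_score: float) -> float:
    """Pairwise loss for one (positive, negative) item pair.

    Computes -log(sigmoid(pos_score - neg_score)). The loss only sees the
    relative order of two items, not a full ranking over all items.
    """
    diff = pos_score - neg_score
    # -log(sigmoid(diff)) == log(1 + exp(-diff)), written in a
    # numerically stable form to avoid overflow for large |diff|.
    return max(-diff, 0.0) + math.log1p(math.exp(-abs(diff)))


# The loss shrinks as the positive item is scored further above the negative.
well_ordered = bpr_loss(2.0, 0.0)
mis_ordered = bpr_loss(0.0, 2.0)
```

Because each term compares only two items, summing such terms constrains pair orderings rather than a complete ranking, which is the kind of approximation the abstract contrasts with the ideal full-ranking case.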

Unlocking the Hidden Treasures: Enhancing Recommendations with Unlabeled Data

Dec 24, 2024

Abstract:Collaborative filtering (CF) stands as a cornerstone in recommender systems, yet effectively leveraging the massive unlabeled data presents a significant challenge. Current research focuses on addressing the challenge of unlabeled data by extracting a subset that closely approximates negative samples. Regrettably, the remaining data are overlooked, failing to fully integrate this valuable information into the construction of user preferences. To address this gap, we introduce a novel positive-neutral-negative (PNN) learning paradigm. PNN introduces a neutral class, encompassing intricate items that are challenging to categorize directly as positive or negative samples. By training a model based on this triple-wise partial ranking, PNN offers a promising solution to learning complex user preferences. Through theoretical analysis, we connect PNN to one-way partial AUC (OPAUC) to validate its efficacy. Implementing the PNN paradigm is, however, technically challenging because: (1) it is difficult to classify unlabeled data into neutral or negative in the absence of supervised signals; (2) there does not exist any loss function that can handle set-level triple-wise ranking relationships. To address these challenges, we propose a semi-supervised learning method coupled with a user-aware attention model for knowledge acquisition and classification refinement. Additionally, a novel loss function with a two-step centroid ranking approach enables handling set-level rankings. Extensive experiments on four real-world datasets demonstrate that, when combined with PNN, a wide range of representative CF models can consistently and significantly boost their performance. Even with a simple matrix factorization, PNN can achieve comparable performance to sophisticated graph neural networks.

SSDRec: Self-Augmented Sequence Denoising for Sequential Recommendation

Mar 07, 2024

Abstract:Traditional sequential recommendation methods assume that users' sequence data is clean enough to learn accurate sequence representations to reflect user preferences. In practice, users' sequences inevitably contain noise (e.g., accidental interactions), leading to incorrect reflections of user preferences. Consequently, some pioneer studies have explored modeling sequentiality and correlations in sequences to implicitly or explicitly reduce noise's influence. However, relying on only available intra-sequence information (i.e., sequentiality and correlations in a sequence) is insufficient and may result in over-denoising and under-denoising problems (OUPs), especially for short sequences. To improve reliability, we propose to augment sequences by inserting items before denoising. However, due to the data sparsity issue and computational costs, it is challenging to select proper items from the entire item universe to insert into proper positions in a target sequence. Motivated by the above observation, we propose a novel framework, Self-augmented Sequence Denoising for sequential Recommendation (SSDRec), with a three-stage learning paradigm to solve the above challenges. In the first stage, we empower SSDRec by a global relation encoder to learn multi-faceted inter-sequence relations in a data-driven manner. These relations serve as prior knowledge to guide subsequent stages. In the second stage, we devise a self-augmentation module to augment sequences to alleviate OUPs. Finally, we employ a hierarchical denoising module in the third stage to reduce the risk of false augmentations and pinpoint all noise in raw sequences. Extensive experiments on five real-world datasets demonstrate the superiority of SSDRec over state-of-the-art denoising methods and its flexible applications to mainstream sequential recommendation models. The source code is available at https://github.com/zc-97/SSDRec.

Adaptive Hardness Negative Sampling for Collaborative Filtering

Jan 10, 2024

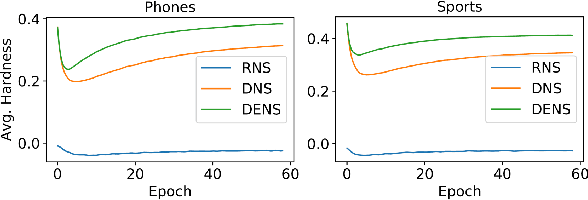

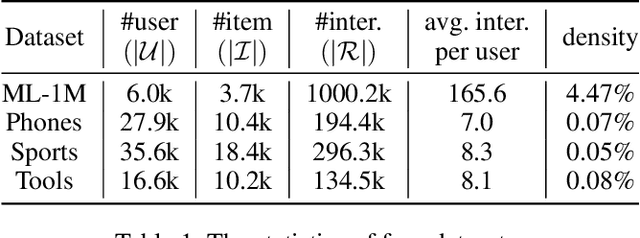

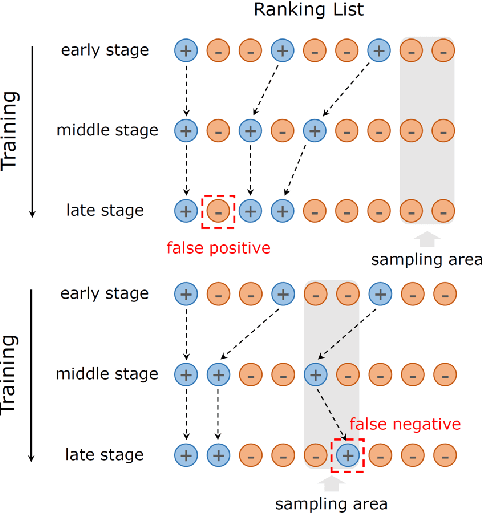

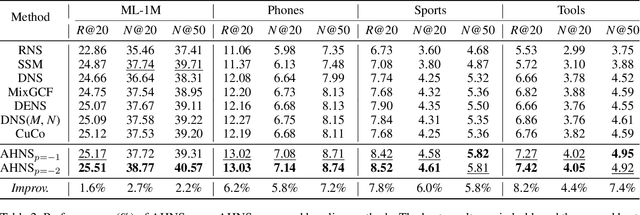

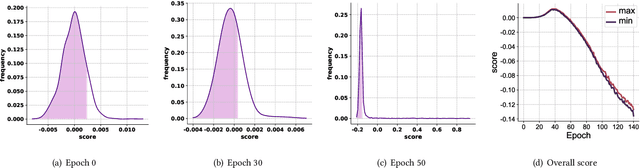

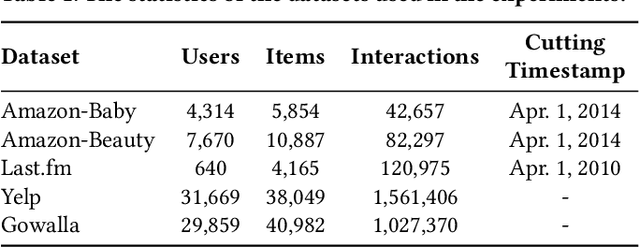

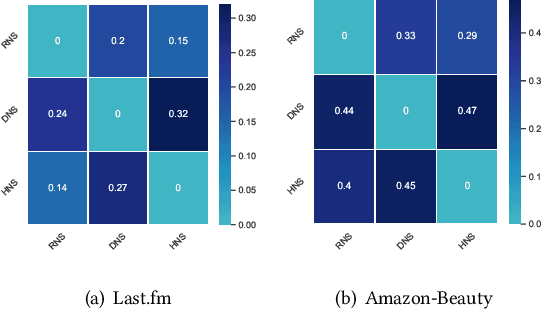

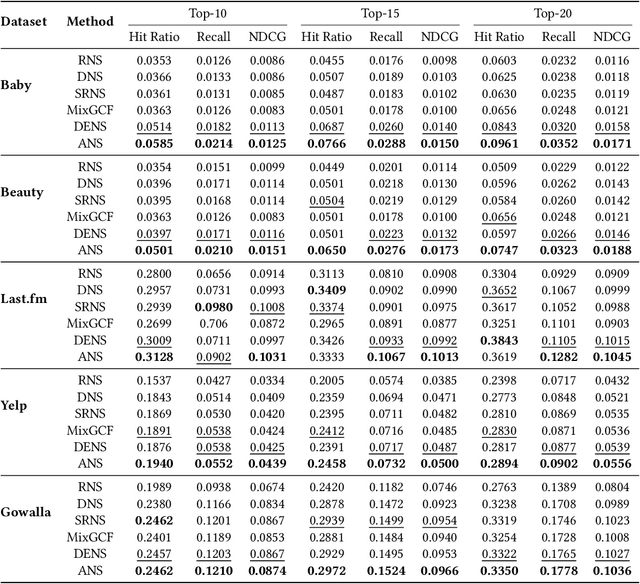

Abstract:Negative sampling is essential for implicit collaborative filtering to provide proper negative training signals so as to achieve desirable performance. We experimentally unveil a common limitation of all existing negative sampling methods that they can only select negative samples of a fixed hardness level, leading to the false positive problem (FPP) and false negative problem (FNP). We then propose a new paradigm called adaptive hardness negative sampling (AHNS) and discuss its three key criteria. By adaptively selecting negative samples with appropriate hardnesses during the training process, AHNS can well mitigate the impacts of FPP and FNP. Next, we present a concrete instantiation of AHNS called AHNS_{p<0}, and theoretically demonstrate that AHNS_{p<0} can fit the three criteria of AHNS well and achieve a larger lower bound of normalized discounted cumulative gain. Besides, we note that existing negative sampling methods can be regarded as more relaxed cases of AHNS. Finally, we conduct comprehensive experiments, and the results show that AHNS_{p<0} can consistently and substantially outperform several state-of-the-art competitors on multiple datasets.

Augmented Negative Sampling for Collaborative Filtering

Aug 11, 2023

Abstract:Negative sampling is essential for implicit-feedback-based collaborative filtering, which is used to constitute negative signals from massive unlabeled data to guide supervised learning. The state-of-the-art idea is to utilize hard negative samples that carry more useful information to form a better decision boundary. To balance efficiency and effectiveness, the vast majority of existing methods follow the two-pass approach, in which the first pass samples a fixed number of unobserved items by a simple static distribution and then the second pass selects the final negative items using a more sophisticated negative sampling strategy. However, selecting negative samples from the original items is inherently restricted, and thus may not be able to contrast positive samples well. In this paper, we confirm this observation via experiments and introduce two limitations of existing solutions: ambiguous trap and information discrimination. Our response to such limitations is to introduce augmented negative samples. This direction renders a substantial technical challenge because constructing unconstrained negative samples may introduce excessive noise that distorts the decision boundary. To this end, we introduce a novel generic augmented negative sampling paradigm and provide a concrete instantiation. First, we disentangle hard and easy factors of negative items. Next, we generate new candidate negative samples by augmenting only the easy factors in a regulated manner: the direction and magnitude of the augmentation are carefully calibrated. Finally, we design an advanced negative sampling strategy to identify the final augmented negative samples, which considers not only the score function used in existing methods but also a new metric called augmentation gain. Extensive experiments on real-world datasets demonstrate that our method significantly outperforms state-of-the-art baselines.
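The two-pass approach described in this abstract can be sketched as follows. This is a minimal illustration under stated assumptions, not the paper's method: the first pass uses a uniform distribution, the second pass simply keeps the highest-scoring candidate under a dot-product model, and the names `two_pass_negative`, `user_vec`, `item_vecs`, `observed`, and `pool_size` are all hypothetical.

```python
import random


def two_pass_negative(user_vec, item_vecs, observed, pool_size=5, rng=None):
    """Pick one negative item id for a user with a two-pass scheme."""
    rng = rng or random.Random()

    # Pass 1: draw a fixed-size candidate pool from the unobserved items
    # using a simple static (here: uniform) distribution.
    unobserved = [i for i in range(len(item_vecs)) if i not in observed]
    pool = rng.sample(unobserved, min(pool_size, len(unobserved)))

    # Pass 2: apply a more selective rule to the pool. Here the rule is
    # "take the candidate the current model scores highest", i.e. the
    # hardest negative in the pool under a dot-product score.
    def score(item_id):
        return sum(u * v for u, v in zip(user_vec, item_vecs[item_id]))

    return max(pool, key=score)


# Toy usage: item 2 is an observed (positive) interaction and is never returned.
items = [[1.0, 0.0], [0.0, 1.0], [2.0, 0.0], [0.5, 0.5]]
negative = two_pass_negative([1.0, 0.0], items, observed={2}, pool_size=10)
```

Note that both passes only ever return an item that already exists in the catalogue, which is the restriction the abstract argues against when it proposes augmented negative samples.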

Denoising and Prompt-Tuning for Multi-Behavior Recommendation

Feb 12, 2023

Abstract:In practical recommendation scenarios, users often interact with items under multi-typed behaviors (e.g., click, add-to-cart, and purchase). Traditional collaborative filtering techniques typically assume that users only have a single type of behavior with items, making it insufficient to utilize complex collaborative signals to learn informative representations and infer actual user preferences. Consequently, some pioneer studies explore modeling multi-behavior heterogeneity to learn better representations and boost the performance of recommendations for a target behavior. However, a large number of auxiliary behaviors (i.e., click and add-to-cart) could introduce irrelevant information to recommenders, which could mislead the target behavior (i.e., purchase) recommendation, rendering two critical challenges: (i) denoising auxiliary behaviors and (ii) bridging the semantic gap between auxiliary and target behaviors. Motivated by the above observation, we propose a novel framework, Denoising and Prompt-Tuning (DPT), with a three-stage learning paradigm to solve the aforementioned challenges. In particular, DPT is equipped with a pattern-enhanced graph encoder in the first stage to learn complex patterns as prior knowledge in a data-driven manner to guide learning informative representation and pinpointing reliable noise for subsequent stages. Accordingly, we adopt different lightweight tuning approaches with effectiveness and efficiency in the following stages to further attenuate the influence of noise and alleviate the semantic gap among multi-typed behaviors. Extensive experiments on two real-world datasets demonstrate the superiority of DPT over a wide range of state-of-the-art methods. The implementation code is available online at https://github.com/zc-97/DPT.
