Philipp Reis

ZeloS -- A Research Platform for Early-Stage Validation of Research Findings Related to Automated Driving

May 05, 2025Abstract:This paper presents ZeloS, a research platform designed and built for practical validation of automated driving methods in an early stage of research. We overview ZeloS' hardware setup and automation architecture and focus on motion planning and control. ZeloS weighs 69 kg, measures a length of 117 cm, and is equipped with all-wheel steering, all-wheel drive, and various onboard sensors for localization. The hardware setup and the automation architecture of ZeloS are designed and built with a focus on modularity and the goal of being simple yet effective. The modular design allows the modification of individual automation modules without the need for extensive onboarding into the automation architecture. As such, this design supports ZeloS in being a versatile research platform for validating various automated driving methods. The motion planning component and control of ZeloS feature optimization-based methods that allow for explicitly considering constraints. We demonstrate the hardware and automation setup by presenting experimental data.

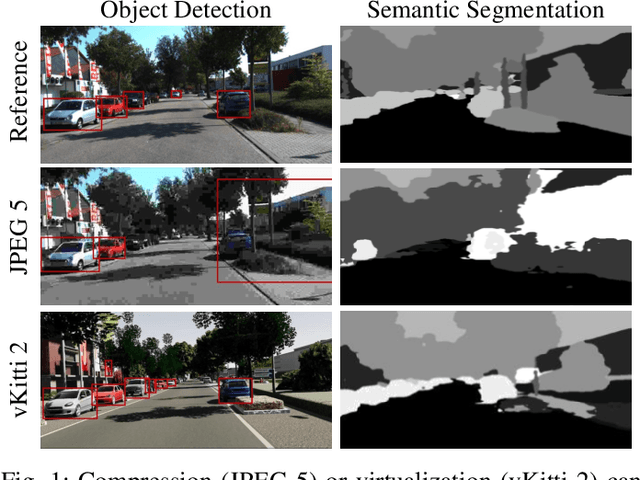

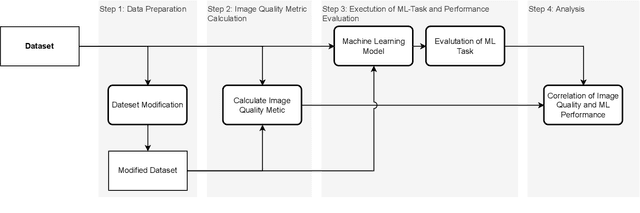

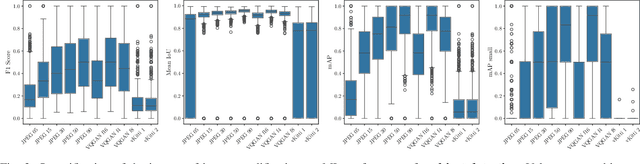

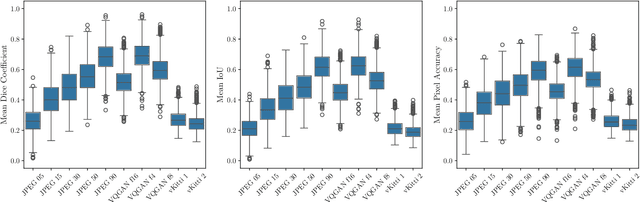

Data Quality Matters: Quantifying Image Quality Impact on Machine Learning Performance

Mar 28, 2025

Abstract:Precise perception of the environment is essential in highly automated driving systems, which rely on machine learning tasks such as object detection and segmentation. Compression of sensor data is commonly used for data handling, while virtualization is used for hardware-in-the-loop validation. Both methods can alter sensor data and degrade model performance. This necessitates a systematic approach to quantifying image validity. This paper presents a four-step framework to evaluate the impact of image modifications on machine learning tasks. First, a dataset with modified images is prepared to ensure one-to-one matching image pairs, enabling measurement of deviations resulting from compression and virtualization. Second, image deviations are quantified by comparing the effects of compression and virtualization against original camera-based sensor data. Third, the performance of state-of-the-art object detection models is analyzed to determine how altered input data affects perception tasks, including bounding box accuracy and reliability. Finally, a correlation analysis is performed to identify relationships between image quality and model performance. As a result, the LPIPS metric achieves the highest correlation between image deviation and machine learning performance across all evaluated machine learning tasks.

A Framework for a Capability-driven Evaluation of Scenario Understanding for Multimodal Large Language Models in Autonomous Driving

Mar 14, 2025Abstract:Multimodal large language models (MLLMs) hold the potential to enhance autonomous driving by combining domain-independent world knowledge with context-specific language guidance. Their integration into autonomous driving systems shows promising results in isolated proof-of-concept applications, while their performance is evaluated on selective singular aspects of perception, reasoning, or planning. To leverage their full potential a systematic framework for evaluating MLLMs in the context of autonomous driving is required. This paper proposes a holistic framework for a capability-driven evaluation of MLLMs in autonomous driving. The framework structures scenario understanding along the four core capability dimensions semantic, spatial, temporal, and physical. They are derived from the general requirements of autonomous driving systems, human driver cognition, and language-based reasoning. It further organises the domain into context layers, processing modalities, and downstream tasks such as language-based interaction and decision-making. To illustrate the framework's applicability, two exemplary traffic scenarios are analysed, grounding the proposed dimensions in realistic driving situations. The framework provides a foundation for the structured evaluation of MLLMs' potential for scenario understanding in autonomous driving.

Adversarial and Reactive Traffic Agents for Realistic Driving Simulation

Sep 21, 2024

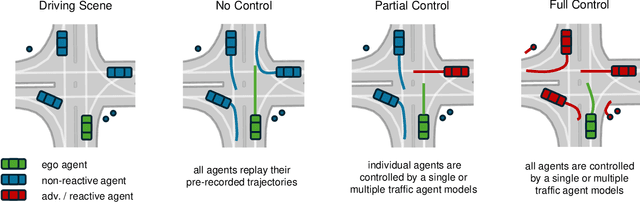

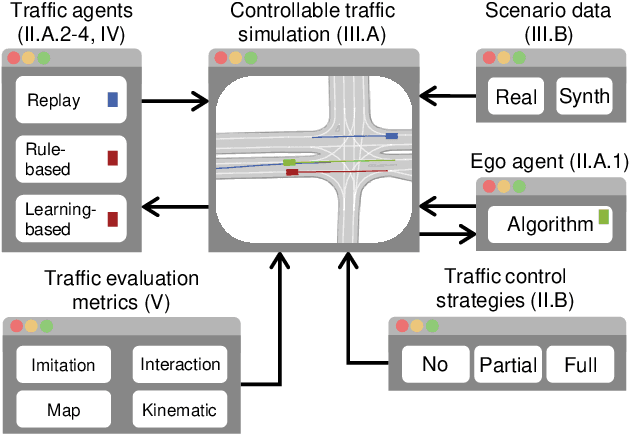

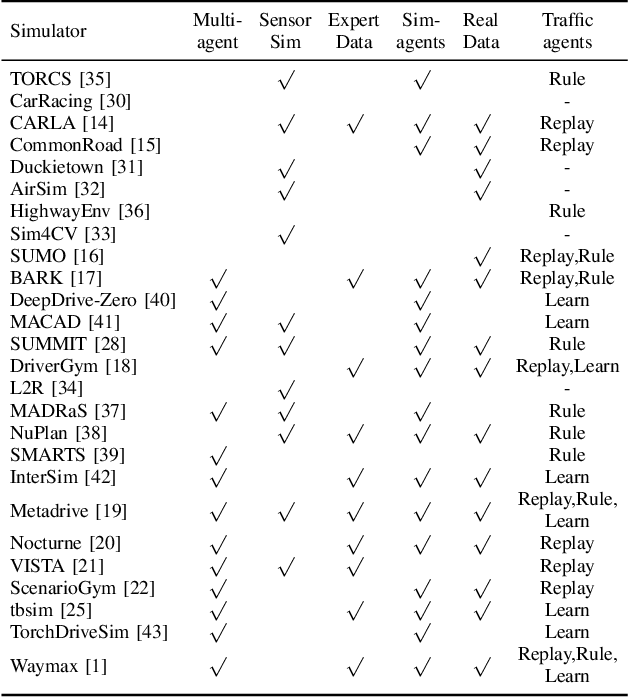

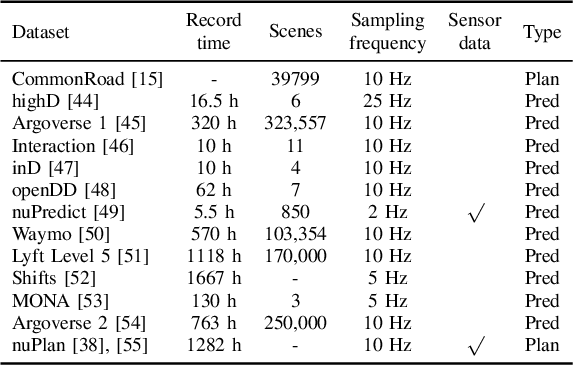

Abstract:Despite advancements in perception and planning for autonomous vehicles (AVs), validating their performance remains a significant challenge. The deployment of planning algorithms in real-world environments is often ineffective due to discrepancies between simulations and real traffic conditions. Evaluating AVs planning algorithms in simulation typically involves replaying driving logs from recorded real-world traffic. However, agents replayed from offline data are not reactive, lack the ability to respond to arbitrary AV behavior, and cannot behave in an adversarial manner to test certain properties of the driving policy. Therefore, simulation with realistic and potentially adversarial agents represents a critical task for AV planning software validation. In this work, we aim to review current research efforts in the field of adversarial and reactive traffic agents, with a particular focus on the application of classical and adversarial learning-based techniques. The objective of this work is to categorize existing approaches based on the proposed scenario controllability, defined by the number of reactive or adversarial agents. Moreover, we examine existing traffic simulations with respect to their employed default traffic agents and potential extensions, collate datasets that provide initial driving data, and collect relevant evaluation metrics.

Behavior Forests: Real-Time Discovery of Dynamic Behavior for Data Selection

Jul 02, 2024

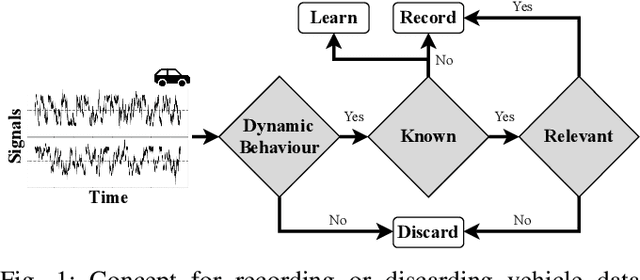

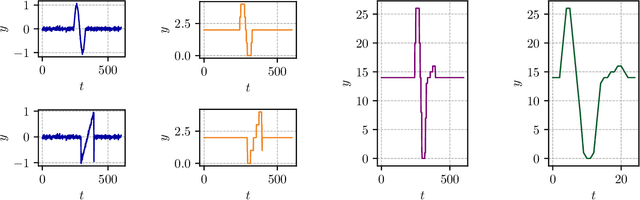

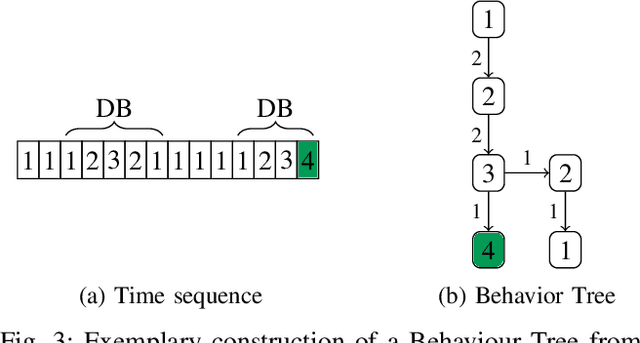

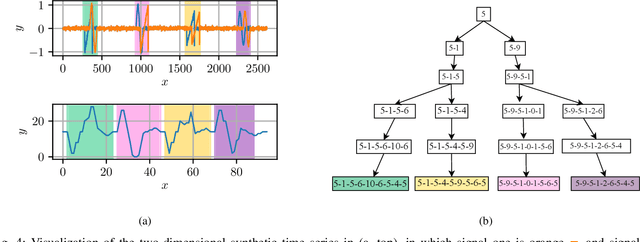

Abstract:Automated Driving Systems (ADS) development relies on utilizing real-world vehicle data. The volume of data generated by modern vehicles presents transmission, storage, and computational challenges. Focusing on Dynamic Behavior (DB) offers a promising approach to distinguish relevant from irrelevant information for ADS functionalities, thereby reducing data. Time series pattern recognition is beneficial for this task as it can analyze the temporal context of vehicle driving behavior. However, existing state-of-the-art methods often lack the adaptability to identify variable-length patterns or provide analytical descriptions of discovered patterns. This contribution proposes a Behavior Forest framework for real-time data selection by constructing a Behavior Graph during vehicle operation, facilitating analytical descriptions without pre-training. The method demonstrates its performance using a synthetically generated and electrocardiogram data set. An automotive time series data set is used to evaluate the data reduction capabilities, in which this method discarded 96.01% of the incoming data stream, while relevant DB remain included.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge