Pete Burnap

Topic Modelling: Going Beyond Token Outputs

Jan 16, 2024

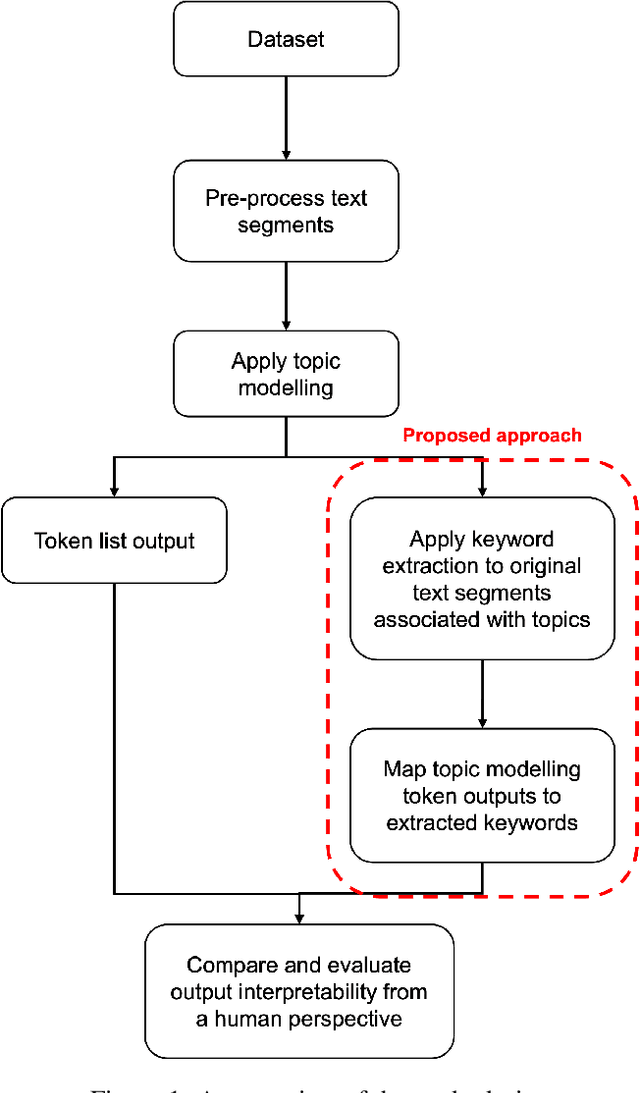

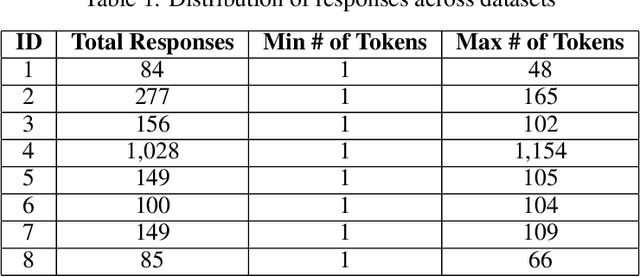

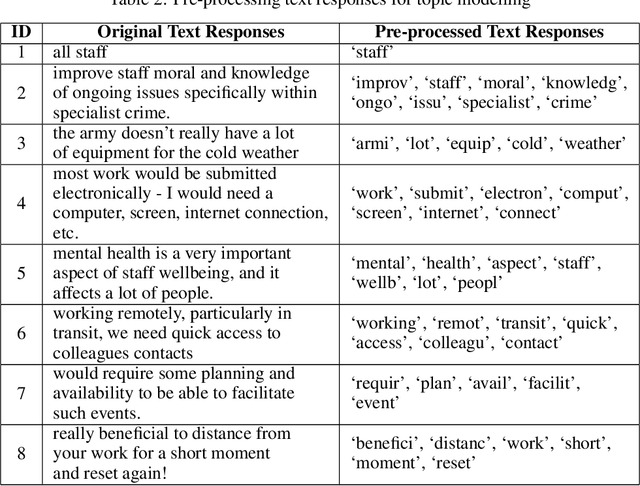

Abstract: Topic modelling is a text mining technique for identifying salient themes from a number of documents. The output is commonly a set of topics consisting of isolated tokens that often co-occur in such documents. Manual effort is often associated with interpreting a topic's description from such tokens. However, from a human's perspective, such outputs may not provide enough information to infer the meaning of the topics; thus, their interpretability is often inaccurately understood. Although several studies have attempted to automatically extend topic descriptions as a means of enhancing the interpretation of topic models, they rely on external language sources that may become unavailable, must be kept up-to-date to generate relevant results, and present privacy issues when training on or processing data. This paper presents a novel approach towards extending the output of traditional topic modelling methods beyond a list of isolated tokens. This approach removes the dependence on external sources by using the textual data itself: it extracts high-scoring keywords and maps them to the topic model's token outputs. To measure the interpretability of the proposed outputs against those of the traditional topic modelling approach, independent annotators manually scored each output based on their quality and usefulness, as well as the efficiency of the annotation task. The proposed approach demonstrated higher quality and usefulness, as well as higher efficiency in the annotation task, in comparison to the outputs of a traditional topic modelling method, demonstrating an increase in their interpretability.
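The idea of mapping keywords drawn from the corpus itself onto a topic's isolated tokens can be illustrated with a minimal sketch. This is not the paper's method: the abstract does not specify how keywords are scored, so the sketch assumes raw bigram frequency as the score, and the function names `candidate_phrases` and `extend_topic` are hypothetical.

```python
import re
from collections import Counter


def candidate_phrases(text, n=2):
    """Return all lowercased word n-grams found in the text."""
    words = re.findall(r"[a-z]+", text.lower())
    return [" ".join(words[i:i + n]) for i in range(len(words) - n + 1)]


def extend_topic(topic_tokens, documents, top_k=3):
    """Map each topic token to the highest-scoring phrases that contain it.

    Assumption: a phrase's score is its frequency across the documents.
    """
    counts = Counter()
    for doc in documents:
        counts.update(candidate_phrases(doc))

    extended = {}
    for token in topic_tokens:
        # Keep only phrases in which the token appears as a whole word.
        matches = [(p, c) for p, c in counts.items() if token in p.split()]
        # Highest count first; ties broken alphabetically for determinism.
        matches.sort(key=lambda pc: (-pc[1], pc[0]))
        extended[token] = [p for p, _ in matches[:top_k]]
    return extended


# Illustrative documents, not data from the paper.
docs = [
    "Topic modelling finds themes in text data.",
    "Text data from social media needs topic modelling.",
]
result = extend_topic(["topic", "data"], docs, top_k=1)
print(result)
```

With these two toy documents, each token is extended into the phrase it most often appears in, which gives an annotator more context than the token alone.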

Enhancing Enterprise Network Security: Comparing Machine-Level and Process-Level Analysis for Dynamic Malware Detection

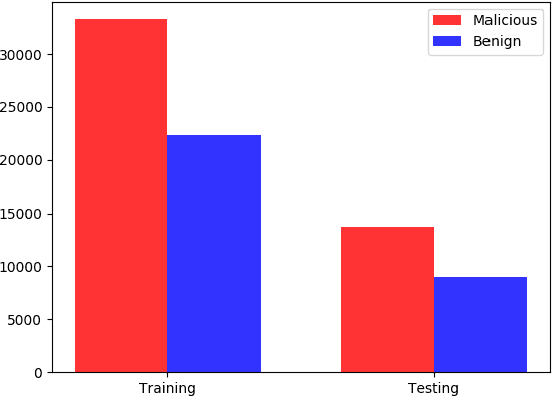

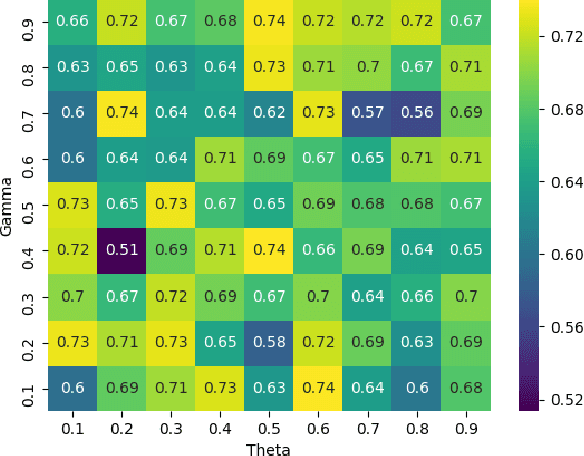

Oct 27, 2023

Abstract: Analysing malware is important to understand how malicious software works and to develop appropriate detection and prevention methods. Dynamic analysis can overcome evasion techniques commonly used to bypass static analysis and provide insights into malware runtime activities. Much research on dynamic analysis has focused on investigating machine-level information (e.g., CPU, memory, network usage) to identify whether a machine is running malicious activities. A malicious machine does not necessarily mean all running processes on the machine are also malicious. If we can isolate the malicious process instead of isolating the whole machine, we could kill the malicious process, and the machine can keep doing its job. Another challenge dynamic malware detection research faces is that the samples are executed in one machine without any background applications running. This is unrealistic, as a computer typically runs many benign (background) applications when a malware incident happens. Our experiment with machine-level data shows that the existence of background applications decreases previous state-of-the-art accuracy by about 20.12% on average. We also propose a process-level Recurrent Neural Network (RNN)-based detection model. Our proposed model performs better than the machine-level detection model, with a 0.049 increase in detection rate and a false-positive rate below 0.1.
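For readers unfamiliar with the two metrics reported above, the following sketch shows how a detection rate (true-positive rate) and a false-positive rate are conventionally computed from confusion-matrix counts. The counts are illustrative only and are not taken from the paper; the function name `detection_rates` is hypothetical.

```python
def detection_rates(tp, fn, fp, tn):
    """Return (detection_rate, false_positive_rate) from confusion counts.

    detection_rate      = TP / (TP + FN)  (share of malicious samples caught)
    false_positive_rate = FP / (FP + TN)  (share of benign samples flagged)
    """
    if tp + fn == 0 or fp + tn == 0:
        raise ValueError("need at least one malicious and one benign sample")
    return tp / (tp + fn), fp / (fp + tn)


# Illustrative counts: 100 malicious and 100 benign processes.
dr, fpr = detection_rates(tp=90, fn=10, fp=5, tn=95)
print(f"detection rate = {dr:.3f}, false-positive rate = {fpr:.3f}")
```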

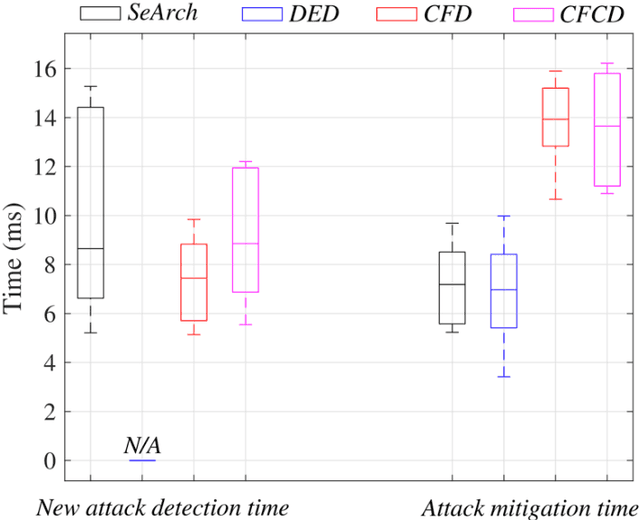

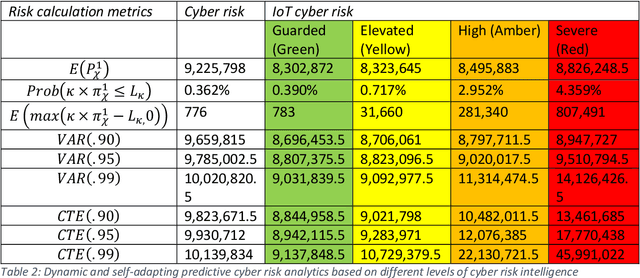

Design of a dynamic and self-adapting system, supported with artificial intelligence, machine learning and real-time intelligence for predictive cyber risk analytics

May 19, 2020

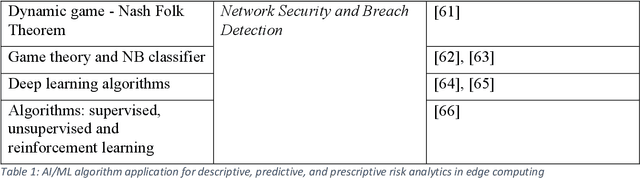

Abstract: This paper surveys deep learning algorithms, IoT cyber security and risk models, and established mathematical formulas to identify the best approach for developing a dynamic and self-adapting system for predictive cyber risk analytics supported with Artificial Intelligence and Machine Learning and real-time intelligence in edge computing. The paper presents a new mathematical approach for integrating concepts for cognition engine design, edge computing and Artificial Intelligence and Machine Learning to automate anomaly detection. This engine instigates a step change by applying Artificial Intelligence and Machine Learning embedded at the edge of IoT networks, to deliver safe and functional real-time intelligence for predictive cyber risk analytics. This will enhance capacities for risk analytics and assist in the creation of a comprehensive and systematic understanding of the opportunities and threats that arise when edge computing nodes are deployed, and when Artificial Intelligence and Machine Learning technologies are migrated to the periphery of the internet and into local IoT networks.

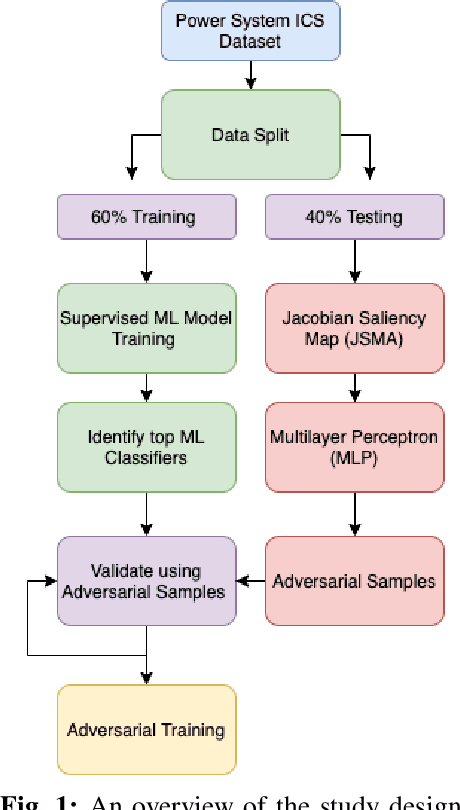

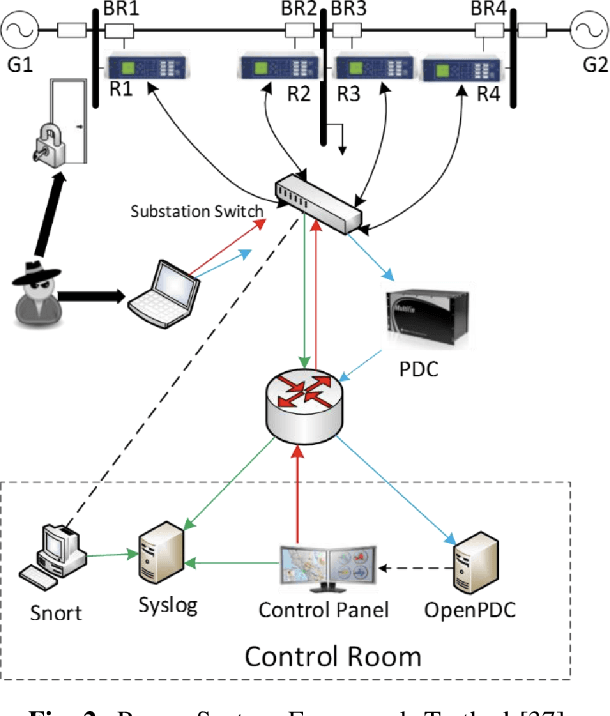

Adversarial Attacks on Machine Learning Cybersecurity Defences in Industrial Control Systems

Apr 10, 2020

Abstract: The proliferation and application of machine learning based Intrusion Detection Systems (IDS) have allowed for more flexibility and efficiency in the automated detection of cyber attacks in Industrial Control Systems (ICS). However, the introduction of such IDSs has also created an additional attack vector; the learning models may also be subject to cyber attacks, otherwise referred to as Adversarial Machine Learning (AML). Such attacks may have severe consequences in ICS, as adversaries could potentially bypass the IDS. This could lead to delayed attack detection, which may result in infrastructure damage, financial loss, and even loss of life. This paper explores how adversarial learning can be used to target supervised models by generating adversarial samples using the Jacobian-based Saliency Map attack and exploring classification behaviours. The analysis also includes the exploration of how such samples can support the robustness of supervised models using adversarial training. An authentic power system dataset was used to support the experiments presented herein. Overall, the classification performance of two widely used classifiers, Random Forest and J48, decreased by 16 and 20 percentage points, respectively, when adversarial samples were present. Their performances improved following adversarial training, demonstrating their robustness towards such attacks.

The Enemy Among Us: Detecting Hate Speech with Threats Based 'Othering' Language Embeddings

Mar 08, 2018

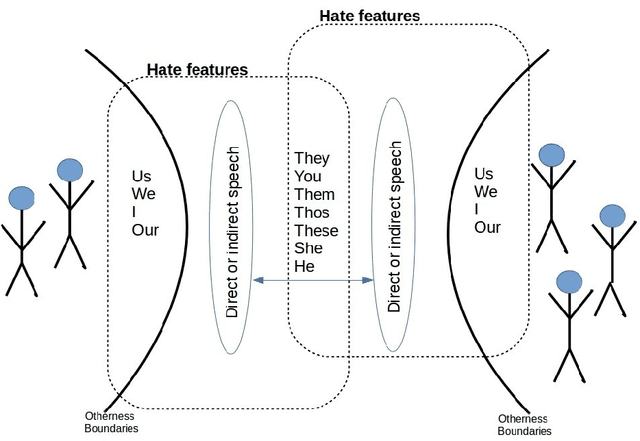

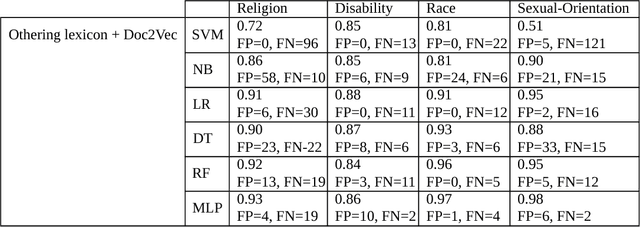

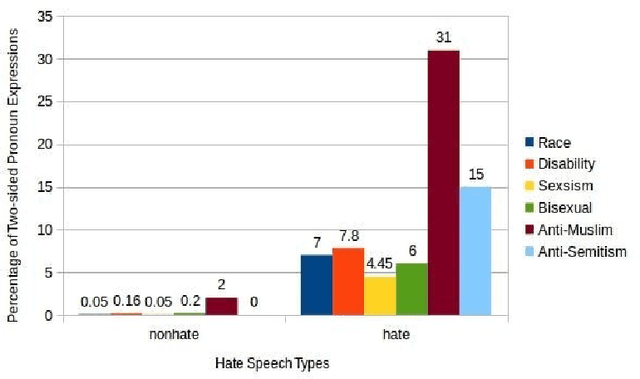

Abstract: Offensive or antagonistic language targeted at individuals and social groups based on their personal characteristics (also known as cyber hate speech or cyberhate) has been frequently posted and widely circulated via the World Wide Web. This can be considered as a key risk factor for individual and societal tension linked to regional instability. Automated Web-based cyberhate detection is important for observing and understanding community and regional societal tension - especially in online social networks where posts can be rapidly and widely viewed and disseminated. While previous work has involved using lexicons, bags-of-words or probabilistic language parsing approaches, they often suffer from a similar issue which is that cyberhate can be subtle and indirect - thus depending on the occurrence of individual words or phrases can lead to a significant number of false negatives, providing inaccurate representation of the trends in cyberhate. This problem motivated us to challenge thinking around the representation of subtle language use, such as references to perceived threats from "the other" including immigration or job prosperity in a hateful context. We propose a novel framework that utilises language use around the concept of "othering" and intergroup threat theory to identify these subtleties and we implement a novel classification method using embedding learning to compute semantic distances between parts of speech considered to be part of an "othering" narrative. To validate our approach we conduct several experiments on different types of cyberhate, namely religion, disability, race and sexual orientation, with F-measure scores for classifying hateful instances obtained through applying our model of 0.93, 0.86, 0.97 and 0.98 respectively, providing a significant improvement in classifier accuracy over the state-of-the-art.
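A semantic distance between two embedded terms can be illustrated with a short sketch. The abstract does not say which distance measure the authors use, so cosine distance is assumed here as a common choice; the vectors are made up for illustration and do not come from a trained embedding model.

```python
import math


def cosine_distance(u, v):
    """Return 1 - cosine similarity for two equal-length, non-zero vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return 1.0 - dot / (norm_u * norm_v)


# Hypothetical 3-dimensional embeddings for two words.
them = [0.9, 0.1, 0.3]
threat = [0.8, 0.2, 0.4]
print(f"distance = {cosine_distance(them, threat):.4f}")
```

A distance near 0 means the two vectors point in almost the same direction, and a distance of 1 means they are orthogonal.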
