Pedro J. Freire

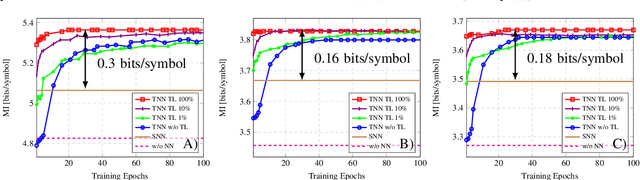

Multi-Task Learning to Enhance Generalizability of Neural Network Equalizers in Coherent Optical Systems

Jul 04, 2023

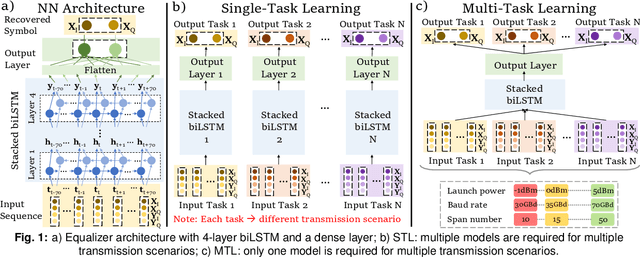

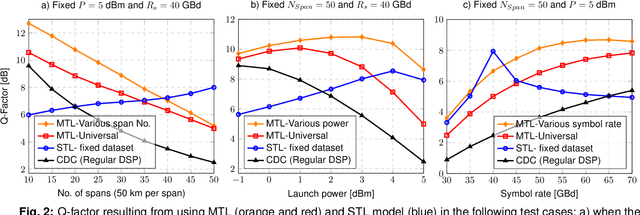

Abstract: For the first time, multi-task learning is proposed to improve the flexibility of NN-based equalizers in coherent systems. A "single" NN-based equalizer improves the Q-factor by up to 4 dB compared to chromatic dispersion compensation (CDC), without re-training, even with variations in launch power, symbol rate, or transmission distance.
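The Q-factor gains quoted in these abstracts are in dB. As a minimal sketch, assuming the common convention in coherent optical work of deriving the Q-factor from the bit error rate as Q = 20·log10(√2·erfcinv(2·BER)) (the abstract itself does not state the definition), the conversion can be written with the standard library only. The function name `q_factor_db` is hypothetical.

```python
import math
from statistics import NormalDist


def q_factor_db(ber: float) -> float:
    """Convert a bit error rate to a Q-factor in dB.

    Assumed convention: Q = 20*log10(sqrt(2) * erfcinv(2*BER)).
    Since sqrt(2)*erfcinv(2*p) equals -Phi^{-1}(p), where Phi is the
    standard normal CDF, the inverse CDF from the stdlib is enough.
    """
    if not 0.0 < ber < 0.5:
        raise ValueError("BER must lie strictly between 0 and 0.5")
    q_linear = -NormalDist().inv_cdf(ber)
    return 20.0 * math.log10(q_linear)


if __name__ == "__main__":
    for ber in (1e-2, 1e-3, 1e-4):
        print(f"BER = {ber:.0e} -> Q = {q_factor_db(ber):.2f} dB")
```

Under this convention a lower BER always maps to a higher Q-factor, so a gain of a few dB corresponds to a substantial BER reduction.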

Hardware Realization of Nonlinear Activation Functions for NN-based Optical Equalizers

May 16, 2023

Abstract: To reduce the complexity of the hardware implementation of neural network-based optical channel equalizers, we demonstrate that the performance of the biLSTM equalizer with approximated activation functions is close to that of the original model.

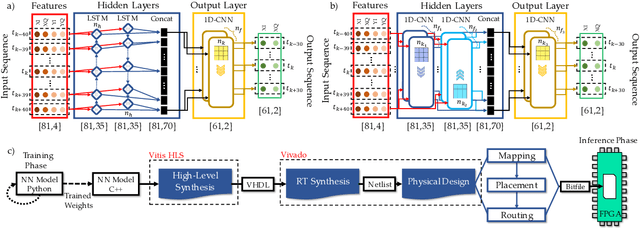

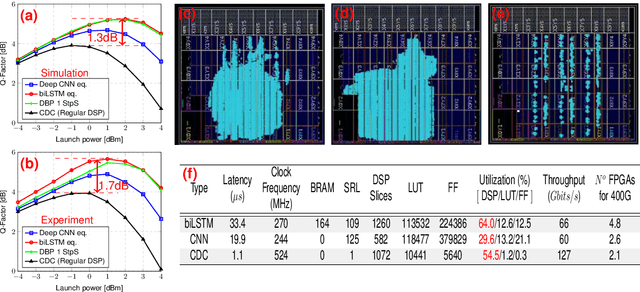

Implementing Neural Network-Based Equalizers in a Coherent Optical Transmission System Using Field-Programmable Gate Arrays

Dec 09, 2022

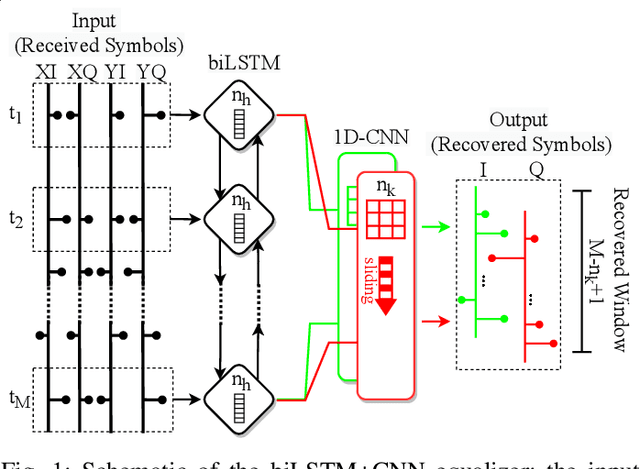

Abstract: In this work, we demonstrate the offline FPGA realization of both recurrent and feedforward neural network (NN)-based equalizers for nonlinearity compensation in coherent optical transmission systems. First, we present a realization pipeline showing the conversion of the models from Python libraries to the FPGA chip synthesis and implementation. Then, we review the main alternatives for the hardware implementation of nonlinear activation functions. The main results are divided into three parts: a performance comparison, an analysis of how activation functions are implemented, and a report on the complexity of the hardware. The performance in Q-factor is presented for a bidirectional long short-term memory coupled with convolutional NN (biLSTM+CNN) equalizer, a CNN equalizer, and standard 1 step-per-span (1-StpS) digital back-propagation (DBP), for both simulated and experimental propagation of a single-channel dual-polarization (SC-DP) 16QAM signal at 34 GBd along 17×70 km of LEAF. The biLSTM+CNN equalizer provides a similar result to DBP and a 1.7 dB Q-factor gain compared with the chromatic dispersion compensation baseline in the experimental dataset. After that, we assess the Q-factor and the impact on hardware utilization when approximating the activation functions of the NN using Taylor series, piecewise linear, and look-up table (LUT) approximations. We also show how to mitigate the approximation errors with extra training and provide some insights into possible gradient problems in the LUT approximation. Finally, to evaluate the complexity of hardware implementation to achieve 400G throughput, fixed-point NN-based equalizers with approximated activation functions are developed and implemented in an FPGA.
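The three families of activation approximation named in the abstract can be illustrated on tanh. The following is a rough sketch, not the paper's implementation: the Taylor order, the single-clip piecewise linear form, the table size of 256 entries and the input range of ±4 are all assumptions chosen for illustration, and every function name is hypothetical.

```python
import math


def tanh_taylor(x: float) -> float:
    """Fifth-order Taylor expansion of tanh around 0 (accurate only for small |x|)."""
    return x - x**3 / 3 + 2 * x**5 / 15


def tanh_pwl(x: float) -> float:
    """Crudest piecewise linear form: identity in [-1, 1], saturated outside."""
    return max(-1.0, min(1.0, x))


# Look-up table: tanh sampled uniformly on [-X_MAX, X_MAX] (assumed sizes).
X_MAX = 4.0
N_ENTRIES = 256
STEP = 2 * X_MAX / (N_ENTRIES - 1)
TABLE = [math.tanh(-X_MAX + i * STEP) for i in range(N_ENTRIES)]


def tanh_lut(x: float) -> float:
    """Nearest-entry table look-up; inputs outside the table range saturate."""
    x = max(-X_MAX, min(X_MAX, x))
    idx = min(N_ENTRIES - 1, round((x + X_MAX) / STEP))
    return TABLE[idx]


if __name__ == "__main__":
    for x in (0.1, 0.5, 1.5, 3.0):
        print(x, math.tanh(x), tanh_taylor(x), tanh_pwl(x), tanh_lut(x))
```

Note that a nearest-entry table is piecewise constant, so its derivative is zero almost everywhere; this is one plausible reading of the gradient issue the abstract mentions for the LUT case when training with the approximated function in the loop.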

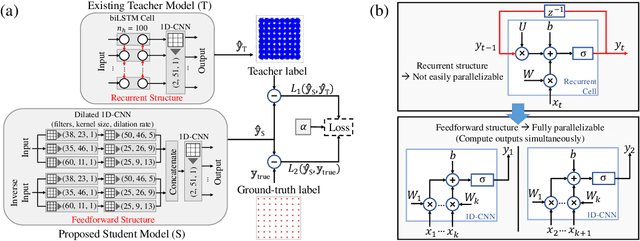

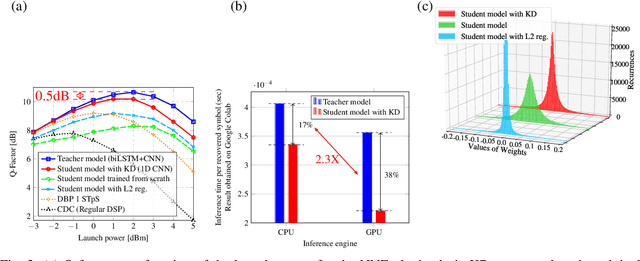

Knowledge Distillation Applied to Optical Channel Equalization: Solving the Parallelization Problem of Recurrent Connection

Dec 08, 2022

Abstract: To circumvent the non-parallelizability of recurrent neural network-based equalizers, we propose knowledge distillation to recast the RNN into a parallelizable feedforward structure. The latter shows a 38% latency decrease, while impacting the Q-factor by only 0.5 dB.
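The abstract does not state the training objective used for distillation. As a minimal sketch of the general idea, assuming a regression setting in which the feedforward student is fitted to a weighted mix of the recurrent teacher's outputs and the true symbols, the loss could look as follows. The names `distillation_loss` and `alpha` are hypothetical.

```python
def mse(a, b):
    """Mean squared error between two equal-length sequences of floats."""
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)


def distillation_loss(student_out, teacher_out, target, alpha=0.5):
    """Weighted sum of teacher-matching and ground-truth-matching errors.

    alpha = 1.0 trains the student purely on the teacher's outputs;
    alpha = 0.0 falls back to ordinary supervised training.
    """
    return alpha * mse(student_out, teacher_out) + (1 - alpha) * mse(student_out, target)


if __name__ == "__main__":
    student = [0.9, -1.1]
    teacher = [1.0, -1.0]
    target = [1.0, -1.0]
    print(distillation_loss(student, teacher, target, alpha=0.7))
```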

Reducing Computational Complexity of Neural Networks in Optical Channel Equalization: From Concepts to Implementation

Aug 26, 2022

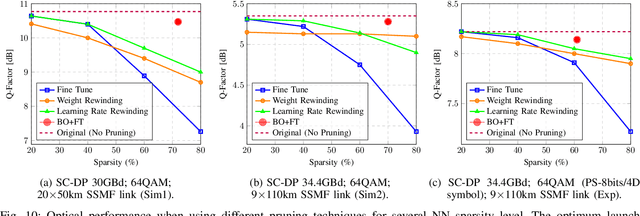

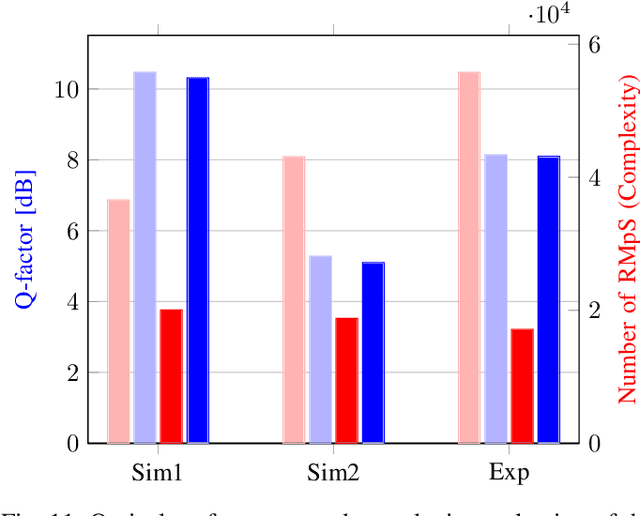

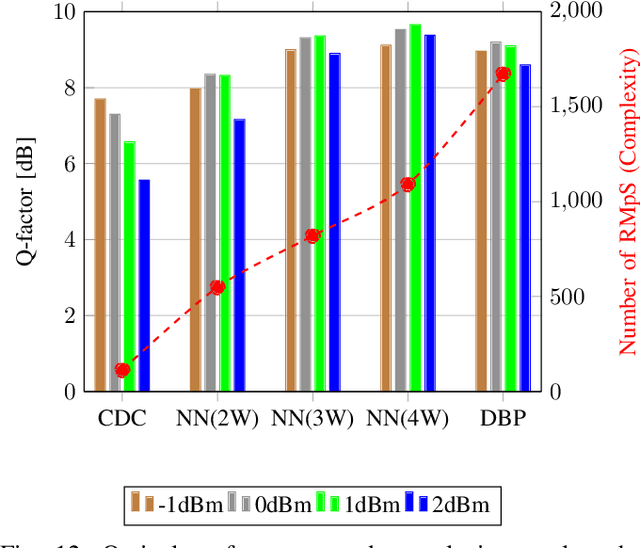

Abstract: In this paper, we propose a new methodology for the low-complexity development of neural network (NN)-based equalizers for the mitigation of impairments in high-speed coherent optical transmission systems. We provide a comprehensive description and comparison of various deep model compression approaches that have been applied to feed-forward and recurrent NN designs, and we evaluate the influence these strategies have on the performance of each NN equalizer. Quantization, weight clustering, pruning, and other cutting-edge strategies for model compression are taken into consideration. We also propose and evaluate a Bayesian optimization-assisted compression, in which the hyperparameters of the compression are chosen to simultaneously reduce complexity and improve performance. The trade-off between the complexity of each compression approach and its performance is evaluated using both simulated and experimental data. By using optimal compression approaches, we show that it is possible to design an NN-based equalizer that is simpler to implement and performs better than the conventional digital back-propagation (DBP) equalizer with only one step per span. This is accomplished by reducing the number of multipliers used in the NN equalizer after applying the weight clustering and pruning algorithms. Furthermore, we demonstrate that an NN-based equalizer can also achieve superior performance while maintaining the same degree of complexity as the full electronic chromatic dispersion compensation block. We conclude our analysis by highlighting open questions and existing challenges, as well as possible future research directions.
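Two of the compression steps named in the abstract, pruning and quantization, can be sketched on a flat list of weights. This is an illustration under stated assumptions rather than the paper's procedure: it uses plain magnitude pruning and symmetric uniform quantization, and the function names and example weights are hypothetical.

```python
def magnitude_prune(weights, sparsity):
    """Zero out the fraction `sparsity` of weights with the smallest magnitude."""
    k = int(len(weights) * sparsity)
    if k == 0:
        return list(weights)
    threshold = sorted(abs(w) for w in weights)[k - 1]
    pruned, removed = [], 0
    for w in weights:
        # The counter keeps exactly k weights pruned even when magnitudes tie.
        if abs(w) <= threshold and removed < k:
            pruned.append(0.0)
            removed += 1
        else:
            pruned.append(w)
    return pruned


def quantize_uniform(weights, n_bits):
    """Symmetric uniform quantization to signed n_bits levels."""
    max_abs = max(abs(w) for w in weights)
    if max_abs == 0:
        return list(weights)
    levels = 2 ** (n_bits - 1) - 1
    scale = max_abs / levels
    return [round(w / scale) * scale for w in weights]


if __name__ == "__main__":
    w = [0.5, -0.05, 0.2, -0.9, 0.01, 0.3]
    print(magnitude_prune(w, 0.5))
    print(quantize_uniform(w, 4))
```

Pruned weights need no multiplier at all, and quantized weights need narrower ones, which is how such steps translate into the multiplier savings the abstract describes.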

Computational Complexity Evaluation of Neural Network Applications in Signal Processing

Jun 24, 2022

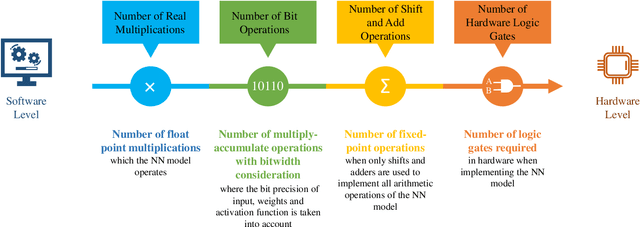

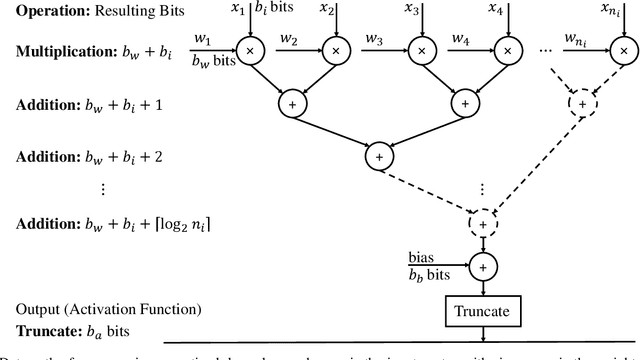

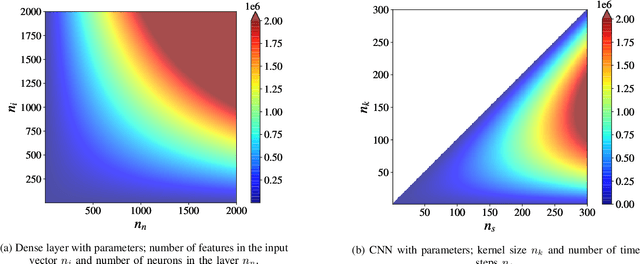

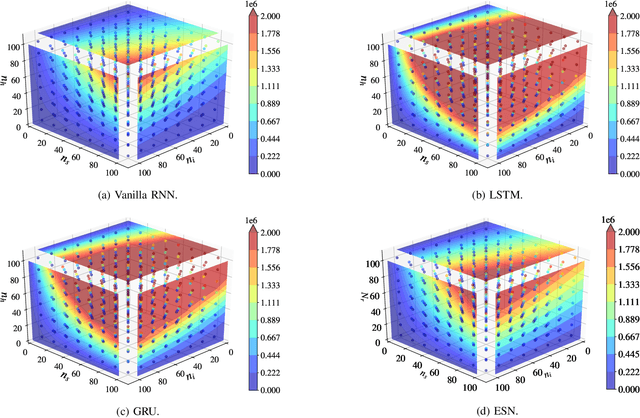

Abstract: In this paper, we provide a systematic approach for assessing and comparing the computational complexity of neural network layers in digital signal processing. We provide and link four software-to-hardware complexity measures, defining how the different complexity metrics relate to the layers' hyper-parameters. This paper explains how to compute these four metrics for feed-forward and recurrent layers, and defines in which case we ought to use a particular metric depending on whether we characterize a more soft- or hardware-oriented application. One of the four metrics, called "the number of additions and bit shifts (NABS)", is newly introduced for heterogeneous quantization. NABS characterizes the impact of not only the bitwidth used in the operation but also the type of quantization used in the arithmetical operations. We intend this work to serve as a baseline for the different levels (purposes) of complexity estimation related to the neural networks' application in real-time digital signal processing, aiming at unifying the computational complexity estimation.
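The abstract does not give the formulas for its four metrics, so the sketch below does not attempt to reproduce them. It only counts real multiplications per inference as a function of layer hyper-parameters, for a dense layer and for a 1D convolution with stride 1 and no padding (both assumptions), to show the kind of relationship such metrics are built on. The function names are hypothetical.

```python
def dense_multiplications(n_inputs: int, n_neurons: int) -> int:
    """Each output neuron multiplies every input by one weight."""
    return n_inputs * n_neurons


def conv1d_multiplications(input_len: int, in_channels: int,
                           n_filters: int, kernel_size: int) -> int:
    """1D convolution, assuming stride 1 and no padding."""
    output_len = input_len - kernel_size + 1
    if output_len <= 0:
        raise ValueError("kernel is longer than the input")
    return output_len * n_filters * kernel_size * in_channels


if __name__ == "__main__":
    print(dense_multiplications(10, 5))
    print(conv1d_multiplications(input_len=10, in_channels=2, n_filters=3, kernel_size=3))
```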

Towards FPGA Implementation of Neural Network-Based Nonlinearity Mitigation Equalizers in Coherent Optical Transmission Systems

Jun 24, 2022

Abstract: For the first time, recurrent and feedforward neural network-based equalizers for nonlinearity compensation are implemented in an FPGA, with a level of complexity comparable to that of a dispersion equalizer. We demonstrate that the NN-based equalizers can outperform a 1 step-per-span DBP.

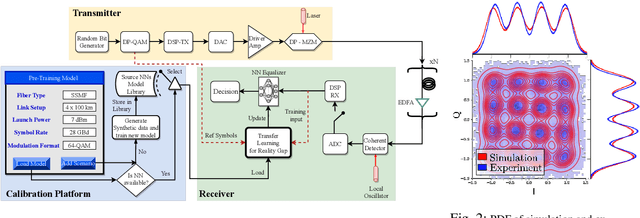

Domain Adaptation: the Key Enabler of Neural Network Equalizers in Coherent Optical Systems

Feb 25, 2022

Abstract: We introduce the domain adaptation and randomization approach for calibrating neural network-based equalizers for real transmissions, using synthetic data. The approach reduces the training process by up to 99%, which we demonstrate in three experimental setups.

Neural networks based post-equalization in coherent optical systems: regression versus classification

Oct 17, 2021

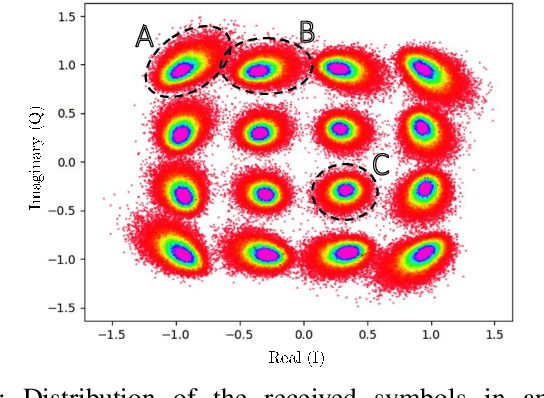

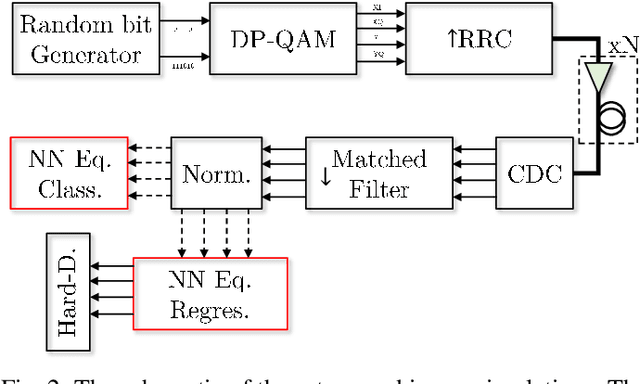

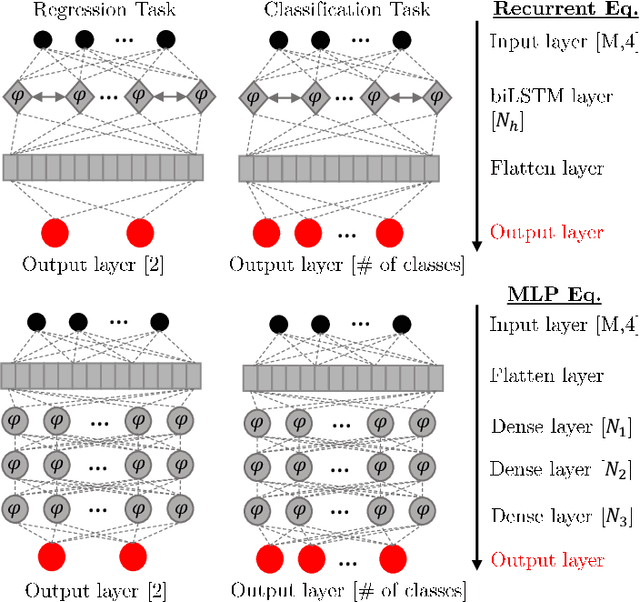

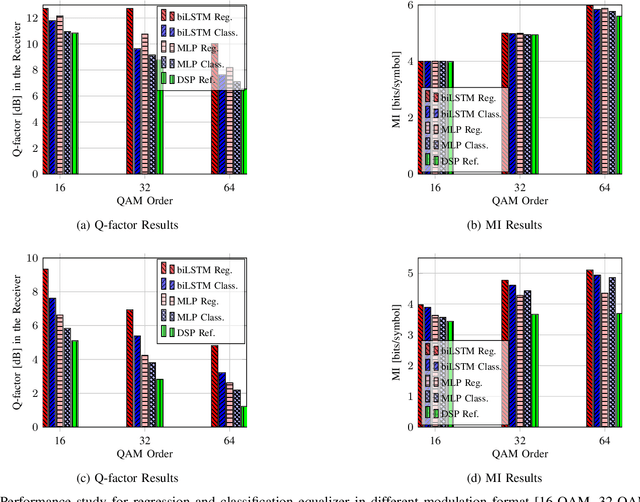

Abstract: In this paper, we address the question of which type of predictive modeling, classification or regression, better fits the task of equalization using neural network (NN)-based post-processing in coherent optical communication, where the transmission channel is nonlinear and dispersive. For the first time, we present some possible drawbacks of each type of predictive task in a machine learning context for the nonlinear channel equalization problem. We study two types of equalizers, based on feed-forward and recurrent neural networks, over several transmission scenarios in the linear and nonlinear regimes of the optical channel. In all those cases, we observe that training based on regression results in faster convergence and, ultimately, superior performance in terms of Q-factor and achievable information rate.
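The two predictive tasks compared in the abstract differ mainly in what the network outputs and which loss it is trained with. As a rough sketch, assuming the regression equalizer outputs an (I, Q) pair trained with mean squared error and the classification equalizer outputs one logit per constellation point trained with cross-entropy (the abstract does not name the losses), the per-symbol losses could be written as follows. The function names are hypothetical.

```python
import math


def mse_loss(pred_iq, target_iq):
    """Regression: squared distance between predicted and transmitted (I, Q)."""
    return ((pred_iq[0] - target_iq[0]) ** 2 + (pred_iq[1] - target_iq[1]) ** 2) / 2


def cross_entropy_loss(logits, true_index):
    """Classification: negative log-probability of the transmitted symbol.

    Uses the log-sum-exp trick for numerical stability.
    """
    m = max(logits)
    log_sum = m + math.log(sum(math.exp(z - m) for z in logits))
    return log_sum - logits[true_index]


if __name__ == "__main__":
    print(mse_loss((0.9, -1.2), (1.0, -1.0)))
    print(cross_entropy_loss([2.0, 0.1, -1.0, 0.3], true_index=0))
```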

Neural Networks-based Equalizers for Coherent Optical Transmission: Caveats and Pitfalls

Sep 30, 2021

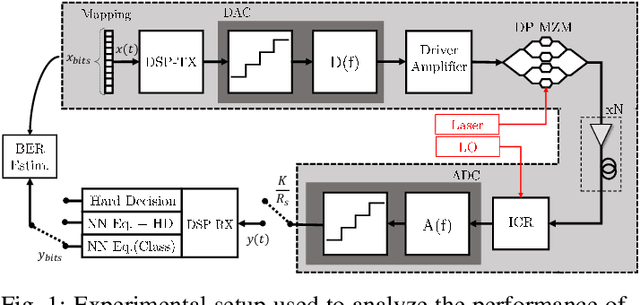

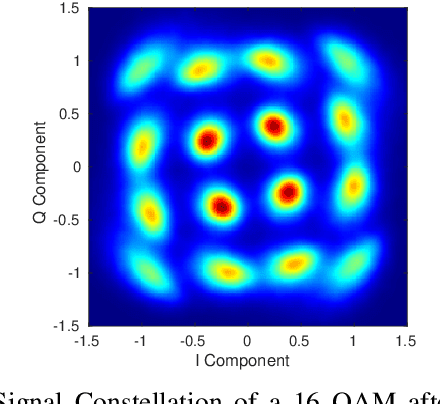

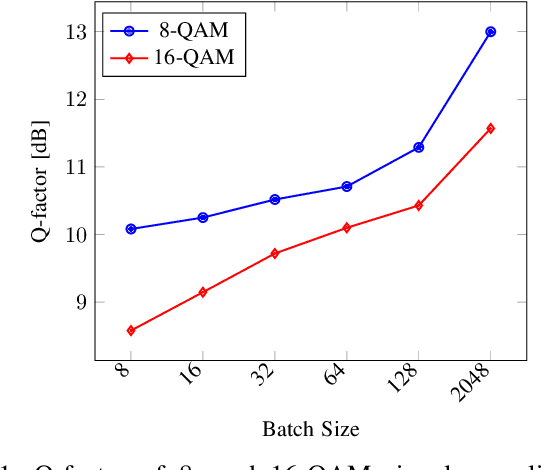

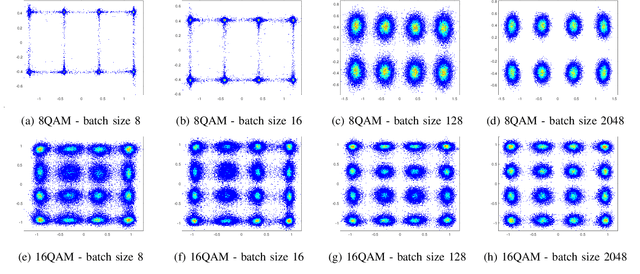

Abstract: This paper performs a detailed multi-faceted analysis of the key challenges and common design caveats related to the development of efficient neural network (NN) nonlinear channel equalizers in coherent optical communication systems. Our study aims to guide researchers and engineers working in this field. We start by clarifying the metrics used to evaluate the equalizers' performance, relating them to the loss functions employed in the training of the NN equalizers. The relationships between the channel propagation model's accuracy and the performance of the equalizers are addressed and quantified. Next, we assess the impact of the order of the pseudo-random bit sequence used to generate the data (numerical and experimental), as well as of the DAC memory limitations, on the operation of the NN equalizers during both the training and validation phases. Finally, we examine the critical issues of overfitting limitations, the difference between using classification and regression, and the batch size-related peculiarities. We conclude by providing analytical expressions for evaluating the equalizers' complexity in digital signal processing (DSP) terms.
