Paweł Teisseyre

A generalized approach to label shift: the Conditional Probability Shift Model

Mar 04, 2025Abstract:In many practical applications of machine learning, a discrepancy often arises between a source distribution from which labeled training examples are drawn and a target distribution for which only unlabeled data is observed. Traditionally, two main scenarios have been considered to address this issue: covariate shift (CS), where only the marginal distribution of features changes, and label shift (LS), which involves a change in the class variable's prior distribution. However, these frameworks do not encompass all forms of distributional shift. This paper introduces a new setting, Conditional Probability Shift (CPS), which captures the case when the conditional distribution of the class variable given some specific features changes while the distribution of remaining features given the specific features and the class is preserved. For this scenario we present the Conditional Probability Shift Model (CPSM) based on modeling the class variable's conditional probabilities using multinomial regression. Since the class variable is not observed for the target data, the parameters of the multinomial model for its distribution are estimated using the Expectation-Maximization algorithm. The proposed method is generic and can be combined with any probabilistic classifier. The effectiveness of CPSM is demonstrated through experiments on synthetic datasets and a case study using the MIMIC medical database, revealing its superior balanced classification accuracy on the target data compared to existing methods, particularly in situations situations of conditional distribution shift and no apriori distribution shift, which are not detected by LS-based methods.

Class prior estimation for positive-unlabeled learning when label shift occurs

Feb 28, 2025

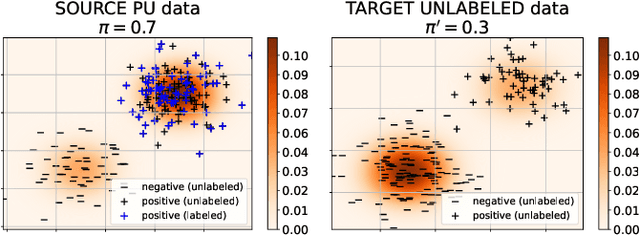

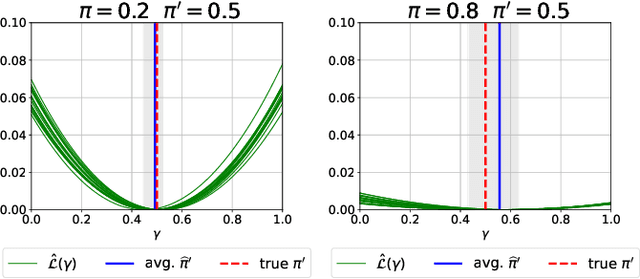

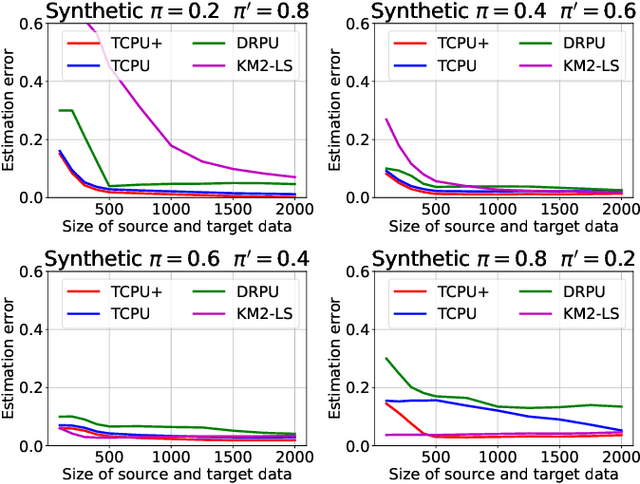

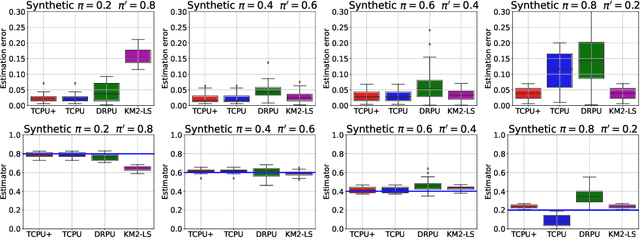

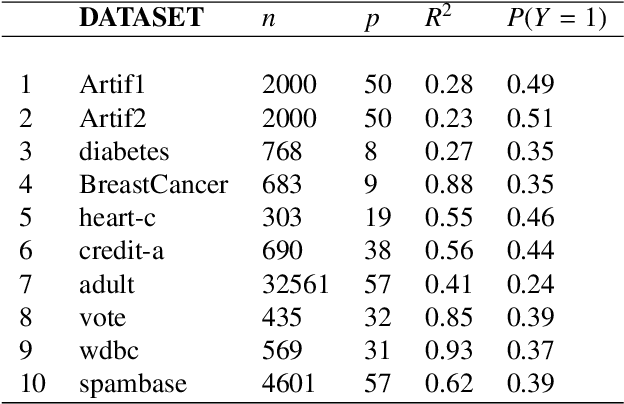

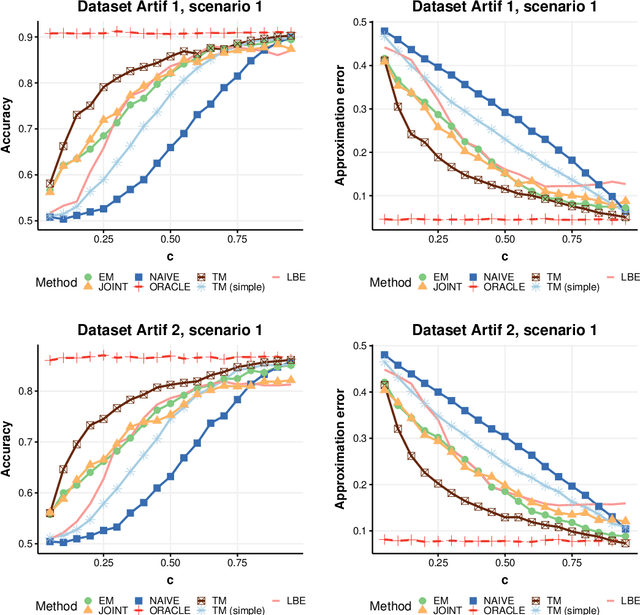

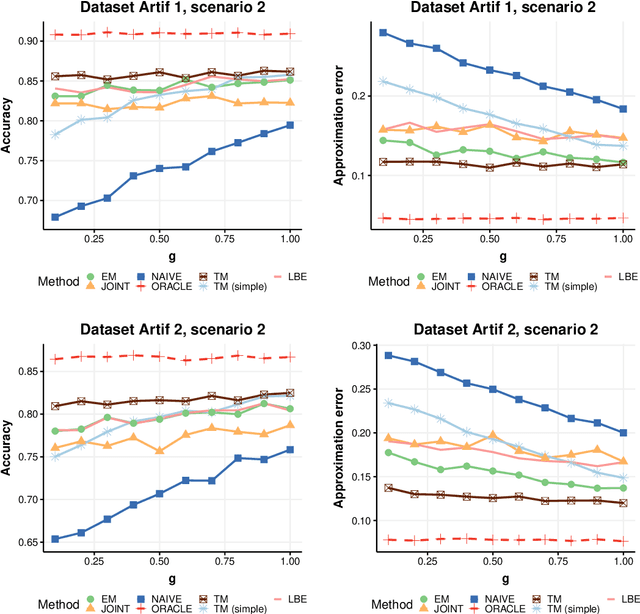

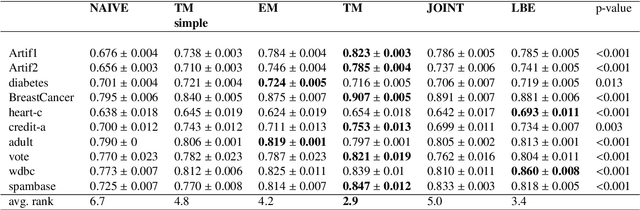

Abstract:We study estimation of class prior for unlabeled target samples which is possibly different from that of source population. It is assumed that for the source data only samples from positive class and from the whole population are available (PU learning scenario). We introduce a novel direct estimator of class prior which avoids estimation of posterior probabilities and has a simple geometric interpretation. It is based on a distribution matching technique together with kernel embedding and is obtained as an explicit solution to an optimisation task. We establish its asymptotic consistency as well as a non-asymptotic bound on its deviation from the unknown prior, which is calculable in practice. We study finite sample behaviour for synthetic and real data and show that the proposal, together with a suitably modified version for large values of source prior, works on par or better than its competitors.

Cost-constrained multi-label group feature selection using shadow features

Aug 03, 2024

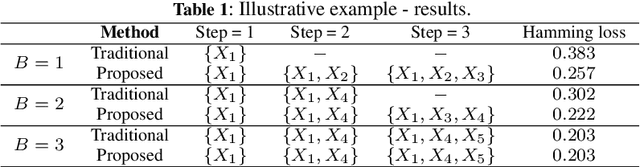

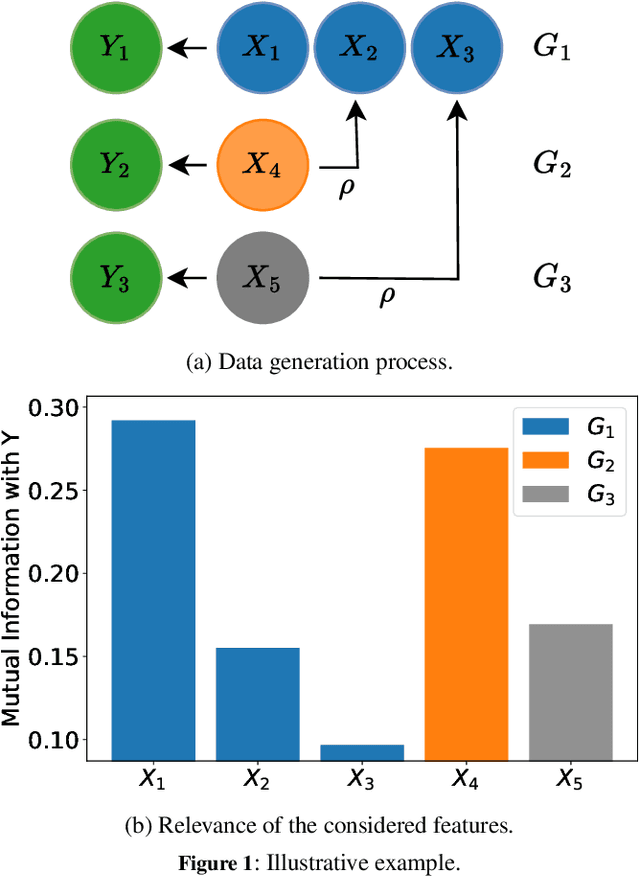

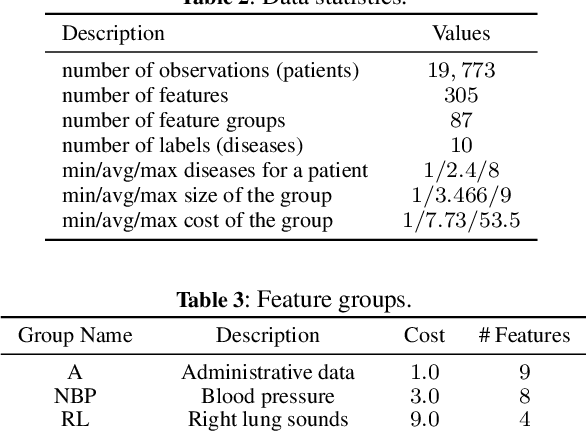

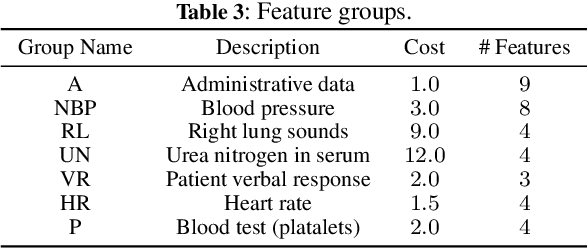

Abstract:We consider the problem of feature selection in multi-label classification, considering the costs assigned to groups of features. In this task, the goal is to select a subset of features that will be useful for predicting the label vector, but at the same time, the cost associated with the selected features will not exceed the assumed budget. Solving the problem is of great importance in medicine, where we may be interested in predicting various diseases based on groups of features. The groups may be associated with parameters obtained from a certain diagnostic test, such as a blood test. Because diagnostic test costs can be very high, considering cost information when selecting relevant features becomes crucial to reducing the cost of making predictions. We focus on the feature selection method based on information theory. The proposed method consists of two steps. First, we select features sequentially while maximizing conditional mutual information until the budget is exhausted. In the second step, we select additional cost-free features, i.e., those coming from groups that have already been used in previous steps. Limiting the number of added features is possible using the stop rule based on the concept of so-called shadow features, which are randomized counterparts of the original ones. In contrast to existing approaches based on penalized criteria, in our method, we avoid the need for computationally demanding optimization of the penalty parameter. Experiments conducted on the MIMIC medical database show the effectiveness of the method, especially when the assumed budget is limited.

Verifying the Selected Completely at Random Assumption in Positive-Unlabeled Learning

Mar 29, 2024Abstract:The goal of positive-unlabeled (PU) learning is to train a binary classifier on the basis of training data containing positive and unlabeled instances, where unlabeled observations can belong either to the positive class or to the negative class. Modeling PU data requires certain assumptions on the labeling mechanism that describes which positive observations are assigned a label. The simplest assumption, considered in early works, is SCAR (Selected Completely at Random Assumption), according to which the propensity score function, defined as the probability of assigning a label to a positive observation, is constant. On the other hand, a much more realistic assumption is SAR (Selected at Random), which states that the propensity function solely depends on the observed feature vector. SCAR-based algorithms are much simpler and computationally much faster compared to SAR-based algorithms, which usually require challenging estimation of the propensity score. In this work, we propose a relatively simple and computationally fast test that can be used to determine whether the observed data meet the SCAR assumption. Our test is based on generating artificial labels conforming to the SCAR case, which in turn allows to mimic the distribution of the test statistic under the null hypothesis of SCAR. We justify our method theoretically. In experiments, we demonstrate that the test successfully detects various deviations from SCAR scenario and at the same time it is possible to effectively control the type I error. The proposed test can be recommended as a pre-processing step to decide which final PU algorithm to choose in cases when nature of labeling mechanism is not known.

Joint empirical risk minimization for instance-dependent positive-unlabeled data

Dec 27, 2023Abstract:Learning from positive and unlabeled data (PU learning) is actively researched machine learning task. The goal is to train a binary classification model based on a training dataset containing part of positives which are labeled, and unlabeled instances. Unlabeled set includes remaining part of positives and all negative observations. An important element in PU learning is modeling of the labeling mechanism, i.e. labels' assignment to positive observations. Unlike in many prior works, we consider a realistic setting for which probability of label assignment, i.e. propensity score, is instance-dependent. In our approach we investigate minimizer of an empirical counterpart of a joint risk which depends on both posterior probability of inclusion in a positive class as well as on a propensity score. The non-convex empirical risk is alternately optimised with respect to parameters of both functions. In the theoretical analysis we establish risk consistency of the minimisers using recently derived methods from the theory of empirical processes. Besides, the important development here is a proposed novel implementation of an optimisation algorithm, for which sequential approximation of a set of positive observations among unlabeled ones is crucial. This relies on modified technique of 'spies' as well as on a thresholding rule based on conditional probabilities. Experiments conducted on 20 data sets for various labeling scenarios show that the proposed method works on par or more effectively than state-of-the-art methods based on propensity function estimation.

Joint estimation of posterior probability and propensity score function for positive and unlabelled data

Sep 16, 2022

Abstract:Positive and unlabelled learning is an important problem which arises naturally in many applications. The significant limitation of almost all existing methods lies in assuming that the propensity score function is constant (SCAR assumption), which is unrealistic in many practical situations. Avoiding this assumption, we consider parametric approach to the problem of joint estimation of posterior probability and propensity score functions. We show that under mild assumptions when both functions have the same parametric form (e.g. logistic with different parameters) the corresponding parameters are identifiable. Motivated by this, we propose two approaches to their estimation: joint maximum likelihood method and the second approach based on alternating maximization of two Fisher consistent expressions. Our experimental results show that the proposed methods are comparable or better than the existing methods based on Expectation-Maximisation scheme.

Asymptotic consistency and order specification for logistic classifier chains in multi-label learning

Feb 24, 2016

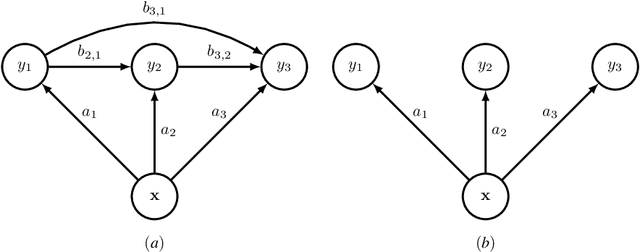

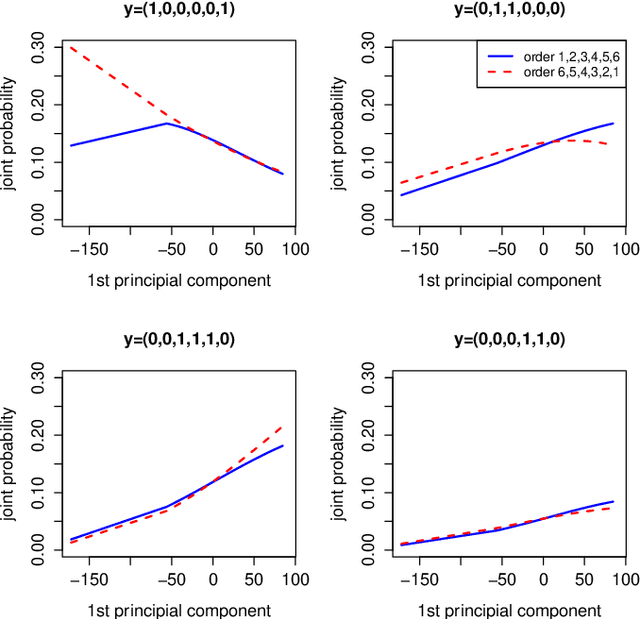

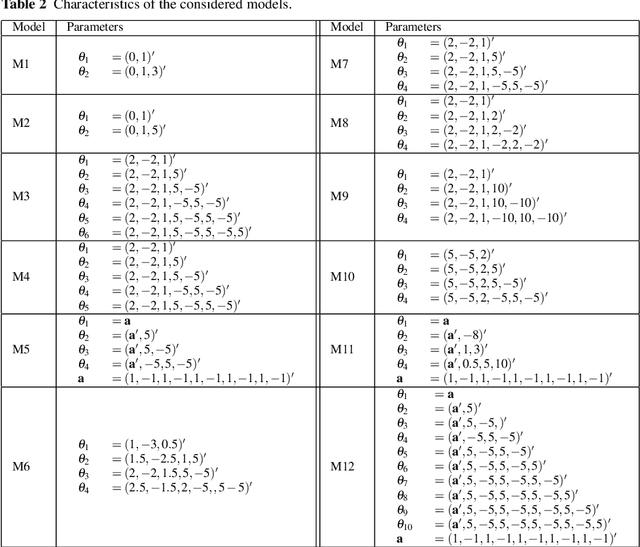

Abstract:Classifier chains are popular and effective method to tackle a multi-label classification problem. The aim of this paper is to study the asymptotic properties of the chain model in which the conditional probabilities are of the logistic form. In particular we find conditions on the number of labels and the distribution of feature vector under which the estimated mode of the joint distribution of labels converges to the true mode. Best of our knowledge, this important issue has not yet been studied in the context of multi-label learning. We also investigate how the order of model building in a chain influences the estimation of the joint distribution of labels. We establish the link between the problem of incorrect ordering in the chain and incorrect model specification. We propose a procedure of determining the optimal ordering of labels in the chain, which is based on using measures of correct specification and allows to find the ordering such that the consecutive logistic models are best possibly specified. The other important question raised in this paper is how accurately can we estimate the joint posterior probability when the ordering of labels is wrong or the logistic models in the chain are incorrectly specified. The numerical experiments illustrate the theoretical results.

Feature ranking for multi-label classification using Markov Networks

Feb 24, 2016

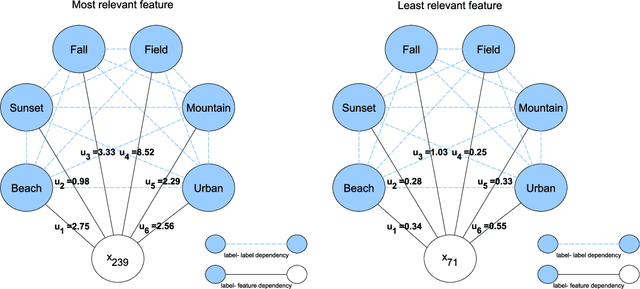

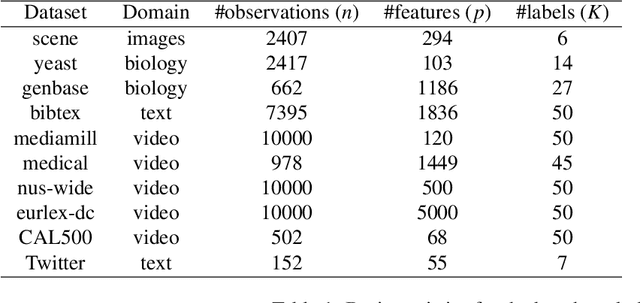

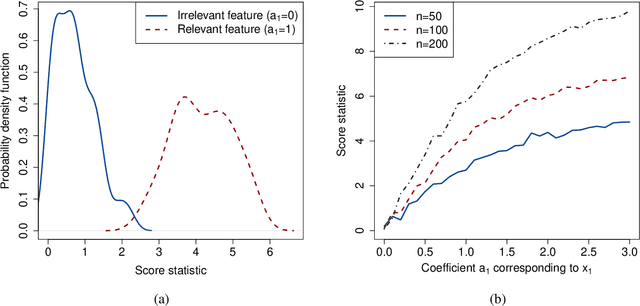

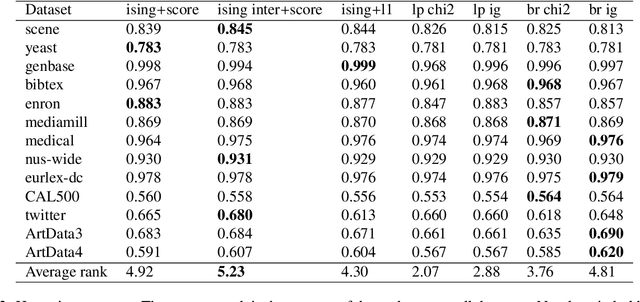

Abstract:We propose a simple and efficient method for ranking features in multi-label classification. The method produces a ranking of features showing their relevance in predicting labels, which in turn allows to choose a final subset of features. The procedure is based on Markov Networks and allows to model the dependencies between labels and features in a direct way. In the first step we build a simple network using only labels and then we test how much adding a single feature affects the initial network. More specifically, in the first step we use the Ising model whereas the second step is based on the score statistic, which allows to test a significance of added features very quickly. The proposed approach does not require transformation of label space, gives interpretable results and allows for attractive visualization of dependency structure. We give a theoretical justification of the procedure by discussing some theoretical properties of the Ising model and the score statistic. We also discuss feature ranking procedure based on fitting Ising model using $l_1$ regularized logistic regressions. Numerical experiments show that the proposed methods outperform the conventional approaches on the considered artificial and real datasets.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge