Paramita Das

Social Biases in Knowledge Representations of Wikidata separates Global North from Global South

May 05, 2025

Abstract: Knowledge graphs have become increasingly popular due to their wide use in downstream applications, including information retrieval, chatbot development, language model construction, and many others. Link prediction (LP) is a crucial downstream task for knowledge graphs, as it helps address their incompleteness. However, previous research has shown that knowledge graphs, often created in a (semi-)automatic manner, are not free from social biases. These biases can have harmful effects on downstream applications, especially by leading to unfair behavior toward minority groups. To understand this issue in detail, we develop a framework, AuditLP, that deploys fairness metrics to identify biased outcomes in LP, specifically how occupations are classified as either male- or female-dominated with gender as the sensitive attribute. We also experiment with age as a sensitive attribute and observe that occupations are categorized as young-biased, old-biased, or age-neutral. We conduct our experiments on a large number of knowledge triples belonging to 21 different geographies, extracted from the open-source knowledge graph Wikidata. Our study shows that the variance in biased outcomes across geographies closely mirrors the socio-economic and cultural division of the world, yielding a clear partition of the Global North from the Global South.
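The abstract does not spell out AuditLP's fairness metrics. As a hypothetical illustration only, the sketch below labels one occupation from the sensitive-attribute values (e.g. "male"/"female" or "young"/"old") of the entities a link-prediction model returns for it: a signed share difference outside an assumed neutrality band yields a biased label, otherwise the occupation is labeled neutral. The function name `bias_label` and the default `tolerance` of 0.1 are assumptions, not values from the paper.

```python
from collections import Counter


def bias_label(predicted_groups, group_a, group_b, tolerance=0.1):
    """Hypothetical bias label for one occupation (not AuditLP's actual metric).

    predicted_groups: sensitive-attribute values of the entities that a
    link-prediction model predicts for this occupation.
    tolerance: assumed half-width of the neutral band around zero.
    """
    counts = Counter(predicted_groups)
    total = counts[group_a] + counts[group_b]
    if total == 0:
        # No predictions carrying either attribute value: nothing to judge.
        return "undetermined"
    # Signed share difference in [-1, 1]; positive means group_a dominates.
    score = (counts[group_a] - counts[group_b]) / total
    if score > tolerance:
        return f"{group_a}-biased"
    if score < -tolerance:
        return f"{group_b}-biased"
    return "neutral"
```

For example, eight "male" and two "female" predictions give a score of 0.6 and the label "male-biased", while an even split of "young" and "old" falls inside the band and is labeled "neutral". A real audit would also need to account for the base rates of each group in the graph, which this sketch ignores.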

REVERSUM: A Multi-staged Retrieval-Augmented Generation Method to Enhance Wikipedia Tail Biographies through Personal Narratives

Feb 17, 2025

Abstract: Wikipedia is an invaluable resource for factual information about a wide range of entities. However, the quality of articles on lesser-known entities often lags behind that of well-known ones. This study proposes a novel approach to enhancing Wikipedia's B- and C-class biography articles by leveraging personal narratives such as autobiographies and biographies. Using a multi-staged retrieval-augmented generation technique, REVerSum, we aim to enrich the informational content of these lesser-known articles. Our study reveals that personal narratives can significantly improve the quality of Wikipedia articles, providing a rich source of reliable information that has been underutilized in previous studies. In a crowd-based evaluation, REVerSum-generated content outperforms the best-performing baseline by 17% in integrability into the original Wikipedia article and by 28.5% in informativeness. Code and data are available at: https://github.com/sayantan11995/wikipedia_enrichment

* Accepted at COLING 2025 Industry Track

On the effective transfer of knowledge from English to Hindi Wikipedia

Dec 07, 2024

Abstract: Although Wikipedia is the largest multilingual encyclopedia, it remains inherently incomplete. There is a significant disparity in content quality between high-resource languages (HRLs, e.g., English) and low-resource languages (LRLs, e.g., Hindi), with many LRL articles lacking adequate information. To bridge these content gaps, we propose a lightweight framework to enhance knowledge equity between English and Hindi. If the English Wikipedia page is not up to date, our framework extracts relevant information from readily available external resources (such as English books) and adapts it to Wikipedia's distinctive style, including its neutral point of view (NPOV) policy, using the in-context learning capabilities of large language models. The adapted content is then machine-translated into Hindi for integration into the corresponding Wikipedia articles. If, on the other hand, the English version is comprehensive and up to date, the framework transfers knowledge directly from English to Hindi. Our framework effectively generates new content for Hindi Wikipedia sections, improving Hindi Wikipedia articles by 65% and 62% according to automatic and human judgment-based evaluations, respectively.

Diversity matters: Robustness of bias measurements in Wikidata

Feb 27, 2023

Abstract: With the widespread use of knowledge graphs (KGs) in various automated AI systems and applications, it is very important to ensure that information retrieval algorithms leveraging them are free from societal biases. Previous works have revealed biases that persist in KGs and have employed several metrics for measuring them. However, such studies lack a systematic exploration of how sensitive bias measurements are to the source of the data or the embedding algorithm used. To address this research gap, we present a holistic analysis of bias measurement on knowledge graphs. First, we attempt to reveal data biases that surface in Wikidata for thirteen different demographics selected from seven continents. Next, we attempt to unfold the variance in bias detection by two different knowledge graph embedding algorithms, TransE and ComplEx. We conduct extensive experiments on a large number of occupations sampled from the thirteen demographics with respect to the sensitive attribute of gender. Our results show that the inherent data bias that persists in a KG can be altered by the specific algorithmic bias incorporated by KG embedding learning algorithms. Further, we show that the choice of state-of-the-art KG embedding algorithm has a strong impact on the ranking of biased occupations irrespective of gender. We observe that the similarity of biased occupations across demographics is minimal, which reflects the socio-cultural differences around the globe. We believe that this full-scale audit of the bias measurement pipeline will raise awareness in the community, provide insights into design choices for both data and algorithms, and help move away from the popular dogma of "one-size-fits-all".

* 11 pages
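TransE and ComplEx differ in how they score a (head, relation, tail) triple, which is one reason they can rank biased occupations differently. The sketch below shows the standard scoring functions only, not the paper's training or evaluation setup. TransE treats a relation as a translation and scores a triple by the negative distance between h + r and t; using the L2 norm here is an assumption, since TransE is also commonly used with L1. ComplEx uses complex-valued embeddings and scores a triple by the real part of the trilinear product with the conjugated tail. The function names are illustrative.

```python
import math


def transe_score(h, r, t):
    """TransE plausibility: negative L2 distance between h + r and t.

    Higher (closer to zero) means the triple is more plausible.
    """
    return -math.sqrt(sum((hi + ri - ti) ** 2 for hi, ri, ti in zip(h, r, t)))


def complex_score(h, r, t):
    """ComplEx plausibility: Re(sum_i h_i * r_i * conj(t_i)).

    Embeddings are sequences of Python complex numbers (ints and floats
    also work, since they support .conjugate()).
    """
    return sum(hi * ri * ti.conjugate() for hi, ri, ti in zip(h, r, t)).real
```

Because the tail is conjugated, ComplEx can score (h, r, t) and (t, r, h) differently. With h = [1], t = [1j] and a purely imaginary relation r = [1j], `complex_score([1], [1j], [1j])` is 1.0 while `complex_score([1j], [1j], [1])` is -1.0. TransE, by contrast, cannot make h + r ≈ t and t + r ≈ h both hold unless r ≈ 0.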

Quality change: norm or exception? Measurement, Analysis and Detection of Quality Change in Wikipedia

Nov 02, 2021

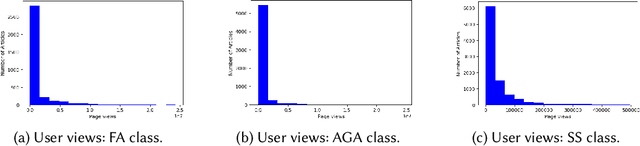

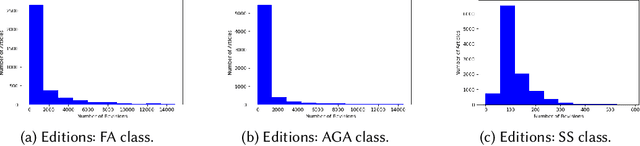

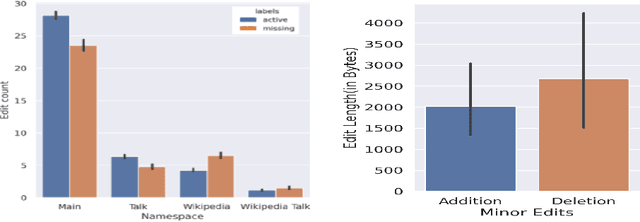

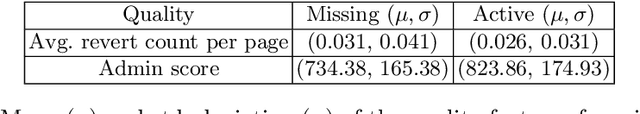

Abstract: Wikipedia has become an immensely popular crowd-sourced encyclopedia for disseminating information on numerous diverse topics as subscription-free content. It allows anyone to contribute so that articles remain comprehensive and up to date. To enrich content without compromising standards, the Wikipedia community maintains a detailed set of guidelines that contributors should follow. Based on these, Wikipedia editors categorize articles into several quality classes reflecting increasing adherence to the guidelines. This quality assessment task is laborious and demands platform expertise. As a first objective, in this paper, we study the evolution of Wikipedia articles with respect to such quality scales. Our results show novel, non-intuitive patterns emerging from this exploration. As a second objective, we attempt to develop an automated, data-driven approach for detecting early signals of quality change in articles. We pose this as a change point detection problem, representing an article as a time series of consecutive revisions and encoding every revision by a set of intuitive features. Finally, we apply various change point detection algorithms to efficiently and accurately detect future change points. We also perform ablation studies to understand which groups of features are most effective in identifying change points. To the best of our knowledge, this is the first work that rigorously explores the quality life cycle of English Wikipedia articles from the perspective of quality indicators and provides a novel unsupervised, page-level approach to detecting quality switches, which can help automate content monitoring in Wikipedia and thus contributes significantly to the CSCW community.
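The abstract frames quality change as change point detection over a time series of revisions but does not name the algorithms used. As a generic, hypothetical sketch of the idea, the function below finds the single split of a revision-level feature series that minimizes the total within-segment squared error of a two-mean model. It handles one feature per revision (for instance, a hypothetical count of references per revision); the paper encodes each revision with a set of features, so a practical pipeline would be multivariate and would usually look for more than one change point.

```python
def best_split(series, min_size=2):
    """Return the index k that best splits `series` into two constant-mean parts.

    The cost of a split is the sum of squared deviations from each segment's
    mean; the split with the lowest cost is the most likely single change
    point. Both segments must contain at least `min_size` values.
    """

    def sse(segment):
        mean = sum(segment) / len(segment)
        return sum((x - mean) ** 2 for x in segment)

    n = len(series)
    if n < 2 * min_size:
        raise ValueError("series too short for the requested min_size")

    best_k, best_cost = None, float("inf")
    # Candidate split k puts series[:k] in the first segment and series[k:] in the second.
    for k in range(min_size, n - min_size + 1):
        cost = sse(series[:k]) + sse(series[k:])
        if cost < best_cost:
            best_k, best_cost = k, cost
    return best_k
```

For a series such as `[1, 1, 1, 1, 5, 5, 5, 5]`, the function returns 4, the index of the first revision after the jump. This exhaustive search is quadratic in the series length; dedicated methods such as binary segmentation or PELT scale better and handle multiple change points.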

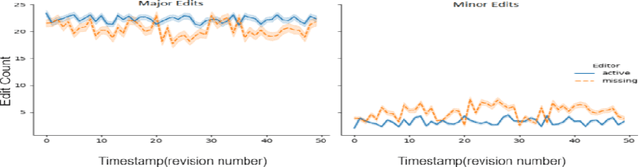

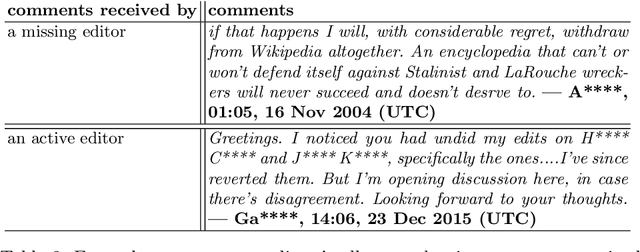

When expertise gone missing: Uncovering the loss of prolific contributors in Wikipedia

Sep 21, 2021

Abstract: The success of planetary-scale online collaborative platforms such as Wikipedia hinges on the active and continued participation of their voluntary contributors. The phenomenal success of Wikipedia as a valued multilingual source of information is a testament to the possibilities of collective intelligence. Specifically, sustained and prudent contributions by experienced, prolific editors have played a crucial role in operating the platform smoothly for decades. However, it has been brought to light that Wikipedia's growth is stagnating, with the number of editors steadily declining over time. This decreasing productivity and ever-increasing attrition rate among both newcomers and experienced editors is a major concern not only for the future of the platform but also for several industry-scale information retrieval systems, such as Siri and Alexa, that depend on Wikipedia as a knowledge store. In this paper, we study the ongoing crisis in which experienced and prolific editors withdraw. We perform an extensive analysis of editor activities and language usage to identify features that can forecast which prolific Wikipedians are at risk of ceasing their voluntary services. To the best of our knowledge, this is the first work that proposes a scalable prediction pipeline for detecting prolific Wikipedians who might be at risk of retiring from the platform, which could enable moderators to launch appropriate incentive mechanisms to retain such "would-be missing" valued Wikipedians.