Paolo Arcaini

Assessing Vision-Language Models for Perception in Autonomous Underwater Robotic Software

Feb 13, 2026

Abstract:Autonomous Underwater Robots (AURs) operate in challenging underwater environments, including low visibility and harsh water conditions. Such conditions present challenges for software engineers developing perception modules for the AUR software. To successfully carry out these tasks, deep learning has been incorporated into the AUR software to support its operations. However, the unique challenges of underwater environments pose difficulties for deep learning models, which often rely on labeled data that is scarce and noisy. This may undermine the trustworthiness of AUR software that relies on perception modules. Vision-Language Models (VLMs) offer promising solutions for AUR software as they generalize to unseen objects and remain robust in noisy conditions by inferring information from contextual cues. Despite this potential, their performance and uncertainty in underwater environments remain understudied from a software engineering perspective. Motivated by the needs of an industrial partner in assurance and risk management for maritime systems to assess the potential use of VLMs in this context, we present an empirical evaluation of VLM-based perception modules within the AUR software. We assess their ability to detect underwater trash by computing performance, uncertainty, and their relationship, to enable software engineers to select appropriate VLMs for their AUR software.

FairFLRep: Fairness aware fault localization and repair of Deep Neural Networks

Aug 11, 2025

Abstract:Deep neural networks (DNNs) are being utilized in various aspects of our daily lives, including high-stakes decision-making applications that impact individuals. However, these systems reflect and amplify bias from the data used during training and testing, potentially resulting in biased behavior and inaccurate decisions, such as different misclassification rates between white and black sub-populations. However, effectively and efficiently identifying and correcting biased behavior in DNNs is a challenge. This paper introduces FairFLRep, an automated fairness-aware fault localization and repair technique that identifies and corrects potentially bias-inducing neurons in DNN classifiers. FairFLRep focuses on adjusting neuron weights associated with sensitive attributes, such as race or gender, that contribute to unfair decisions. By analyzing the input-output relationships within the network, FairFLRep corrects neurons responsible for disparities in predictive quality parity. We evaluate FairFLRep on four image classification datasets using two DNN classifiers, and four tabular datasets with a DNN model. The results show that FairFLRep consistently outperforms existing methods in improving fairness while preserving accuracy. An ablation study confirms the importance of considering fairness during both fault localization and repair stages. Our findings also show that FairFLRep is more efficient than the baseline approaches in repairing the network.

MultiDrive: A Co-Simulation Framework Bridging 2D and 3D Driving Simulation for AV Software Validation

May 20, 2025

Abstract:Scenario-based testing using simulations is a cornerstone of Autonomous Vehicles (AVs) software validation. So far, developers needed to choose between low-fidelity 2D simulators to explore the scenario space efficiently, and high-fidelity 3D simulators to study relevant scenarios in more detail, thus reducing testing costs while mitigating the sim-to-real gap. This paper presents a novel framework that leverages multi-agent co-simulation and procedural scenario generation to support scenario-based testing across low- and high-fidelity simulators for the development of motion planning algorithms. Our framework limits the effort required to transition scenarios between simulators and automates experiment execution, trajectory analysis, and visualization. Experiments with a reference motion planner show that our framework uncovers discrepancies between the planner's intended and actual behavior, thus exposing weaknesses in planning assumptions under more realistic conditions. Our framework is available at: https://github.com/TUM-AVS/MultiDrive

A Machine Learning-Based Error Mitigation Approach For Reliable Software Development On IBM's Quantum Computers

Apr 19, 2024

Abstract:Quantum computers have the potential to outperform classical computers for some complex computational problems. However, current quantum computers (e.g., from IBM and Google) have inherent noise that results in errors in the outputs of quantum software executing on the quantum computers, affecting the reliability of quantum software development. The industry is increasingly interested in machine learning (ML)-based error mitigation techniques, given their scalability and practicality. However, existing ML-based techniques have limitations, such as only targeting specific noise types or specific quantum circuits. This paper proposes a practical ML-based approach, called Q-LEAR, with a novel feature set, to mitigate noise errors in quantum software outputs. We evaluated Q-LEAR on eight quantum computers and their corresponding noisy simulators, all from IBM, and compared Q-LEAR with a state-of-the-art ML-based approach taken as baseline. Results show that, compared to the baseline, Q-LEAR achieved a 25% average improvement in error mitigation on both real quantum computers and simulators. We also discuss the implications and practicality of Q-LEAR, which, we believe, is valuable for practitioners.

Using a Variational Autoencoder to Learn Valid Search Spaces of Safely Monitored Autonomous Robots for Last-Mile Delivery

Mar 06, 2023

Abstract:The use of autonomous robots for delivery of goods to customers is an exciting new way to provide a reliable and sustainable service. However, in the real world, autonomous robots still require human supervision for safety reasons. We tackle the real-world problem of optimizing autonomous robot timings to maximize deliveries, while ensuring that there are never too many robots running simultaneously so that they can be monitored safely. We assess the use of a recent hybrid machine-learning-optimization approach COIL (constrained optimization in learned latent space) and compare it with a baseline genetic algorithm for the purposes of exploring variations of this problem. We also investigate new methods for improving the speed and efficiency of COIL. We show that only COIL can find valid solutions where appropriate numbers of robots run simultaneously for all problem variations tested. We also show that when COIL has learned its latent representation, it can optimize 10% faster than the GA, making it a good choice for daily re-optimization of robots where delivery requests for each day are allocated to robots while maintaining safe numbers of robots running at once.

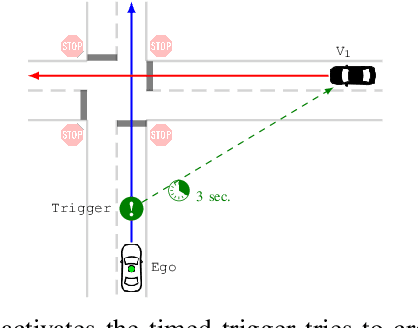

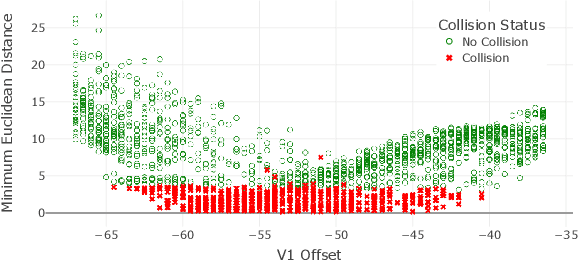

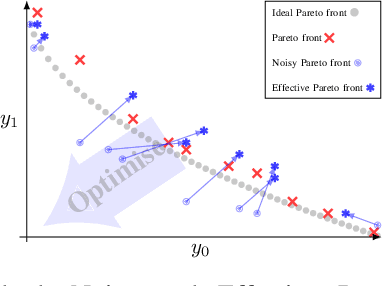

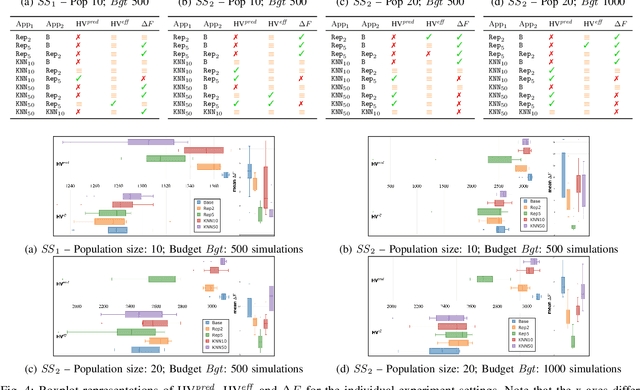

Handling Noise in Search-Based Scenario Generation for Autonomous Driving Systems

Sep 16, 2021

Abstract:This paper presents the first evaluation of k-nearest neighbours-Averaging (kNN-Avg) on a real-world case study. kNN-Avg is a novel technique that tackles the challenges of noisy multi-objective optimisation (MOO). Existing studies suggest the use of repetition to overcome noise. In contrast, kNN-Avg approximates these repetitions and exploits previous executions, thereby avoiding the cost of re-running. We use kNN-Avg for the scenario generation of a real-world autonomous driving system (ADS) and show that it is better than the noisy baseline. Furthermore, we compare it to the repetition-method and outline indicators as to which approach to choose in which situations.
