Marc Kaufeld

MultiDrive: A Co-Simulation Framework Bridging 2D and 3D Driving Simulation for AV Software Validation

May 20, 2025

Abstract:Scenario-based testing using simulations is a cornerstone of Autonomous Vehicles (AVs) software validation. So far, developers needed to choose between low-fidelity 2D simulators to explore the scenario space efficiently, and high-fidelity 3D simulators to study relevant scenarios in more detail, thus reducing testing costs while mitigating the sim-to-real gap. This paper presents a novel framework that leverages multi-agent co-simulation and procedural scenario generation to support scenario-based testing across low- and high-fidelity simulators for the development of motion planning algorithms. Our framework limits the effort required to transition scenarios between simulators and automates experiment execution, trajectory analysis, and visualization. Experiments with a reference motion planner show that our framework uncovers discrepancies between the planner's intended and actual behavior, thus exposing weaknesses in planning assumptions under more realistic conditions. Our framework is available at: https://github.com/TUM-AVS/MultiDrive

DualAD: Dual-Layer Planning for Reasoning in Autonomous Driving

Sep 26, 2024

Abstract:We present a novel autonomous driving framework, DualAD, designed to imitate human reasoning during driving. DualAD comprises two layers: a rule-based motion planner at the bottom layer that handles routine driving tasks requiring minimal reasoning, and an upper layer featuring a rule-based text encoder that converts driving scenarios from absolute states into text description. This text is then processed by a large language model (LLM) to make driving decisions. The upper layer intervenes in the bottom layer's decisions when potential danger is detected, mimicking human reasoning in critical situations. Closed-loop experiments demonstrate that DualAD, using a zero-shot pre-trained model, significantly outperforms rule-based motion planners that lack reasoning abilities. Our experiments also highlight the effectiveness of the text encoder, which considerably enhances the model's scenario understanding. Additionally, the integrated DualAD model improves with stronger LLMs, indicating the framework's potential for further enhancement. We make code and benchmarks publicly available.

Investigating Driving Interactions: A Robust Multi-Agent Simulation Framework for Autonomous Vehicles

Feb 07, 2024

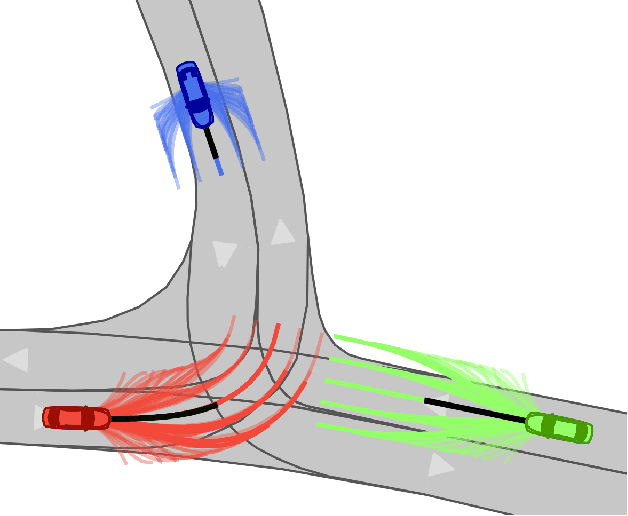

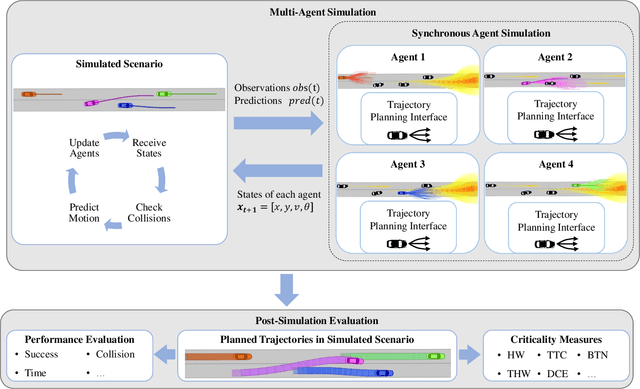

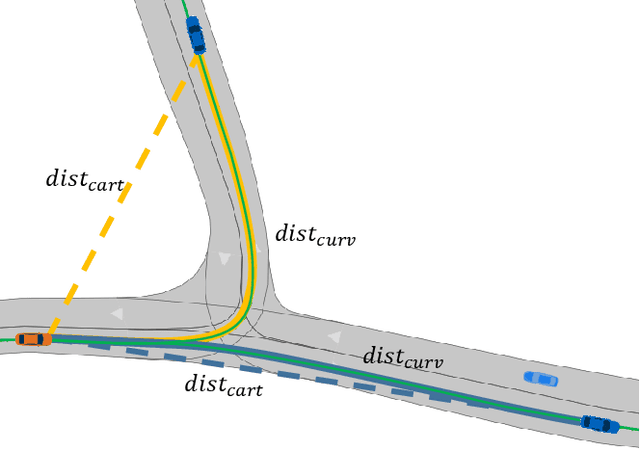

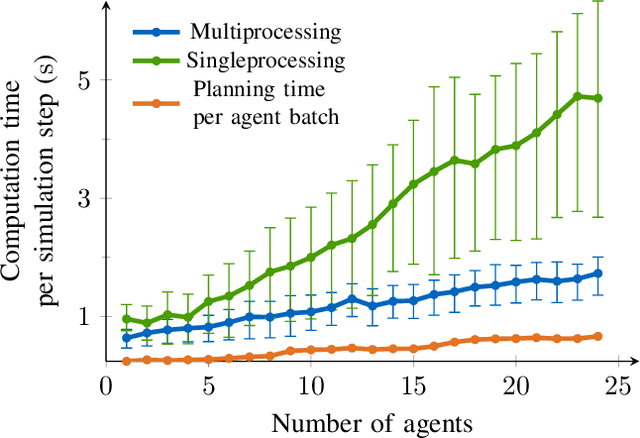

Abstract:Current validation methods often rely on recorded data and basic functional checks, which may not be sufficient to encompass the scenarios an autonomous vehicle might encounter. In addition, there is a growing need for complex scenarios with changing vehicle interactions for comprehensive validation. This work introduces a novel synchronous multi-agent simulation framework for autonomous vehicles in interactive scenarios. Our approach creates an interactive scenario and incorporates publicly available edge-case scenarios wherein simulated vehicles are replaced by agents navigating to predefined destinations. We provide a platform that enables the integration of different autonomous driving planning methodologies and includes a set of evaluation metrics to assess autonomous driving behavior. Our study explores different planning setups and adjusts simulation complexity to test the framework's adaptability and performance. Results highlight the critical role of simulating vehicle interactions to enhance autonomous driving systems. Our setup offers unique insights for developing advanced algorithms for complex driving tasks to accelerate future investigations and developments in this field. The multi-agent simulation framework is available as open-source software: https://github.com/TUM-AVS/Frenetix-Motion-Planner
