P. Michael Furlong

Wandering around: A bioinspired approach to visual attention through object motion sensitivity

Feb 10, 2025

Abstract: Active vision enables dynamic visual perception, offering an alternative to static feedforward architectures in computer vision, which rely on large datasets and high computational resources. Biological selective attention mechanisms allow agents to focus on salient Regions of Interest (ROIs), reducing computational demand while maintaining real-time responsiveness. Event-based cameras, inspired by the mammalian retina, enhance this capability by capturing asynchronous scene changes, enabling efficient low-latency processing. To distinguish moving objects while the event-based camera is itself in motion, the agent requires an object motion segmentation mechanism to accurately detect targets and center them in the visual field (fovea). Integrating event-based sensors with neuromorphic algorithms represents a paradigm shift, using Spiking Neural Networks to parallelize computation and adapt to dynamic environments. This work presents a bioinspired Spiking Convolutional Neural Network system for selective attention through object motion sensitivity. The system generates events via fixational eye movements using a Dynamic Vision Sensor integrated into the Speck neuromorphic hardware, mounted on a Pan-Tilt unit, to identify the ROI and saccade toward it. The system, characterized using ideal gratings and benchmarked against the Event Camera Motion Segmentation Dataset, reaches a mean IoU of 82.2% and a mean SSIM of 96% in multi-object motion segmentation. The detection of salient objects reaches 88.8% accuracy in office scenarios and 89.8% in low-light conditions on the Event-Assisted Low-Light Video Object Segmentation Dataset. A real-time demonstrator shows the system's 0.12 s response to dynamic scenes. Its learning-free design ensures robustness across perceptual scenes, making it a reliable foundation for real-time robotic applications and a basis for more complex architectures.

Advancing the Scientific Frontier with Increasingly Autonomous Systems

Sep 15, 2020

Abstract: A close partnership between people and partially autonomous machines has enabled decades of space exploration. But to further expand our horizons, our systems must become more capable. Increasing the nature and degree of autonomy, allowing our systems to make and act on their own decisions as directed by mission teams, enables new science capabilities and enhances science return. The 2011 Planetary Science Decadal Survey (PSDS) and ongoing pre-Decadal mission studies have identified increased autonomy as a core technology required for future missions. However, even as scientific discovery has necessitated the development of autonomous systems and past flight demonstrations have been successful, institutional barriers have limited its maturation and infusion on existing planetary missions. Consequently, the authors and endorsers of this paper recommend that new programmatic pathways be developed to infuse autonomy, that infrastructure to support autonomous systems be invested in, that new practices be adopted, and that the cost-saving value of autonomy for operations be studied.

Multi-Modal Active Perception for Information Gathering in Science Missions

Dec 28, 2017

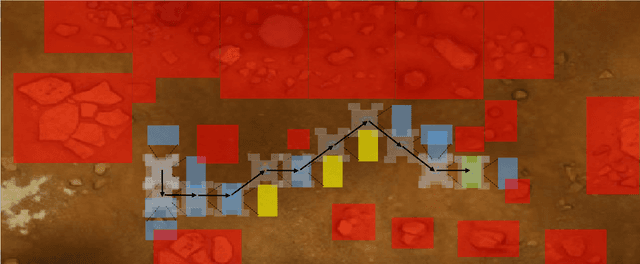

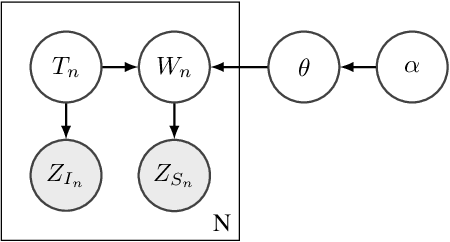

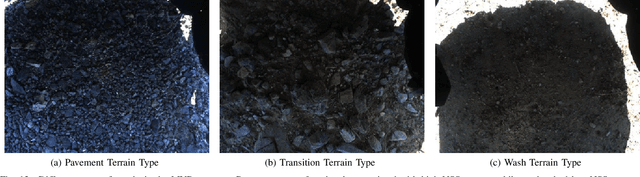

Abstract: Robotic science missions in remote environments, such as deep ocean and outer space, can involve studying phenomena that cannot be observed directly using on-board sensors but must be deduced by combining measurements of correlated variables with domain knowledge. Traditionally, in such missions, robots passively gather data along prescribed paths, while inference, path planning, and other high-level decision making are largely performed by a supervisory science team. However, communication constraints hinder these processes, and hence the rate of scientific progress. This paper presents an active perception approach that aims to reduce robots' reliance on human supervision and improve science productivity by encoding scientists' domain knowledge and decision-making process on-board. We use Bayesian networks to compactly model critical aspects of scientific knowledge while remaining robust to observation and modeling uncertainty. We then formulate path planning and sensor scheduling as an information gain maximization problem, and propose a sampling-based solution based on Monte Carlo tree search to plan informative sensing actions that exploit the knowledge encoded in the network. The computational complexity of our framework does not grow with the number of observations taken and allows long-horizon planning in an anytime manner, making it highly applicable to field robotics. Simulation results show statistically significant performance improvements over baseline methods, and we validate the practicality of our approach through both hardware experiments and simulated experiments with field data gathered during the NASA Mojave Volatiles Prospector science expedition.
