Ondřej Glembek

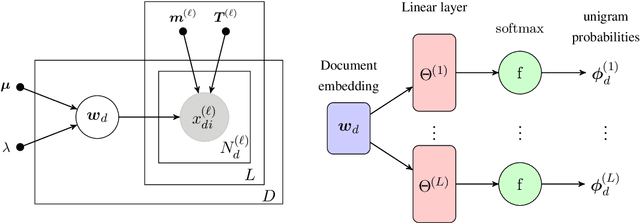

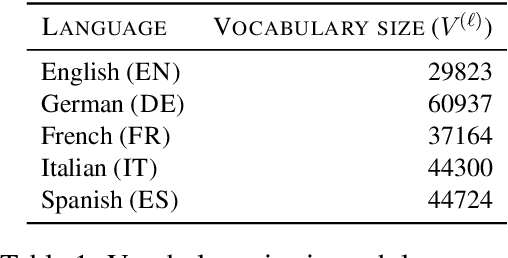

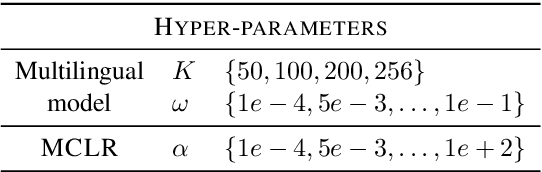

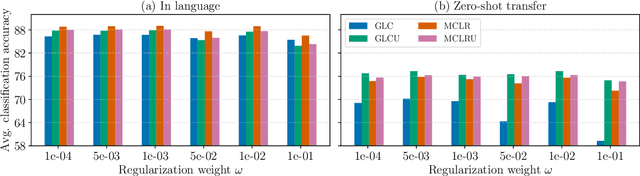

Bayesian multilingual topic model for zero-shot cross-lingual topic identification

Jul 02, 2020

Abstract: This paper presents a Bayesian multilingual topic model for learning language-independent document embeddings. Our model learns to represent documents as Gaussian distributions, thereby encoding the uncertainty in their covariances. We propagate the learned uncertainties through linear classifiers for zero-shot cross-lingual topic identification. Our experiments on the 5-language Europarl and Reuters (MLDoc) corpora show that the proposed model outperforms systems based on multilingual word embeddings and BiLSTM sentence encoders by significant margins in the majority of the transfer directions. Moreover, our system, trained in under a day on a single GPU with much less data, performs competitively with the state-of-the-art universal BiLSTM sentence encoder trained on 93 languages. Our experimental analysis shows that increasing the amount of parallel data improves the overall performance of the embeddings. Nonetheless, exploiting the uncertainties is always beneficial.

BUT VOiCES 2019 System Description

Jul 13, 2019

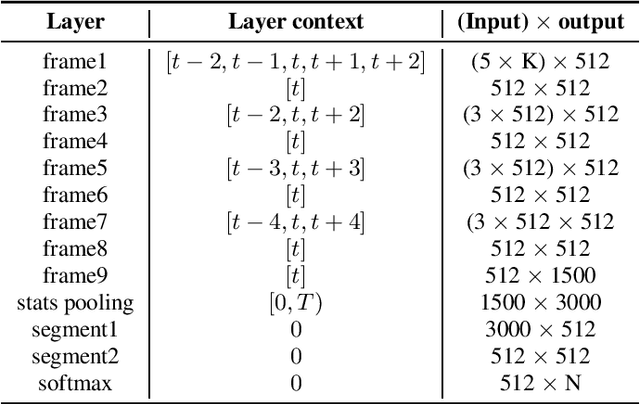

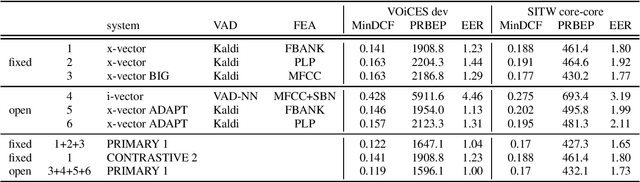

Abstract: This is a description of our effort in the VOiCES 2019 Speaker Recognition challenge. All systems in the fixed condition are based on the x-vector paradigm with different features and DNN topologies. The single best system reaches 1.2% EER, and a fusion of 3 systems yields 1.0% EER, which is a 15% relative improvement. The open condition allowed us to use external data, which we used for PLDA adaptation, achieving a relative improvement of slightly less than 10%. In the submission to the open condition, we used 3 x-vector systems and one i-vector based system.
