Niklas Kuehl

On the Interdependence of Reliance Behavior and Accuracy in AI-Assisted Decision-Making

Apr 18, 2023

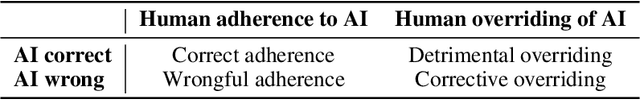

Abstract: In AI-assisted decision-making, a central promise of putting a human in the loop is that they should be able to complement the AI system by adhering to its correct and overriding its mistaken recommendations. In practice, however, we often see that humans tend to over- or under-rely on AI recommendations, meaning that they either adhere to wrong or override correct recommendations. Such reliance behavior is detrimental to decision-making accuracy. In this work, we articulate and analyze the interdependence between reliance behavior and accuracy in AI-assisted decision-making, which has been largely neglected in prior work. We also propose a visual framework to make this interdependence more tangible. This framework helps us interpret and compare empirical findings, as well as obtain a nuanced understanding of the effects of interventions (e.g., explanations) in AI-assisted decision-making. Finally, we infer several interesting properties from the framework: (i) when humans under-rely on AI recommendations, there may be no possibility for them to complement the AI in terms of decision-making accuracy; (ii) when humans cannot discern correct and wrong AI recommendations, no such improvement can be expected either; (iii) interventions may lead to an increase in decision-making accuracy that is solely driven by an increase in humans' adherence to AI recommendations, without any ability to discern correct and wrong. Our work emphasizes the importance of measuring and reporting both effects on accuracy and reliance behavior when empirically assessing interventions.
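The interdependence described in this abstract can be illustrated with a minimal sketch. It is not the paper's own formulation: it assumes a binary decision task, so that overriding a wrong recommendation always yields the correct decision and overriding a correct one always yields a wrong decision. The function name `assisted_accuracy` and its parameters are hypothetical.

```python
def assisted_accuracy(ai_accuracy: float, adhere_correct: float, adhere_wrong: float) -> float:
    """Accuracy of the final human decision in a binary task (illustrative assumption).

    ai_accuracy    -- share of AI recommendations that are correct
    adhere_correct -- share of correct recommendations the human adheres to
    adhere_wrong   -- share of wrong recommendations the human adheres to
    """
    for value in (ai_accuracy, adhere_correct, adhere_wrong):
        if not 0.0 <= value <= 1.0:
            raise ValueError("all rates must lie in [0, 1]")
    # Correct final decisions come from adhering to correct recommendations
    # and from overriding wrong ones (binary-task assumption).
    return ai_accuracy * adhere_correct + (1.0 - ai_accuracy) * (1.0 - adhere_wrong)


if __name__ == "__main__":
    ai_acc = 0.8
    # No ability to discern: the human adheres at the same rate to correct and
    # wrong recommendations, so accuracy rises with adherence alone but never
    # exceeds the AI's own accuracy (for ai_acc >= 0.5).
    for rate in (0.5, 0.9, 1.0):
        print(rate, assisted_accuracy(ai_acc, rate, rate))
    # Some ability to discern: adherence is higher for correct recommendations
    # than for wrong ones, which is what allows accuracy above ai_acc.
    print(assisted_accuracy(ai_acc, 0.95, 0.40))
```

Under these assumptions, a human who cannot tell correct from wrong recommendations gains accuracy only by adhering more often, which mirrors properties (ii) and (iii) listed in the abstract.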

On Explanations, Fairness, and Appropriate Reliance in Human-AI Decision-Making

Sep 23, 2022

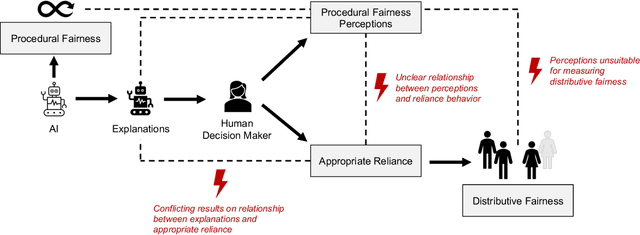

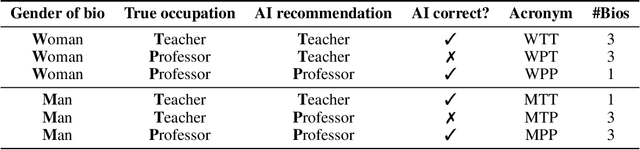

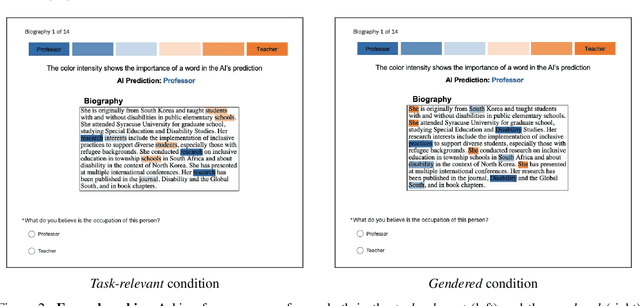

Abstract: Explanations have been framed as an essential feature for better and fairer human-AI decision-making. In the context of fairness, this has not been appropriately studied, as prior works have mostly evaluated explanations based on their effects on people's perceptions. We argue, however, that for explanations to promote fairer decisions, they must enable humans to discern correct and wrong AI recommendations. To validate our conceptual arguments, we conduct an empirical study to examine the relationship between explanations, fairness perceptions, and reliance behavior. Our findings show that explanations influence people's fairness perceptions, which, in turn, affect reliance. However, we observe that low fairness perceptions lead to more overrides of AI recommendations, regardless of whether they are correct or wrong. This (i) raises doubts about the usefulness of existing explanations for enhancing distributive fairness, and (ii) makes an important case for why perceptions must not be mistaken for a proxy for appropriate reliance.

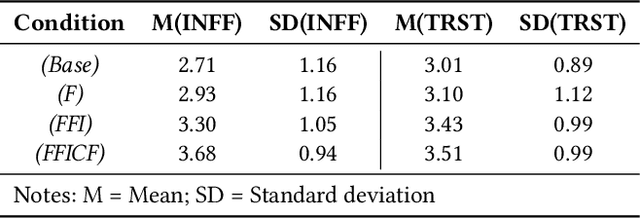

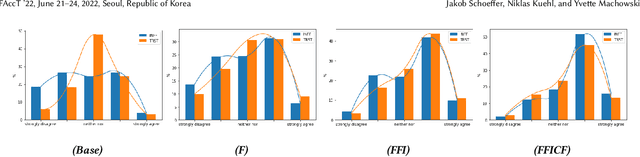

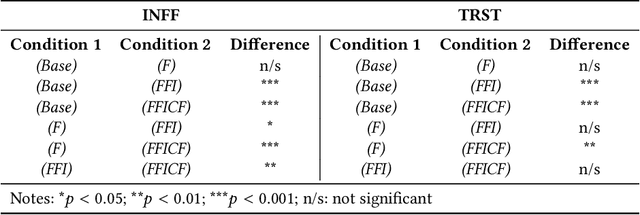

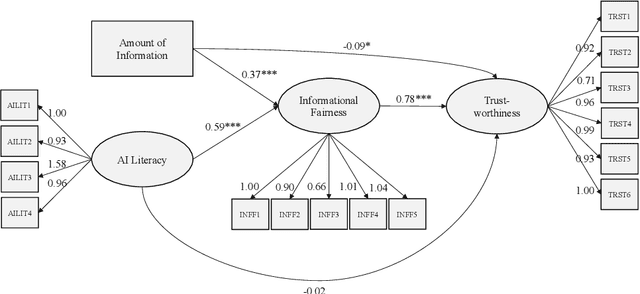

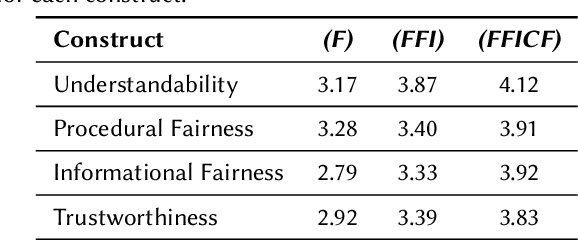

"There Is Not Enough Information": On the Effects of Explanations on Perceptions of Informational Fairness and Trustworthiness in Automated Decision-Making

May 11, 2022

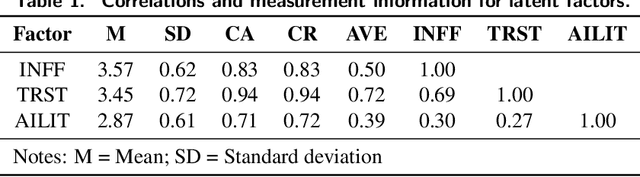

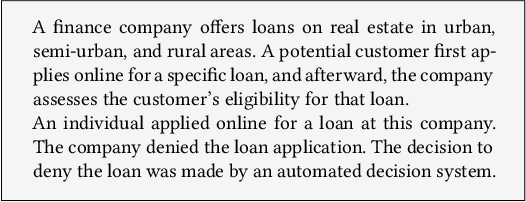

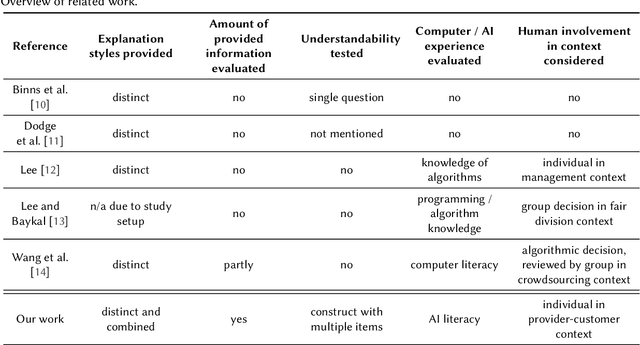

Abstract: Automated decision systems (ADS) are increasingly used for consequential decision-making. These systems often rely on sophisticated yet opaque machine learning models, which do not allow for understanding how a given decision was arrived at. In this work, we conduct a human subject study to assess people's perceptions of informational fairness (i.e., whether people think they are given adequate information on and explanation of the process and its outcomes) and trustworthiness of an underlying ADS when provided with varying types of information about the system. More specifically, we instantiate an ADS in the area of automated loan approval and generate different explanations that are commonly used in the literature. We randomize the amount of information that study participants get to see by providing certain groups of people with the same explanations as others plus additional explanations. From our quantitative analyses, we observe that different amounts of information as well as people's (self-assessed) AI literacy significantly influence the perceived informational fairness, which, in turn, positively relates to perceived trustworthiness of the ADS. A comprehensive analysis of qualitative feedback sheds light on people's desiderata for explanations, among which are (i) consistency (both with people's expectations and across different explanations), (ii) disclosure of monotonic relationships between features and outcome, and (iii) actionability of recommendations.

On the Relationship Between Explanations, Fairness Perceptions, and Decisions

Apr 29, 2022

Abstract: It is known that recommendations of AI-based systems can be incorrect or unfair. Hence, it is often proposed that a human be the final decision-maker. Prior work has argued that explanations are an essential pathway to help human decision-makers enhance decision quality and mitigate bias, i.e., facilitate human-AI complementarity. For these benefits to materialize, explanations should enable humans to appropriately rely on AI recommendations and override the algorithmic recommendation when necessary to increase distributive fairness of decisions. The literature, however, does not provide conclusive empirical evidence as to whether explanations enable such complementarity in practice. In this work, we (a) provide a conceptual framework to articulate the relationships between explanations, fairness perceptions, reliance, and distributive fairness, (b) apply it to understand (seemingly) contradictory research findings at the intersection of explanations and fairness, and (c) derive cohesive implications for the formulation of research questions and the design of experiments.

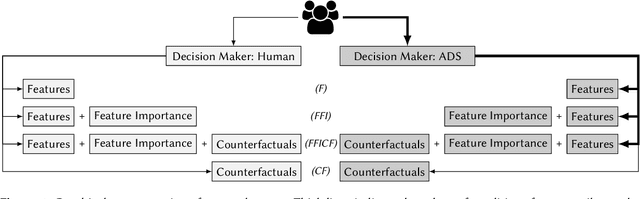

Perceptions of Fairness and Trustworthiness Based on Explanations in Human vs. Automated Decision-Making

Sep 13, 2021

Abstract: Automated decision systems (ADS) have become ubiquitous in many high-stakes domains. Those systems typically involve sophisticated yet opaque artificial intelligence (AI) techniques that seldom allow for full comprehension of their inner workings, particularly for affected individuals. As a result, ADS are prone to deficient oversight and calibration, which can lead to undesirable (e.g., unfair) outcomes. In this work, we conduct an online study with 200 participants to examine people's perceptions of fairness and trustworthiness towards ADS in comparison to a scenario where a human instead of an ADS makes a high-stakes decision, and we provide thorough, identical explanations regarding decisions in both cases. Surprisingly, we find that people perceive ADS as fairer than human decision-makers. Our analyses also suggest that people's AI literacy affects their perceptions, indicating that people with higher AI literacy favor ADS more strongly over human decision-makers, whereas low-AI-literacy people exhibit no significant differences in their perceptions.

Appropriate Fairness Perceptions? On the Effectiveness of Explanations in Enabling People to Assess the Fairness of Automated Decision Systems

Aug 14, 2021

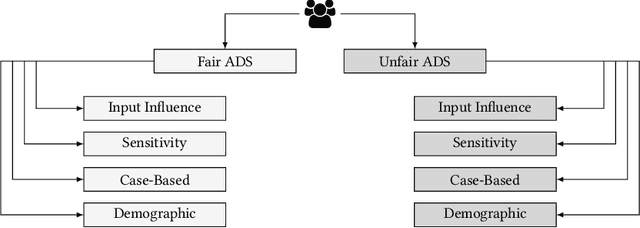

Abstract: It is often argued that one goal of explaining automated decision systems (ADS) is to facilitate positive perceptions (e.g., fairness or trustworthiness) of users towards such systems. This viewpoint, however, makes the implicit assumption that a given ADS is fair and trustworthy to begin with. If the ADS issues unfair outcomes, then one might expect that explanations regarding the system's workings will reveal its shortcomings and, hence, lead to a decrease in fairness perceptions. Consequently, we suggest that it is more meaningful to evaluate explanations against their effectiveness in enabling people to appropriately assess the quality (e.g., fairness) of an associated ADS. We argue that for an effective explanation, perceptions of fairness should increase if and only if the underlying ADS is fair. In this in-progress work, we introduce the desideratum of appropriate fairness perceptions, propose a novel study design for evaluating it, and outline next steps towards a comprehensive experiment.

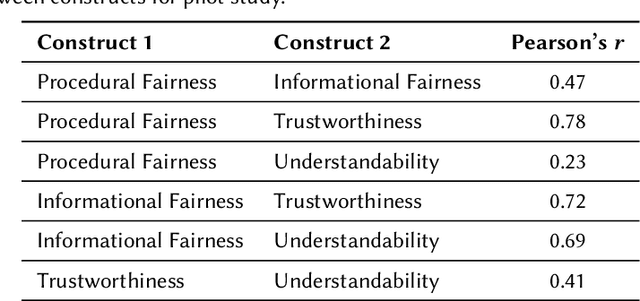

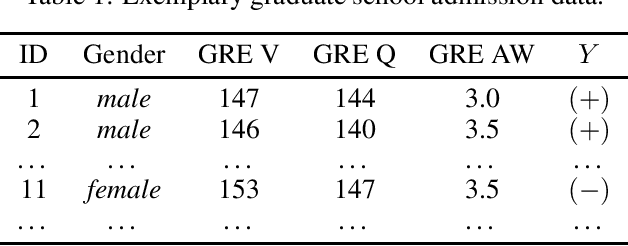

A Study on Fairness and Trust Perceptions in Automated Decision Making

Mar 08, 2021

Abstract: Automated decision systems are increasingly used for consequential decision making, for a variety of reasons. These systems often rely on sophisticated yet opaque models, which do not (or hardly) allow for understanding how or why a given decision was arrived at. This is not only problematic from a legal perspective, but non-transparent systems are also prone to yield undesirable (e.g., unfair) outcomes because their sanity is difficult to assess and calibrate in the first place. In this work, we conduct a study to evaluate different approaches to explaining such systems with respect to their effect on people's perceptions of fairness and trustworthiness towards the underlying mechanisms. A pilot study revealed surprising qualitative insights as well as preliminary significant effects, which will have to be verified, extended, and thoroughly discussed in the larger main study.

A Ranking Approach to Fair Classification

Feb 08, 2021

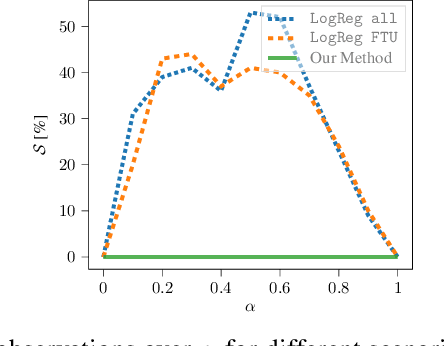

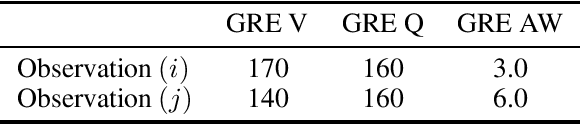

Abstract: Algorithmic decision systems are increasingly used in areas such as hiring, school admission, or loan approval. Typically, these systems rely on labeled data for training a classification model. However, in many scenarios, ground-truth labels are unavailable, and instead we only have access to imperfect labels as the result of (potentially biased) human-made decisions. Despite being imperfect, historical decisions often contain some useful information on the unobserved true labels. In this paper, we focus on scenarios where only imperfect labels are available and propose a new fair ranking-based decision system, as an alternative to traditional classification algorithms. Our approach is both intuitive and easy to implement, and thus particularly suitable for adoption in real-world settings. In more detail, we introduce a distance-based decision criterion, which incorporates useful information from historical decisions and accounts for unwanted correlation between protected and legitimate features. Through extensive experiments on synthetic and real-world data, we show that our method is fair, as it a) assigns the desirable outcome to the most qualified individuals, and b) removes the effect of stereotypes in decision-making, thereby outperforming traditional classification algorithms. Additionally, we are able to show theoretically that our method is consistent with a prominent concept of individual fairness which states that "similar individuals should be treated similarly."
