Niklas Gunnarsson

Online learning in motion modeling for intra-interventional image sequences

Oct 15, 2024

Abstract:Image monitoring and guidance during medical examinations can aid both diagnosis and treatment. However, the sampling frequency is often too low, which creates a need to estimate the missing images. We present a probabilistic motion model for sequential medical images, with the ability to both estimate motion between acquired images and forecast the motion ahead of time. The core is a low-dimensional temporal process based on a linear Gaussian state-space model with analytically tractable solutions for forecasting, simulation, and imputation of missing samples. The results, from two experiments on publicly available cardiac datasets, show reliable motion estimates and an improved forecasting performance using patient-specific adaptation by online learning.
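The tractability that the abstract attributes to a linear Gaussian state-space model can be illustrated with a scalar Kalman filter. The sketch below is not the paper's implementation: the scalar model, the function names `kalman_filter` and `forecast`, and the parameters `a`, `c`, `q`, `r` are assumptions chosen for illustration. A missing sample (marked `None`) is imputed by skipping the measurement update, and forecasting repeats the prediction step.

```python
from typing import List, Optional, Sequence, Tuple


def kalman_filter(
    ys: Sequence[Optional[float]],
    a: float, c: float, q: float, r: float,
    m0: float, p0: float,
) -> Tuple[List[float], List[float]]:
    """Scalar Kalman filter for x_t = a*x_{t-1} + w_t, y_t = c*x_t + v_t.

    w_t has variance q, v_t has variance r (illustrative parameters).
    A value of None in ys marks a missing observation.
    """
    m, p = m0, p0
    means: List[float] = []
    variances: List[float] = []
    for y in ys:
        # Prediction step: propagate mean and variance through the dynamics.
        m = a * m
        p = a * p * a + q
        if y is not None:
            # Measurement update, only when a sample was acquired.
            s = c * p * c + r          # innovation variance
            k = p * c / s              # Kalman gain
            m = m + k * (y - c * m)
            p = (1.0 - k * c) * p
        means.append(m)
        variances.append(p)
    return means, variances


def forecast(
    m: float, p: float, a: float, q: float, steps: int
) -> List[Tuple[float, float]]:
    """Forecast ahead by repeating the prediction step without updates."""
    out: List[Tuple[float, float]] = []
    for _ in range(steps):
        m = a * m
        p = a * p * a + q
        out.append((m, p))
    return out


# Second sample is missing: its estimate is the one-step prediction.
means, variances = kalman_filter(
    [1.0, None, 0.8], a=0.9, c=1.0, q=0.1, r=0.5, m0=0.0, p0=1.0
)
ahead = forecast(means[-1], variances[-1], a=0.9, q=0.1, steps=3)
```

In this toy setting the variance grows at the missing step and shrinks again once a new sample arrives, which is the behaviour that makes imputation and forecasting come with an uncertainty estimate.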

Learn2Reg: comprehensive multi-task medical image registration challenge, dataset and evaluation in the era of deep learning

Dec 23, 2021

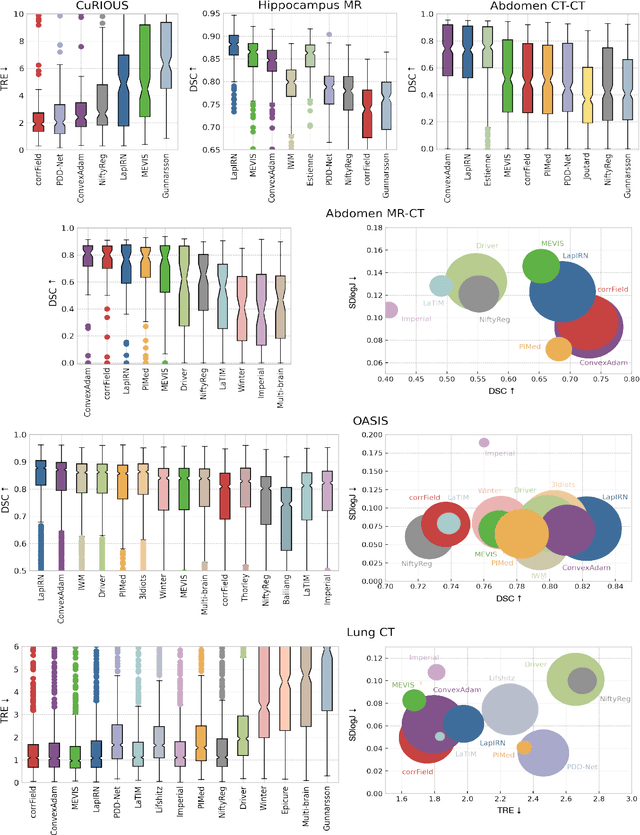

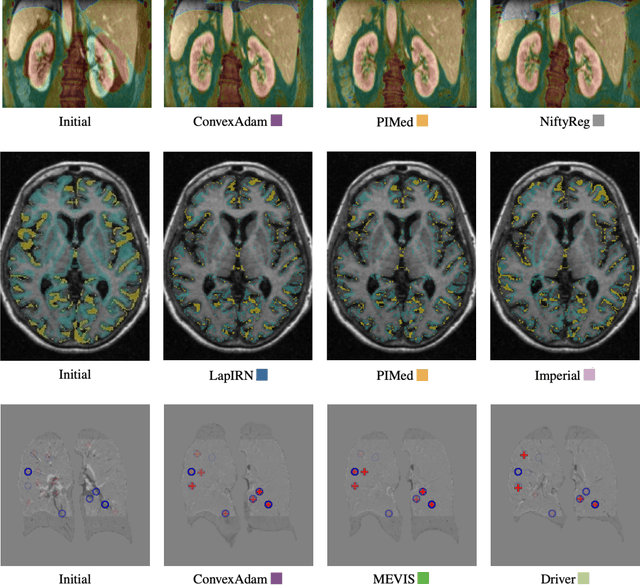

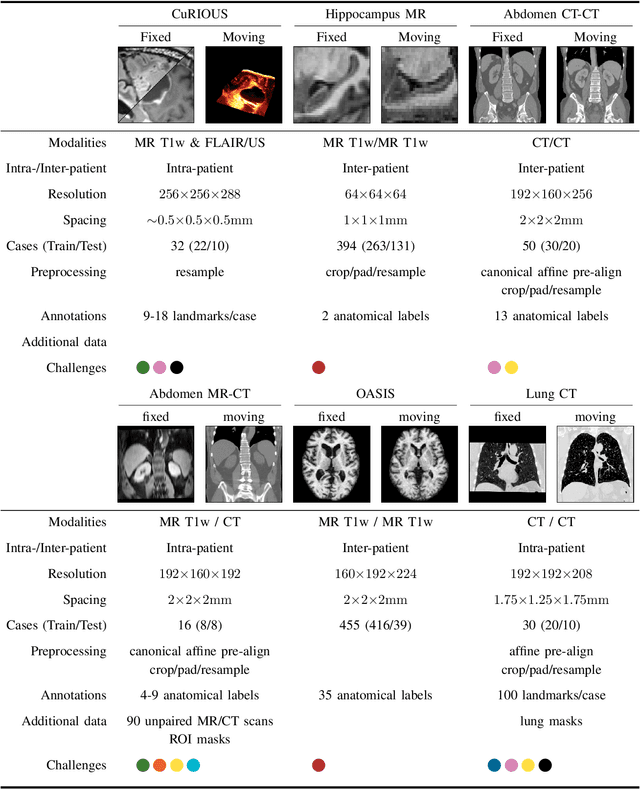

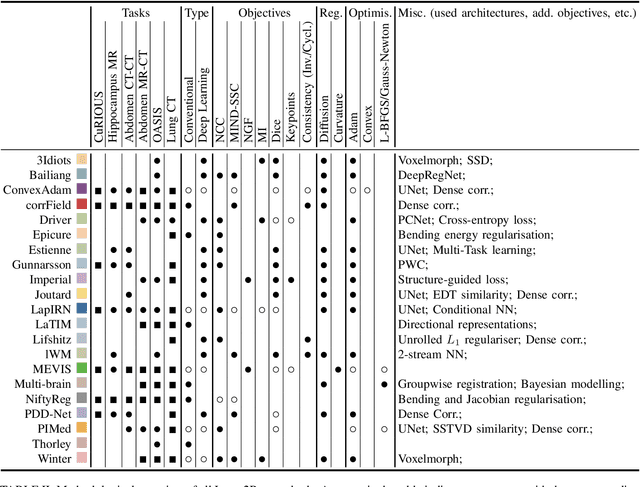

Abstract:Image registration is a fundamental medical image analysis task, and a wide variety of approaches have been proposed. However, only a few studies have comprehensively compared medical image registration approaches on a wide range of clinically relevant tasks, in part because of the lack of availability of such diverse data. This limits the development of registration methods, the adoption of research advances into practice, and a fair benchmark across competing approaches. The Learn2Reg challenge addresses these limitations by providing a multi-task medical image registration benchmark for comprehensive characterisation of deformable registration algorithms. A continuous evaluation will be possible at https://learn2reg.grand-challenge.org. Learn2Reg covers a wide range of anatomies (brain, abdomen, and thorax), modalities (ultrasound, CT, MR), availability of annotations, as well as intra- and inter-patient registration evaluation. We established an easily accessible framework for training and validation of 3D registration methods, which enabled the compilation of results of over 65 individual method submissions from more than 20 unique teams. We used a complementary set of metrics, including robustness, accuracy, plausibility, and runtime, enabling unique insight into the current state-of-the-art of medical image registration. This paper describes datasets, tasks, evaluation methods and results of the challenge, and the results of further analysis of transferability to new datasets, the importance of label supervision, and resulting bias.

Latent linear dynamics in spatiotemporal medical data

Mar 01, 2021

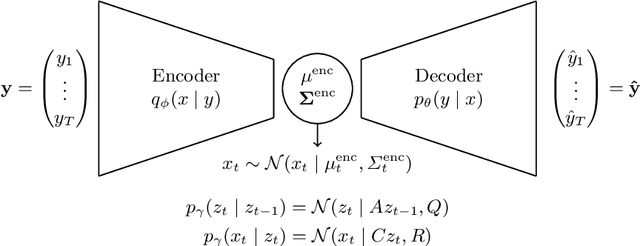

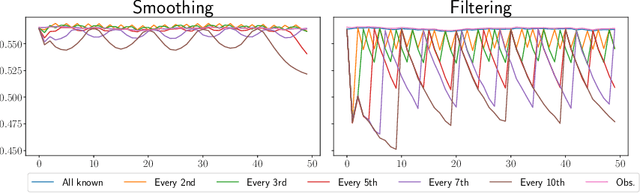

Abstract:Spatiotemporal imaging is common in medical imaging, with applications in e.g. cardiac diagnostics, surgical guidance and radiotherapy monitoring. In this paper, we present an unsupervised model that identifies the underlying dynamics of the system, based only on the sequential images. The model maps the input to a low-dimensional latent space wherein a linear relationship holds between a hidden state process and the observed latent process. Knowledge of the system dynamics enables denoising, imputation of missing values and extrapolation of future image frames. We use a Variational Auto-Encoder (VAE) for the dimensionality reduction and a Linear Gaussian State Space Model (LGSSM) for the latent dynamics. The model, known as a Kalman Variational Auto-Encoder, is end-to-end trainable and the weights, both in the VAE and LGSSM, are simultaneously updated by maximizing the evidence lower bound of the marginal log likelihood. Our experiment, on cardiac ultrasound time series, shows that the dynamical model provides better reconstructions than a similar model without dynamics, and that it can impute and extrapolate missing samples.

Registration by tracking for sequential 2D MRI

Mar 24, 2020

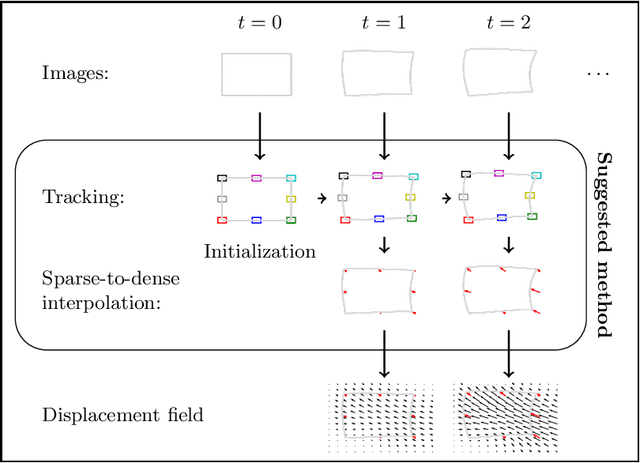

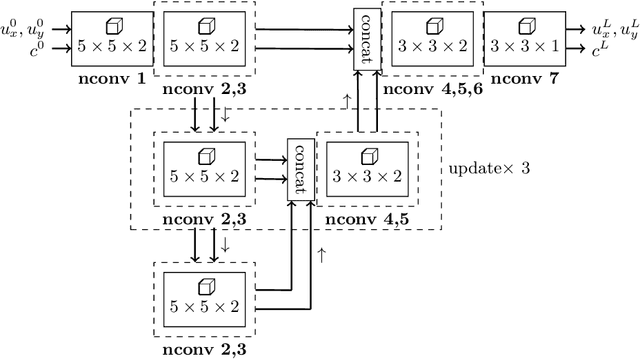

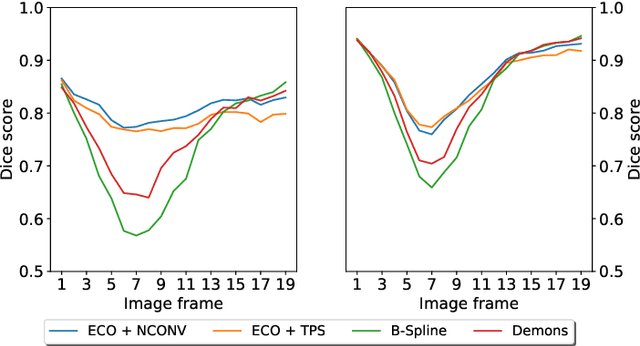

Abstract:Our anatomy is in constant motion. With modern MR imaging it is possible to record this motion in real-time during an ongoing radiation therapy session. In this paper we present an image registration method that exploits the sequential nature of 2D MR images to estimate the corresponding displacement field. The method employs several discriminative correlation filters that independently track specific points. Together with a sparse-to-dense interpolation scheme we can then estimate the displacement field. The discriminative correlation filters are trained online, and our method is modality agnostic. For the interpolation scheme we use a neural network with normalized convolutions that is trained using synthetic diffeomorphic displacement fields. The method is evaluated on a segmented cardiac dataset and when compared to two conventional methods we observe an improved performance. This improvement is especially pronounced when it comes to the detection of larger motions of small objects.
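The sparse-to-dense step builds on normalized convolution, which divides a filtered, confidence-weighted signal by the filtered confidence so that only known samples contribute. The sketch below is a 1D illustration with a fixed hand-picked kernel, not the trained network from the paper. The function name `normalized_convolution`, the kernel and the toy data are assumptions.

```python
from typing import List, Sequence


def normalized_convolution(
    values: Sequence[float],
    confidence: Sequence[float],
    kernel: Sequence[float],
    eps: float = 1e-8,
) -> List[float]:
    """Interpolate sparse samples in 1D by normalized convolution.

    values[i] is only trusted where confidence[i] > 0. The output at i is
    sum(w * conf * value) / sum(w * conf) over the kernel window.
    """
    half = len(kernel) // 2
    n = len(values)
    out: List[float] = []
    for i in range(n):
        num = 0.0
        den = 0.0
        for j, w in enumerate(kernel):
            idx = i + j - half
            if 0 <= idx < n:  # ignore taps that fall outside the signal
                num += w * confidence[idx] * values[idx]
                den += w * confidence[idx]
        # With no confident sample in the window, fall back to zero.
        out.append(num / den if den > eps else 0.0)
    return out


# Two tracked points with known displacement, three unknown points between.
sparse = [2.0, 0.0, 0.0, 0.0, 4.0]
conf = [1.0, 0.0, 0.0, 0.0, 1.0]
dense = normalized_convolution(sparse, conf, kernel=[1.0, 2.0, 3.0, 2.0, 1.0])
# dense == [2.0, 2.0, 3.0, 4.0, 4.0]
```

In the method described above the tracked points would play the role of the confident samples, and a learned network of such layers would replace the single fixed kernel used here.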
