Nihal Dhamani

Planning, scheduling, and execution on the Moon: the CADRE technology demonstration mission

Feb 20, 2025Abstract:NASA's Cooperative Autonomous Distributed Robotic Exploration (CADRE) mission, slated for flight to the Moon's Reiner Gamma region in 2025/2026, is designed to demonstrate multi-agent autonomous exploration of the Lunar surface and sub-surface. A team of three robots and a base station will autonomously explore a region near the lander, collecting the data required for 3D reconstruction of the surface with no human input; and then autonomously perform distributed sensing with multi-static ground penetrating radars (GPR), driving in formation while performing coordinated radar soundings to create a map of the subsurface. At the core of CADRE's software architecture is a novel autonomous, distributed planning, scheduling, and execution (PS&E) system. The system coordinates the robots' activities, planning and executing tasks that require multiple robots' participation while ensuring that each individual robot's thermal and power resources stay within prescribed bounds, and respecting ground-prescribed sleep-wake cycles. The system uses a centralized-planning, distributed-execution paradigm, and a leader election mechanism ensures robustness to failures of individual agents. In this paper, we describe the architecture of CADRE's PS&E system; discuss its design rationale; and report on verification and validation (V&V) testing of the system on CADRE's hardware in preparation for deployment on the Moon.

Operations for Autonomous Spacecraft

Nov 22, 2021

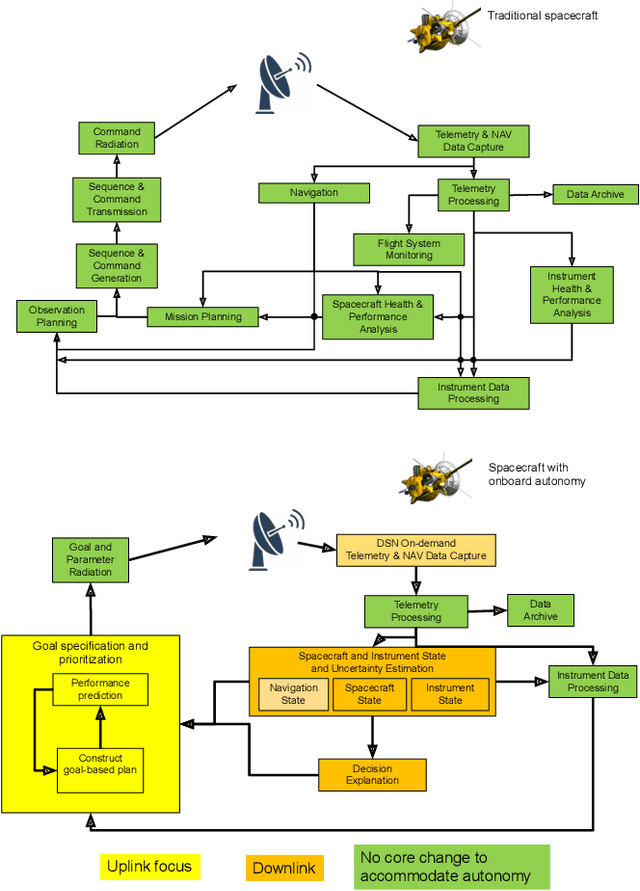

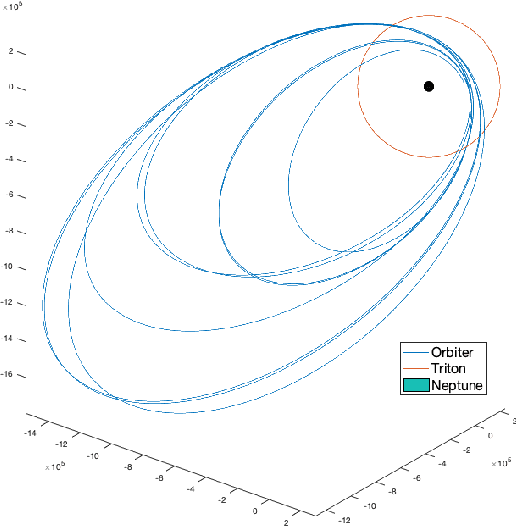

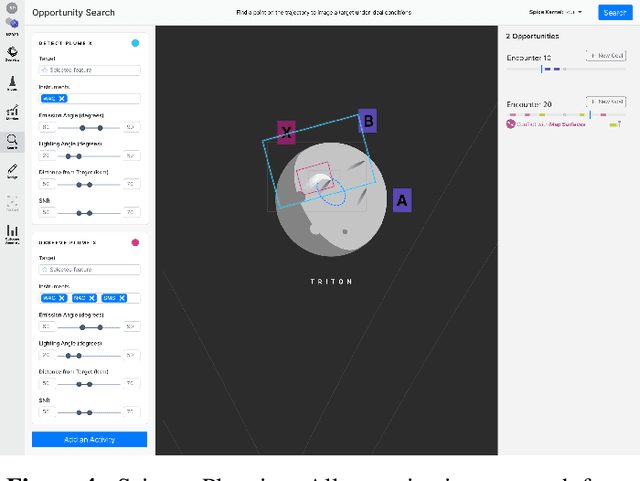

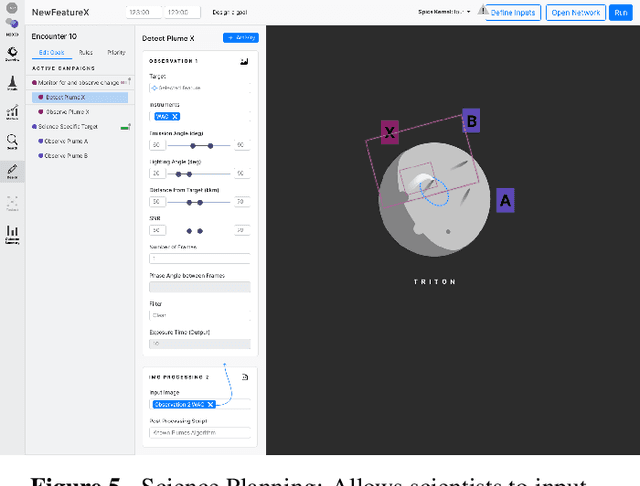

Abstract:Onboard autonomy technologies such as planning and scheduling, identification of scientific targets, and content-based data summarization, will lead to exciting new space science missions. However, the challenge of operating missions with such onboard autonomous capabilities has not been studied to a level of detail sufficient for consideration in mission concepts. These autonomy capabilities will require changes to current operations processes, practices, and tools. We have developed a case study to assess the changes needed to enable operators and scientists to operate an autonomous spacecraft by facilitating a common model between the ground personnel and the onboard algorithms. We assess the new operations tools and workflows necessary to enable operators and scientists to convey their desired intent to the spacecraft, and to be able to reconstruct and explain the decisions made onboard and the state of the spacecraft. Mock-ups of these tools were used in a user study to understand the effectiveness of the processes and tools in enabling a shared framework of understanding, and in the ability of the operators and scientists to effectively achieve mission science objectives.

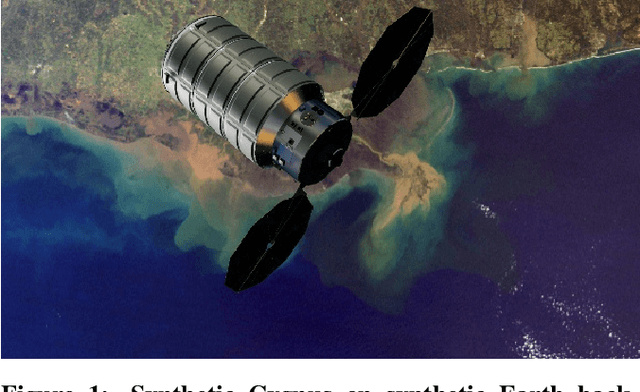

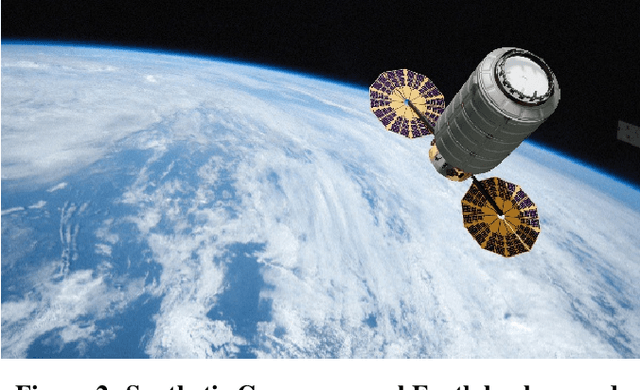

A Pipeline for Vision-Based On-Orbit Proximity Operations Using Deep Learning and Synthetic Imagery

Jan 14, 2021

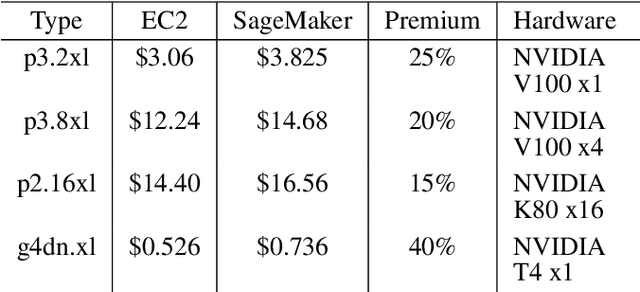

Abstract:Deep learning has become the gold standard for image processing over the past decade. Simultaneously, we have seen growing interest in orbital activities such as satellite servicing and debris removal that depend on proximity operations between spacecraft. However, two key challenges currently pose a major barrier to the use of deep learning for vision-based on-orbit proximity operations. Firstly, efficient implementation of these techniques relies on an effective system for model development that streamlines data curation, training, and evaluation. Secondly, a scarcity of labeled training data (images of a target spacecraft) hinders creation of robust deep learning models. This paper presents an open-source deep learning pipeline, developed specifically for on-orbit visual navigation applications, that addresses these challenges. The core of our work consists of two custom software tools built on top of a cloud architecture that interconnects all stages of the model development process. The first tool leverages Blender, an open-source 3D graphics toolset, to generate labeled synthetic training data with configurable model poses (positions and orientations), lighting conditions, backgrounds, and commonly observed in-space image aberrations. The second tool is a plugin-based framework for effective dataset curation and model training; it provides common functionality like metadata generation and remote storage access to all projects while giving complete independence to project-specific code. Time-consuming, graphics-intensive processes such as synthetic image generation and model training run on cloud-based computational resources which scale to any scope and budget and allow development of even the largest datasets and models from any machine. The presented system has been used in the Texas Spacecraft Laboratory with marked benefits in development speed and quality.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge