Nan Mu

BiM-GeoAttn-Net: Linear-Time Depth Modeling with Geometry-Aware Attention for 3D Aortic Dissection CTA Segmentation

Feb 27, 2026

Abstract:Accurate segmentation of aortic dissection (AD) lumens in CT angiography (CTA) is essential for quantitative morphological assessment and clinical decision-making. However, reliable 3D delineation remains challenging due to limited long-range context modeling, which compromises inter-slice coherence, and insufficient structural discrimination under low-contrast conditions. To address these limitations, we propose BiM-GeoAttn-Net, a lightweight framework that integrates linear-time depth-wise state-space modeling with geometry-aware vessel refinement. Our approach features a Bidirectional Depth Mamba (BiM) module that efficiently captures cross-slice dependencies and a Geometry-Aware Vessel Attention (GeoAttn) module that employs orientation-sensitive anisotropic filtering to refine tubular structures and sharpen ambiguous boundaries. Extensive experiments on a multi-source AD CTA dataset demonstrate that BiM-GeoAttn-Net achieves a Dice score of 93.35% and an HD95 of 12.36 mm, outperforming representative CNN-, Transformer-, and SSM-based baselines in overlap metrics while maintaining competitive boundary accuracy. These results suggest that coupling linear-time depth modeling with geometry-aware refinement provides an effective, computationally efficient solution for robust 3D AD segmentation.

SpectralMamba-UNet: Frequency-Disentangled State Space Modeling for Texture-Structure Consistent Medical Image Segmentation

Feb 26, 2026

Abstract:Accurate medical image segmentation requires effective modeling of both global anatomical structures and fine-grained boundary details. Recent state space models (e.g., Vision Mamba) offer efficient long-range dependency modeling. However, their one-dimensional serialization weakens local spatial continuity and high-frequency representation. To this end, we propose SpectralMamba-UNet, a novel frequency-disentangled framework that decouples the learning of structural and textural information in the spectral domain. Our Spectral Decomposition and Modeling (SDM) module applies a discrete cosine transform to decompose features into low- and high-frequency components: the low-frequency components contribute to global contextual modeling via a frequency-domain Mamba, and the high-frequency components preserve boundary-sensitive details. To balance spectral contributions, we introduce a Spectral Channel Reweighting (SCR) mechanism that forms channel-wise frequency-aware attention, and a Spectral-Guided Fusion (SGF) module that achieves adaptive multi-scale fusion in the decoder. Experiments on five public benchmarks demonstrate consistent improvements across diverse modalities and segmentation targets, validating the effectiveness and generalizability of our approach.
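
As a rough illustration of the frequency split described in this abstract, the following sketch decomposes a 1D signal into low- and high-frequency parts with an orthonormal DCT-II. It is not the SDM module: the function names, the 1D setting, and the hard `cutoff` index are all assumptions made for this example, and the abstract does not specify how the paper chooses the band boundary.

```python
import math

def dct2(x):
    """Orthonormal DCT-II of a 1D sequence."""
    n = len(x)
    out = []
    for k in range(n):
        s = sum(x[i] * math.cos(math.pi * (i + 0.5) * k / n) for i in range(n))
        scale = math.sqrt(1.0 / n) if k == 0 else math.sqrt(2.0 / n)
        out.append(scale * s)
    return out

def idct2(c):
    """Inverse of the orthonormal DCT-II above (a scaled DCT-III)."""
    n = len(c)
    out = []
    for i in range(n):
        s = c[0] * math.sqrt(1.0 / n)
        s += sum(
            c[k] * math.sqrt(2.0 / n) * math.cos(math.pi * (i + 0.5) * k / n)
            for k in range(1, n)
        )
        out.append(s)
    return out

def split_bands(x, cutoff):
    """Split x into low- and high-frequency signals.

    Coefficients with index < cutoff form the low band; the rest form the
    high band. `cutoff` is a hypothetical parameter for this illustration.
    """
    coeffs = dct2(x)
    low = [c if k < cutoff else 0.0 for k, c in enumerate(coeffs)]
    high = [c if k >= cutoff else 0.0 for k, c in enumerate(coeffs)]
    return idct2(low), idct2(high)

signal = [1.0, 2.0, 4.0, 3.0, 0.0, -1.0, 2.0, 5.0]
low_part, high_part = split_bands(signal, cutoff=1)
```

Because the transform is linear, the two bands sum back to the original signal, and with `cutoff=1` the low band is simply the signal mean repeated at every position.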

VFGS-Net: Frequency-Guided State-Space Learning for Topology-Preserving Retinal Vessel Segmentation

Feb 11, 2026

Abstract:Accurate retinal vessel segmentation is a critical prerequisite for quantitative analysis of retinal images and computer-aided diagnosis of vascular diseases such as diabetic retinopathy. However, the elongated morphology, wide scale variation, and low contrast of retinal vessels pose significant challenges for existing methods, making it difficult to simultaneously preserve fine capillaries and maintain global topological continuity. To address these challenges, we propose the Vessel-aware Frequency-domain and Global Spatial modeling Network (VFGS-Net), an end-to-end segmentation framework that seamlessly integrates frequency-aware feature enhancement, dual-path convolutional representation learning, and bidirectional asymmetric spatial state-space modeling within a unified architecture. Specifically, VFGS-Net employs a dual-path feature convolution module to jointly capture fine-grained local textures and multi-scale contextual semantics. A novel vessel-aware frequency-domain channel attention mechanism is introduced to adaptively reweight spectral components, thereby enhancing vessel-relevant responses in high-level features. Furthermore, at the network bottleneck, we propose a bidirectional asymmetric Mamba2-based spatial modeling block to efficiently capture long-range spatial dependencies and strengthen the global continuity of vascular structures. Extensive experiments on four publicly available retinal vessel datasets demonstrate that VFGS-Net achieves competitive or superior performance compared to state-of-the-art methods. Notably, our model consistently improves segmentation accuracy for fine vessels, complex branching patterns, and low-contrast regions, highlighting its robustness and clinical potential.

SFD-Mamba2Net: Structure-Guided Frequency-Enhanced Dual-Stream Mamba2 Network for Coronary Artery Segmentation

Sep 10, 2025

Abstract:Background: Coronary Artery Disease (CAD) is one of the leading causes of death worldwide. Invasive Coronary Angiography (ICA), regarded as the gold standard for CAD diagnosis, necessitates precise vessel segmentation and stenosis detection. However, ICA images are typically characterized by low contrast, high noise levels, and complex, fine-grained vascular structures, which pose significant challenges to the clinical adoption of existing segmentation and detection methods. Objective: This study aims to improve the accuracy of coronary artery segmentation and stenosis detection in ICA images by integrating multi-scale structural priors, state-space-based long-range dependency modeling, and frequency-domain detail enhancement strategies. Methods: We propose SFD-Mamba2Net, an end-to-end framework tailored for ICA-based vascular segmentation and stenosis detection. In the encoder, a Curvature-Aware Structural Enhancement (CASE) module is embedded to leverage multi-scale responses for highlighting slender tubular vascular structures, suppressing background interference, and directing attention toward vascular regions. In the decoder, we introduce a Progressive High-Frequency Perception (PHFP) module that employs multi-level wavelet decomposition to progressively refine high-frequency details while integrating low-frequency global structures. Results and Conclusions: SFD-Mamba2Net consistently outperformed state-of-the-art methods across eight segmentation metrics, and achieved the highest true positive rate and positive predictive value in stenosis detection.

FAD-Net: Frequency-Domain Attention-Guided Diffusion Network for Coronary Artery Segmentation using Invasive Coronary Angiography

Jun 13, 2025

Abstract:Background: Coronary artery disease (CAD) remains one of the leading causes of mortality worldwide. Precise segmentation of coronary arteries from invasive coronary angiography (ICA) is critical for effective clinical decision-making. Objective: This study aims to propose a novel deep learning model based on frequency-domain analysis to enhance the accuracy of coronary artery segmentation and stenosis detection in ICA, thereby offering robust support for the stenosis detection and treatment of CAD. Methods: We propose the Frequency-Domain Attention-Guided Diffusion Network (FAD-Net), which integrates a frequency-domain-based attention mechanism and a cascading diffusion strategy to fully exploit frequency-domain information for improved segmentation accuracy. Specifically, FAD-Net employs a Multi-Level Self-Attention (MLSA) mechanism in the frequency domain, computing the similarity between queries and keys across high- and low-frequency components in ICAs. Furthermore, a Low-Frequency Diffusion Module (LFDM) is incorporated to decompose ICAs into low- and high-frequency components via multi-level wavelet transformation. Subsequently, it refines fine-grained arterial branches and edges by reintegrating high-frequency details via inverse fusion, enabling continuous enhancement of anatomical precision. Results and Conclusions: Extensive experiments demonstrate that FAD-Net achieves a mean Dice coefficient of 0.8717 in coronary artery segmentation, outperforming existing state-of-the-art methods. In addition, it attains a true positive rate of 0.6140 and a positive predictive value of 0.6398 in stenosis detection, underscoring its clinical applicability. These findings suggest that FAD-Net holds significant potential to assist in the accurate diagnosis and treatment planning of CAD.
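
For readers unfamiliar with the metrics reported in this abstract, here is a minimal sketch of their standard definitions. The function names and the toy masks are assumptions made for illustration, and the abstract does not describe the paper's exact evaluation protocol (for example, how predicted and reference stenoses are matched).

```python
def dice_coefficient(pred, target):
    """Dice = 2|P ∩ T| / (|P| + |T|) for two flat binary masks."""
    if len(pred) != len(target):
        raise ValueError("masks must have the same length")
    intersection = sum(1 for p, t in zip(pred, target) if p and t)
    total = sum(1 for p in pred if p) + sum(1 for t in target if t)
    # Convention assumed here: two empty masks count as a perfect match.
    return 1.0 if total == 0 else 2.0 * intersection / total

def tpr_and_ppv(tp, fp, fn):
    """True positive rate (recall) and positive predictive value (precision)."""
    tpr = tp / (tp + fn) if (tp + fn) else 0.0
    ppv = tp / (tp + fp) if (tp + fp) else 0.0
    return tpr, ppv

# Toy masks: 2 overlapping foreground pixels, 3 foreground pixels in each mask.
pred = [1, 1, 0, 0, 1, 0]
target = [1, 0, 0, 0, 1, 1]
dice = dice_coefficient(pred, target)          # 2 * 2 / (3 + 3) = 2/3
tpr, ppv = tpr_and_ppv(tp=3, fp=1, fn=2)       # 3/5 and 3/4
```

Dice rewards overlap between the predicted and reference masks, while TPR and PPV separately capture missed detections and false alarms, which is why the abstract reports both for stenosis detection.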

Towards Multi-Source Retrieval-Augmented Generation via Synergizing Reasoning and Preference-Driven Retrieval

Nov 01, 2024

Abstract:Retrieval-Augmented Generation (RAG) has emerged as a reliable external knowledge augmentation technique to mitigate hallucination issues and parameterized knowledge limitations in Large Language Models (LLMs). Existing Adaptive RAG (ARAG) systems struggle to effectively explore multiple retrieval sources due to their inability to select the right source at the right time. To address this, we propose a multi-source ARAG framework, termed MSPR, which synergizes reasoning and preference-driven retrieval to adaptively decide "when and what to retrieve" and "which retrieval source to use". To better adapt to retrieval sources of differing characteristics, we also employ retrieval action adjustment and answer feedback strategies. These enable our framework to fully explore the high-quality primary source while supplementing it with secondary sources at the right time. Extensive and multi-dimensional experiments conducted on three datasets demonstrate the superiority and effectiveness of MSPR.

Parameter-Efficient Transformer with Hybrid Axial-Attention for Medical Image Segmentation

Nov 17, 2022

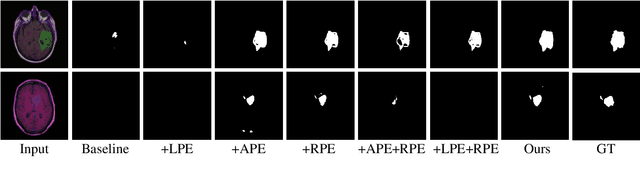

Abstract:Transformers have achieved remarkable success in medical image analysis owing to their flexible self-attention mechanism. However, because they lack an intrinsic inductive bias for modeling visual structural information, they generally require a large-scale pre-training schedule, which limits their clinical application to small-scale medical datasets that are expensive to acquire. To this end, we propose a parameter-efficient transformer that explores intrinsic inductive bias via position information for medical image segmentation. Specifically, we empirically investigate how different position encoding strategies affect the prediction quality of the region of interest (ROI), and observe that ROIs are sensitive to the position encoding strategy. Motivated by this, we present a novel Hybrid Axial-Attention (HAA), a form of position self-attention that can be equipped with spatial pixel-wise information and relative position information as inductive bias. Moreover, we introduce a gating mechanism to ease the burden of the training schedule, resulting in efficient feature selection over small-scale datasets. Experiments on the BraTS and Covid19 datasets demonstrate the superiority of our method over the baseline and previous works. We also visualize the internal workflow to interpret the model and further validate the approach.
