Miroslav Krstic

Nonholonomic Robot Parking by Feedback -- Part I: Modular Strict CLF Designs

Nov 19, 2025

Abstract: It has been known in the robotics literature since about 1995 that, in polar coordinates, the nonholonomic unicycle is asymptotically stabilizable by smooth feedback, even globally. We introduce a modular design framework that selects the forward velocity to decouple the radial coordinate, allowing the steering subsystem to be stabilized independently. Within this structure, we develop families of feedback laws using passivity, backstepping, and integrator forwarding. Each law is accompanied by a strict control Lyapunov function (CLF), including barrier variants that enforce angular constraints. These strict CLFs provide constructive class $\mathcal{KL}$ convergence estimates and enable eigenvalue assignment at the target equilibrium. The framework generalizes and extends prior modular and nonmodular approaches, while preparing the ground for inverse optimal and adaptive redesigns in the sequel paper.

Stabilization of nonlinear systems with unknown delays via delay-adaptive neural operator approximate predictors

Sep 30, 2025

Abstract: This work establishes the first rigorous stability guarantees for approximate predictors in delay-adaptive control of nonlinear systems, addressing a key challenge in practical implementations where exact predictors are unavailable. We analyze two scenarios: (i) when the actuated input is directly measurable, and (ii) when it is estimated online. For the measurable input case, we prove semi-global practical asymptotic stability with an explicit bound proportional to the approximation error $\epsilon$. For the unmeasured input case, we demonstrate local practical asymptotic stability, with the region of attraction explicitly dependent on both the initial delay estimate and the predictor approximation error. To bridge theory and practice, we show that neural operators (a flexible class of neural network-based approximators) can achieve arbitrarily small approximation errors, thus satisfying the conditions of our stability theorems. Numerical experiments on two nonlinear benchmark systems (a biological protein activator/repressor model and a micro-organism growth chemostat model) validate our theoretical results. In particular, our numerical simulations confirm stability under approximate predictors, highlight the strong generalization capabilities of neural operators, and demonstrate a substantial computational speedup of up to 15x compared to a baseline fixed-point method.

Neural Operators for Predictor Feedback Control of Nonlinear Delay Systems

Nov 28, 2024

Abstract: Predictor feedback designs are critical for delay-compensating controllers in nonlinear systems. However, these designs are limited in practical applications because predictors cannot be implemented directly and instead require numerical approximation schemes. These numerical schemes, typically combining finite difference and successive approximations, become computationally prohibitive when the dynamics of the system are expensive to compute. To alleviate this issue, we propose approximating the predictor mapping via a neural operator. In particular, we introduce a new perspective on predictor designs by recasting the predictor formulation as an operator learning problem. We then prove the existence of an arbitrarily accurate neural operator approximation of the predictor operator. Under the approximated predictor, we achieve semiglobal practical stability of the closed-loop nonlinear system. The estimate is semiglobal in a unique sense: one can make the set of initial states as large as desired, but this naturally increases the difficulty of training a neural operator approximation, which appears explicitly in the stability estimate. Furthermore, we emphasize that our result holds not just for neural operators, but for any black-box predictor satisfying a universal approximation error bound. From a computational perspective, the advantage of the neural operator approach is clear, as it requires training only once, offline, and is then deployed at very little computational cost in the feedback controller. We conduct experiments controlling a 5-link robotic manipulator with different state-of-the-art neural operator architectures, demonstrating speedups on the order of $10^2$ compared to traditional predictor approximation schemes.

Adaptive control of reaction-diffusion PDEs via neural operator-approximated gain kernels

Jul 01, 2024

Abstract: Neural operator approximations of the gain kernels in PDE backstepping have emerged as a viable method for implementing controllers in real time. With such an approach, one approximates the gain kernel, which maps the plant coefficient into the solution of a PDE, with a neural operator. It is in adaptive control that the benefit of the neural operator is realized, as the kernel PDE solution needs to be computed online, for every updated estimate of the plant coefficient. We extend the neural operator methodology from adaptive control of a hyperbolic PDE to adaptive control of a benchmark parabolic PDE (a reaction-diffusion equation with a spatially varying and unknown reaction coefficient). We prove global stability and asymptotic regulation of the plant state for a Lyapunov design of parameter adaptation. The key technical challenge of the result is handling the 2D nature of the gain kernels and proving that the target system, with two distinct sources of perturbation terms (the parameter estimation error and the neural approximation error), is Lyapunov stable. To verify our theoretical result, we present simulations achieving calculation speedups of up to 45x relative to traditional finite difference solvers for every timestep in the simulation trajectory.

PDE Control Gym: A Benchmark for Data-Driven Boundary Control of Partial Differential Equations

May 18, 2024

Abstract: Over the last decade, data-driven methods have surged in popularity, emerging as valuable tools for control theory. As such, neural network approximations of control feedback laws, system dynamics, and even Lyapunov functions have attracted growing attention. With the ascent of learning-based control, the need for accurate, fast, and easy-to-use benchmarks has increased. In this work, we present the first learning-based environment for boundary control of PDEs. In our benchmark, we introduce three foundational PDE problems (a 1D transport PDE, a 1D reaction-diffusion PDE, and a 2D Navier-Stokes PDE) whose solvers are bundled in a user-friendly reinforcement learning gym. With this gym, we then present the first set of model-free reinforcement learning algorithms for solving this series of benchmark problems, achieving stability, although at a higher cost compared to model-based PDE backstepping. With the set of benchmark environments and detailed examples, this work significantly lowers the barrier to entry for learning-based PDE control, a topic largely unexplored by the data-driven control community. The entire benchmark is available on GitHub along with detailed documentation, and the presented reinforcement learning models are open-sourced.

Adaptive Neural-Operator Backstepping Control of a Benchmark Hyperbolic PDE

Jan 15, 2024

Abstract: To stabilize PDEs, feedback controllers require gain kernel functions, which are themselves governed by PDEs. Furthermore, these gain-kernel PDEs depend on the PDE plants' functional coefficients. The functional coefficients in PDE plants are often unknown. This requires an adaptive approach to PDE control, i.e., an estimation of the plant coefficients conducted concurrently with control, where a separate PDE for the gain kernel must be solved at each timestep upon the update in the plant coefficient function estimate. Solving a PDE at each timestep is computationally expensive and a barrier to the implementation of real-time adaptive control of PDEs. Recently, results in neural operator (NO) approximations of functional mappings have been introduced into PDE control, for replacing the computation of the gain kernel with a neural network that is trained once, offline, and reused in real time for rapid solution of the PDEs. In this paper, we present the first result on applying NOs in adaptive PDE control, presented for a benchmark 1-D hyperbolic PDE with recirculation. We establish global stabilization via Lyapunov analysis, in the plant and parameter error states, and also present an alternative approach, via passive identifiers, which avoids the strong assumptions on kernel differentiability. We then present numerical simulations demonstrating stability and observe speedups of up to three orders of magnitude, highlighting the real-time efficacy of neural operators in adaptive control. Our code (GitHub) is made publicly available for future researchers.

Moving-Horizon Estimators for Hyperbolic and Parabolic PDEs in 1-D

Jan 04, 2024

Abstract: Observers for PDEs are themselves PDEs. Therefore, producing real-time estimates with such observers is computationally burdensome. For both finite-dimensional and ODE systems, moving-horizon estimators (MHE) are operators whose output is the state estimate, while their inputs are the initial state estimate at the beginning of the horizon as well as the measured output and input signals over the moving time horizon. In this paper we introduce MHEs for PDEs which remove the need for a numerical solution of an observer PDE in real time. We accomplish this using the PDE backstepping method which, for certain classes of both hyperbolic and parabolic PDEs, produces moving-horizon state estimates explicitly. Precisely, to explicitly produce the state estimates, we employ a backstepping transformation of a hard-to-solve observer PDE into a target observer PDE, which is explicitly solvable. The MHEs we propose are not new observer designs but simply the explicit MHE realizations, over a moving horizon of arbitrary length, of the existing backstepping observers. Our PDE MHEs lack the optimality of the MHEs that arose as duals of MPC, but they are given explicitly, even for PDEs. In the paper we provide explicit formulae for MHEs for both hyperbolic and parabolic PDEs, as well as simulation results that illustrate theoretically guaranteed convergence of the MHEs.

Gain Scheduling with a Neural Operator for a Transport PDE with Nonlinear Recirculation

Jan 04, 2024

Abstract: To stabilize PDE models, control laws require space-dependent functional gains mapped by nonlinear operators from the PDE functional coefficients. When a PDE is nonlinear and its "pseudo-coefficient" functions are state-dependent, a gain-scheduling (GS) nonlinear design is the simplest approach to the design of nonlinear feedback. The GS version of PDE backstepping employs gains obtained by solving a PDE at each value of the state. Performing such PDE computations in real time may be prohibitive. The recently introduced neural operators (NO) can be trained to produce the gain functions, rapidly in real time, for each state value, without requiring a PDE solution. In this paper we introduce NOs for GS-PDE backstepping. GS controllers act on the premise that the state change is slow and, as a result, guarantee only local stability, even for ODEs. We establish local stabilization of hyperbolic PDEs with nonlinear recirculation using both a "full-kernel" approach and the "gain-only" approach to gain operator approximation. Numerical simulations illustrate stabilization and demonstrate speedup by three orders of magnitude over traditional PDE gain-scheduling. Code (GitHub) for the numerical implementation is published to enable exploration.

Neural Operators for Delay-Compensating Control of Hyperbolic PIDEs

Jul 21, 2023

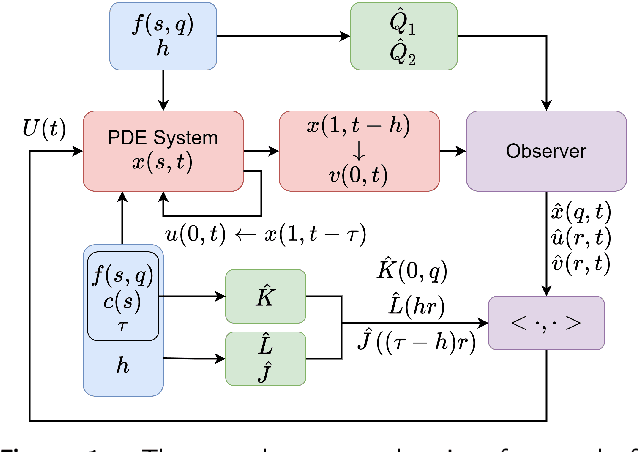

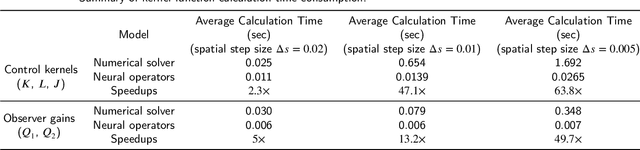

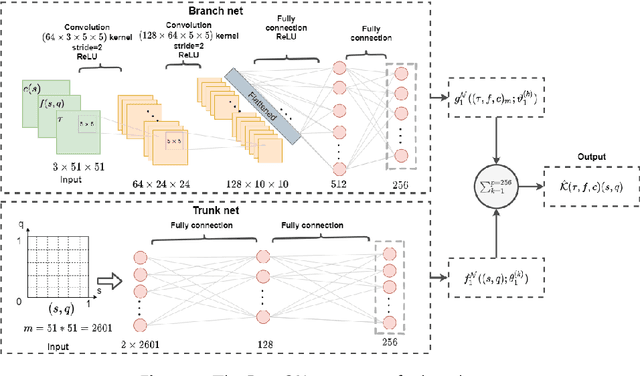

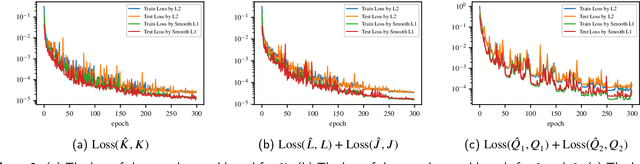

Abstract: The recently introduced DeepONet operator-learning framework for PDE control is extended from the results for basic hyperbolic and parabolic PDEs to an advanced hyperbolic class that involves delays on both the state and the system output or input. The PDE backstepping design produces gain functions that are outputs of a nonlinear operator, mapping functions on a spatial domain into functions on a spatial domain, and where this gain-generating operator's inputs are the PDE's coefficients. The operator is approximated with a DeepONet neural network to a degree of accuracy that is provably arbitrarily tight. Once we produce this approximation-theoretic result in infinite dimension, with it we establish stability in closed loop under feedback that employs approximate gains. In addition to supplying such results under full-state feedback, we also develop DeepONet-approximated observers and output-feedback laws and prove their own stabilizing properties under neural operator approximations. With numerical simulations we illustrate the theoretical results and quantify the numerical effort savings, which are of two orders of magnitude, thanks to replacing the numerical PDE solving with the DeepONet.

Newton Nonholonomic Source Seeking for Distance-Dependent Maps

Jul 21, 2023

Abstract: The topics of source seeking and Newton-based extremum seeking have flourished independently but have never been combined. We present the first Newton-based source seeking algorithm. The algorithm employs forward velocity tuning, as in the very first source seeker for the unicycle, and incorporates an additional Riccati filter for estimating the Hessian inverse and feeding it into the demodulation signal. Using second-order Lie bracket averaging, we prove convergence to the source at a rate that is independent of the unknown Hessian of the map. The result is semiglobal and practical, for a map that is quadratic in the distance from the source. The paper presents theory and simulations, which show the advantage of Newton-based over gradient-based source seeking.
