Maziyar Khadivi

FORLAPS: An Innovative Data-Driven Reinforcement Learning Approach for Prescriptive Process Monitoring

Jan 17, 2025

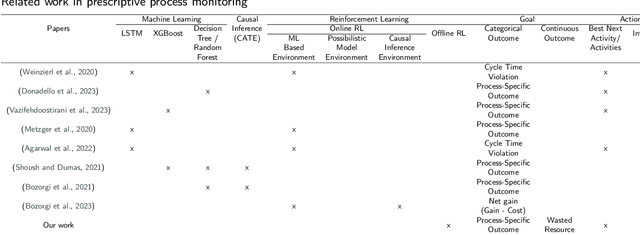

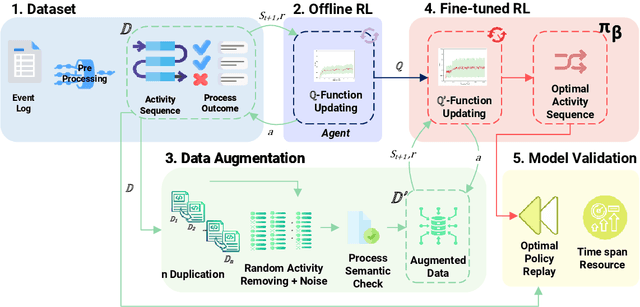

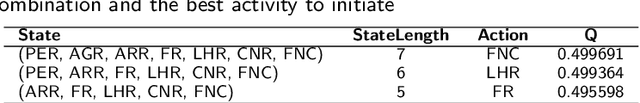

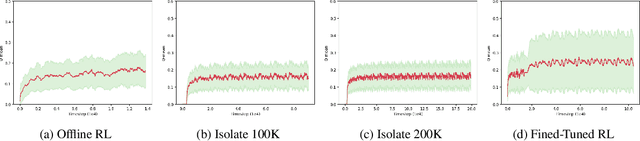

Abstract: We present a novel 5-step framework called Fine-Tuned Offline Reinforcement Learning Augmented Process Sequence Optimization (FORLAPS), which aims to identify optimal execution paths in business processes using reinforcement learning. We applied this approach to real-life event logs from our case study, an energy regulator in Canada, and to other real-life event logs, demonstrating the feasibility of the proposed method. Additionally, to compare FORLAPS with existing models (Permutation Feature Importance and a multi-task LSTM-based model), we conducted experiments to evaluate its effectiveness in terms of resource savings and process time span reduction. The experimental results on a real-life event log show that FORLAPS achieves 31% savings in resource time spent and a 23% reduction in process time span. Using an innovative data augmentation technique, we propose a fine-tuned reinforcement learning approach that automatically fine-tunes the model by selectively increasing the average estimated Q-value in the sampled batches. The results show a 44% performance improvement compared to the pre-trained model. This study also introduces an innovative evaluation model, benchmarking its performance against earlier works on nine publicly available datasets. Robustness is assessed through experiments that use the Damerau-Levenshtein distance as the primary metric. In addition, we discuss the suitability of the datasets for evaluating the performance of different models, taking into account their inherent properties. The proposed model, FORLAPS, outperformed existing state-of-the-art approaches in suggesting optimal policies or predicting the best next activities within a process trace.
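To illustrate the metric named in the abstract, here is a minimal sketch of how a Damerau-Levenshtein distance between two process traces could be computed. It is not the authors' evaluation code. It assumes the restricted "optimal string alignment" variant (the abstract does not say which variant is used), treats each trace as a list of activity labels, and the function names `osa_distance` and `trace_similarity` are hypothetical.

```python
from typing import Sequence


def osa_distance(a: Sequence[str], b: Sequence[str]) -> int:
    """Restricted Damerau-Levenshtein (optimal string alignment) distance.

    Counts insertions, deletions, substitutions, and transpositions of
    two adjacent items. Assumption: this variant is used for illustration.
    """
    rows, cols = len(a) + 1, len(b) + 1
    d = [[0] * cols for _ in range(rows)]
    for i in range(rows):
        d[i][0] = i  # delete all i items of a
    for j in range(cols):
        d[0][j] = j  # insert all j items of b
    for i in range(1, rows):
        for j in range(1, cols):
            cost = 0 if a[i - 1] == b[j - 1] else 1
            d[i][j] = min(
                d[i - 1][j] + 1,         # deletion
                d[i][j - 1] + 1,         # insertion
                d[i - 1][j - 1] + cost,  # substitution or match
            )
            # Transposition of two adjacent activities.
            if i > 1 and j > 1 and a[i - 1] == b[j - 2] and a[i - 2] == b[j - 1]:
                d[i][j] = min(d[i][j], d[i - 2][j - 2] + 1)
    return d[rows - 1][cols - 1]


def trace_similarity(a: Sequence[str], b: Sequence[str]) -> float:
    """Distance normalized to [0, 1]; 1.0 means identical traces."""
    longest = max(len(a), len(b))
    if longest == 0:
        return 1.0
    return 1.0 - osa_distance(a, b) / longest


# Hypothetical traces: the suggested path swaps two adjacent activities.
observed = ["Receive", "Review", "Approve", "Close"]
suggested = ["Receive", "Approve", "Review", "Close"]
print(osa_distance(observed, suggested))      # one transposition
print(trace_similarity(observed, suggested))
```

Counting an adjacent swap as a single edit is what separates this metric from plain Levenshtein distance, which would charge two substitutions for the same reordering of activities.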

A mathematical model for simultaneous personnel shift planning and unrelated parallel machine scheduling

Feb 24, 2024

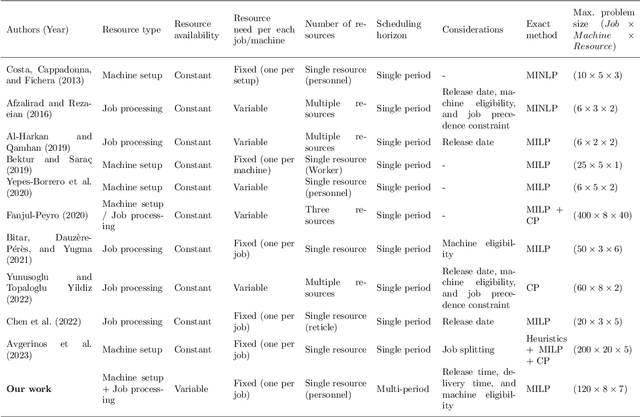

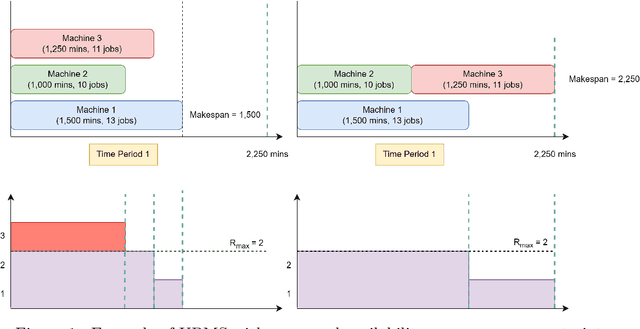

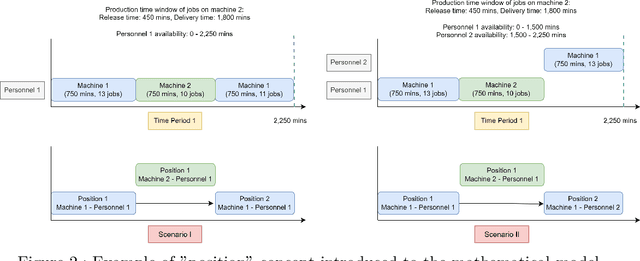

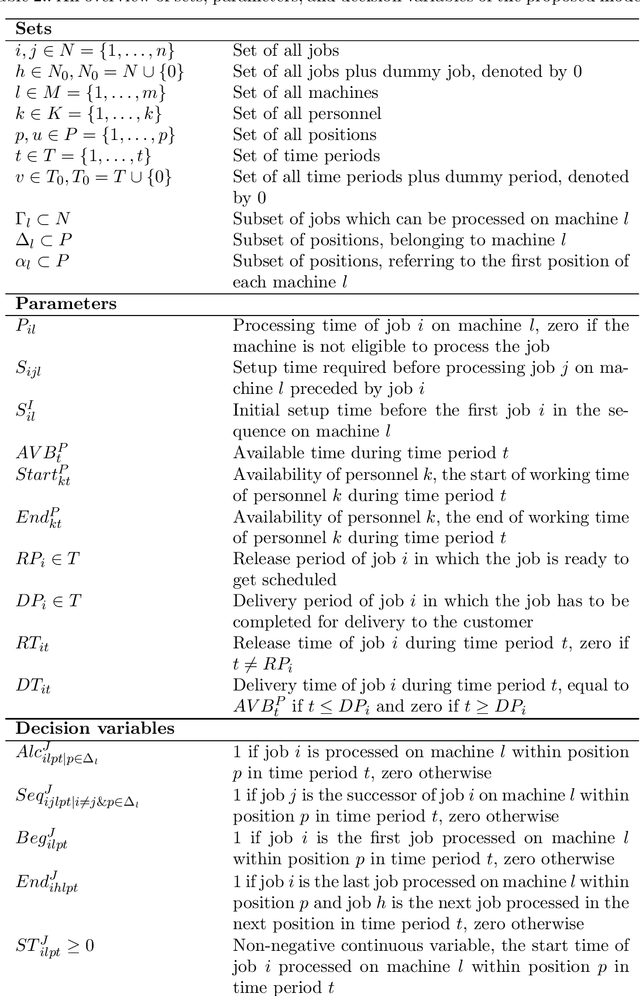

Abstract: This paper addresses a production scheduling problem derived from an industrial use case, focusing on unrelated parallel machine scheduling with a personnel availability constraint. The proposed model optimizes the production plan over a multi-period scheduling horizon, accommodating variations in personnel shift hours within each time period. It assumes that personnel are shared among machines, with one worker required per machine for setup and supervision during job processing. Fewer personnel are available than machines, which limits the number of machines that can operate in parallel. The model aims to minimize the total production time, considering machine-dependent processing times and sequence-dependent setup times. It also handles practical scenarios such as machine eligibility constraints and production time windows. A Mixed Integer Linear Programming (MILP) model is introduced to formulate the problem, taking into account both continuous and discrete variables. A two-step solution approach improves computational speed by first maximizing the number of accepted jobs and then minimizing production time. Validation with synthetic problem instances and a real industrial case study of a food processing plant demonstrates the performance of the model and its usefulness in personnel shift planning. The findings offer valuable insights for practical managerial decision-making in the context of production scheduling.
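The quantities mentioned in the abstract can be made concrete with a small evaluator for a fixed schedule. This is only a sketch under simplifying assumptions, not the paper's MILP: it covers a single period, takes "total production time" to be the sum of processing and setup times over all machines, and checks the personnel limit as a cap on the number of active machines. All names and numbers (`machine_time`, `total_production_time`, `respects_personnel`, the data) are hypothetical.

```python
from typing import Dict, List, Tuple

Proc = Dict[str, Dict[str, float]]                # proc[machine][job]
Setup = Dict[str, Dict[Tuple[str, str], float]]   # setup[machine][(prev, next)]
Schedule = Dict[str, List[str]]                   # ordered jobs per machine


def machine_time(sequence: List[str], machine: str, proc: Proc, setup: Setup) -> float:
    """Processing plus sequence-dependent setup time on one machine."""
    total = 0.0
    prev = None
    for job in sequence:
        if prev is not None:
            total += setup[machine][(prev, job)]  # changeover prev -> job
        total += proc[machine][job]               # machine-dependent duration
        prev = job
    return total


def total_production_time(schedule: Schedule, proc: Proc, setup: Setup) -> float:
    """Assumed objective: sum of machine times over all machines."""
    return sum(machine_time(seq, m, proc, setup) for m, seq in schedule.items())


def respects_personnel(schedule: Schedule, personnel: int) -> bool:
    """One worker per active machine, so active machines <= personnel."""
    active = sum(1 for seq in schedule.values() if seq)
    return active <= personnel


# Hypothetical data: two machines, two jobs.
proc = {"M1": {"J1": 3.0, "J2": 5.0}, "M2": {"J1": 4.0, "J2": 2.0}}
setup = {
    "M1": {("J1", "J2"): 1.0, ("J2", "J1"): 2.0},
    "M2": {("J1", "J2"): 1.5, ("J2", "J1"): 0.5},
}
serial = {"M1": ["J1", "J2"], "M2": []}
parallel = {"M1": ["J1"], "M2": ["J2"]}

for name, plan in [("serial", serial), ("parallel", parallel)]:
    print(name, total_production_time(plan, proc, setup), respects_personnel(plan, 1))
```

With a single worker available, the parallel plan has the lower total time but is infeasible, which is the trade-off the personnel constraint introduces.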

Deep reinforcement learning for machine scheduling: Methodology, the state-of-the-art, and future directions

Oct 04, 2023

Abstract: Machine scheduling aims to optimize job assignments to machines while adhering to manufacturing rules and job specifications. This optimization leads to reduced operational costs, improved customer demand fulfillment, and enhanced production efficiency. However, machine scheduling remains a challenging combinatorial problem due to its NP-hard nature. Deep Reinforcement Learning (DRL), a key component of artificial general intelligence, has shown promise in various domains like gaming and robotics. Researchers have explored applying DRL to machine scheduling problems since 1995. This paper offers a comprehensive review and comparison of DRL-based approaches, highlighting their methodology, applications, advantages, and limitations. It categorizes these approaches based on computational components: conventional neural networks, encoder-decoder architectures, graph neural networks, and metaheuristic algorithms. Our review concludes that DRL-based methods outperform exact solvers, heuristics, and tabular reinforcement learning algorithms in terms of computation speed and generating near-global optimal solutions. These DRL-based approaches have been successfully applied to static and dynamic scheduling across diverse machine environments and job characteristics. However, DRL-based schedulers face limitations in handling complex operational constraints, configurable multi-objective optimization, generalization, scalability, interpretability, and robustness. Addressing these challenges will be a crucial focus for future research in this field. This paper serves as a valuable resource for researchers to assess the current state of DRL-based machine scheduling and identify research gaps. It also aids experts and practitioners in selecting the appropriate DRL approach for production scheduling.